GLM-5 Turbo: The New AI Model Built Exclusively for AI Agents

The AI agent revolution just got its first purpose-built engine. While the major labs have been retrofitting their general-purpose models to handle agent tasks, Chinese AI powerhouse Zhipu AI (now operating globally as Z.ai) has taken a different route: it shipped a model designed exclusively for AI agents, with agent requirements built into training rather than bolted on afterward. It is called GLM-5 Turbo, it launched on March 16, 2026, and it is already shaking up the industry.

We have been tracking every major model release this year, from GPT-5.4 to Claude Opus 4.5 to Gemini 2.5 Pro. But GLM-5 Turbo stands apart because it is not trying to be the best at everything. It is trying to be the best at one thing: powering autonomous AI agents in the OpenClaw ecosystem. And based on what we have seen so far, that bet is paying off.

Table of Contents

- What Is GLM-5 Turbo?

- What Is OpenClaw and Why Does It Matter?

- How GLM-5 Turbo Is Optimized for Agent Workflows

- Technical Specifications

- Pricing Breakdown

- Benchmarks: GLM-5 Turbo vs GPT-5.4, Claude, and Gemini

- What Makes It Different from General-Purpose Models

- How to Use GLM-5 Turbo

- The Chinese AI Competition Angle

- Should You Use GLM-5 Turbo?

- FAQ

What Is GLM-5 Turbo?

GLM-5 Turbo is a specialized large language model developed by Zhipu AI (Z.ai), released on March 16, 2026. It is not positioned as a general-purpose chatbot or reasoning engine. Instead, it was trained to excel at one specific use case: powering AI agents in real-world, multi-step, tool-using workflows.

The model is a proprietary variant of Z.ai’s open-source GLM-5 flagship model (which features 744 billion total parameters with 40 billion activated via a Mixture of Experts architecture). While GLM-5 itself was released under an MIT license and is fully open-source, GLM-5 Turbo is closed-source and available only through Z.ai’s API and third-party providers like OpenRouter.

Think of it this way: GLM-5 is the Swiss Army knife. GLM-5 Turbo is the scalpel, precision-engineered for the operating room of autonomous AI agents.

What Is OpenClaw and Why Does It Matter?

To understand GLM-5 Turbo, you need to understand OpenClaw. OpenClaw is the rapidly growing ecosystem of AI agents that can autonomously perform complex tasks by using tools, browsing the web, writing and executing code, managing files, and interacting with external APIs. If you have used tools like Claude Code, Cursor, or Devin, you have experienced a version of this.

OpenClaw scenarios involve:

- Multi-step task execution where the AI breaks down a complex request into dozens of sub-tasks

- Tool invocation where the agent calls external tools, APIs, and services

- Persistent execution where tasks run over extended periods, sometimes hours or days

- Collaborative multi-agent workflows where multiple AI agents work together on different parts of a problem

The market for OpenClaw-style agents is exploding. Every major tech company is building agent infrastructure, and the models powering those agents need to be reliable across long execution chains, not just good at answering a single question.

How GLM-5 Turbo Is Optimized for Agent Workflows

This is where GLM-5 Turbo gets genuinely interesting. Most AI labs take their general-purpose model, fine-tune it a bit for tool use, and call it agent-ready. Z.ai took the opposite approach. They aligned GLM-5 Turbo with agent requirements during the training phase itself, not as an afterthought.

Here is what that means in practice:

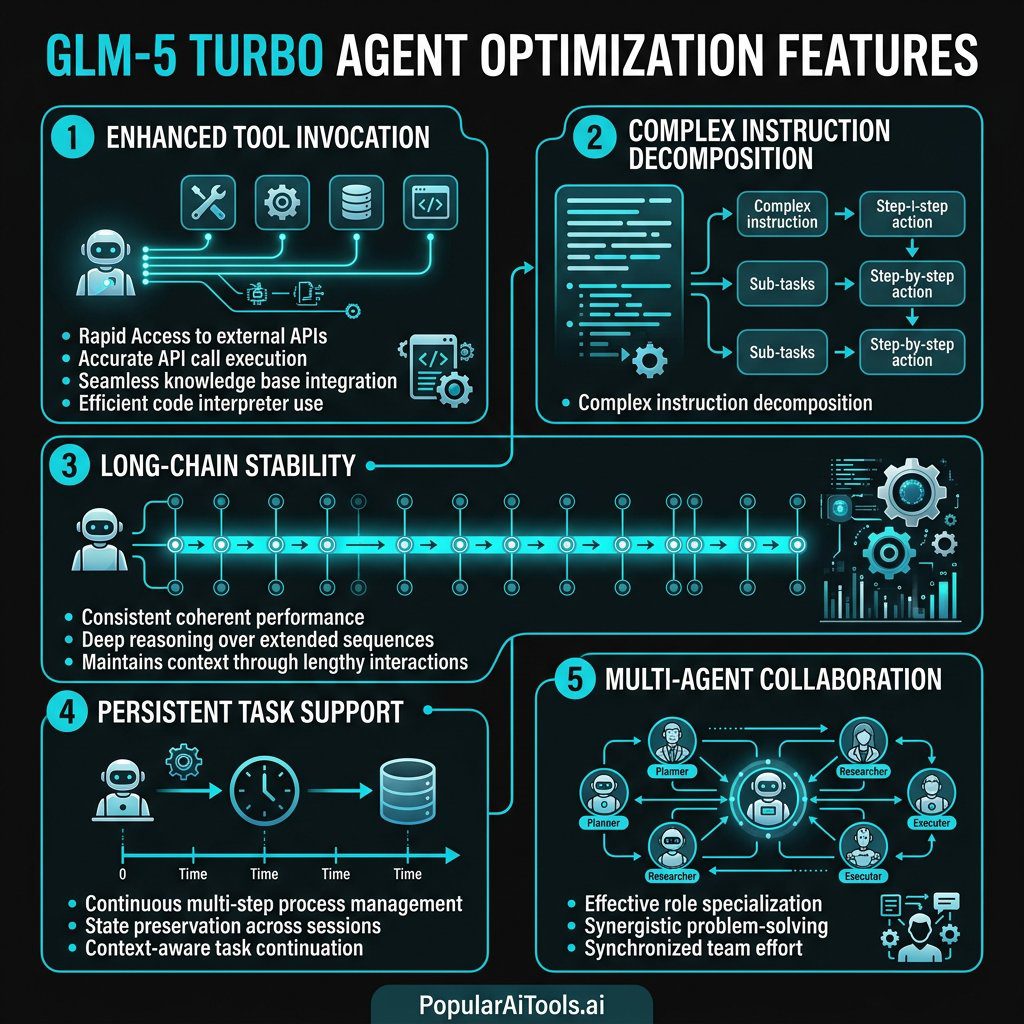

Enhanced Tool Invocation

GLM-5 Turbo has strengthened its ability to invoke external tools and various skills with greater stability and reliability. When an agent needs to call an API, execute code, search the web, or interact with a database, the model produces more accurate tool calls with fewer hallucinated parameters. This matters enormously when you are running automated pipelines where a single malformed API call can break an entire workflow.
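One way to guard a pipeline against the malformed calls described above is to validate every tool call before executing it, whichever model produced it. The following is a minimal sketch, not part of any Z.ai API: the `TOOL_SPECS` registry, the `search_web` tool, and its argument names are all hypothetical.

```python
import json

# Hypothetical registry: tool name -> expected argument names and types.
TOOL_SPECS = {
    "search_web": {"query": str, "max_results": int},
}


def validate_tool_call(name, raw_arguments):
    """Check a model-emitted tool call; return (ok, parsed_args_or_error_message)."""
    spec = TOOL_SPECS.get(name)
    if spec is None:
        return False, f"unknown tool: {name}"
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        return False, f"arguments are not valid JSON: {exc.msg}"
    if not isinstance(args, dict):
        return False, "arguments must be a JSON object"
    missing = [key for key in spec if key not in args]
    if missing:
        return False, f"missing arguments: {missing}"
    unexpected = [key for key in args if key not in spec]
    if unexpected:
        return False, f"unexpected arguments: {unexpected}"
    for key, expected_type in spec.items():
        if not isinstance(args[key], expected_type):
            return False, f"argument {key!r} should be {expected_type.__name__}"
    return True, args
```

When validation fails, the error message can be sent back to the model as the tool result so it can correct the call instead of breaking the workflow.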

Complex Instruction Decomposition

The model excels at understanding multi-layered instructions and breaking them down into logical sub-steps. Give it a complex task like “research competitors in the CRM space, compile pricing data into a spreadsheet, and draft a comparison blog post,” and it will decompose that into a clean execution plan without losing track of the overall objective.

Long-Chain Execution Stability

This is perhaps the most critical improvement. General-purpose models tend to drift, hallucinate, or lose context during extended agent sessions. GLM-5 Turbo was specifically trained for stability across long execution chains, meaning it maintains coherence and accuracy even after dozens of sequential tool calls and sub-tasks.

Scheduled and Persistent Task Support

The model supports scheduled and persistent execution patterns, making it suitable for agents that need to run recurring tasks, monitor conditions over time, or resume interrupted workflows.
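Resuming an interrupted workflow is mostly an orchestration concern that sits around the model. As a rough illustration, here is a sketch of a checkpointed step runner. The function names, the checkpoint file layout, and the idea of modeling steps as plain callables are assumptions made for this example, not something Z.ai documents.

```python
import json
import os
from pathlib import Path


def load_checkpoint(path):
    """Load saved progress, or start fresh if no checkpoint exists."""
    path = Path(path)
    if path.exists():
        return json.loads(path.read_text())
    return {"next_step": 0, "results": []}


def save_checkpoint(path, state):
    """Write to a temp file, then rename, so a crash never leaves a half-written file."""
    path = Path(path)
    tmp = path.with_name(path.name + ".tmp")
    tmp.write_text(json.dumps(state))
    os.replace(tmp, path)


def run_workflow(steps, checkpoint_path):
    """Run steps in order, skipping any that completed in an earlier run."""
    state = load_checkpoint(checkpoint_path)
    for index in range(state["next_step"], len(steps)):
        state["results"].append(steps[index]())  # results must be JSON-serializable
        state["next_step"] = index + 1
        save_checkpoint(checkpoint_path, state)
    return state["results"]
```

If a step raises, the checkpoint still records everything finished before it, so calling `run_workflow` again with the same path picks up at the failed step.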

Multi-Agent Collaboration

GLM-5 Turbo supports collaboration among multiple agents, allowing complex workflows to be distributed across specialized sub-agents that communicate and coordinate through the model.

Technical Specifications

| Specification | Details |
|---|---|
| Model Name | GLM-5 Turbo |
| Developer | Zhipu AI (Z.ai) |
| Release Date | March 16, 2026 |
| Base Architecture | Mixture of Experts (MoE) |
| Base Model Parameters | 744B total / 40B activated |
| Context Window | 200,000 tokens |
| Max Output Tokens | 128,000 tokens |
| Input Type | Text |
| Output Type | Text |
| Attention Mechanism | DeepSeek Sparse Attention |
| Reasoning Support | Yes (reasoning parameter available) |
| Function Calling | Yes (native support) |
| Structured Output | Yes |
| Context Caching | Yes |
| Streaming | Yes (real-time) |
| License | Proprietary (closed-source) |

The 200K context window and 128K output token limit are particularly important for agent workflows. Agents often need to process large amounts of context (code repositories, research documents, conversation histories) and produce lengthy outputs (full code files, detailed reports, multi-step plans).

Pricing Breakdown

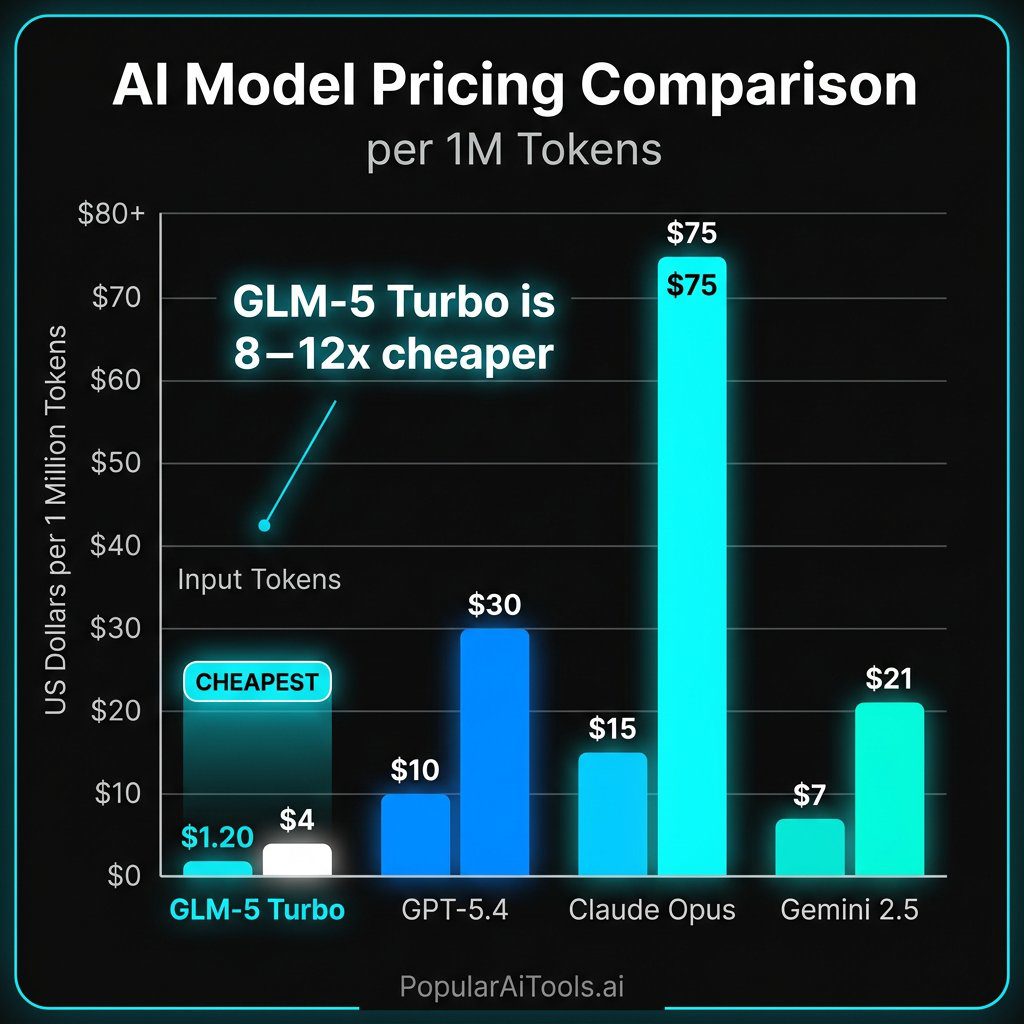

One of the most compelling aspects of GLM-5 Turbo is its pricing. For a model optimized for agent tasks that can involve thousands of API calls per session, cost matters.

| Model | Input (USD per 1M tokens) | Output (USD per 1M tokens) | Context Window |
|---|---|---|---|
| GLM-5 Turbo | $1.20 | $4.00 | 200K |
| GLM-5 (base) | $1.00 | $3.20 | 200K |
| GPT-5.4 | $10.00 | $30.00 | 200K |
| Claude Opus 4.5 | $15.00 | $75.00 | 200K |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 200K |
| Gemini 2.5 Pro | $7.00 | $21.00 | 1M |

At $1.20 per million input tokens and $4.00 per million output tokens, GLM-5 Turbo is roughly 8x cheaper than GPT-5.4 on input and 7.5x cheaper on output. Compared to Claude Opus 4.5, the savings are even more dramatic: approximately 12.5x cheaper on input and 18.75x cheaper on output.

For agent-heavy workflows where a single task might consume millions of tokens across dozens of tool calls, this pricing difference is not incremental. It is transformational. A complex agent session that costs $50 with GPT-5.4 might cost $6 with GLM-5 Turbo.
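To make the arithmetic concrete, the sketch below computes the cost of one session from the per-million-token prices in the table above. The token counts (3.5M input, 0.5M output) are a hypothetical session chosen so that GPT-5.4 lands at the $50 figure. Real sessions will have a different input/output mix, and context caching discounts are ignored.

```python
# USD per 1M tokens (input, output), copied from the pricing table above.
PRICES = {
    "GLM-5 Turbo": (1.20, 4.00),
    "GPT-5.4": (10.00, 30.00),
    "Claude Opus 4.5": (15.00, 75.00),
}


def session_cost(model, input_tokens, output_tokens):
    """Return the USD cost of a session for the given token counts."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical agent session: 3.5M input tokens, 0.5M output tokens.
for model in PRICES:
    print(f"{model}: ${session_cost(model, 3_500_000, 500_000):.2f}")
# GLM-5 Turbo: $6.20
# GPT-5.4: $50.00
# Claude Opus 4.5: $90.00
```

Because output tokens carry the smaller discount relative to GPT-5.4 (7.5x versus about 8.3x on input), output-heavy sessions save slightly less than input-heavy ones.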

Benchmarks: GLM-5 Turbo vs GPT-5.4, Claude, and Gemini

Let us be clear about what GLM-5 Turbo is and is not. It is not designed to top the general-purpose leaderboards. It is designed to dominate agent-specific tasks.

General Performance Context

The base GLM-5 model debuted at number five in overall AI model rankings, displacing GPT-5.2 from the top five on the strength of its open-source credentials and frontier-level performance. GLM-5 approaches Claude Opus 4.5 in code-logic density and systems-engineering capability.

On SWE-bench, a key coding benchmark, Claude Opus 4.5 leads at 76.8%, with Gemini 3.1 Pro, Claude Sonnet 4.6, and GLM-5 joining the top performers.

Agent-Specific Performance (ZClawBench)

Z.ai developed ZClawBench, a proprietary benchmark specifically tailored to end-to-end agent tasks in the OpenClaw ecosystem. ZClawBench covers:

- Environment setup (configuring development environments, installing dependencies)

- Software development (writing, debugging, and deploying code)

- Information retrieval (searching, gathering, and synthesizing data)

- Data analysis (processing datasets, generating insights)

- Content creation (writing, editing, formatting)

According to Z.ai’s published results, GLM-5 Turbo delivers significant improvements over GLM-5 in OpenClaw scenarios and outperforms several leading models across multiple key agent task categories. A radar chart released by Z.ai shows GLM-5 Turbo as especially competitive in information search and gathering, office and daily tasks, data analysis, development and operations, and automation.

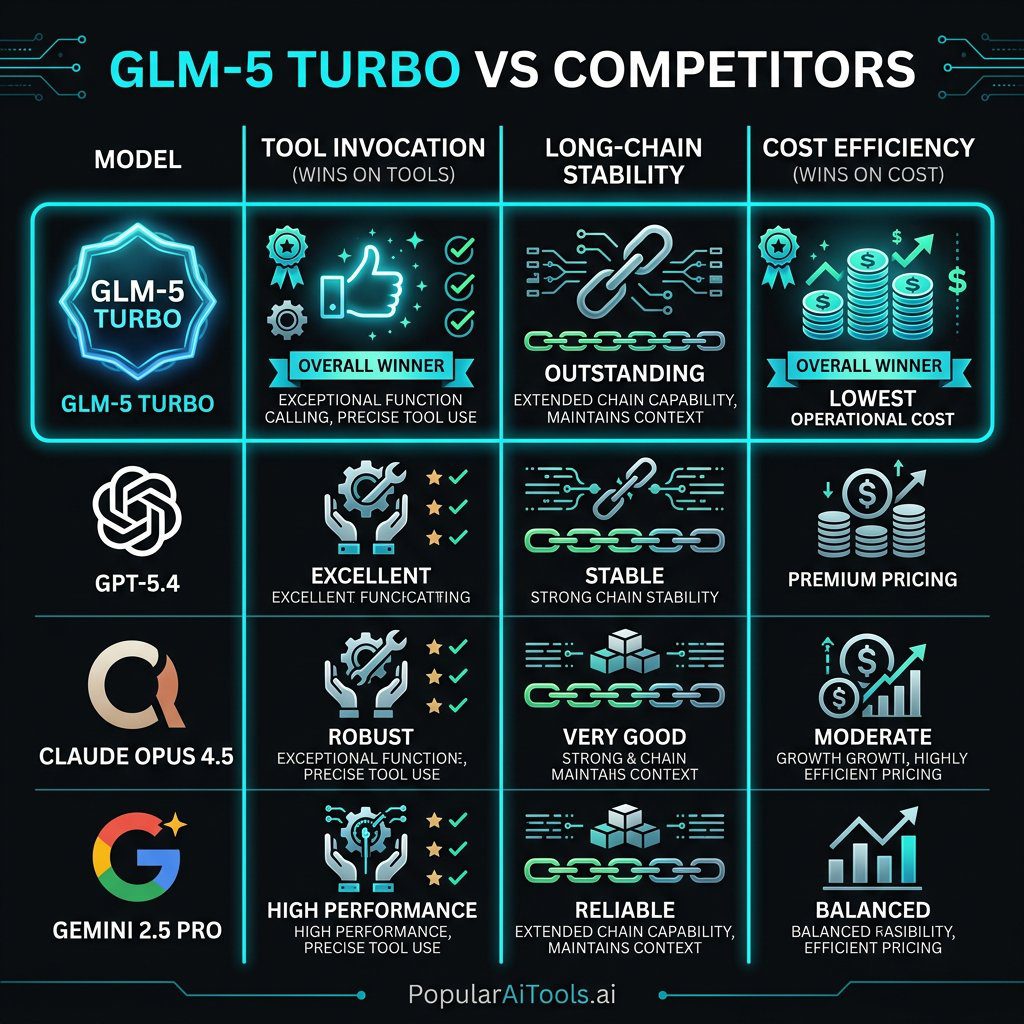

| Capability | GLM-5 Turbo | GPT-5.4 | Claude Opus 4.5 | Gemini 2.5 Pro |
|---|---|---|---|---|
| Tool Invocation Accuracy | Excellent | Very Good | Very Good | Good |
| Long-Chain Stability | Excellent | Good | Very Good | Good |
| Instruction Decomposition | Excellent | Very Good | Excellent | Very Good |
| Multi-Agent Coordination | Excellent | Good | Good | Good |
| General Reasoning | Very Good | Excellent | Excellent | Very Good |
| Creative Writing | Good | Very Good | Excellent | Very Good |
| Cost Efficiency | Excellent | Poor | Poor | Good |

It is worth noting that ZClawBench results are manufacturer-reported. The benchmark dataset and full evaluation trajectories have been made publicly available for community validation, which is a positive sign of transparency.

What Makes It Different from General-Purpose Models

The fundamental difference between GLM-5 Turbo and models like GPT-5.4 or Claude Opus 4.5 is one of design philosophy. Here is a breakdown:

General-purpose models are trained to be excellent at everything: conversation, reasoning, creative writing, coding, analysis, and yes, agent tasks. They are optimized for breadth. When you use them for agent workflows, they work, but they were not specifically designed for the failure modes that agent tasks create.

GLM-5 Turbo sacrifices some breadth for extreme depth in agent capabilities. It was aligned during training with the specific patterns that agent workflows demand:

- Reliable tool calling over hundreds of sequential invocations

- Maintaining coherent state across hours-long execution chains

- Graceful error recovery when tools fail or return unexpected results

- Efficient token usage during repetitive agent operations

- Consistent formatting of structured outputs that downstream tools can parse
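Error recovery is not only the model's job. The agent loop around it usually retries transient tool failures and reports persistent ones back to the model. The sketch below shows that pattern with exponential backoff. The function name, the result dictionary shape, and the retry defaults are illustrative assumptions, not something GLM-5 Turbo or its API prescribes.

```python
import time


def call_tool_with_recovery(tool, args, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Run a tool with retries; return a dict the agent loop can pass back to the model."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return {"ok": True, "result": tool(**args), "attempts": attempt}
        except Exception as exc:  # broad on purpose: any tool failure is reported, not raised
            last_error = f"{type(exc).__name__}: {exc}"
            if attempt < max_attempts:
                sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
    return {"ok": False, "error": last_error, "attempts": max_attempts}
```

Returning the error as data, rather than raising, lets the model see what went wrong and choose a different tool or different arguments on the next turn.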

Z.ai CEO Zhang Peng has been explicit about this positioning: GLM-5 Turbo is a specialized solution for the growing AI agent market, not a universal competitor to GPT-5.4 or Claude. The strategy is less breadth, more depth in one of the fastest-growing application fields in AI.

How to Use GLM-5 Turbo

Getting started with GLM-5 Turbo is straightforward. Here are your main options:

Option 1: Z.ai API Directly

Access the model through Z.ai’s official API at docs.z.ai. You will need to create an account and obtain an API key. The API follows standard chat completion patterns and supports function calling, streaming, and reasoning modes natively.

Option 2: OpenRouter

GLM-5 Turbo is available on OpenRouter, which provides a unified API for accessing multiple models. This is a great option if you already use OpenRouter for other models or want to easily switch between providers.

A basic API call looks like this:

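```python
import requests

response = requests.post(
    "https://openrouter.ai/api/v1/chat/completions",
    headers={
        "Authorization": "Bearer YOUR_API_KEY",
        "Content-Type": "application/json",
    },
    json={
        "model": "z-ai/glm-5-turbo",
        "messages": [
            {"role": "system", "content": "You are an autonomous agent..."},
            {"role": "user", "content": "Research and summarize..."},
        ],
        # Placeholder: replace [...] with your tool definitions before running.
        "tools": [...],
    },
    timeout=60,
)

# Fail loudly on HTTP errors, then show the assistant message (text and/or tool calls).
response.raise_for_status()
print(response.json()["choices"][0]["message"])
```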

Option 3: Third-Party Integrations

GLM-5 Turbo is already being integrated into popular platforms. It is available in Puter.js for browser-based AI applications, and on NVIDIA NIM for enterprise deployments.

Enabling Reasoning Mode

To enable the model's chain-of-thought reasoning, include the `reasoning` parameter in your request. The response will contain a `reasoning_details` array showing the model's internal reasoning before producing the final answer. When continuing multi-turn conversations, preserve the complete `reasoning_details` when passing messages back so the model can continue reasoning from where it left off.
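In practice, preserving the reasoning means copying the field into the assistant turn you append to the conversation history. The helper below is a minimal sketch of that step. It assumes the assistant message arrives as a dictionary in the usual chat-completions shape. The helper name and the contents of the example `reasoning_details` entry are made up for illustration, so check the provider's documentation for the real structure.

```python
def append_assistant_turn(messages, assistant_message):
    """Return a new history with the assistant turn added, reasoning_details intact."""
    turn = {"role": "assistant", "content": assistant_message.get("content")}
    # Copy optional fields verbatim; do not trim or rewrite reasoning_details.
    for key in ("tool_calls", "reasoning_details"):
        if key in assistant_message:
            turn[key] = assistant_message[key]
    return messages + [turn]


history = [{"role": "user", "content": "Plan the research task."}]
reply = {  # stand-in for the assistant message taken from an API response
    "role": "assistant",
    "content": "First, I will list the competitors.",
    "reasoning_details": [{"type": "example", "text": "illustrative entry only"}],
}
history = append_assistant_turn(history, reply)
history.append({"role": "user", "content": "Continue with step two."})
```

The updated `history` is what you would send as `messages` in the next request.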

The Chinese AI Competition Angle

GLM-5 Turbo is not just a product launch. It is a statement about where Chinese AI is headed.

Zhipu AI, founded in 2019 as a Tsinghua University spinoff in Beijing, has rapidly become one of China’s most prominent AI companies. The company listed on the Hong Kong Stock Exchange on January 8, 2026, and is currently valued at approximately $34.5 billion, making it China’s largest independent large language model developer.

The launch of GLM-5 Turbo pushed Zhipu’s Hong Kong-listed stock up as much as 16% to around HK$615 on the day of announcement. The market clearly sees this strategic pivot as significant.

What makes this interesting from a competition standpoint:

China is not just following anymore. While US labs (OpenAI, Anthropic, Google) continue to push general-purpose model capabilities, Chinese labs are increasingly finding strategic niches where they can lead. Z.ai’s bet on agent-first architecture is a prime example. Rather than trying to beat GPT-5.4 at everything, they are trying to own the agent model category entirely.

The open-source angle is strategic. GLM-5 (the base model) is MIT-licensed and open-source, which has driven massive community adoption. GLM-5 Turbo is closed-source, creating a clear commercial tier. Z.ai has said that capabilities and findings from GLM-5 Turbo will be folded into future open-source releases, keeping the community invested.

Pricing is a weapon. At $1.20/$4.00 per million tokens, GLM-5 Turbo undercuts every Western frontier model by a wide margin. For agent workflows that can burn through millions of tokens per session, this pricing makes previously impractical automation economically viable.

The broader context here is that the Chinese AI ecosystem, including DeepSeek, Alibaba’s Qwen, Baidu’s ERNIE, and now Z.ai, is increasingly competitive with Western counterparts. The days of assuming a multi-year gap between US and Chinese AI capabilities are over.

Should You Use GLM-5 Turbo?

Here is our honest assessment:

Use GLM-5 Turbo if:

- You are building AI agent systems that require reliable tool use across long execution chains

- Cost efficiency is a priority and you need to run high-volume agent workloads

- You are working within the OpenClaw ecosystem

- You need a model that excels at instruction decomposition and multi-step task planning

- You want 200K context with 128K output for processing large codebases or document sets

Stick with general-purpose models if:

- You need top-tier creative writing or nuanced conversational ability

- Your use case is primarily single-turn Q&A or analysis

- You need multimodal capabilities (image, audio, video)

- You require the absolute highest general reasoning scores regardless of cost

GLM-5 Turbo represents a genuinely new approach in the AI model landscape. By going deep instead of broad, Z.ai has created something that fills a real gap in the market. As AI agents become the primary way we interact with language models, purpose-built agent models like GLM-5 Turbo may well become the standard, not the exception.

FAQ

What is GLM-5 Turbo and who made it?

GLM-5 Turbo is a specialized large language model created by Zhipu AI, a Chinese AI company operating globally under the brand Z.ai. Released on March 16, 2026, it is a proprietary variant of the open-source GLM-5 model, designed to power AI agents in OpenClaw workflows. Unlike general-purpose models, it was optimized during training for tool invocation, long-chain execution stability, and multi-agent collaboration.

How much does GLM-5 Turbo cost compared to GPT-5.4 and Claude?

GLM-5 Turbo costs $1.20 per million input tokens and $4.00 per million output tokens. This makes it roughly 8x cheaper than GPT-5.4 ($10/$30 per million tokens) and 12.5x cheaper than Claude Opus 4.5 ($15/$75 per million tokens) on input. For agent-heavy workflows that consume millions of tokens, this pricing difference can reduce the cost of a session from $50 with GPT-5.4 to roughly $6 to $7, depending on the mix of input and output tokens.

Is GLM-5 Turbo open-source?

No. While the base GLM-5 model is open-source under an MIT license, GLM-5 Turbo is proprietary and closed-source. It is available only through Z.ai’s API and third-party providers like OpenRouter. However, Z.ai has stated that capabilities and research findings from GLM-5 Turbo will be incorporated into future open-source model releases.

What is OpenClaw and why is GLM-5 Turbo built for it?

OpenClaw refers to the ecosystem of AI agents that autonomously perform complex tasks by using tools, executing code, browsing the web, and interacting with external APIs. GLM-5 Turbo was specifically built for OpenClaw because agent workflows have unique requirements that general-purpose models struggle with, including reliable tool calling over hundreds of sequential invocations, maintaining coherent state across extended sessions, and graceful error recovery. By training specifically for these patterns, GLM-5 Turbo achieves higher reliability and efficiency in agent tasks.

Can I use GLM-5 Turbo for regular chatbot or conversational AI tasks?

You can, but it is not the best choice for that use case. GLM-5 Turbo was optimized for agent-specific workflows, which means it excels at tool use, instruction decomposition, and long-chain execution. For general conversation, creative writing, or single-turn Q&A, models like Claude Opus 4.5, GPT-5.4, or even the base GLM-5 will likely provide better results. GLM-5 Turbo is best used where its agent-specific optimizations provide a clear advantage.
