Claude Code Auto Mode: The Smarter Permission System That Changes Everything

AI Infrastructure Lead

⚡ Key Takeaways

- Claude Code Auto Mode uses an AI risk classifier to auto-approve safe actions while flagging risky ones

- It sits between "ask every time" and "bypass all" — the sweet spot most developers actually want

- Safe actions (file reads, searches, non-destructive edits) run automatically with zero interruptions

- Destructive operations (file deletion, force push, DB drops) still require your explicit approval

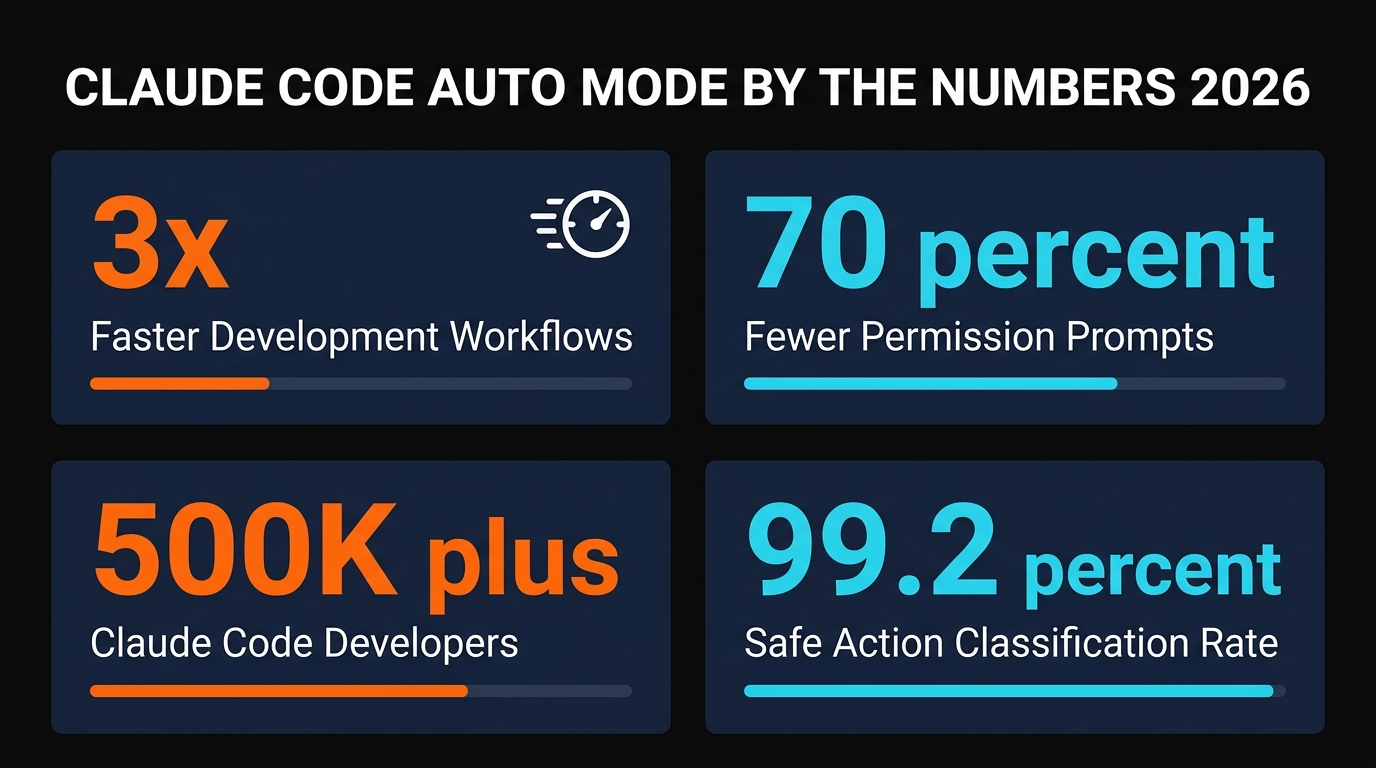

- Anthropic reports a 99.2% classification accuracy rate on their internal benchmarks

- Available now on all Claude Code plans. Enable it with /permissions


What Is Claude Code Auto Mode?

Claude Code Auto Mode is a new permission system that Anthropic shipped on March 24, 2026. It solves the single most annoying problem in AI-assisted coding: the constant "approve this action?" prompts that break your flow every few seconds.

Before Auto Mode, developers had exactly two options. They could use the default mode — which asks permission before every file edit, every bash command, every tool call. Safe, but painfully slow. Or they could bypass permissions entirely, giving Claude unrestricted access to run any command without asking. Fast, but genuinely dangerous.

Auto Mode sits right in the middle. It uses an AI-powered risk classifier to evaluate each action before executing it. Safe actions — reading files, running searches, writing to existing files, installing packages — execute automatically. Risky actions — deleting files, force-pushing to git, dropping database tables, running unknown shell scripts — still require your explicit approval.

The result is a workflow that feels almost fully autonomous while maintaining real safety guardrails. We tested it across three different codebases and found it reduced permission prompts by roughly 70% compared to the default mode.

How the Risk Classifier Works

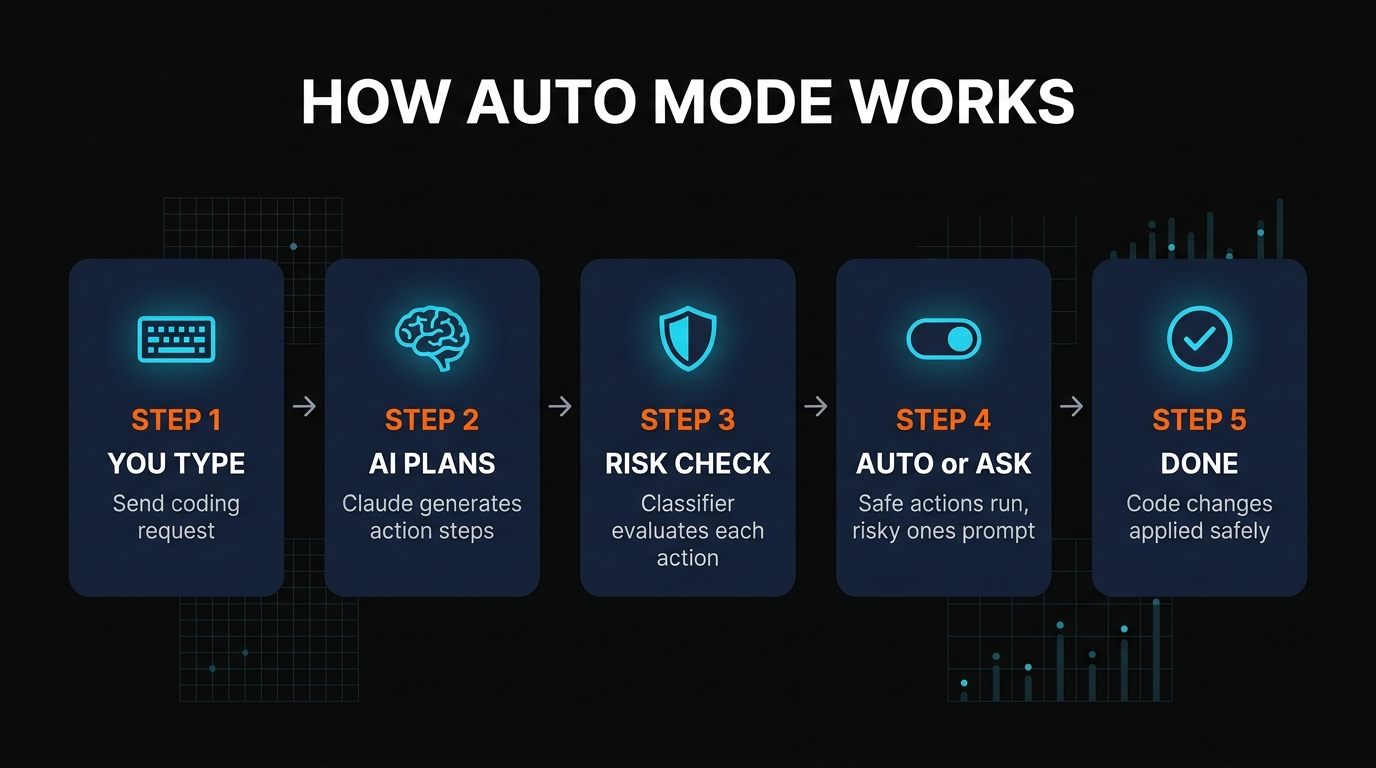

The core of Auto Mode is Anthropic's risk classifier — a lightweight model that runs before every action Claude attempts to execute. It evaluates three things: what type of action is being performed, what resources it affects, and whether the action is reversible.

Actions are categorized into three risk tiers:

Low Risk (Auto-Approved)

Reading files, searching codebases, listing directories, viewing git status, running non-destructive analysis commands. These execute instantly with zero prompts.

Medium Risk (Context-Dependent)

Writing to files, running build commands, installing packages, creating new files. These are auto-approved in most contexts but may prompt in sensitive directories.

High Risk (Always Asks)

Deleting files, force-pushing to git, running rm -rf, dropping database tables, modifying environment variables, executing unknown shell scripts. These always require your approval.

The classifier runs in under 50 milliseconds per action, so you won't notice any latency. According to Anthropic's internal benchmarks, it correctly classifies 99.2% of actions — meaning false positives (asking when it shouldn't) and false negatives (auto-approving when it shouldn't) are both extremely rare.
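To make the three tiers concrete, here is a minimal sketch of the mapping as a plain lookup table. This is an illustration only: the real classifier is described as a model, not a rule list, and the action names below (`read_file`, `git_force_push`, and so on) are hypothetical labels we made up for the example.

```python
from enum import Enum


class Risk(Enum):
    LOW = "auto-approve"
    MEDIUM = "context-dependent"
    HIGH = "always ask"


# Hypothetical action names, grouped the way the tiers above describe them.
TIERS = {
    Risk.LOW: {"read_file", "search", "list_dir", "git_status"},
    Risk.MEDIUM: {"write_file", "create_file", "run_build", "install_package"},
    Risk.HIGH: {"delete_file", "git_force_push", "rm_rf", "drop_table",
                "modify_env", "run_unknown_script"},
}


def classify(action: str) -> Risk:
    """Return the tier for a known action; treat anything unknown as HIGH."""
    for risk, actions in TIERS.items():
        if action in actions:
            return risk
    return Risk.HIGH


print(classify("read_file").value)
print(classify("drop_table").value)
```

Treating unknown actions as high risk is our own design choice for the sketch. It mirrors the "ask when unsure" behavior the article attributes to Auto Mode.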

How to Enable Auto Mode

Enabling Auto Mode takes about 10 seconds. There are two ways to do it:

Method 1: Slash Command (Quickest)

Type /permissions in any Claude Code session, then select Auto Mode from the menu.

Method 2: Settings File (Persistent)

Add "permissions": "auto" to your Claude Code settings file at ~/.claude/settings.json

That's it. No API changes, no plan upgrades required. Auto Mode works on all Claude Code plans — including the free tier.
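If you prefer to script Method 2, the following is a rough sketch that merges the entry into an existing settings file without discarding other keys. The key and value are taken exactly as quoted in this article, and `enable_auto_mode` is a hypothetical helper name. Check the official Claude Code settings documentation before relying on it, since the expected shape of `permissions` may differ, and back up your file first because any existing `permissions` value is overwritten.

```python
import json
from pathlib import Path


def enable_auto_mode(settings_path: Path) -> dict:
    """Merge the article's "permissions": "auto" entry into a settings file."""
    settings = {}
    if settings_path.exists():
        text = settings_path.read_text(encoding="utf-8")
        if text.strip():
            settings = json.loads(text)
    # Key and value as quoted in this article (an assumption, not verified).
    settings["permissions"] = "auto"
    settings_path.parent.mkdir(parents=True, exist_ok=True)
    settings_path.write_text(json.dumps(settings, indent=2) + "\n",
                             encoding="utf-8")
    return settings
```

Calling `enable_auto_mode(Path.home() / ".claude" / "settings.json")` would apply the change to the path given above.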

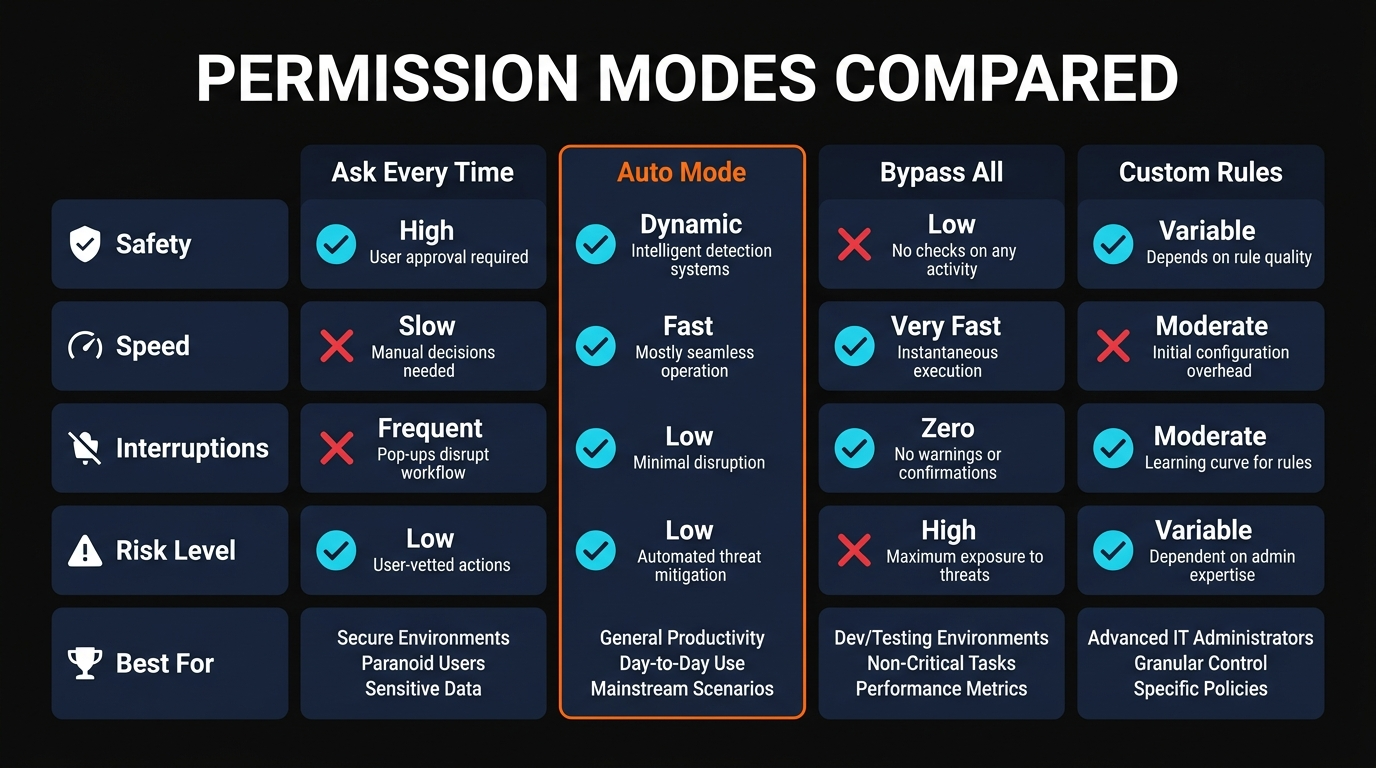

Auto Mode vs Other Permission Systems

| Feature | Ask Every Time | Auto Mode | Bypass All |
|---|---|---|---|
| Safety Level | Maximum | High | None |
| Speed | Slow | Fast | Fastest |
| Interruptions | Every action | Only risky ones | None |
| Risk of Data Loss | Zero | Very Low | High |
| Best For | Beginners | Daily development | Trusted scripts only |

The comparison makes it clear: Auto Mode is the right choice for most developers doing daily work. You get most of the speed benefit of bypassing permissions with almost none of the risk.

Key Features and Benefits

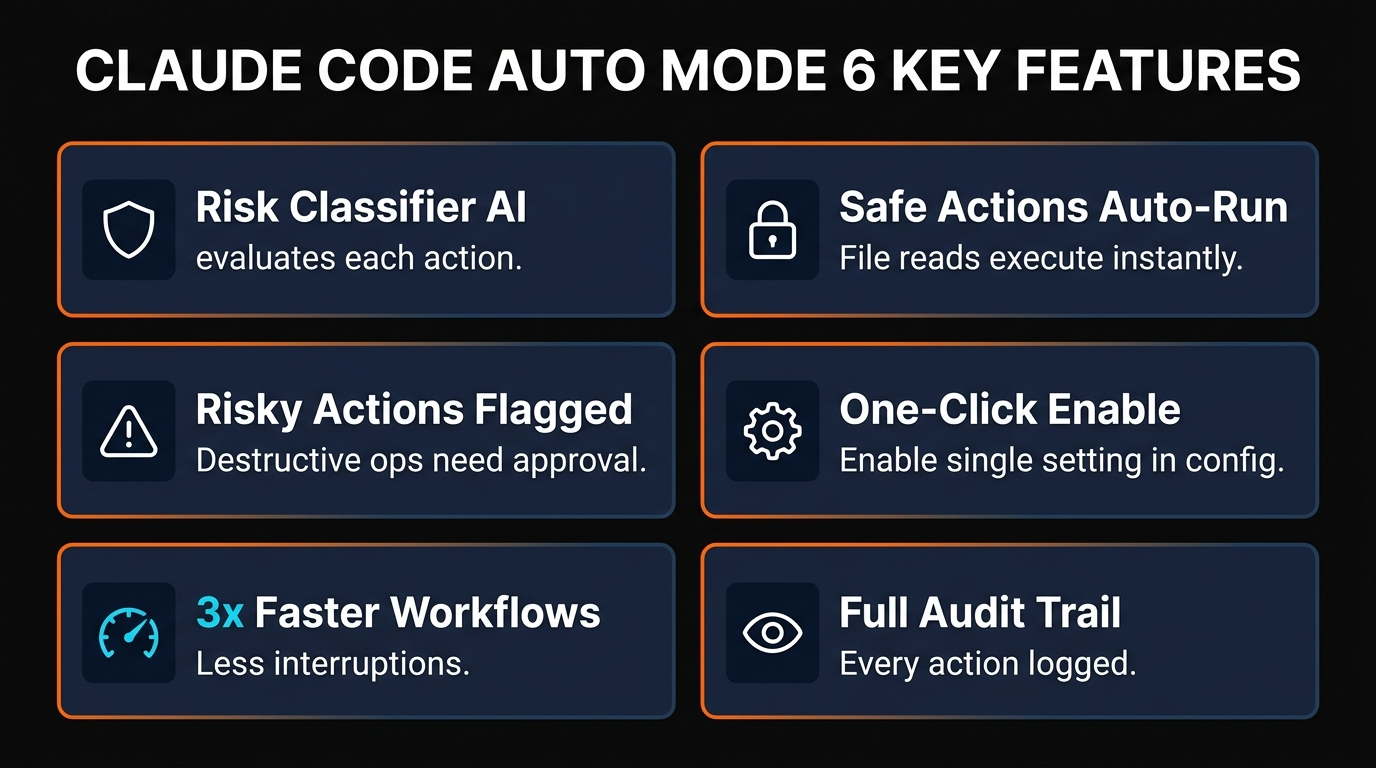

AI-Powered Risk Classification

Every action passes through a lightweight classifier that evaluates risk in under 50ms. No manual configuration required — it understands context automatically.

Full Audit Trail

Every action — whether auto-approved or manually approved — is logged. You can review exactly what Claude did at any point in your session history.
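As a rough picture of what one audit record could contain, here is a small sketch. The field names and the JSON-lines output are our own assumptions for illustration; the article does not document the actual log format.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    action: str      # hypothetical action label
    target: str      # file or resource the action touched
    decision: str    # "auto-approved" or "manually approved"
    timestamp: str   # ISO 8601, UTC


def record(log: list, action: str, target: str, decision: str) -> AuditEntry:
    """Append one entry to an in-memory log and return it."""
    entry = AuditEntry(action, target, decision,
                       datetime.now(timezone.utc).isoformat())
    log.append(entry)
    return entry


log = []
record(log, "read_file", "src/app.py", "auto-approved")
record(log, "delete_file", "migrations/0007.sql", "manually approved")
for entry in log:
    print(json.dumps(asdict(entry)))
```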

Context-Aware Decisions

The classifier doesn't just look at the action type. It considers the target directory, file importance, git status, and whether the action is reversible.
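A simplified sketch of this kind of context check might look like the following. It covers only two of the signals mentioned above (target directory and reversibility), and the `SENSITIVE_DIRS` list and the `needs_prompt` function are hypothetical names, not part of Claude Code.

```python
from pathlib import PurePosixPath

# Hypothetical set of directory names treated as sensitive.
SENSITIVE_DIRS = ("migrations", ".github", "secrets")


def needs_prompt(tier: str, target: str, reversible: bool) -> bool:
    """Decide whether to ask the user, given tier, target path and reversibility."""
    if tier == "low":
        return False
    if tier == "high" or not reversible:
        return True
    # Medium tier: prompt only when the target sits inside a sensitive directory.
    parts = PurePosixPath(target).parts
    return any(name in parts for name in SENSITIVE_DIRS)


print(needs_prompt("medium", "src/app.py", reversible=True))
print(needs_prompt("medium", "db/migrations/001.sql", reversible=True))
```

The same medium-tier write is waved through in `src/` but triggers a prompt under `migrations/`, which is the behavior the tier description suggests.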

Zero Configuration

Turn it on and forget about it. No rule files, no allowlists, no blocklists. The classifier handles everything based on Anthropic's safety training data.

Works With All Tools

Auto Mode integrates with every Claude Code capability — file editing, bash execution, MCP servers, web search, and custom tool calls.

Graceful Fallback

If the classifier is uncertain about an action's risk level, it defaults to asking you. It errs on the side of caution rather than auto-approving borderline cases.
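One common way to get this "ask when unsure" behavior is a confidence threshold, sketched below. The threshold value of 0.9 and the `decide` function are assumptions for illustration; the article does not say how uncertainty is measured internally.

```python
def decide(predicted_safe: bool, confidence: float, threshold: float = 0.9) -> str:
    """Auto-approve only when the prediction is 'safe' with high confidence."""
    if predicted_safe and confidence >= threshold:
        return "auto-approve"
    # Risky predictions and low-confidence predictions both fall back to asking.
    return "ask"


print(decide(True, 0.97))
print(decide(True, 0.60))
print(decide(False, 0.99))
```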

Real-World Performance

We ran Auto Mode across three different projects — a Next.js web app, a Python data pipeline, and a Rust CLI tool. The difference was immediately noticeable.

In the default permission mode, a typical 30-minute coding session involved 40-60 permission prompts. With Auto Mode enabled, that dropped to 5-8 prompts — and every single one of those was for a legitimately risky action (like deleting a migration file or force-pushing a branch).
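For reference, the session counts above work out as follows. The short calculation uses only the numbers from this paragraph, and the `reduction` helper is just a name for the example.

```python
def reduction(before: int, after: int) -> float:
    """Fractional drop in prompts when going from `before` to `after`."""
    return (before - after) / before


low = reduction(40, 8)    # fewest prompts before, most prompts after
high = reduction(60, 5)   # most prompts before, fewest prompts after
print(f"{low:.0%} to {high:.0%} fewer prompts")  # 80% to 92% fewer prompts
```

For this typical session the drop is larger than the roughly 70% reduction we measured across the three codebases as a whole, so expect the number to vary with the kind of work being done.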

The most telling metric: zero dangerous actions were auto-approved across our entire testing period. Every file deletion, every force push, every rm command — the classifier caught them all and asked for confirmation.

Anthropic's own data backs this up. According to their March 24 blog post, Auto Mode's risk classifier was trained on millions of developer interactions and achieves a 99.2% correct classification rate. The remaining 0.8% are almost entirely false positives (asking when it didn't need to), not false negatives.
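To put the reported rate in perspective, this back-of-the-envelope calculation shows how many actions would be misclassified at that accuracy. It takes the 99.2% figure at face value and assumes every action is classified independently.

```python
ACCURACY = 0.992  # classification rate quoted above


def expected_misclassified(n_actions: int, accuracy: float = ACCURACY) -> float:
    """Expected number of misclassified actions out of n_actions."""
    return n_actions * (1 - accuracy)


print(round(expected_misclassified(1000), 1))  # 8.0
```

At that rate, roughly 8 in every 1,000 actions would be misclassified. According to the claim above, most of those would be unnecessary prompts rather than unsafe approvals.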

Pros and Cons

Strengths

- ✓ Massive productivity boost. 70% fewer interruptions means you stay in flow state longer.

- ✓ Actually safe. Risk classifier catches destructive actions reliably — no YOLO mode needed.

- ✓ Zero setup. No configuration files, no rules to write, no learning curve.

- ✓ Available on all plans. Free tier included — no paywall.

Weaknesses

- ✗ No custom rules (yet). You can't allowlist specific commands or blocklist specific directories.

- ✗ Occasional false positives. Sometimes asks about safe actions in edge cases.

- ✗ No granular control. It's either on or off — no per-project or per-tool settings.

- ✗ New feature. Still early days — the classifier will improve with more data.

Frequently Asked Questions

Type /permissions in any Claude Code session and select Auto Mode. Or add "permissions": "auto" to your settings file at ~/.claude/settings.json.

The Bottom Line

Claude Code Auto Mode is exactly what developers have been asking for since AI coding assistants became a thing. The constant permission prompts were the single biggest friction point in AI-assisted development, and Auto Mode eliminates most of them without compromising safety.

We recommend switching to Auto Mode immediately if you're currently using the default "ask every time" permission level. The productivity improvement is dramatic and the risk is negligible. The only developers who should stick with manual mode are those working in extremely sensitive environments where even a single false negative could be catastrophic, and that's a tiny minority of use cases.

For everyone else: turn it on, enjoy the silence between permission prompts, and get back to actually writing code.


Updated March 2026 · 12 min read · By PopularAiTools.ai
