Kimi Code CLI Review 2026: The $19/mo Claude Code Rival (Verified)

AI Infrastructure Lead

TL;DR — Verified Review

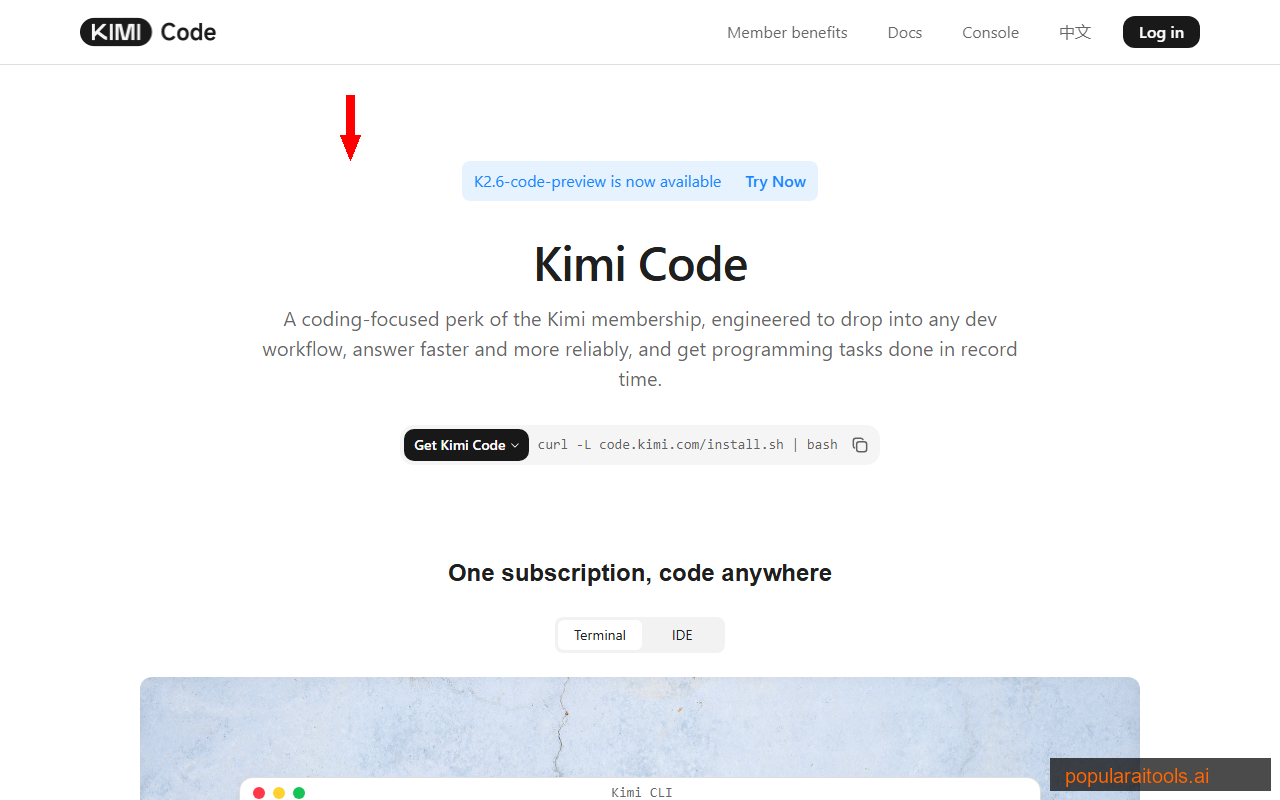

VERIFIED: Kimi Code CLI is Moonshot AI's open-source coding agent, and it competes credibly with Claude Code at a fraction of the cost. The K2.6 model brings reasoning that beta testers describe as "very Opus," it can run up to 100 parallel subagents where Claude Code processes agents sequentially, and the membership costs about $19/month. The 256K context limit (vs Claude's 1M) is the main trade-off.

What Is Kimi Code CLI?

Kimi Code CLI is Moonshot AI's open-source, terminal-first AI coding agent — a direct competitor to Claude Code and Gemini CLI. It runs in your terminal, reads and edits code, executes shell commands, searches the web, and autonomously plans multi-step development tasks. Think of it as having a senior developer in your terminal that costs $19/month instead of $200.

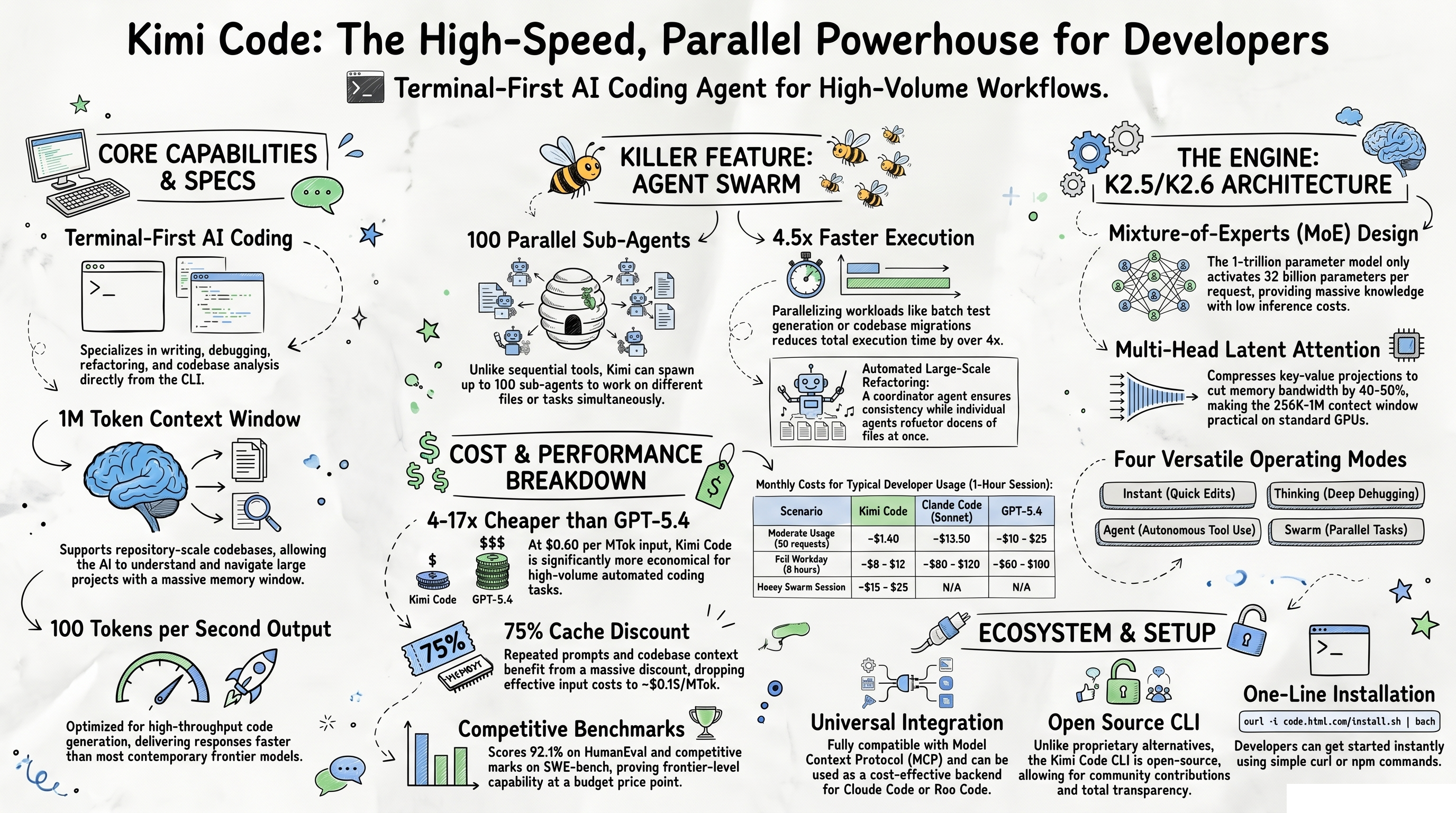

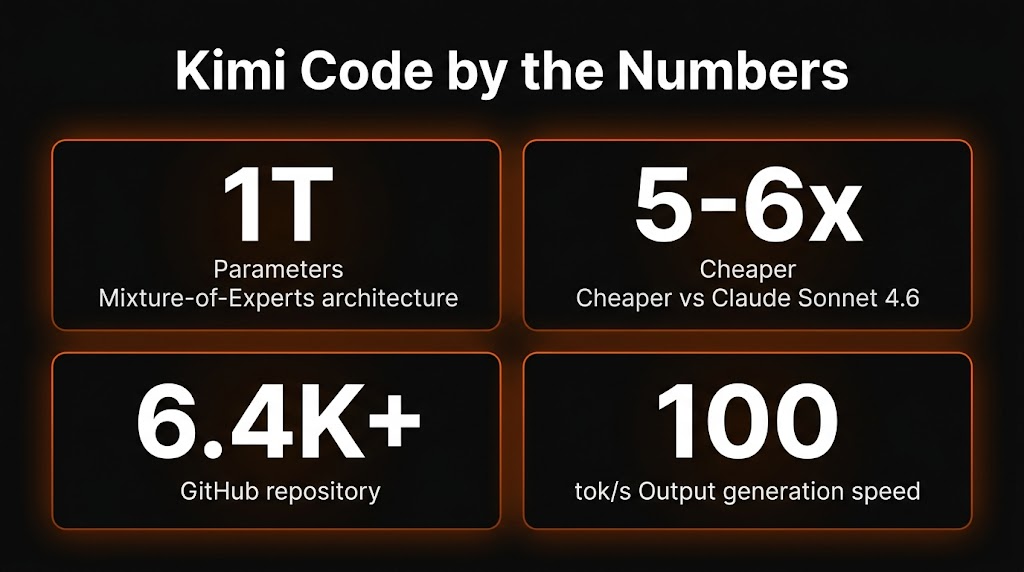

Built on Moonshot AI's K2.6 Code Preview model (a trillion-parameter Mixture-of-Experts architecture), Kimi Code outputs at 100 tokens/second with a 256K context window. It's open source under Apache 2.0, has 6,400+ GitHub stars, and supports the same MCP tool ecosystem as Claude Code — meaning your existing MCP servers work with both.

The headline feature that sets it apart: up to 100 parallel subagents. While Claude Code processes agents sequentially, Kimi Code can spawn and manage up to 100 concurrent agents with 30 simultaneous requests. For large-scale refactoring, multi-file migrations, or test generation across a codebase, independent pieces of work can run side by side instead of queuing one after another.
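To make the "100 subagents, 30 simultaneous requests" relationship concrete, here is a minimal sketch of bounded concurrency in plain Python. It does not use Kimi Code's actual API: `run_subagent` and `run_swarm` are hypothetical names, and the sleep is a stand-in for a model request. It only illustrates how 100 tasks can be launched together while a semaphore caps in-flight requests at 30.

```python
import asyncio

MAX_SUBAGENTS = 100  # from the article: up to 100 parallel subagents
MAX_IN_FLIGHT = 30   # from the article: 30 simultaneous requests


async def run_subagent(task_id: int, gate: asyncio.Semaphore, stats: dict) -> int:
    """Hypothetical subagent: waits for a request slot, then does fake work."""
    async with gate:
        stats["in_flight"] += 1
        stats["peak"] = max(stats["peak"], stats["in_flight"])
        await asyncio.sleep(0.01)  # stand-in for a real model request
        stats["in_flight"] -= 1
    return task_id


async def run_swarm(n_tasks: int = MAX_SUBAGENTS) -> tuple[list[int], int]:
    """Launch n_tasks subagents at once; the semaphore limits concurrent requests."""
    gate = asyncio.Semaphore(MAX_IN_FLIGHT)
    stats = {"in_flight": 0, "peak": 0}
    results = await asyncio.gather(
        *(run_subagent(i, gate, stats) for i in range(n_tasks))
    )
    return list(results), stats["peak"]


if __name__ == "__main__":
    done, peak = asyncio.run(run_swarm())
    print(f"{len(done)} subagents finished, peak concurrent requests: {peak}")
```

The design point is that the two limits are independent: the number of subagents describes how much work is queued, while the request cap describes how much of it is actually running at any instant.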

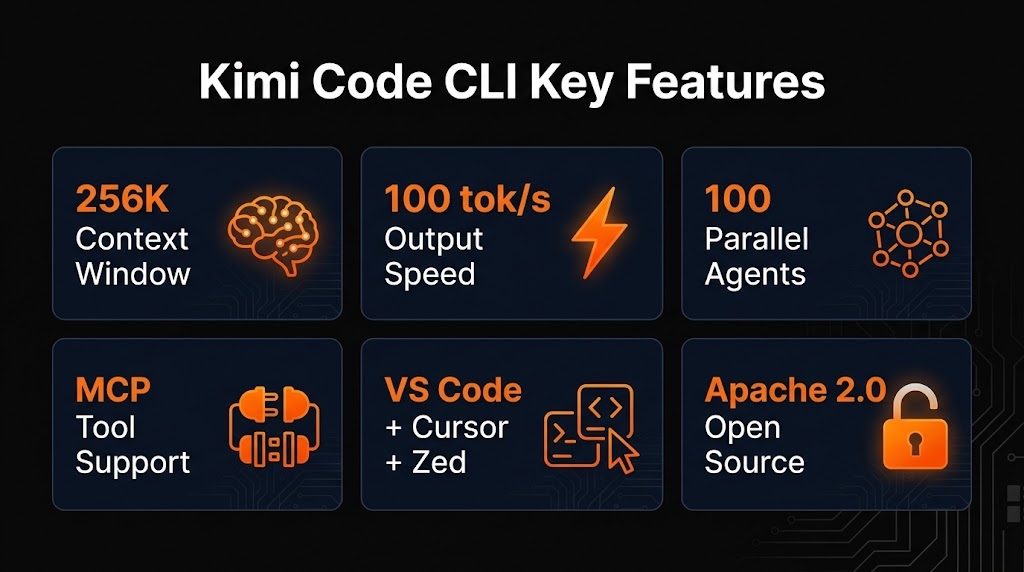

Key Features

256K context window: Process entire codebases in a single session. Not as large as Claude's 1M, but sufficient for most projects and significantly more than GPT-based alternatives.

100 tokens/second output: 25% faster than Claude Code's ~80 tok/s. Code suggestions and file edits appear noticeably quicker, especially for large generation tasks.

100 parallel subagents: Spawn up to 100 concurrent subagents with 30 simultaneous requests. Process entire test suites, refactor multiple files, or run migrations in parallel.

MCP support: Full MCP compatibility. Your existing MCP servers, tools, and connectors work with Kimi Code just like they do with Claude Code.

IDE integration: Works with VS Code, Cursor, and Zed out of the box. Terminal-first but IDE-ready, so you can use it wherever your workflow lives.

Open source: Fully open source CLI with 6,400+ GitHub stars. Inspect the code, contribute, or fork it; the CLI is not a black box. The model itself is accessed via API.

Quick-Reference: Features & Capabilities

What is the size of the context window supported by the Kimi K2.5 model?

256,000 tokens (256K)

What is the maximum context window supported by the K2.6 model according to recent reviews?

1 million tokens

What is the optimized output speed of the Kimi Code platform?

100 tokens per second

What does 'Agent mode' allow the Kimi K2.5 model to do autonomously?

Use tools such as file I/O and shell commands

How many sub-agents can Kimi Code coordinate simultaneously in 'Agent Swarm' mode?

Up to 100 parallel sub-agents

By what factor does Agent Swarm mode increase execution speed on parallelizable tasks?

4.5x faster
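The 4.5x figure is far below the 100x that 100 sub-agents might suggest. One way to reason about that gap is Amdahl's law, which relates speedup to the fraction of a task that can run in parallel. The sketch below is only an illustration under that model; the article does not say how the 4.5x number was measured, and the function names are made up for this example.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Amdahl's law: speedup when only part of the work can be parallelized."""
    return 1.0 / ((1.0 - parallel_fraction) + parallel_fraction / workers)


def parallel_fraction_for(speedup: float, workers: int) -> float:
    """Invert Amdahl's law: which parallel fraction yields the given speedup?"""
    return (1.0 - 1.0 / speedup) / (1.0 - 1.0 / workers)


# Figures quoted in the article: 4.5x speedup with up to 100 sub-agents.
p = parallel_fraction_for(4.5, 100)
print(f"Implied parallel fraction: {p:.1%}")  # about 78.6%
print(f"Check: {amdahl_speedup(p, 100):.2f}x")
```

Under this simplified model, a 4.5x gain with 100 workers corresponds to roughly 79% of the work being parallelizable, with the remaining serial portion (planning, merging results) dominating the runtime.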

The K2.6 Model: What Changed

Moonshot AI rolled out K2.6 Code Preview on April 13, 2026, and the improvements are significant. The model produces deeper reasoning traces, cleaner multi-step agent plans, and more reliable tool call execution compared to K2.5.

Beta testers consistently describe the thinking style as "very Opus" — K2.6 frequently produces "Let me..." prefixes during internal reasoning, similar to Claude Opus 4.6's verbose chain-of-thought. This isn't just cosmetic; it reflects genuinely deeper reasoning that translates to more accurate code generation, especially for complex refactoring and multi-file changes.

The architecture remains a 1-trillion-parameter Mixture-of-Experts model, but only 32 billion parameters activate per token, which cuts computation by roughly 97% compared with a dense model of equivalent size. This is how Moonshot achieves the aggressive pricing.
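The "roughly 97%" figure follows directly from the two parameter counts. The short calculation below reproduces it, and also computes the share of experts routed per token using the 384-expert and 8-active-expert figures from the quick-reference section later in this review. The helper name `share` is just for this example.

```python
TOTAL_PARAMS = 1_000_000_000_000  # 1 trillion total parameters (from the article)
ACTIVE_PARAMS = 32_000_000_000    # 32 billion active parameters (from the article)
TOTAL_EXPERTS = 384               # from the article's quick-reference section
ACTIVE_EXPERTS = 8                # from the article's quick-reference section


def share(active: int, total: int) -> float:
    """Fraction of the total that is active."""
    return active / total


param_share = share(ACTIVE_PARAMS, TOTAL_PARAMS)
expert_share = share(ACTIVE_EXPERTS, TOTAL_EXPERTS)

print(f"Active parameters:       {param_share:.1%}")      # 3.2%
print(f"Reduction vs. dense:     {1 - param_share:.1%}")  # 96.8%
print(f"Experts routed per token: {expert_share:.1%}")    # 2.1%
```

The parameter share (3.2%) is somewhat higher than the expert share (2.1%). That would be consistent with some parameters being active for every token regardless of routing, though the article does not break the numbers down.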

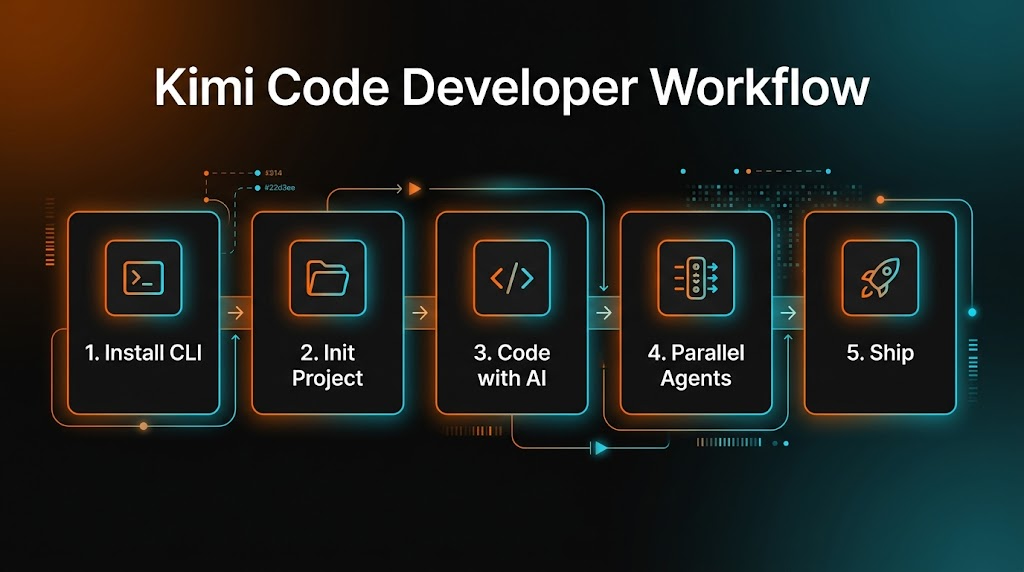

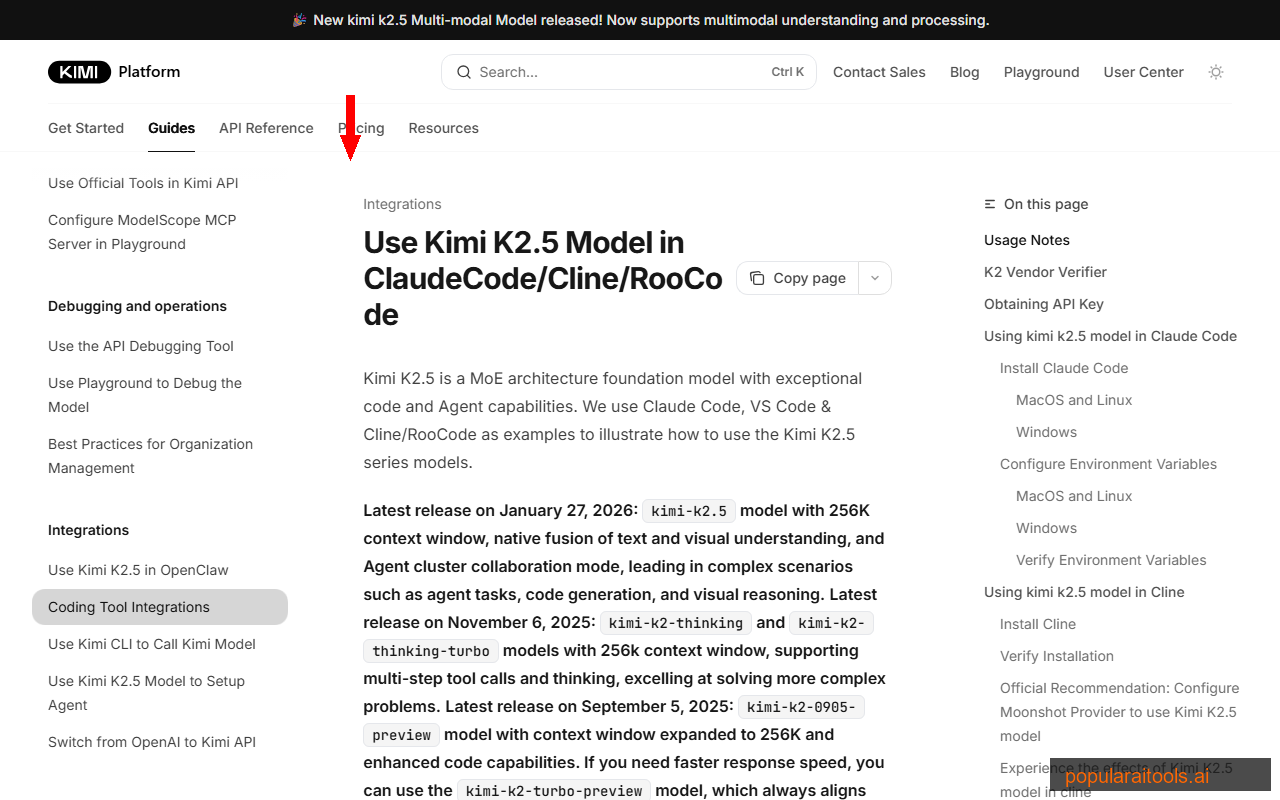

How to Get Started

Getting started takes about 30 seconds:

curl -L code.kimi.com/install.sh | bash

One command installs the CLI. Then run kimi in any project directory.

Three ways to access Kimi Code:

- Terminal CLI: The primary interface. Run `kimi` in any directory and start coding.

- IDE Extension: Available for VS Code, Cursor, and Zed. Integrates into your editor's sidebar.

- Web Console: Browser-based access at kimi.com/code for quick tasks without local setup.

Pricing & Plans

Membership (~$19/month) includes:

- CLI + IDE + Web access

- K2.6 Code Preview model

- 5-hour rolling token quota

- 300-1,200 API calls per window

- 30 concurrent requests

- 100 parallel subagents

- Priority compute

- MCP tool support

- 75% cache discount on repeated prompts
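A rolling quota differs from a fixed daily or hourly reset: each call stops counting against the limit once it is more than five hours old. The sketch below shows one common way to model that, a sliding-window counter. It is an assumption about how such a quota behaves in general, not a description of Moonshot's implementation, and `RollingQuota` is a hypothetical class name.

```python
from collections import deque

WINDOW_SECONDS = 5 * 60 * 60  # 5-hour rolling window (from the article)


class RollingQuota:
    """Sliding-window call counter: calls older than the window no longer count."""

    def __init__(self, limit: int, window: float = WINDOW_SECONDS):
        self.limit = limit
        self.window = window
        self._calls = deque()  # timestamps of accepted calls, oldest first

    def _expire(self, now: float) -> None:
        # Drop timestamps that have aged out of the window.
        while self._calls and now - self._calls[0] >= self.window:
            self._calls.popleft()

    def try_call(self, now: float) -> bool:
        """Record a call at time `now` if quota remains; return whether it was accepted."""
        self._expire(now)
        if len(self._calls) >= self.limit:
            return False
        self._calls.append(now)
        return True

    def remaining(self, now: float) -> int:
        self._expire(now)
        return self.limit - len(self._calls)


# Example with the lower bound quoted in the article (300 calls per window).
quota = RollingQuota(limit=300)
for second in range(300):
    quota.try_call(float(second))
print(quota.try_call(300.0))                   # False: window is full
print(quota.try_call(float(WINDOW_SECONDS)))   # True: the first call has aged out
```

In practice this means heavy bursts are throttled, but capacity returns gradually rather than all at once at a reset time.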

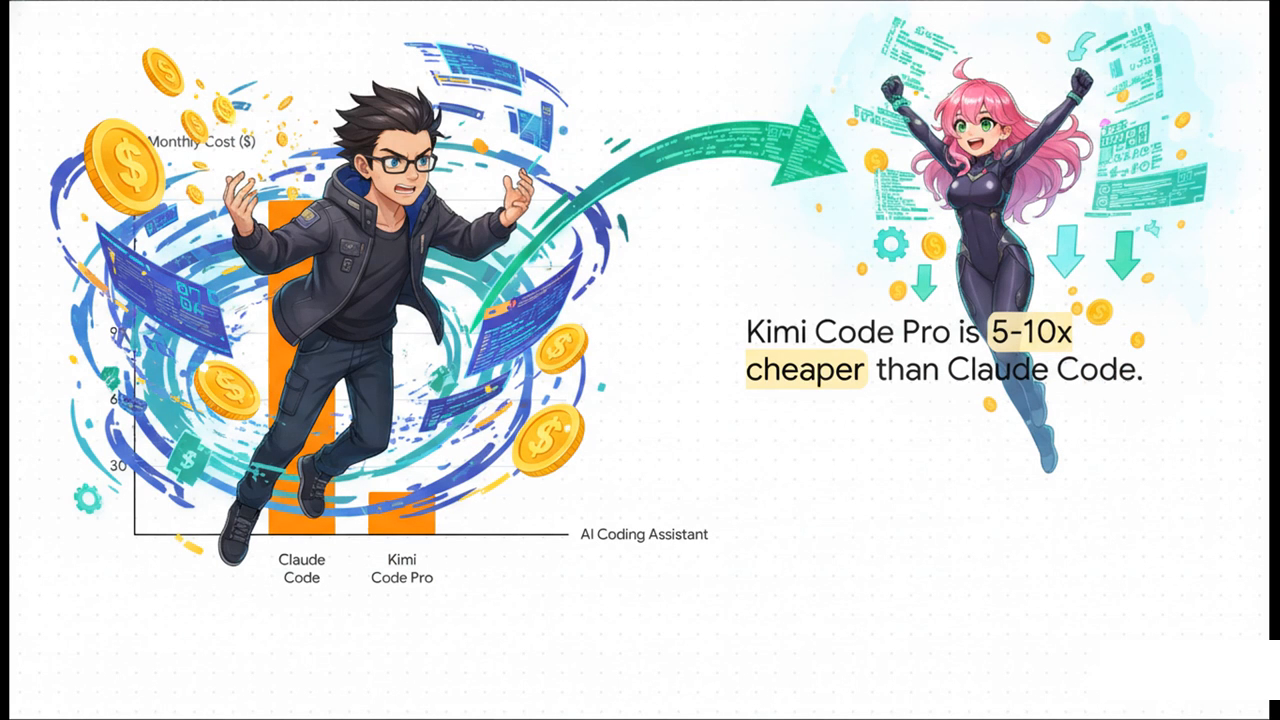

API pricing compared:

- 5-6x cheaper than Claude Sonnet 4.6

- 4-17x cheaper than GPT-5.4

- 1-hour session: ~$1.40 (vs $13.50 Claude)

- Full workday: $8-12 (vs $80-120 Claude)
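Session costs like these come down to token counts multiplied by per-million-token rates. The estimator below uses the API rates quoted elsewhere in this review ($0.60 input, $2.50 output per MTok) and the 75% cache discount. It assumes the discount applies to cached input tokens only, which the article does not spell out, and the token counts in the example are invented for illustration.

```python
INPUT_USD_PER_MTOK = 0.60   # from the article
OUTPUT_USD_PER_MTOK = 2.50  # from the article
CACHE_DISCOUNT = 0.75       # from the article; assumed to apply to cached input only


def estimate_cost(input_tokens: int, output_tokens: int, cached_input_tokens: int = 0) -> float:
    """Estimate API cost in USD for one session."""
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    fresh_input = input_tokens - cached_input_tokens
    cached_rate = INPUT_USD_PER_MTOK * (1 - CACHE_DISCOUNT)
    total = (
        fresh_input * INPUT_USD_PER_MTOK
        + cached_input_tokens * cached_rate
        + output_tokens * OUTPUT_USD_PER_MTOK
    )
    return total / 1_000_000


# Hypothetical session: 1M input tokens, 200K output tokens.
print(f"No cache:   ${estimate_cost(1_000_000, 200_000):.2f}")           # $1.10
print(f"80% cached: ${estimate_cost(1_000_000, 200_000, 800_000):.2f}")  # $0.74
```

Agentic sessions tend to resend large amounts of context, so the share of input that hits the cache can matter as much as the headline rate.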

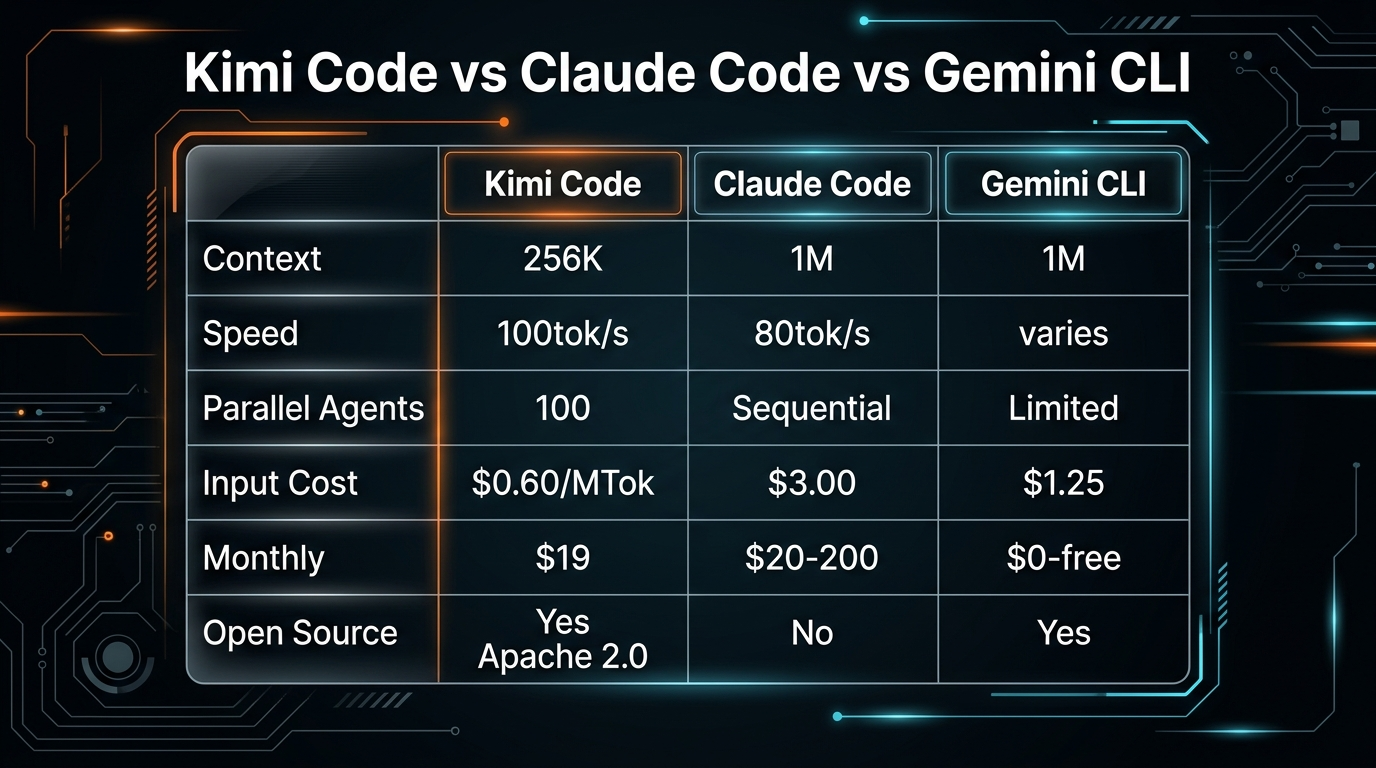

Kimi Code vs Claude Code vs Gemini CLI

| Feature | Kimi Code | Claude Code | Gemini CLI |
|---|---|---|---|
| Context Window | 256K tokens | 1M tokens | 1M tokens |
| Output Speed | 100 tok/s | ~80 tok/s | Varies |
| Parallel Agents | Up to 100 | Sequential | Limited |
| Input Cost/MTok | $0.60 | $3.00 | Free (Gemini 2.5) |
| Monthly Cost | ~$19 | $20-200 | Free |
| Open Source | Yes (Apache 2.0) | No | Yes |
| MCP Support | Yes | Yes | Yes |
| Reasoning Quality | Strong (K2.6) | Best (Opus 4.6) | Good |

AI Coding CLI Comparison

| Tool Name | Context Window | Speed | Parallel Agents | Pricing | Open Source | MCP Support | IDE Integration | Source |
|---|---|---|---|---|---|---|---|---|
| Kimi Code CLI | 1M tokens (K2.6) / 256K tokens (K2.5) | 100 tok/s | Up to 100 | $19/mo (Membership/Pro) + API fees ($0.60/$2.50 per MTok) | Yes | Yes | VS Code extension | [1, 2] |
| Claude Code | 1M tokens | ~80 tok/s | 1 (sequential) | $20-200/mo ($100-$200/mo via Claude Max) | No | Yes | VS Code extension | [1, 2] |
| Cursor | Not in source | Not in source | Not in source | $20/mo (Pro); $40/seat (Business) | Not in source | Not in source | IDE-based (Visual Studio Code fork) | [1, 2] |
| Gemini CLI | Not in source | Not in source | Not in source | Free (with Gemini API limits) | Not in source | Not in source | Not in source | [2] |

Our take: Kimi Code wins on cost, speed, and parallelism. Claude Code wins on context window and reasoning depth. Gemini CLI wins on being free. For most developers, Kimi Code offers the best value — you get 80-90% of Claude Code's capability at 10-20% of the cost. The 256K context limit only becomes an issue with very large monorepos.
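Whether 256K tokens is enough for a given repository can be estimated before committing to a tool. The sketch below walks a directory and approximates the token count with a rule of thumb of about 4 characters per token. That ratio is an assumption, not the model's real tokenizer, and the file-suffix list and skipped directories are arbitrary choices for illustration.

```python
import os

CONTEXT_TOKENS = 256_000  # from the article
CHARS_PER_TOKEN = 4       # rough rule-of-thumb assumption, not a real tokenizer
SOURCE_SUFFIXES = (".py", ".ts", ".js", ".go", ".rs", ".java", ".md")
SKIP_DIRS = {".git", "node_modules"}


def estimate_tokens(root: str) -> int:
    """Approximate the token count of source files under `root`."""
    total_chars = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune directories in place so os.walk does not descend into them.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        for name in filenames:
            if name.endswith(SOURCE_SUFFIXES):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as fh:
                    total_chars += len(fh.read())
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root: str, budget: int = CONTEXT_TOKENS) -> bool:
    """True if the estimated token count is within the context budget."""
    return estimate_tokens(root) <= budget
```

Calling `fits_in_context(".")` from a project root gives a quick first answer. A coding agent rarely loads every file at once, so exceeding the estimate does not by itself rule the tool out.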

For a deeper look at where Claude Code excels, see our best AI coding tools comparison and Claude Code commands guide.

Quick-Reference: Pricing & Cost

How much does the standard Kimi Code monthly membership cost?

Approximately $19 per month

What is the API input cost per million tokens (MTok) for Kimi K2.5?

$0.60

What is the API output cost per million tokens (MTok) for Kimi K2.5?

$2.50

What is the percentage of the cache discount applied to repeated prompts in Kimi Code?

75% discount

Under the rolling quota system, how long is the window used to calculate token allocation?

5 hours

Pros and Cons

Pros

- 5-6x cheaper than Claude Code

- 100 parallel agents (vs sequential)

- 100 tok/s output speed (25% faster)

- Open source (Apache 2.0)

- MCP tool compatibility

- VS Code, Cursor, Zed integration

- K2.6 reasoning is "very Opus"

- 75% cache discount on API

- 6,400+ GitHub stars, active community

- One-command install

Cons

- 256K context (vs Claude's 1M)

- K2.6 still in preview — may have rough edges

- Smaller ecosystem than Claude Code

- No scheduled tasks/routines equivalent

- Chinese company — data sovereignty concerns for some

- Less documentation and community resources

Quick-Reference: K2.6 Model Architecture

Which specific Large Language Model powers the Kimi Code CLI as of early 2026?

Kimi K2.5

What is the total parameter count of the Kimi K2.5 architecture?

1 trillion total parameters

How many parameters are activated per token in the Kimi K2.5 Mixture-of-Experts model?

32 billion active parameters

How many total layers are contained within the Kimi K2.5 architecture?

61 layers

The Kimi K2.5 model contains a total of _____ individual experts.

384

How many experts are activated for each individual token in K2.5?

8 experts

Frequently Asked Questions

Is Kimi Code CLI better than Claude Code?

It depends on priorities. Kimi is 5-6x cheaper with 100 parallel agents, but Claude has a 1M context window and deeper reasoning. For cost-sensitive teams, Kimi is compelling. For complex large-codebase work, Claude still leads.

How much does Kimi Code cost?

~$19/month membership with 5-hour rolling quota. API: $0.60/MTok input, $2.50/MTok output. A full workday costs $8-12 vs $80-120 for Claude Code.

Is Kimi Code open source?

Yes. Apache 2.0 on GitHub (MoonshotAI/kimi-cli) with 6,400+ stars. The CLI is fully inspectable and forkable.

What is the K2.6 model?

K2.6 Code Preview is the latest from Moonshot AI (April 2026). Trillion-parameter MoE architecture, deeper reasoning, better agent planning. Beta testers call it "very Opus."

Does it support MCP servers?

Yes. Full MCP compatibility — your existing Claude Code MCP servers work with Kimi Code.

Can it really run 100 parallel agents?

Yes. Up to 100 subagents with 30 simultaneous requests. This is a genuine differentiator — Claude Code processes agents sequentially.

Final Verdict

Kimi Code CLI is a production-ready coding agent, not a toy or a demo. It competes with Claude Code on capability while dramatically undercutting it on price. The K2.6 model's reasoning quality surprised us, running up to 100 parallel subagents is something Claude Code does not offer, and the open-source CLI means you are never locked in.

The trade-offs are real: 256K context (vs 1M) matters for large codebases, the ecosystem is smaller, and there are no scheduled tasks/routines equivalent. But for the vast majority of development tasks — writing features, fixing bugs, refactoring, test generation — Kimi Code delivers at a fraction of the cost.

Who should try it: Any developer currently spending $100+/month on Claude Code who wants to cut costs without cutting capability. Also great for teams running many parallel tasks (test suites, migrations, multi-file refactors).

Who should stick with Claude Code: If you work with very large codebases that need the 1M context window, or if you rely heavily on Claude Code's scheduled tasks/routines ecosystem.
