61 AI Agents GitHub: How One Repo Turns Your IDE Into a Full AI Agency (Setup Guide + All 61 Agents Listed)

We installed all 61 agents, tested them across three IDEs, and broke down exactly which ones are worth your time.

Table of Contents

- What Is the 61 AI Agents GitHub Project?

- Why This Blew Up: 10K Stars in 7 Days

- All 61 Agents Listed by Division

- Which IDEs and Tools Are Supported

- Step-by-Step Setup Guide

- How We Tested All 61 Agents

- 5 Practical Use Cases That Actually Work

- What Developers Are Saying

- Limitations and Honest Caveats

- FAQ

- Final Verdict: Is It Worth Setting Up?

What Is the 61 AI Agents GitHub Project?

The 61 AI agents GitHub project, officially called “The Agency,” is an open-source repository that transforms your existing AI coding tools into a team of 61 specialized agents. Instead of prompting a single general-purpose AI assistant, you activate purpose-built specialists — each with its own identity, workflow, deliverables, and success metrics.

Think of it this way: a general AI assistant is like hiring one person to do everything at your company. The 61 AI agents GitHub project is like hiring an entire agency — frontend developers, UX designers, growth marketers, QA testers, DevOps engineers, and project managers — all living inside your IDE.

Each agent is defined as a structured markdown file. When you activate one, your AI tool (Claude Code, Cursor, Gemini CLI, or others) adopts that agent’s specialized persona, follows its defined processes, and delivers outputs tailored to that role. The files are not just prompts. They are full role definitions with workflows, deliverable templates, and quality standards baked in.
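To make the idea of a "structured markdown file" concrete, here is a minimal sketch of what such a role definition might look like. The file name, headings, and wording are hypothetical and written for illustration (they mirror the identity, workflow, deliverables, and success metrics described above); they are not copied from the repository.

```bash
# Hypothetical agent definition: the layout and section names are assumptions,
# not the repository's actual format.
demo_dir="$(mktemp -d)"
cat > "$demo_dir/example-agent.md" <<'EOF'
# Example Reviewer Agent

## Identity
You are a meticulous code reviewer.

## Workflow
1. Read the diff.
2. List risks by severity.

## Deliverables
- A review summary with concrete suggestions.

## Success Metrics
- Every high-severity risk has a proposed fix.
EOF

# List the second-level sections that make up the role definition.
grep '^## ' "$demo_dir/example-agent.md"
```

Opening a real agent file from the repo in your editor is the quickest way to see how the actual definitions are organized.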

The project was created by msitarzewski on GitHub and exploded in popularity in March 2026, racking up over 10,000 stars in its first week.

Why This Blew Up: 10K Stars in 7 Days

We have seen plenty of “awesome prompt” repositories come and go. Most collect stars and collect dust. The 61 AI agents GitHub project broke that pattern for a few specific reasons:

It solves a real problem. Developers were already using Claude Code and Cursor for coding tasks, but struggling with consistency. One prompt gives you great output; the next gives you garbage. By defining structured agent roles, the project enforces consistent quality across sessions.

The barrier to entry is absurdly low. Claude Code users clone the repo and run a single copy command; everyone else runs a short install script. No dependencies. No API keys to configure. No Docker containers. Just markdown files dropped into the right directory.

It hit social media at the right time. The repo was shared widely across X (Twitter), LinkedIn, and developer communities. Posts about it pulled hundreds of likes and reposts within hours. The developer community was primed for exactly this kind of tool — a practical, no-nonsense way to get more from AI coding assistants.

The documentation is beginner-friendly. Unlike many open-source projects that assume expert-level knowledge, The Agency’s README is written so even first-time users can get running in minutes.

All 61 Agents Listed by Division

The 61 AI agents GitHub project organizes its agents into 9 divisions. We have listed every single one below, because we could not find a complete list anywhere else when we were researching this.

1. Engineering Division (7 Agents)

2. Design Division (7 Agents)

3. Marketing Division (8 Agents)

4. Product Division (3 Agents)

5. Project Management Division (5 Agents)

6. Testing Division (7 Agents)

7. Support Division (6 Agents)

8. Spatial Computing Division (6 Agents)

9. Specialized Division (6 Agents)
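Because the repo keeps growing, the per-division counts in your checkout may differ from the ones above. A small sketch for checking them yourself, assuming each division is a top-level folder of `.md` files in the cloned repository (the function name `count_by_division` is ours, not part of the project):

```bash
# For each immediate subdirectory of a checkout, print "<name> <number of .md files>".
count_by_division() {
  for d in "$1"/*/; do
    [ -d "$d" ] || continue
    n="$(find "$d" -type f -name '*.md' | wc -l | tr -d ' ')"
    echo "$(basename "$d") $n"
  done
}

# Usage, once the repository has been cloned:
#   count_by_division agency-agents
```

Non-division folders (for example a scripts directory) will show up in the output too, so read the numbers with that in mind.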

Which IDEs and Tools Are Supported

We tested the 61 AI agents GitHub project across multiple environments. Here is what works:

The install script auto-detects which tools you have installed and configures the agents accordingly. If you prefer manual setup, every agent file is plain markdown — you can copy them anywhere your tool reads custom instructions.
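If you want a rough idea of what the script might find before running it, you can check which tool commands are on your `PATH`. This is only a sketch of one way detection could work, not the install script's actual logic, and the command names `claude`, `cursor`, and `gemini` are assumptions about how those tools are installed on your machine.

```bash
# Print each given command name that is found on PATH.
detect_tools() {
  for name in "$@"; do
    if command -v "$name" >/dev/null 2>&1; then
      echo "$name"
    fi
  done
}

# Command names below are assumptions; adjust them to match your setup.
detect_tools claude cursor gemini
```

An empty result only means those commands are not on your `PATH`; a tool installed as a desktop app may still be present.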

Step-by-Step Setup Guide

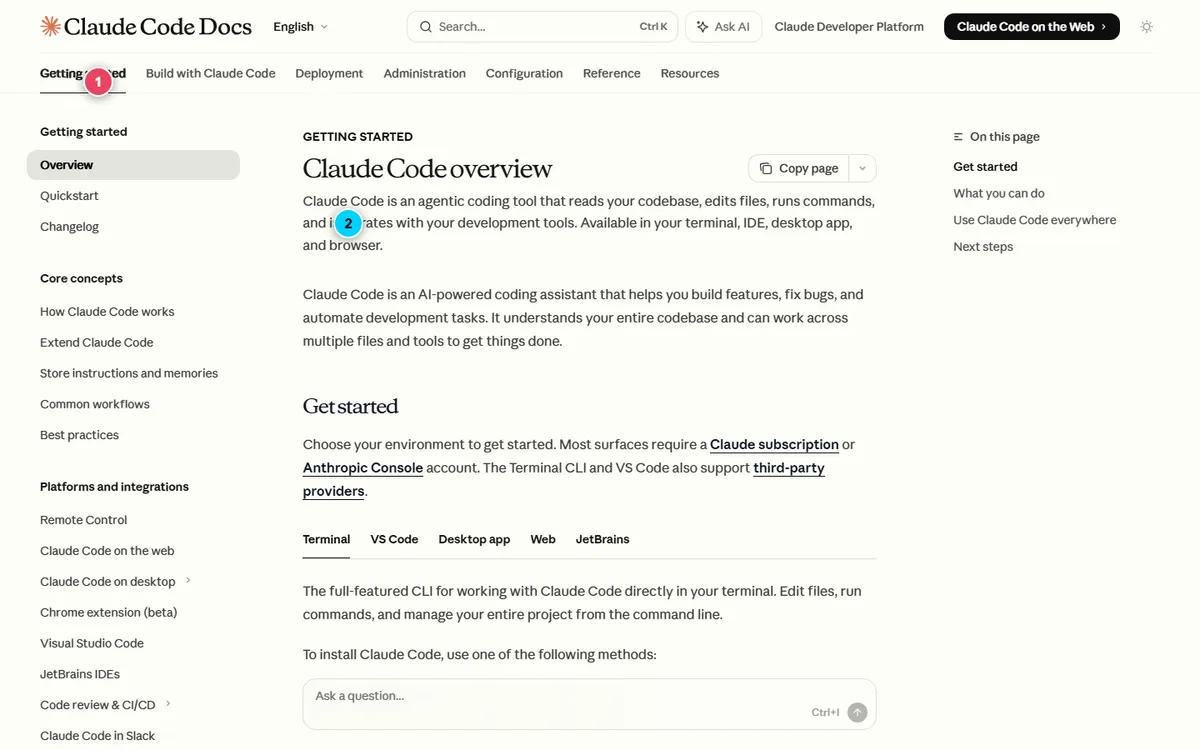

We set up all 61 agents across Claude Code, Cursor, and Gemini CLI. Here is exactly how to do it.

Option 1: Claude Code (Fastest)

```bash
# Clone the repository
git clone https://github.com/msitarzewski/agency-agents.git

# Copy all agent files to your Claude Code agents directory
cp -r agency-agents/* ~/.claude/agents/
```

That is it. Two commands. Now when you start a Claude Code session, you can activate any agent:

```
“Activate Frontend Developer mode and help me build a React dashboard component.”
```

```
“Switch to QA Engineer. Review this pull request for edge cases and write a test plan.”
```

```
“I need the Growth Hacker agent. Analyze our landing page conversion funnel.”
```

Option 2: Universal Install Script (All Other Tools)

```bash
# Clone the repository
git clone https://github.com/msitarzewski/agency-agents.git
cd agency-agents

# Generate integration files for all supported tools
./scripts/convert.sh

# Interactive install (auto-detects your tools)
./scripts/install.sh

# Or target a specific tool
./scripts/install.sh --tool cursor
./scripts/install.sh --tool gemini
./scripts/install.sh --tool windsurf
```

Option 3: Manual Setup (Any Tool)

If your tool supports custom system prompts or instruction files, just copy the relevant .md file into whatever directory your tool reads from. Each agent file is self-contained — no dependencies on other files.

```bash
# Example: grab just the agents you need
cp agency-agents/engineering/engineering-frontend.md ~/your-tool-config/
cp agency-agents/testing/testing-qa-engineer.md ~/your-tool-config/
cp agency-agents/marketing/marketing-growth-hacker.md ~/your-tool-config/
```
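If you would rather pull in a whole division than pick files one by one, a small helper can do the copying. This is a sketch under the assumption that each division is a folder of `.md` files at the top of the cloned repo, as the paths above suggest; the function name `install_division` and the destination path are placeholders of our own.

```bash
# Copy every .md file from one division folder into a destination directory.
# Usage: install_division <repo-dir> <division> <dest-dir>
install_division() {
  src="$1/$2"
  dest="$3"
  if [ ! -d "$src" ]; then
    echo "no such division: $src" >&2
    return 1
  fi
  mkdir -p "$dest"
  cp "$src"/*.md "$dest"/
}

# Example (placeholder destination, adjust to your tool):
#   install_division agency-agents engineering ~/your-tool-config
```

The helper creates the destination directory if it is missing and returns a non-zero status when the division folder does not exist.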

Verifying Your Setup

After installation, test with a simple activation:

```
“Activate Senior Developer mode. Review this function and suggest improvements.”
```

If the AI responds in character — referencing its role, following a structured workflow, and producing role-specific deliverables — the setup worked.
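Before testing activation, it can also help to confirm that the files actually landed where you expected. The sketch below counts Markdown files under a directory; the function name `count_agents` is our own, and the path assumes the Claude Code location used in Option 1.

```bash
# Count Markdown files under a directory (recursively); prints 0 if it is missing.
count_agents() {
  dir="$1"
  if [ ! -d "$dir" ]; then
    echo 0
    return 0
  fi
  find "$dir" -type f -name '*.md' | wc -l | tr -d ' '
}

count_agents "$HOME/.claude/agents"
```

Do not expect the number to be exactly 61: copying the whole repo also brings along other Markdown files such as the README, and the project has grown since the original release.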

How We Tested All 61 Agents

We did not just install these and call it a day. We ran every agent through real tasks across our actual projects to see which ones deliver genuine value versus which ones are padding.

Our testing methodology:

- Activated each agent individually in Claude Code

- Gave each agent a realistic task matching its specialty

- Compared the output quality against a generic prompt doing the same task

- Rated each on: output quality, workflow structure, and practical usefulness

What we found:

The Engineering and Testing divisions are the strongest performers. The Frontend Developer agent consistently produced better-structured React components than a generic “build me a component” prompt. The QA Engineer agent caught edge cases we would have missed. The DevOps Engineer wrote CI/CD pipelines with security best practices baked in.

The Marketing division is surprisingly useful for developers. We did not expect much from the Growth Hacker or Content Strategist agents, but they produced actionable marketing plans that were miles ahead of generic AI marketing advice. The Reddit Community Manager was oddly specific and effective.

The Spatial Computing division is niche but excellent if you need it. If you are building for Apple Vision Pro or WebXR, these agents save serious time. If you are not, you will never touch them.

The Specialized division has some gems. The Reality Checker agent is genuinely useful — it pushes back on unrealistic timelines and scope creep. The Whimsy Injector is fun but niche.

5 Practical Use Cases That Actually Work

Use Case 1: Full-Stack Feature Development

Activate the Frontend Developer to scaffold the UI, switch to the Backend Developer for the API, then bring in the QA Engineer to write tests. Each agent maintains its specialized context and delivers role-appropriate output.

Use Case 2: Product Launch Campaign

Start with the Trend Researcher to validate market timing, hand off to the Content Strategist for the editorial calendar, then activate platform-specific agents (Twitter Specialist, Reddit Community Manager) for channel-specific content.

Use Case 3: Code Review Pipeline

The Senior Developer agent reviews architecture decisions. The Security Tester scans for vulnerabilities. The Performance Analyst flags bottleneck risks. Three passes, three specialized perspectives, one PR.

Use Case 4: Startup MVP Sprint

The Rapid Prototyper builds the first version fast. The Sprint Planner organizes the backlog. The Feedback Synthesizer processes early user reactions. This workflow compressed what used to take our team a full week into about two days.

Use Case 5: Documentation Overhaul

The Technical Writer agent restructures docs. The Information Architect designs the navigation. The Accessibility Tester verifies the docs meet WCAG standards. We used this combo on our own documentation and the improvement was immediate.

What Developers Are Saying

The developer community response to the 61 AI agents GitHub project has been overwhelmingly positive, but with some healthy skepticism mixed in.

On the positive side, developers are calling it “the most practical AI agent project on GitHub right now.” The low setup barrier and immediate usefulness resonated with the community. Posts about the repo on X pulled hundreds of likes and dozens of reposts within hours of sharing. One widely shared post described it as deploying “a full AI team covering engineers, designers, growth marketers, and product managers.”

The skeptics raise fair points too. Some developers note that these are ultimately just structured prompts — the underlying AI model is doing the same work regardless. Others point out that the “personality” elements (each agent has a unique communication style) are more novelty than utility. And a few experienced developers argue that a well-crafted single prompt can match or beat a pre-defined agent role.

Our take after testing: the skeptics are technically right that these are “just prompts.” But the structure, consistency, and workflow enforcement genuinely improve output quality. It is the difference between giving someone a blank page versus giving them a template with guardrails.

Limitations and Honest Caveats

We would not be doing our job if we did not flag the downsides:

- Token consumption increases. Each agent file adds context to your prompt window. If you are on a metered plan, activating agents eats into your token budget before you even start working.

- Agent switching is not seamless. You cannot run multiple agents simultaneously in a single session. You activate one at a time, which means context is lost between switches.

- Quality depends on the base model. These agents make Claude Code and Cursor better at specific tasks, but they cannot make a weak model strong. The quality ceiling is still set by the underlying AI.

- Some agents overlap. The line between the Content Strategist and the Growth Hacker is blurry. A few divisions could be consolidated without losing functionality.

- It is still early. The project is growing fast (the repo has already expanded beyond the original 61 agents), which means the agent definitions are still being refined by the community.
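To put a rough number on the token-consumption point above, you can estimate how much context an agent file adds before you activate it. This sketch uses the common rule of thumb of about four bytes per token for English text, which is an approximation and not your model's real tokenizer; the function name `estimate_tokens` is ours.

```bash
# Rough token estimate for a file: byte count divided by 4, rounded up.
# The 4-bytes-per-token ratio is a heuristic, not an exact tokenizer count.
estimate_tokens() {
  bytes="$(wc -c < "$1" | tr -d ' ')"
  echo $(( (bytes + 3) / 4 ))
}

# Example: list installed agents from smallest to largest estimated cost.
#   for f in ~/.claude/agents/*.md; do
#     printf '%s\t%s\n' "$(estimate_tokens "$f")" "$f"
#   done | sort -n
```

Treat the output as a way to compare agents against each other, not as a billing figure.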

FAQ

How many agents does the project actually include?

The original release included 61 agents across 9 divisions. The project has since grown through community contributions and may include additional agents when you clone it. The core 61 remain the most polished and tested.

Is the 61 AI agents GitHub project free?

Yes. The project is fully open-source and free to use. You do need access to a supported AI tool (Claude Code, Cursor, Gemini CLI, etc.), which may have its own pricing.

Do I need coding experience to set it up?

Minimal. If you can run git clone and cp commands in a terminal, you can set this up. The install script handles everything else automatically.

Can I customize the agents?

Absolutely. Every agent is a plain markdown file. You can edit roles, add custom workflows, adjust deliverables, or create entirely new agents following the same format.
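One low-risk way to customize is to copy an agent under a new name and append your own section, leaving the original untouched. The sketch below assumes nothing about the file beyond it being Markdown; the function name `customize_agent`, the "Team Conventions" heading, and the paths in the usage comment are hypothetical.

```bash
# Copy an agent file under a new name and append a team-specific section.
# Usage: customize_agent <source.md> <dest.md> <rule text>
customize_agent() {
  cp "$1" "$2" || return 1
  printf '\n## Team Conventions\n\n- %s\n' "$3" >> "$2"
}

# Example (hypothetical paths):
#   customize_agent ~/.claude/agents/engineering/engineering-frontend.md \
#     ~/.claude/agents/frontend-our-team.md "Use TypeScript strict mode"
```

Keeping your changes in a separate copy means a later re-install or update of the repo will not overwrite them.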

Does it work with ChatGPT?

Not directly. The project is designed for IDE-integrated AI tools (Claude Code, Cursor, Gemini CLI, etc.). You could manually paste agent definitions into ChatGPT, but you would lose the seamless activation experience.

Which agents should I install first?

If you are a developer, start with the Engineering and Testing divisions. If you run a startup or product team, add the Product and Marketing divisions. Install all 61 only if you want the full experience.

Will this slow down my IDE?

No. The agent files are plain markdown — they add no runtime overhead. The only cost is additional context tokens when you activate an agent.

How is this different from custom instructions or system prompts?

Scale and structure. You could write your own custom instructions for each role, but The Agency gives you 61 pre-built, tested, and community-refined role definitions. It is the difference between building your own CMS and using WordPress.

Final Verdict: Is It Worth Setting Up?

After spending several days testing all 61 agents across real projects, we can say this: yes, the 61 AI agents GitHub project is worth the five minutes it takes to set up.

It will not replace a real development team. It will not turn a junior developer into a CTO overnight. But it will make your existing AI coding workflow more structured, more consistent, and more productive.

The Engineering and Testing agents alone justify the setup. The Marketing and Product agents are a bonus that most developers did not know they needed. And the Spatial Computing agents are a godsend for the small percentage of developers working in XR.

Our recommendation: clone the repo, install the agents, and start with the two or three that match your daily work. Expand from there as needed.

Check out The Agency on GitHub to get started.
