12+ AI Models Released in One Week: The March 2026 Wave That Changed Everything

We have been covering AI releases for years, and we can say without exaggeration that early March 2026 was the most concentrated burst of frontier AI capability we have witnessed. Between March 1 and March 12, at least 12 major models launched or cemented their lead, from OpenAI, Anthropic, Google, Alibaba, NVIDIA, Lightricks, Zhipu AI, Peking University, ByteDance, and more: language models, video generators, image editors, 3D encoders, and GPU optimization agents, all in under two weeks.

This was not a coincidence. This was an arms race reaching critical mass. And if you blinked, you missed half of it.

We broke down every major release, what it actually does, who should care, and how they all stack up against each other. Let us walk you through the entire March 2026 AI wave.


Table of Contents

- The Big Three: GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro

- GPT-5.4 — OpenAI’s Three-Headed Beast

- Claude Opus 4.6 — Anthropic’s Coding and Reasoning Powerhouse

- Gemini 3.1 Pro — Google’s Reasoning Champion

- The Open-Source Challengers

- Qwen 3.5 — Alibaba’s Efficiency Monster

- GLM-5 and GLM-5-Turbo — Zhipu AI’s Agent Specialist

- Nemotron 3 Super — NVIDIA’s Agentic Reasoning Engine

- The Creative AI Releases

- LTX 2.3 — Lightricks’ 4K Video Revolution

- Helios — 60-Second Video Generation

- FireRed Edit 1.1 — Image Editing at Scale

- The Specialists: CUDA Agent, Kiwi Edit, and More

- DeepSeek V4 — The Ghost That Haunted March

- Master Comparison Table

- What This Means for You

- FAQ

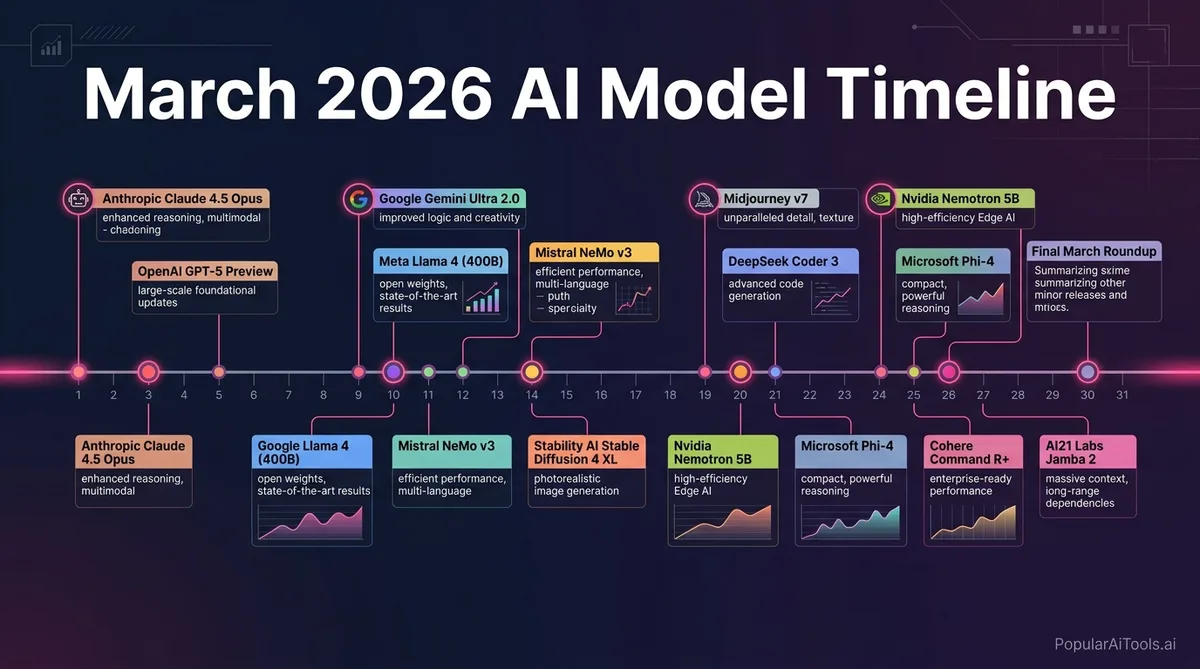

The Big Three: GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro

The frontier model tier saw three powerhouses either launch or cement dominance during this window. Each one targets a different sweet spot, and choosing between them now requires actual thought rather than just defaulting to “the latest ChatGPT.”

GPT-5.4 — OpenAI’s Three-Headed Beast

Released: March 5, 2026

Developer: OpenAI

GPT-5.4 arrived on March 5 with three distinct variants, each tuned for different use cases:

- GPT-5.4 Standard — The general-purpose workhorse with a 272K default context window (expandable to 1.05M tokens). Best for everyday tasks, content generation, and analysis.

- GPT-5.4 Thinking — A reasoning-first variant that shows its chain of thought. Available to Plus, Team, and Pro subscribers. Built for complex multi-step problems.

- GPT-5.4 Pro — Maximum capability model for enterprise and research. Available to Pro and Enterprise plans only.

Key benchmarks: GPT-5.4 reduces individual claim errors by 33% and full-response errors by 18% compared to GPT-5.2. On SWE-bench Verified, it scores approximately 77.2%, placing it just behind Claude Opus 4.6. The new Tool Search architecture enables dynamic tool calling mid-generation, a genuine architectural innovation.

Pricing:

- Standard: $2.50/M input tokens (under 272K), $15.00/M output tokens

- Extended context (272K+): $5.00/M input tokens

- Pro: $30.00/M input, $180.00/M output

- Cached input: $1.25/M (50% automatic discount)
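The per-token rates above turn into per-request costs with simple arithmetic. Here is a minimal sketch using only the Standard-tier figures listed in this section. The helper `estimate_cost` is hypothetical (it is not part of any OpenAI SDK), and we assume that once a prompt exceeds 272K tokens, all of its uncached input is billed at the extended rate; the pricing list does not say whether the higher rate applies to the whole prompt or only to the excess.

```python
# Rates quoted in this article for GPT-5.4 Standard, in USD per million tokens.
INPUT_RATE = 2.50           # prompts up to 272K tokens
EXTENDED_INPUT_RATE = 5.00  # prompts above 272K tokens
CACHED_INPUT_RATE = 1.25    # cached input (50% discount)
OUTPUT_RATE = 15.00
EXTENDED_THRESHOLD = 272_000


def estimate_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Estimate the USD cost of one request (hypothetical helper).

    Assumption: once the prompt exceeds 272K tokens, all uncached input is
    billed at the extended rate.
    """
    if cached_tokens > input_tokens:
        raise ValueError("cached_tokens cannot exceed input_tokens")
    rate = EXTENDED_INPUT_RATE if input_tokens > EXTENDED_THRESHOLD else INPUT_RATE
    uncached = input_tokens - cached_tokens
    total = (
        uncached * rate
        + cached_tokens * CACHED_INPUT_RATE
        + output_tokens * OUTPUT_RATE
    )
    return round(total / 1_000_000, 4)


print(estimate_cost(100_000, 2_000))          # short prompt: 0.28
print(estimate_cost(100_000, 2_000, 80_000))  # same prompt, 80K cached: 0.18
print(estimate_cost(500_000, 2_000))          # extended-context prompt: 2.53
```

Under these assumptions, caching most of a repeated prompt cuts the bill by about a third, while crossing the 272K threshold doubles the input rate.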

Who should use it: Teams that need a versatile, well-rounded model with the strongest ecosystem of integrations. If you already live in the OpenAI ecosystem, GPT-5.4 is the best general-purpose upgrade available. The Thinking variant is particularly strong for research and analysis tasks.

Claude Opus 4.6 — Anthropic’s Coding and Reasoning Powerhouse

Released: February 5, 2026 (dominant through March)

Developer: Anthropic

Claude Opus 4.6 launched in early February but became the model to beat during the March wave. It leads the field in coding benchmarks and introduced a 1 million token context window in public beta — meaning you can feed it entire codebases, full legal document sets, or multi-hundred-page research papers in a single prompt.

Key benchmarks:

- SWE-bench Verified: 80.8%, the current overall leader (the Thinking variant is listed at 79.2% on the leaderboard)

- ARC-AGI-2: 68.8%, an 83% improvement over Opus 4.5’s 37.6%

- MRCR v2 (long-context coherence): 76%

The ARC-AGI-2 score is particularly telling. This benchmark tests novel pattern recognition rather than memorized knowledge, and Opus 4.6 holds a 14.6-point lead over GPT-5.2 on it.

Pricing: Approximately $15/M input tokens and $75/M output tokens for Opus-tier (Sonnet 4.6 available at lower price points).

Who should use it: Software engineers, AI agent builders, and anyone working with massive codebases. If your workflow involves debugging, code generation, or long-context analysis, Claude Opus 4.6 is the current best-in-class option. The 1M context window is not a gimmick — it fundamentally changes what is possible in a single prompt.

Gemini 3.1 Pro — Google’s Reasoning Champion

Released: February 19, 2026

Developer: Google DeepMind

Gemini 3.1 Pro quietly dropped on February 19 and proceeded to claim the number one spot on 12 out of 18 tracked benchmarks. The headline number is its ARC-AGI-2 score: 77.1%, more than double the reasoning performance of Gemini 3 Pro and the highest score from any frontier model.

Key benchmarks:

- ARC-AGI-2: 77.1% (jumped from 31.1% on Gemini 3 Pro — the largest single-generation reasoning gain among frontier models)

- Leader on 12 of 18 tracked benchmarks across coding, reasoning, and agentic tasks

- 1 million token context window natively supported
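Percentage-point gains and relative gains are easy to mix up, so here is a short sketch that computes both for the ARC-AGI-2 scores quoted in this article. The `relative_gain` helper is our own illustration, not part of any benchmark tooling.

```python
def relative_gain(old: float, new: float) -> float:
    """Percentage improvement of `new` over `old`, relative to `old`."""
    return (new - old) / old * 100


# ARC-AGI-2 scores quoted in this article (percent): (previous, current).
scores = {
    "Claude Opus 4.5 -> Opus 4.6": (37.6, 68.8),
    "Gemini 3 Pro -> Gemini 3.1 Pro": (31.1, 77.1),
}

for label, (old, new) in scores.items():
    print(
        f"{label}: +{new - old:.1f} points, "
        f"{relative_gain(old, new):.0f}% relative, {new / old:.2f}x"
    )
```

This gives about 83% (1.83x) for Opus 4.6 and about 148% (2.48x) for Gemini 3.1 Pro, which matches the "83% improvement" and "more than double" claims above.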

Pricing: Competitive with GPT-5.4 Standard tier through Google AI Studio and Vertex AI.

Who should use it: Anyone prioritizing raw reasoning ability, especially on novel problems. Gemini 3.1 Pro is the model to reach for when you need genuine reasoning on unfamiliar problems rather than recall of memorized patterns. Enterprise teams already on Google Cloud get seamless integration through Vertex AI.

The Open-Source Challengers

The March wave proved definitively that the frontier is no longer the exclusive domain of trillion-dollar companies. Several open-source and open-weight models matched or exceeded proprietary alternatives in specific domains.

Qwen 3.5 — Alibaba’s Efficiency Monster

Released: March 1–2, 2026 (Small series); February 24, 2026 (Medium series)

Developer: Alibaba / Qwen Team

Qwen 3.5 is built on a hybrid architecture fusing linear attention with sparse mixture-of-experts: 397 billion total parameters but only 17 billion activated per forward pass. That architectural choice makes it absurdly efficient.

Model lineup:

- Qwen3.5-397B-A17B — Flagship, 256K context, 201 languages

- Qwen3.5-122B-A10B — Medium tier

- Qwen3.5 Small series — 0.8B, 2B, 4B, and 9B dense models (natively multimodal)

The 9B small model matches or beats GPT-OSS-120B, a model 13x its size, on benchmarks including GPQA Diamond (81.7 vs. 71.5). The flagship scores 83.6 on LiveCodeBench v6, 91.3 on AIME26, and 88.4 on GPQA Diamond, reportedly outperforming GPT-5.2 and Claude Opus 4.5 on 80% of evaluated categories.
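To put the sparse mixture-of-experts numbers in perspective, the sketch below computes what share of each model's weights is active per forward pass. The 10B active figure for the medium model is read off its name (the "A10B" suffix, by analogy with "A17B" on the flagship), so treat it as an assumption. All weights still have to be held in memory; only per-token compute scales with the active share.

```python
# (total parameters, active parameters per forward pass), in billions.
# The flagship figures are quoted in this article; the medium model's
# active count is inferred from the "A10B" suffix in its name.
moe_models = {
    "Qwen3.5-397B-A17B": (397, 17),
    "Qwen3.5-122B-A10B": (122, 10),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of parameters used per forward pass, as a percentage."""
    return active_b / total_b * 100


for name, (total, active) in moe_models.items():
    print(f"{name}: {active_fraction(total, active):.1f}% of weights active per token")

# Size ratio behind the "13x" comparison: GPT-OSS-120B vs. the 9B dense model.
print(f"120B / 9B = {120 / 9:.1f}x")
```

The flagship activates roughly 4.3% of its weights per token, which is where the efficiency claim comes from.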

Pricing: Qwen3.5-Flash at $0.10/M input tokens — roughly 1/13th the cost of Claude Sonnet 4.6. Plus models at $0.26/M input, $1.56/M output.

Who should use it: Cost-conscious teams that need strong multilingual support, developers running models locally, and anyone who needs near-frontier performance at a fraction of the price. The small models are particularly impressive for edge deployment and mobile applications.

GLM-5 and GLM-5-Turbo — Zhipu AI’s Agent Specialist

Released: February 13, 2026 (GLM-5); March 16, 2026 (GLM-5-Turbo)

Developer: Zhipu AI / Z.ai

GLM-5 is a 744B parameter open-source model that rivals GPT-5.2. But the March story is GLM-5-Turbo, a specialized variant designed from the ground up for OpenClaw agent workflows.

GLM-5-Turbo specs:

- 200K token context window, 128K token output limit

- Purpose-built for multi-step agentic tasks

- Aligned during training for complex automated workflows

- Supports multi-agent collaboration and task distribution

Pricing: $1.20/M input tokens, $4.00/M output tokens — significantly cheaper than comparable frontier models.

Who should use it: Teams building AI agent systems, especially those working within the OpenClaw ecosystem. If your use case involves multi-step automated workflows with multiple agents collaborating, GLM-5-Turbo was literally designed for that.

Nemotron 3 Super — NVIDIA’s Agentic Reasoning Engine

Released: March 11, 2026

Developer: NVIDIA

NVIDIA joined the March wave with Nemotron 3 Super, a 120B parameter open-weight hybrid Mamba-Transformer MoE model with only 12 billion active parameters. It is purpose-built for complex agentic reasoning.

Key specs:

- Native 1M token context window for long-term agent memory

- 5x throughput improvement over previous Nemotron Super

- Hybrid Mamba-Transformer architecture for efficient inference

- Open weights, available on Hugging Face, OpenRouter, and NVIDIA’s build platform

Availability: Google Cloud Vertex AI, Oracle Cloud, with Amazon Bedrock and Azure coming soon.

Who should use it: Enterprise teams building autonomous AI agent systems that need long-context reasoning at scale. The 1M token context gives agents genuine long-term memory, and the 5x throughput improvement makes production deployment practical. If you are on NVIDIA hardware (and most of us are), this model is optimized for your stack.

The Creative AI Releases

The March wave was not just about language models. The creative AI space saw releases that fundamentally change what is possible with open-source tools.

LTX 2.3 — Lightricks’ 4K Video Revolution

Released: March 5, 2026

Developer: Lightricks

License: Apache 2.0

LTX 2.3 is a 22 billion parameter Diffusion Transformer that generates synchronized video and audio in a single forward pass. This is not “video plus separate audio” — the model generates both together, coherently.

Key specs:

- Native 4K resolution at up to 50 FPS

- Synchronized audio generation via rebuilt HiFi-GAN vocoder

- Native 9:16 portrait mode support

- Up to ~12 seconds per clip at full quality

- LoRA adapter support for fine-tuning

- Two variants: 22B-dev (full, trainable) and 22B-distilled (8-step, faster)

Hardware: Full model needs 48GB+ GPU for 4K. Quantized versions run on consumer GPUs with only 5–8% quality loss at 1080p.

Who should use it: Content creators, social media teams, and indie filmmakers who want open-source video generation without per-minute API fees. The native portrait mode and audio sync make it immediately practical for short-form social content.

Helios — 60-Second Video Generation

Released: Early March 2026

Developer: Peking University, ByteDance, and Canva

License: Apache 2.0

Helios is a 14B parameter autoregressive diffusion model that generates videos up to 1,440 frames — approximately 60 seconds at 24 FPS. It runs at 19.5 frames per second on a single NVIDIA H100.

Who should use it: Teams that need longer-form generated video content. While LTX 2.3 wins on quality and resolution, Helios wins on duration, generating clips about five times longer in a single pass.
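The Helios figures can be checked with a few lines of arithmetic. This sketch uses only numbers quoted in this article and assumes the reported generation speed holds constant across the whole clip, which may not be true in practice.

```python
FRAMES = 1_440          # maximum clip length quoted for Helios
PLAYBACK_FPS = 24       # playback frame rate
GENERATION_FPS = 19.5   # reported generation speed on one NVIDIA H100
LTX_CLIP_SECONDS = 12   # approximate LTX 2.3 clip length at full quality

clip_seconds = FRAMES / PLAYBACK_FPS
# Assumption: generation speed stays at 19.5 frames/s for the full clip.
generation_seconds = FRAMES / GENERATION_FPS

print(f"Clip length: {clip_seconds:.0f} s")
print(f"Generation time: {generation_seconds:.1f} s")
print(f"Speed relative to real time: {GENERATION_FPS / PLAYBACK_FPS:.2f}x")
print(f"Duration vs. LTX 2.3: {clip_seconds / LTX_CLIP_SECONDS:.0f}x")
```

In other words, a full 60-second clip would take roughly 74 seconds to generate on one H100, slightly slower than real time.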

FireRed Edit 1.1 — Image Editing at Scale

Released: March 3, 2026

Developer: FireRed Team

FireRed Edit 1.1 is a general-purpose image editing foundation model trained on 1.6 billion samples (900M text-to-image pairs plus 700M image editing pairs). Version 1.1 optimizes portrait consistency, multi-element fusion, stylized text reference, and portrait makeup effects.

Who should use it: Designers and content teams that need batch image editing with consistent quality. The training scale gives it an edge on complex multi-element edits that trip up smaller models.

The Specialists: CUDA Agent, Kiwi Edit, and More

The March wave also included several specialized tools that are easy to overlook but genuinely useful:

- CUDA Agent — A GPU kernel automation tool that writes and optimizes CUDA code. If you are doing custom GPU programming, this saves serious engineering time.

- Kiwi Edit — An open-source image editing model rivaling proprietary alternatives in targeted editing tasks.

- CubeComposer — A 3D spatial reasoning and composition model.

- Spatial T2I — Text-to-image with explicit spatial understanding and layout control.

- Spectrum — A diffusion acceleration method that speeds up generation without quality loss.

These models represent the “long tail” of the March wave — individually niche, but collectively demonstrating that specialized open-source AI tools now cover nearly every creative and technical workflow.

DeepSeek V4 — The Ghost That Haunted March

We need to mention DeepSeek V4 because its shadow loomed over the entire March wave, even though it has not officially launched as of this writing.

DeepSeek V4 reportedly features ~1 trillion total parameters with ~32B active per token, a 1M token context window, native multimodal capabilities (image, video, and text generation), and a novel Engram memory architecture for efficient long-context retrieval.

Leaked (unverified) benchmarks claim 90% on HumanEval and 80%+ on SWE-bench Verified. Multiple release windows (February, Lunar New Year, early March) have passed without an official launch, but the specs alone have forced competitors to accelerate their own timelines.

Who should watch for it: Everyone. If the leaked benchmarks are even close to accurate, DeepSeek V4 will immediately become the most capable open-source model available.

Master Comparison Table
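The figures quoted in the sections above can be collected into a single side-by-side view. Here is a minimal sketch that prints one; every value is copied from this article, and `None` marks a figure the article does not state. The row selection and the `format_row` helper are our own illustration.

```python
# Columns: model, release date, context window (tokens), input price
# (USD per million tokens). None means the article gives no figure.
ROWS = [
    ("GPT-5.4 Standard", "Mar 5, 2026", 272_000, 2.50),
    ("Claude Opus 4.6", "Feb 5, 2026", 1_000_000, 15.00),
    ("Gemini 3.1 Pro", "Feb 19, 2026", 1_000_000, None),
    ("Qwen3.5-397B-A17B", None, 256_000, None),
    ("GLM-5-Turbo", "Mar 16, 2026", 200_000, 1.20),
    ("Nemotron 3 Super", "Mar 11, 2026", 1_000_000, None),
]


def format_row(name, released, context, price):
    """Render one table row, showing 'n/a' for missing values."""
    released_text = released if released is not None else "n/a"
    price_text = f"${price:.2f}" if price is not None else "n/a"
    return f"{name:<18} {released_text:<13} {context:>9,} {price_text:>8}"


print(f"{'Model':<18} {'Released':<13} {'Context':>9} {'Input/M':>8}")
for row in ROWS:
    print(format_row(*row))
```

The GPT-5.4 row shows the default context window; the expandable 1.05M window and the Claude beta status are covered in the sections above.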

What This Means for You

Here is our honest take on what the March 2026 wave signals:

The context window war is over. Four models covered here (GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro, and Nemotron 3 Super) now offer context windows of about 1M tokens. If your workflow still involves chunking documents to fit into 128K windows, you are leaving capability on the table.
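To see what the larger windows buy you, here is a rough sketch that counts how many prompts a document needs at a given window size. The document size and the `reserved` allowance for instructions and the reply are hypothetical values we picked for illustration.

```python
import math


def chunks_needed(doc_tokens: int, window: int, reserved: int = 8_000) -> int:
    """Number of prompts needed to cover a document.

    `reserved` is an assumed allowance for instructions and the model's
    reply; the article gives no such figure.
    """
    usable = window - reserved
    if usable <= 0:
        raise ValueError("reserved tokens must be smaller than the window")
    return math.ceil(doc_tokens / usable)


doc_tokens = 600_000  # hypothetical codebase or document set
for window in (128_000, 1_000_000):
    print(f"{window:>9,}-token window: {chunks_needed(doc_tokens, window)} prompt(s)")
```

With these assumptions, a 600K-token corpus needs five separate prompts at 128K but fits in one at 1M, so there is no cross-chunk stitching to manage.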

Open source won specific domains. LTX 2.3 for video, Nemotron 3 Super for agentic reasoning, Qwen 3.5 for cost efficiency — these are not “almost as good” alternatives. They are the best options in their respective niches, period.

Pricing compression is real. Qwen 3.5 Flash at $0.10/M input tokens versus GPT-5.4 Pro at $30/M input tokens is a 300x cost difference. For many production workloads, the cheaper models are more than sufficient.
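The gap is easier to judge against a concrete workload. The sketch below prices a hypothetical month of traffic (1,000M input tokens and 100M output tokens) using the per-token rates quoted in this article. Qwen3.5-Flash is left out of the workload table because the article gives only its input price.

```python
# (input, output) prices in USD per million tokens, as quoted in this article.
PRICES = {
    "GPT-5.4 Pro": (30.00, 180.00),
    "Claude Opus 4.6": (15.00, 75.00),
    "GPT-5.4 Standard": (2.50, 15.00),
    "GLM-5-Turbo": (1.20, 4.00),
    "Qwen3.5 Plus": (0.26, 1.56),
}


def monthly_cost(input_millions: float, output_millions: float, model: str) -> float:
    """USD cost of a monthly workload given in millions of tokens."""
    in_price, out_price = PRICES[model]
    return input_millions * in_price + output_millions * out_price


# Hypothetical workload: 1,000M input tokens and 100M output tokens per month.
for model in PRICES:
    print(f"{model:<18} ${monthly_cost(1_000, 100, model):>10,.2f}")

# The 300x figure compares input prices only.
print(f"Input price ratio, GPT-5.4 Pro vs. Qwen3.5-Flash: {30.00 / 0.10:.0f}x")
```

On this workload the spread runs from $48,000 for GPT-5.4 Pro down to $416 for Qwen3.5 Plus, which is why model choice per task matters more than it used to.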

Agents are the new frontier. GLM-5-Turbo, Nemotron 3 Super, and GPT-5.4’s Tool Search architecture all point in the same direction: the next wave of AI applications will be autonomous agents, not chatbots.

We recommend most teams adopt a multi-model strategy: Claude Opus 4.6 for coding and long-context work, GPT-5.4 Thinking for general reasoning, Qwen 3.5 for high-volume production tasks, and LTX 2.3 for video content. The era of picking one model for everything is officially over.
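In code, a multi-model strategy can start as nothing more than a lookup table. Here is a minimal sketch of that idea. The task category names, the `pick_model` helper, and the choice of fallback model are all our own assumptions; a real router would also weigh cost, latency, and context length.

```python
# Model picks follow the recommendation in this article. The category
# names and the fallback choice are hypothetical, for illustration only.
ROUTES = {
    "coding": "Claude Opus 4.6",
    "long_context": "Claude Opus 4.6",
    "general_reasoning": "GPT-5.4 Thinking",
    "high_volume": "Qwen 3.5",
    "video": "LTX 2.3",
}


def pick_model(task_type: str, default: str = "GPT-5.4 Thinking") -> str:
    """Return the recommended model for a task type, or a default."""
    return ROUTES.get(task_type, default)


print(pick_model("coding"))       # Claude Opus 4.6
print(pick_model("video"))        # LTX 2.3
print(pick_model("translation"))  # unlisted task falls back to the default
```

Keeping the routing in one table makes it cheap to swap a model out when the next wave of releases lands.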


FAQ

How many AI models were released in the first week of March 2026?

At least 12 major AI models and tools were announced between March 1 and March 12, 2026, from organizations including OpenAI, Alibaba, Lightricks, NVIDIA, Tencent, Meta, ByteDance, and several leading universities. This does not count the models released in February (Claude Opus 4.6, Gemini 3.1 Pro) that dominated the same competitive window. Industry data shows 267 new AI models were released in Q1 2026 alone.

Which AI model has the best coding benchmarks in March 2026?

Claude Opus 4.6 leads on SWE-bench Verified with 80.8%, followed by GPT-5.4 at approximately 77.2%. For cost-effective coding, Qwen 3.5’s 122B-A10B model scores 72.2 on BFCL-V4 tool use, outperforming GPT-5 mini by a 30% margin at a fraction of the price. The best choice depends on whether you prioritize raw performance or cost efficiency.

Is GPT-5.4 worth upgrading to from GPT-5.2?

Yes, if you rely on factual accuracy and tool integration. GPT-5.4 reduces individual claim errors by 33% and full-response errors by 18% compared to GPT-5.2. The new Tool Search architecture enables dynamic tool calling mid-generation, and the context window, 272K tokens by default, can be expanded to 1.05M tokens. However, at $30/M input tokens for the Pro tier, evaluate whether the Standard tier at $2.50/M meets your needs first.

What is the best free or open-source AI model from the March 2026 releases?

For language tasks, Qwen 3.5-9B delivers remarkable performance for its size, matching models 13x larger on key benchmarks, and runs on consumer hardware. For video generation, LTX 2.3 under Apache 2.0 generates 4K video with synchronized audio. For agentic reasoning, Nemotron 3 Super offers 120B parameters with open weights and a 1M token context window. All three are free to download; LTX 2.3 is Apache 2.0 licensed, and you should check the license terms of the other two before commercial use.

When will DeepSeek V4 actually release?

As of mid-March 2026, DeepSeek V4 has not officially launched despite multiple anticipated release windows. The model reportedly features ~1 trillion parameters, native multimodal capabilities, and a novel Engram memory architecture. Leaked benchmarks suggest strong performance, but none have been independently verified. We recommend not making purchasing or infrastructure decisions based on DeepSeek V4 until it is publicly available and benchmarked by third parties.

We will keep updating this post as more benchmarks, pricing details, and real-world performance data come in. The March 2026 wave is still unfolding, and the models we covered today are just the beginning of what is shaping up to be the most competitive year in AI history.
