Anthropic vs The Pentagon: The AI Lawsuit That Could Change Everything

The biggest legal battle in artificial intelligence history is unfolding right now, and it has nothing to do with copyright, deepfakes, or job displacement. It is about whether the United States government can punish an American AI company for refusing to let its technology be used for mass surveillance and autonomous weapons.

We are watching Anthropic, the company behind Claude, go head-to-head with the Pentagon in a lawsuit that could reshape the entire relationship between Silicon Valley and the military-industrial complex. Here is everything we know, why it matters, and what comes next.

Table of Contents

- What Actually Happened
- The Two Red Lines Anthropic Refused to Cross
- The Supply Chain Risk Designation Explained
- The Lawsuits: Anthropic Fights Back
- Billions of Dollars at Stake
- The Industry Rallies Behind Anthropic
- OpenAI and Google’s Military Stances in Comparison
- What This Means for the AI Industry
- Timeline of Key Events
- What Happens Next
- FAQ

What Actually Happened

In late February 2026, Defense Secretary Pete Hegseth issued an ultimatum to Anthropic CEO Dario Amodei: agree by 5:01 p.m. on Friday, February 27 to allow unrestricted military use of Claude “for all lawful purposes,” or face consequences.

Anthropic did not budge.

On February 27, President Trump directed federal agencies to cease using Anthropic’s products. Then Hegseth took an extraordinary step: he designated Anthropic a “supply chain risk” to national security. On March 3, a formal letter from Hegseth confirmed the designation, stating that the Department of War had determined that “the use of [Anthropic’s] products in covered systems presents a supply chain risk.”

This designation means that no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. In practical terms, it is a blacklist, and it is the kind of label historically reserved for foreign adversaries like Chinese telecom firms suspected of espionage.

Anthropic became the first American company ever to receive this designation.

The Two Red Lines Anthropic Refused to Cross

The entire dispute boils down to two contractual provisions Anthropic insisted on keeping in any military agreement:

| Red Line | What Anthropic Demanded |
|---|---|
| Mass Surveillance | Claude cannot be used for mass surveillance of U.S. citizens |
| Autonomous Weapons | Claude cannot be used to operate fully autonomous weapons systems |

The Pentagon’s position was straightforward: it wanted Claude available for “all lawful purposes” with no vendor-imposed restrictions. The government argued it could not allow a private company to dictate how its tools could be used in a national security emergency.

Amodei’s response was equally direct. He said he could not “in good conscience” agree to remove those safeguards. In a public statement posted to Anthropic’s website titled “Where We Stand,” the company made clear that these were not negotiating positions but fundamental ethical commitments.

What makes this particularly striking is that the Pentagon did not need to go nuclear. As multiple legal analysts and even the amicus briefs have pointed out, the government had standard procurement tools available. It could have simply canceled the contract and moved to another AI provider. Instead, it chose to weaponize a national security label against an American company for holding a policy position.

The Supply Chain Risk Designation Explained

To understand why this designation is so alarming, we need to understand what it actually is and how it has been used before.

The supply chain risk framework exists to protect the Defense Department from products and services that could compromise national security. It has historically been applied to companies like Huawei and Kaspersky Lab, firms suspected of or alleged to have ties to foreign intelligence services or to governments considered adversaries of the United States.

| Previous Targets | Reason |
|---|---|
| Huawei (China) | Suspected intelligence ties to CCP |
| Kaspersky Lab (Russia) | Alleged links to Russian intelligence |
| ZTE (China) | Violations of sanctions, security concerns |
| Anthropic (USA) | Refused to remove AI safety guardrails |

The contrast is jarring. Anthropic is an American company headquartered in San Francisco, founded by former OpenAI researchers, and backed by Google and major U.S. venture capital firms. The designation does not allege espionage, foreign ties, or any traditional national security concern. Instead, it punishes Anthropic for maintaining ethical restrictions on how its AI can be used.

This is why the legal community has described the move as unprecedented and why it has generated such a fierce response from the tech industry.

The Lawsuits: Anthropic Fights Back

On March 9, 2026, Anthropic filed two separate federal lawsuits challenging the designation:

Lawsuit 1: U.S. District Court, Northern District of California

Filed before Judge Rita Lin in San Francisco, this is the broader challenge. Anthropic argues that the designation violates its First Amendment rights by punishing the company for its advocacy around AI safety. The complaint alleges that Pentagon officials illegally retaliated against Anthropic for its public positions on how AI should and should not be used in military contexts.

Lawsuit 2: U.S. Court of Appeals for the D.C. Circuit

This is a narrower, more technical challenge filed because one of the statutes the government invoked can only be contested in the D.C. Circuit. It targets the specific legal authority the Pentagon used to issue the designation.

In its court filings, Anthropic described the designation as “ideological” retaliation. The company alleged that the real cause of the dispute was not a genuine national security concern but rather political friction, including Anthropic’s refusal to provide what Amodei described in a leaked internal memo as “dictator-style praise to Trump.”

That leaked memo caused its own controversy. Amodei apologized for the language, which also reportedly called some OpenAI staff “gullible,” but he stood firmly behind the substance of Anthropic’s legal position.

Billions of Dollars at Stake

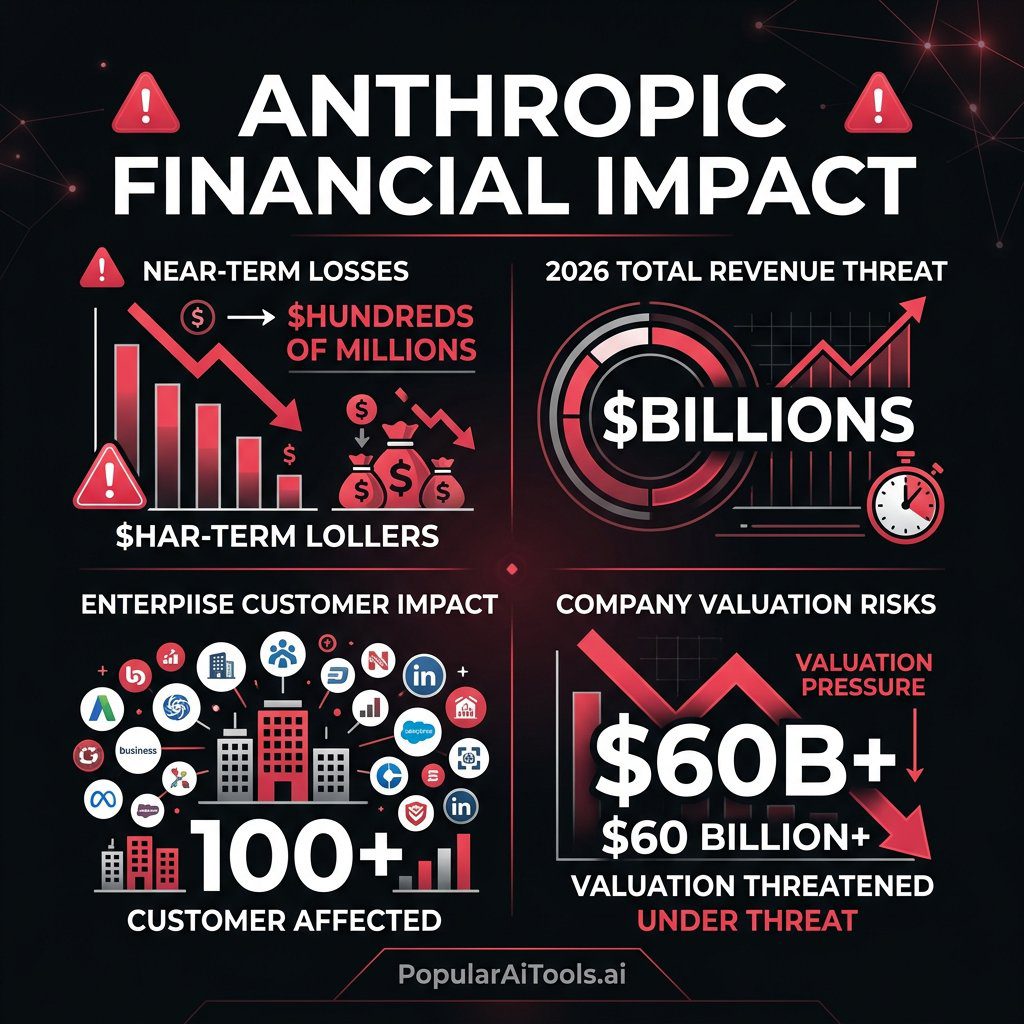

This is not just a philosophical dispute. The financial consequences are enormous.

According to Anthropic’s court filings, more than 100 enterprise customers reached out to the company about the designation in the days after it was announced. Many of these customers work with the Defense Department in some capacity, and the designation creates uncertainty about whether they can continue using Claude for any purpose.

| Financial Impact | Details |
|---|---|
| Near-term revenue at risk | Hundreds of millions of dollars |
| Estimated 2026 total impact | Potentially multiple billions of dollars |
| Enterprise customers affected | 100+ companies have raised concerns |
| Indirect damage | Chilling effect on future government AI contracts |

The damage extends beyond direct government contracts. Because the designation applies to contractors and suppliers, any company in the defense supply chain now faces legal uncertainty about using Anthropic’s products, even for commercial purposes unrelated to the military.

Amodei has stated that the designation “plainly applies only to the use of Claude by customers as a direct part of contracts with the Department of War, not all use of Claude by customers who have such contracts.” But the ambiguity itself is causing customers to pull back.

For Anthropic, a company that was reportedly valued at over $60 billion and was on track to generate significant enterprise revenue in 2026, this designation represents an existential-level threat to its business trajectory.

The Industry Rallies Behind Anthropic

What happened next was something nobody predicted: Anthropic’s competitors came to its defense.

The Employee Amicus Brief

Within hours of Anthropic filing its lawsuits, more than 30 current employees from OpenAI and Google DeepMind filed an amicus brief in support of Anthropic. Among the signatories was Google chief scientist Jeff Dean, one of the most respected figures in the field.

The employees signed in a personal capacity and were clear they were not representing their employers. But the message was unmistakable: the AI research community views this as a threat to everyone, not just Anthropic.

The brief’s central argument was direct: if the government can blacklist an American AI company for refusing to remove safety restrictions, every AI lab in the country faces the same risk. The signatories warned of a “chilling effect” on the entire industry, arguing that if developers fear that setting safety boundaries will lead to a federal blacklist, they may stop building those guardrails entirely.

As the filing noted, “in the absence of formal laws governing AI use, the ethical guardrails set by developers are often the only thing standing between these powerful systems and catastrophic misuse.”

The Open Letter

Beyond the amicus brief, more than 200 workers from OpenAI and Google signed an open letter expressing solidarity with Anthropic’s position. The letter argued that AI labs should not be split by government negotiation pressure and called on firms to align publicly on what they will not permit, even in national security contexts.

Microsoft and Retired Military Leaders

Microsoft filed its own amicus brief urging the court to pause the Pentagon’s designation. Alongside Microsoft, 22 retired high-ranking U.S. military officials, including former secretaries of the Air Force, Army, and Navy, and a former head of the Coast Guard, submitted a brief supporting Anthropic.

Their argument carried particular weight: even from a military perspective, the designation was an overreach that could harm America’s technological competitiveness.

Tech Industry Groups

Major tech industry groups representing companies with Pentagon contracts also filed an amicus brief calling for a pause on the designation, stating: “The government has ample, well-established tools to resolve procurement disputes and to contract with providers on whatever terms it prefers.”

OpenAI and Google’s Military Stances in Comparison

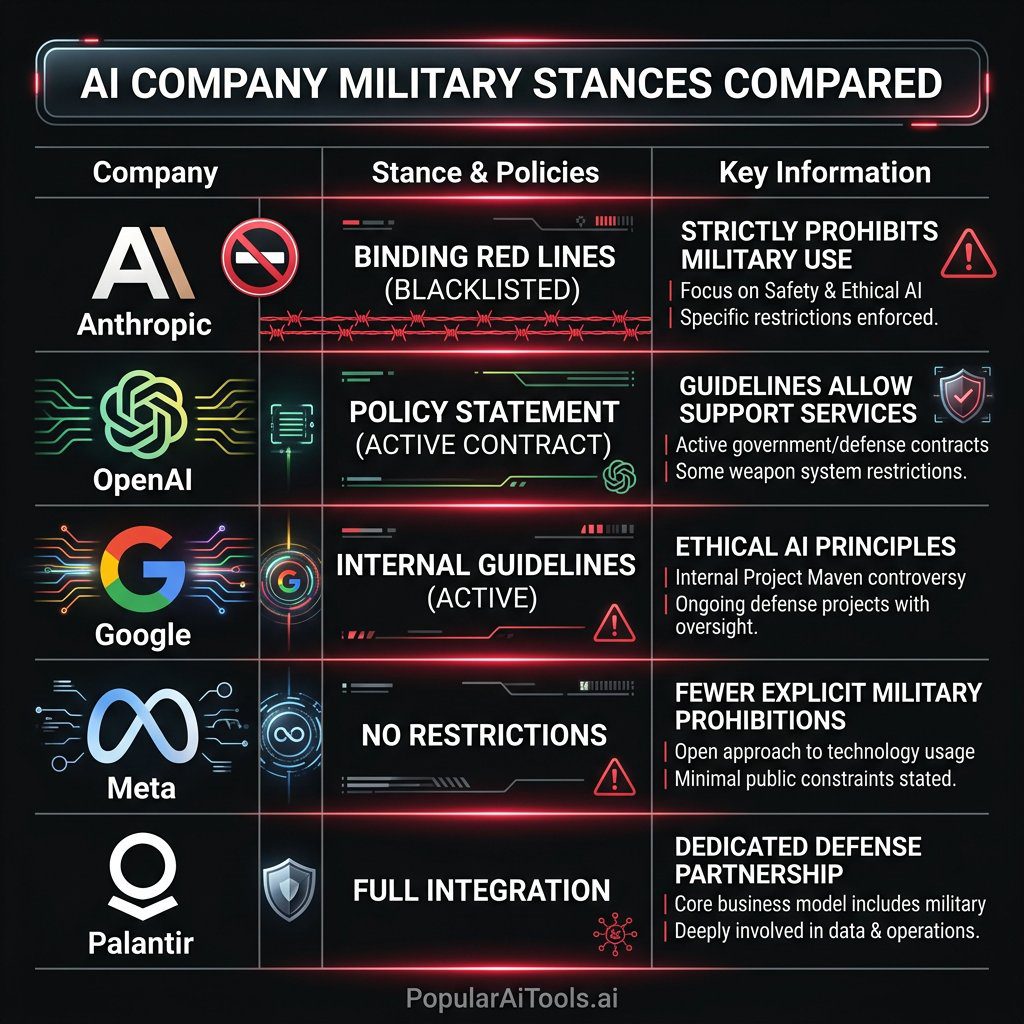

This lawsuit has thrown a spotlight on how different AI companies handle military partnerships, and the differences are revealing.

OpenAI struck a deal with the Pentagon on February 28, 2026, just one day after the Anthropic designation, to allow its technology to be used in classified military settings. OpenAI stated publicly that its technology “cannot be used to direct autonomous weapons systems,” but because the contract has not been released publicly, it remains unclear whether this is reflected in binding contractual language or is merely a policy statement.

This is precisely the distinction that Anthropic was fighting over. Anthropic wanted binding contractual language, not just a policy commitment that could be quietly revised later.

Google has its own complicated history with military AI. The company famously pulled out of Project Maven in 2018 after employee protests over using AI for drone targeting. Since then, Google has re-engaged with defense contracts but has maintained certain internal guidelines about the types of military applications it will support.

| Company | Military AI Stance | Contract Status |
|---|---|---|
| Anthropic | Binding red lines on surveillance and autonomous weapons | Blacklisted by Pentagon |
| OpenAI | Policy statement against autonomous weapons (no public contract language) | Active Pentagon contract |
| Google | Internal guidelines, pulled from Project Maven in 2018 | Active defense contracts |
| Meta | Open-source models available to military with no restrictions | No formal military contract disputes |
| Palantir | Full military integration, no restrictions | Major defense contractor |

The contrast is stark. Anthropic is the only company that insisted on putting its ethical commitments into binding legal language, and it is the only company being punished for it.

What This Means for the AI Industry

This case has implications that reach far beyond Anthropic and the Pentagon. We see four major consequences playing out regardless of how the court rules.

1. The Safety Guardrails Dilemma

If the government can blacklist companies for maintaining safety restrictions, the incentive structure for the entire industry shifts. Companies will face pressure to remove or weaken safeguards to maintain government contract eligibility. The amicus brief from OpenAI and DeepMind employees made this point explicitly: the people building these systems are worried about what happens when safety becomes a business liability.

2. The Precedent for Government Coercion

This case will define whether the executive branch can use national security designations as a tool to coerce private companies into policy compliance. If the designation stands, it establishes a template that could be applied to any technology company that disagrees with government policy on how its products should be used.

3. The China Competition Factor

Multiple commentators, including Fortune, have noted that blacklisting one of America’s leading AI companies could undermine U.S. competitiveness in the AI race with China. The retired military officials who filed in support of Anthropic made this argument forcefully: weakening the domestic AI ecosystem by punishing safety-conscious companies does not serve national security.

4. The Future of AI Regulation

The employee amicus brief highlighted a critical gap: in the absence of formal AI safety legislation, the ethical guardrails set by developers are the primary check on how these systems are used. This case could accelerate or derail efforts to create formal legal frameworks for military AI use.

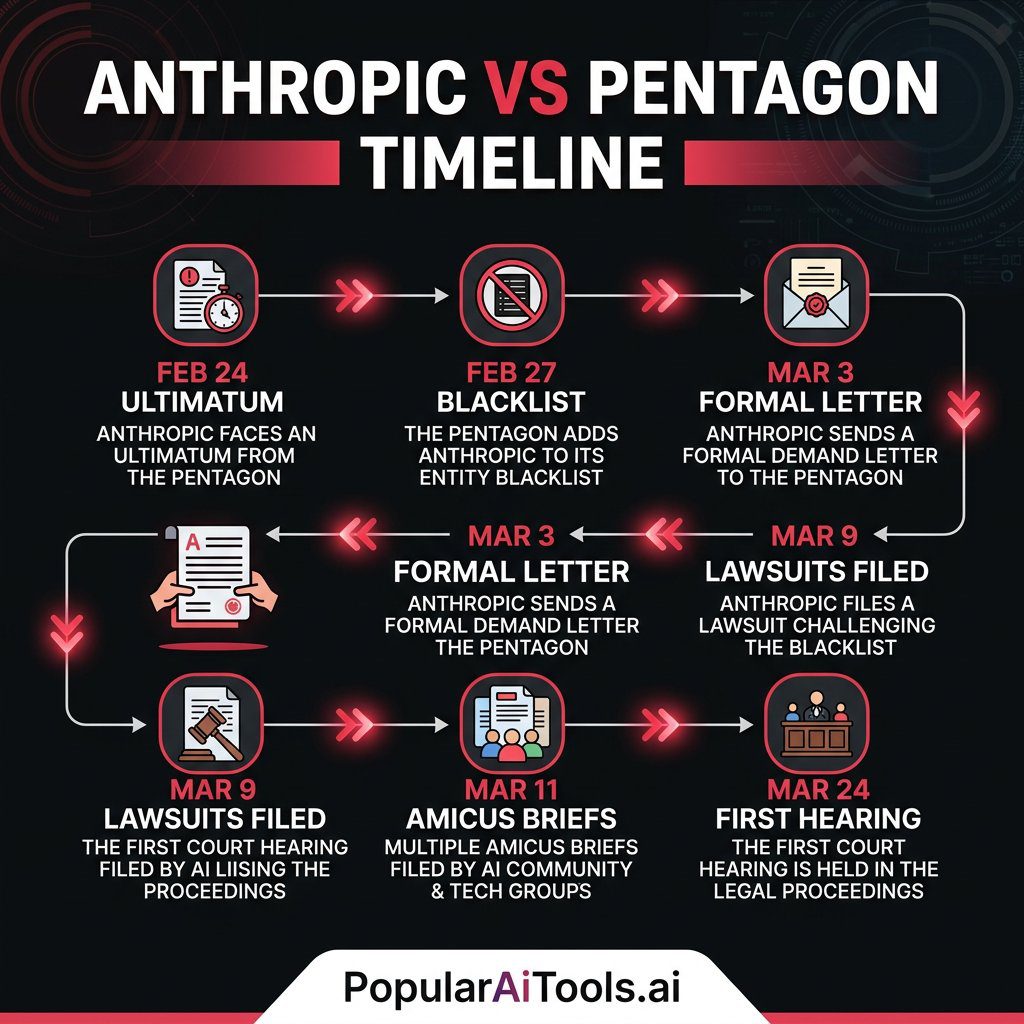

Timeline of Key Events

| Date | Event |
|---|---|
| Feb 24, 2026 | Defense Secretary Hegseth issues ultimatum to Anthropic CEO Amodei |
| Feb 26, 2026 | Anthropic publicly states it will not budge on its two red lines |
| Feb 27, 2026 | Trump directs federal agencies to stop using Anthropic; Hegseth designates Anthropic a supply chain risk |
| Feb 28, 2026 | OpenAI announces its own Pentagon deal for classified military settings |
| Mar 3, 2026 | Hegseth sends formal letter confirming the supply chain risk designation |
| Mar 5, 2026 | Pentagon officially informs Anthropic the designation is “effective immediately” |
| Mar 6, 2026 | Amodei apologizes for leaked internal memo; internal Pentagon memo orders removal of Anthropic tech from key systems |
| Mar 9, 2026 | Anthropic files two federal lawsuits (California and D.C. Circuit); 30+ OpenAI/DeepMind employees file amicus brief |
| Mar 10, 2026 | 200+ OpenAI and Google workers sign open letter supporting Anthropic’s position |
| Mar 11, 2026 | Microsoft and 22 retired military officials file amicus briefs |
| Mar 12, 2026 | Anthropic seeks emergency stay from appeals court |
| Mar 16, 2026 | Major tech industry groups file amicus brief calling for pause |
| Mar 24, 2026 | First court hearing scheduled before Judge Rita Lin in San Francisco |

What Happens Next

The first hearing is set for March 24, 2026, before U.S. District Judge Rita Lin in San Francisco. Anthropic is seeking a temporary restraining order or preliminary injunction to pause the supply chain risk designation while the case proceeds.

If the court grants a stay, Anthropic’s customers and partners would be able to resume normal operations while the legal merits are argued. If the court denies it, the financial bleeding continues and the pressure on Anthropic to settle or capitulate increases.

The separate D.C. Circuit case will proceed on its own timeline. Because it was filed directly in an appellate court, it could produce an appellate ruling sooner than the district court case.

We are watching a case that will likely end up before the Supreme Court. The intersection of national security authority, First Amendment rights, executive power, and technology regulation makes this one of the most significant technology law cases in American history.

Whatever your position on military AI, the core question is simple: should the government be able to destroy an American company’s business for saying “we will not help you build tools for mass surveillance and autonomous killing”?

The answer to that question will shape the AI industry for decades.

FAQ

What is the Anthropic vs Pentagon lawsuit about?

Anthropic sued the Trump administration after the Pentagon designated it a “supply chain risk” to national security. This designation came after Anthropic refused to allow unrestricted military use of its Claude AI, specifically insisting on contractual red lines against mass surveillance of U.S. citizens and autonomous weapons. Anthropic argues the designation is illegal retaliation for its policy positions on AI safety.

Why did the Pentagon label Anthropic a supply chain risk?

The Pentagon issued the designation after contract negotiations broke down. Anthropic wanted binding contractual language preventing Claude from being used for mass domestic surveillance and fully autonomous weapons. The Pentagon demanded use “for all lawful purposes” with no vendor-imposed restrictions. When Anthropic refused to drop its red lines by the February 27 deadline, Defense Secretary Pete Hegseth invoked the supply chain risk framework, a tool historically reserved for foreign adversaries like Huawei.

How much money could Anthropic lose from the Pentagon designation?

According to Anthropic’s court filings, the designation puts hundreds of millions to potentially multiple billions of dollars in 2026 revenue at risk. Over 100 enterprise customers have raised concerns, and the ambiguity around whether the designation affects all defense supply chain companies using Claude has caused widespread uncertainty that extends far beyond direct government contracts.

Which companies and individuals are supporting Anthropic in the lawsuit?

Support has been remarkably broad. Over 30 employees from OpenAI and Google DeepMind, including Google chief scientist Jeff Dean, filed an amicus brief. Over 200 workers from both companies signed an open letter. Microsoft filed its own amicus brief, along with 22 retired high-ranking military officials. Major tech industry trade groups representing Pentagon contractors have also filed in support of pausing the designation.

What does the Anthropic Pentagon lawsuit mean for the future of AI?

This case will set a precedent for whether the government can use national security designations to coerce AI companies into removing safety guardrails. If the designation stands, it could create a chilling effect across the industry where companies avoid implementing safety restrictions to maintain government contract eligibility. It also raises fundamental questions about who gets to decide how powerful AI systems are used in military contexts, and whether binding ethical commitments are a business liability or a necessity.
