5 Claude Code Workflow Patterns Every Developer Needs in 2026

AI Infrastructure Lead

Key Takeaways

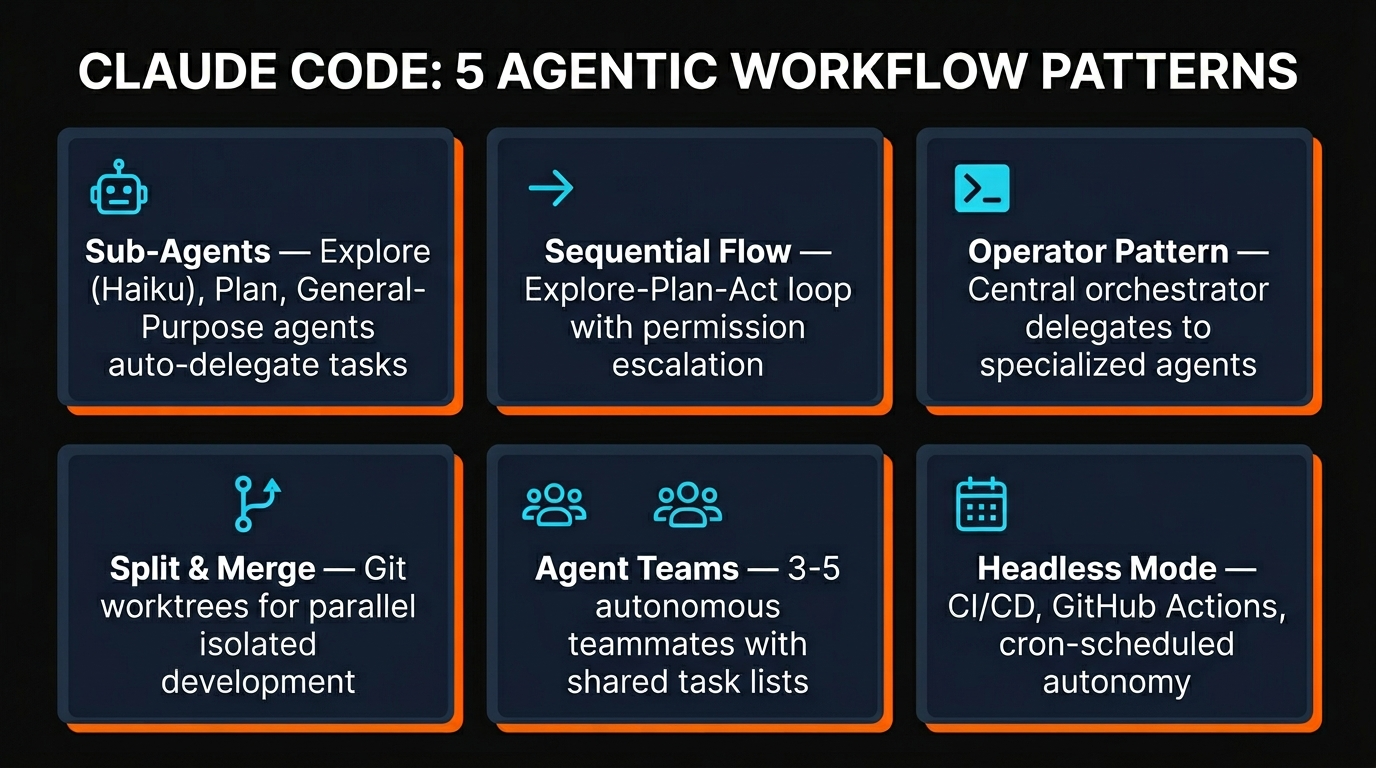

- Claude Code has 5 distinct agentic patterns — picking the right one for your task saves hours and tokens

- Sub-agents handle delegation automatically; agent teams give you parallel autonomous workers

- Git worktrees let parallel agents work without stepping on each other's files

- Headless mode + GitHub Actions turns Claude Code into a 24/7 automated team member

- Start with sequential flows for daily work, scale up to teams only when the task demands it

Most developers use Claude Code the same way every time: open a terminal, type a prompt, wait for a response. That works fine for small tasks. But once you're building real features — multi-file refactors, parallel bug fixes, automated CI pipelines — you need something more structured.

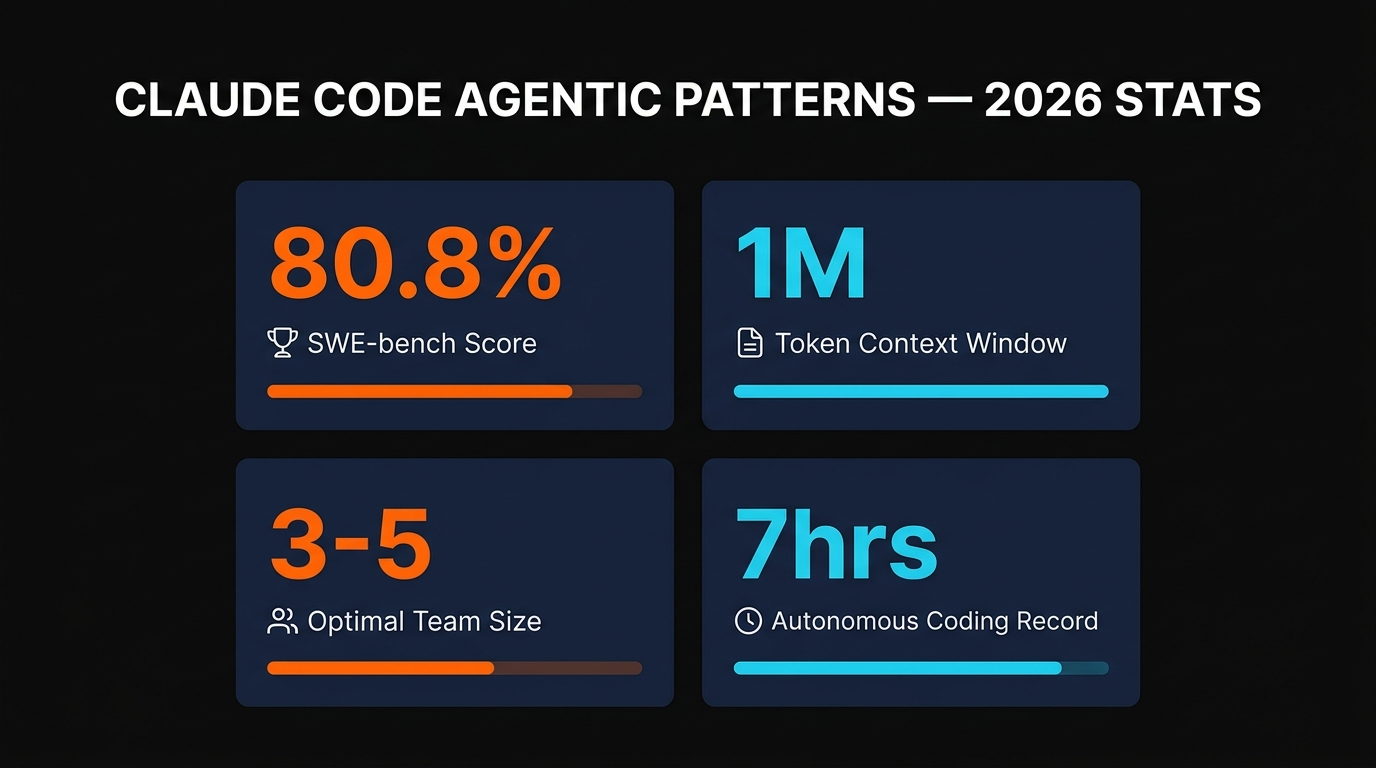

We spent the past two weeks testing all five of Claude Code's agentic workflow patterns across production codebases. Picking the right pattern, instead of defaulting to a single conversation, made a measurable difference: in some cases, feature implementations that took 4 hours dropped to 45 minutes.

Here's exactly how each pattern works, when to use it, and the real commands you need to set it up. No theory — just what actually works.

Claude Code's Built-in Sub-Agents

Before we get into the five patterns, you need to understand the engine behind them: sub-agents. When Claude Code tackles a complex task, it doesn't do everything in one monolithic process. It spawns specialized child agents, each running in its own isolated context window.

There are three built-in sub-agent types, each optimized for a different job:

Explore Agent (Haiku)

Read-only. Powered by the fast Haiku model. Searches files, reads code, answers questions about the codebase. Cannot modify anything. Has three thoroughness levels: quick, medium, and very thorough.

Plan Agent

Read-only. Uses the same model as your main session. Gathers context, analyzes architecture, and produces implementation plans. Used during Plan Mode to research before proposing changes.

General-Purpose Agent

Full tool access — can read, write, edit, run bash commands. Handles complex multi-step operations that need both exploration and modification. The heavy lifter of the sub-agent system.

The key design choice: sub-agents receive only their own task prompt, not the full parent conversation. They do their work, return the result, and the parent's context stays clean. This is how Claude Code can handle sprawling codebases without blowing its context window.
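A toy model makes the context-isolation idea concrete. This is not how Claude Code is implemented. Session and run_subagent are hypothetical names, and the "work" is simulated. The sketch only shows the shape of the design. The child starts from its task prompt alone, fills its own context, and hands back a short result. As a result, the parent's context grows by one message instead of fifty.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Toy stand-in for an agent session: its context is just a list of messages."""
    messages: list[str] = field(default_factory=list)


def run_subagent(task_prompt: str) -> str:
    """Hypothetical sub-agent run: fresh context, simulated work, short result."""
    # The child starts from its own task prompt only, never the parent's history.
    child = Session(messages=[task_prompt])
    # Simulated exploration: these messages fill the CHILD's context, not the parent's.
    child.messages.extend(f"tool output from file_{i}.py" for i in range(50))
    # Only a compact summary travels back to the caller.
    return f"result: {task_prompt} ({len(child.messages) - 1} files scanned)"


parent = Session(messages=["user: refactor the auth module", "assistant: exploring first"])
parent.messages.append(run_subagent("find every caller of login()"))
print(len(parent.messages))  # 3
print(parent.messages[-1])
```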

You can also create custom sub-agents as Markdown files. Drop a .md file in .claude/agents/ with YAML frontmatter specifying the name, tools, model, and system prompt. Claude Code auto-discovers these and uses them when the task matches their description.

```markdown
---
name: code-reviewer
description: Reviews code for quality and best practices
tools: Read, Glob, Grep
model: sonnet
---

You are a code reviewer. Analyze code for bugs,
security issues, and style violations.
```

Now let's look at how these sub-agents combine into the five workflow patterns that actually matter.

Pattern 1: Sequential Flow (Explore-Plan-Act)

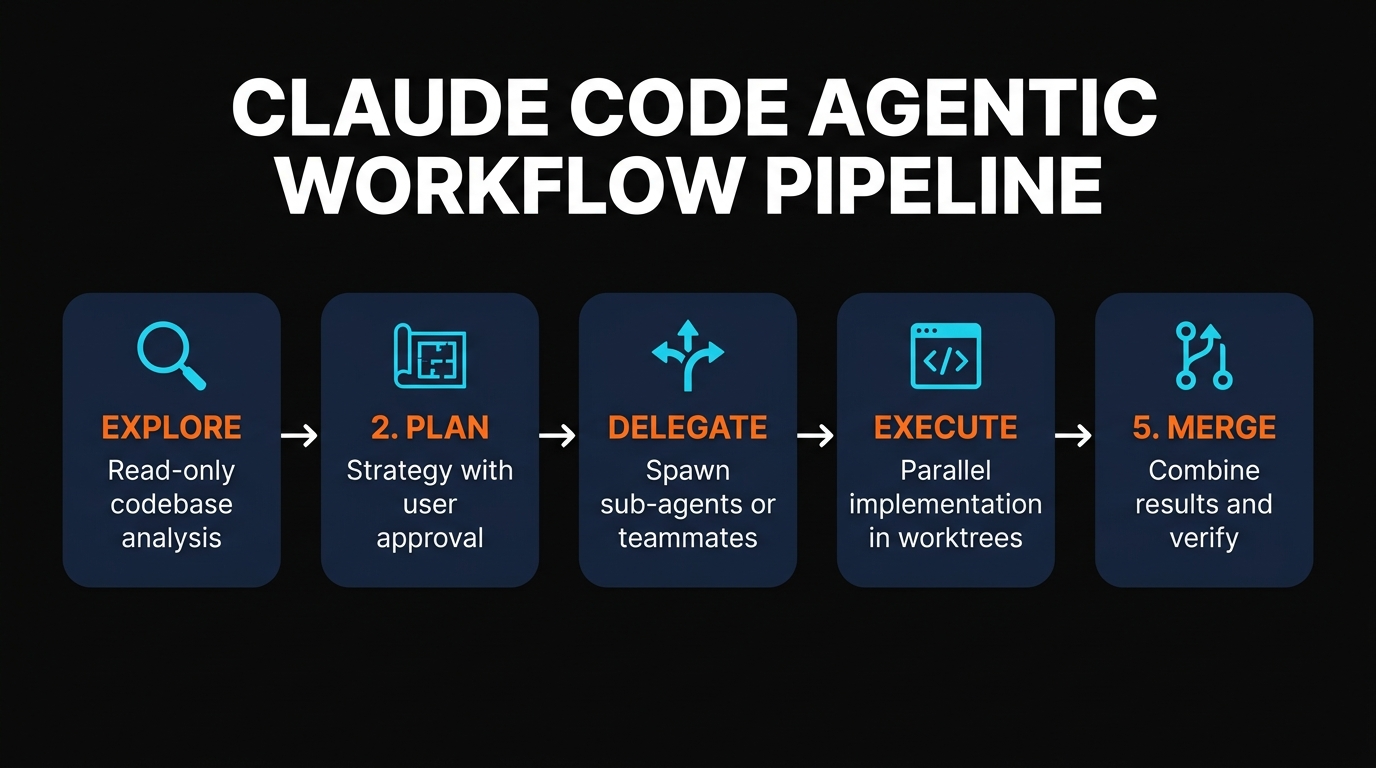

This is the pattern you should start with. It's straightforward: tasks execute in order, each step building on the previous one. The canonical version in Claude Code is the Explore-Plan-Act loop — three permission-escalating phases.

Phase 1: Explore — Claude reads the codebase in read-only mode. It finds the relevant files, understands the architecture, maps dependencies. No changes to anything.

Phase 2: Plan — Based on what it found, Claude proposes a strategy. You review, adjust, approve. This is where you catch bad assumptions before they become bad code.

Phase 3: Act — Full tool access unlocked. Claude implements the plan, runs tests, and iterates on failures.
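The permission escalation can be modeled as a small state machine. This is a hypothetical sketch, not Claude Code's internal logic. The tool names are Claude Code's built-in tool names, but the Phase enum, the ALLOWED_TOOLS table and the approval gate are our own illustration. They show one idea: write access unlocks only after you approve a plan.

```python
from enum import Enum


class Phase(Enum):
    EXPLORE = "explore"
    PLAN = "plan"
    ACT = "act"


READ_ONLY = frozenset({"Read", "Glob", "Grep"})

# Hypothetical mapping for illustration only: which tools each phase may use.
ALLOWED_TOOLS = {
    Phase.EXPLORE: READ_ONLY,
    Phase.PLAN: READ_ONLY,
    Phase.ACT: READ_ONLY | {"Edit", "Write", "Bash"},
}


def is_allowed(phase: Phase, tool: str) -> bool:
    """Return True if the tool may run during the given phase."""
    return tool in ALLOWED_TOOLS[phase]


def advance(phase: Phase, plan_approved: bool = False) -> Phase:
    """Move to the next phase; escalating to ACT requires an approved plan."""
    if phase is Phase.EXPLORE:
        return Phase.PLAN
    if phase is Phase.PLAN:
        # The human review gate: stay in PLAN until the plan is approved.
        return Phase.ACT if plan_approved else Phase.PLAN
    return Phase.ACT  # ACT is the final phase


phase = Phase.EXPLORE
assert not is_allowed(phase, "Edit")  # exploring is read-only
phase = advance(phase)                # EXPLORE -> PLAN
phase = advance(phase)                # no approval yet: still PLAN
phase = advance(phase, plan_approved=True)
assert phase is Phase.ACT and is_allowed(phase, "Bash")
```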

To start in Plan Mode:

```bash
# Start a session in Plan Mode
claude --permission-mode plan

# Or toggle mid-session with Shift+Tab
# Cycles: Normal → Auto-Accept → Plan Mode
```

We use sequential flow for probably 70% of our daily work. It's the right call when steps depend on each other. Examples include refactoring that needs to understand the existing code first, debugging that needs to read logs before proposing fixes, or any task where jumping ahead would waste tokens.

When to use it: Unfamiliar codebases, multi-file refactors, debugging sessions, draft-review-polish cycles. Basically anything where "understand first, act second" applies.

For a deeper look at setting up your daily Claude Code workflow, check out our complete Claude Code setup guide.

Pattern 2: The Operator (Central Orchestrator)

The operator pattern flips the script. Instead of one agent doing everything sequentially, you have a central orchestrator — the "operator" — that breaks down a complex task and delegates pieces to specialized sub-agents.

Think of it like a project manager who never writes code but knows exactly who should handle each part. The operator decomposes the task, picks the right sub-agent for each piece, and synthesizes the results.
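The operator loop itself is simple to sketch. In the toy Python version below, routing works by keyword overlap. That is a crude stand-in for the description matching Claude Code performs. SubAgent, pick_agent and run_operator are hypothetical names, and each sub-agent's "work" is a placeholder lambda. The point is the shape: decompose, delegate each piece, synthesize.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SubAgent:
    name: str
    keywords: frozenset[str]  # crude stand-in for matching on the agent's description
    run: Callable[[str], str]


def pick_agent(subtask: str, agents: list[SubAgent]) -> SubAgent:
    """Route a subtask to the first agent whose keywords appear in it."""
    words = set(subtask.lower().split())
    for agent in agents:
        if agent.keywords & words:
            return agent
    raise LookupError(f"no sub-agent matches: {subtask!r}")


def run_operator(subtasks: list[str], agents: list[SubAgent]) -> str:
    """Delegate every subtask, then synthesize; the operator does no work itself."""
    results = [pick_agent(task, agents).run(task) for task in subtasks]
    return "\n".join(results)  # synthesis here is just concatenation


agents = [
    SubAgent("security-reviewer", frozenset({"security", "auth"}),
             lambda t: f"[security] checked: {t}"),
    SubAgent("test-writer", frozenset({"test", "tests"}),
             lambda t: f"[tests] wrote: {t}"),
]
# Decomposition is hard-coded here; in Claude Code the model splits the task.
report = run_operator(
    ["audit auth endpoints for security issues", "add tests for token refresh"],
    agents,
)
print(report)
```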

In practice, this is what Claude Code does naturally when you give it a complex prompt. It spawns an Explore agent to understand the codebase, then delegates implementation to a General-Purpose agent. But you can make it explicit by creating specialized sub-agents:

```bash
# Define agents inline for one session
claude --agents '{
  "security-reviewer": {
    "description": "Reviews code for security vulnerabilities",
    "prompt": "You are a security expert. Focus on OWASP top 10.",
    "tools": ["Read", "Grep", "Glob"],
    "model": "sonnet"
  },
  "test-writer": {
    "description": "Writes comprehensive test suites",
    "prompt": "You write thorough tests. Cover edge cases.",
    "tools": ["Read", "Edit", "Bash"],
    "model": "sonnet"
  }
}'
```

The operator can restrict which sub-agents it spawns using the Agent(worker, researcher) syntax in tool allowlists. This prevents the orchestrator from going rogue and spawning agents you didn't plan for.
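Hand-quoting a multi-line JSON string on the command line is easy to get wrong. One option is to keep the agent definitions as data and let json.dumps and shlex.join handle the quoting. The definitions below are copied from the --agents example above. The script only builds and prints the command and does not run claude. You can paste the printed line into a shell or pass argv to subprocess.run.

```python
import json
import shlex

# The same two agent definitions as the --agents example, held as Python data.
agents = {
    "security-reviewer": {
        "description": "Reviews code for security vulnerabilities",
        "prompt": "You are a security expert. Focus on OWASP top 10.",
        "tools": ["Read", "Grep", "Glob"],
        "model": "sonnet",
    },
    "test-writer": {
        "description": "Writes comprehensive test suites",
        "prompt": "You write thorough tests. Cover edge cases.",
        "tools": ["Read", "Edit", "Bash"],
        "model": "sonnet",
    },
}

# json.dumps guarantees valid JSON; shlex.join quotes it safely for a POSIX shell.
argv = ["claude", "--agents", json.dumps(agents)]
print(shlex.join(argv))
```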

When to use it: Complex projects needing multiple specialized perspectives. Code reviews from different angles (security, performance, testing). When tasks naturally decompose into independent units. If you're managing Claude Code hooks for automation triggers, the operator pattern keeps everything coordinated.

Pattern 3: Split and Merge (Parallel Worktrees)

This is where Claude Code gets seriously powerful. The split-and-merge pattern spawns multiple agents that work in parallel, each in its own isolated git worktree. No file conflicts. No stepping on each other's changes. Each agent gets its own working copy of the repo on a separate branch, while all worktrees share the same underlying Git history.

Start a worktree session from the CLI:

```bash
# Start parallel sessions in isolated worktrees (one terminal per session)
claude --worktree feature-auth
claude --worktree bugfix-payments
claude --worktree refactor-api

# Each creates .claude/worktrees/{name}/ with its own branch
# Worktrees auto-clean if no changes were made
```

Or configure sub-agents to use worktree isolation automatically:

```markdown
---
name: parallel-worker
description: Works on independent tasks in isolation
isolation: worktree
---
```

One gotcha we hit: .env files don't copy to worktrees by default (they're gitignored). Create a .worktreeinclude file in your project root listing the files that should be copied:

```text
# .worktreeinclude
.env
.env.local
config/secrets.json
```

The trade-off: token cost scales at 3-4x compared to sequential work, because each parallel agent runs its own full session. But when you're implementing three independent features that would take 3 hours sequentially, getting them done in 1 hour is worth the extra spend.
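The arithmetic behind that trade-off fits in a few lines. This sketch uses the section's own example of three independent features at about an hour each. It assumes ideal parallelism: all agents start together and merging costs nothing, which real runs won't quite match. wall_clock is a hypothetical helper name.

```python
def wall_clock(task_hours: list[float]) -> tuple[float, float]:
    """Return (sequential, parallel) hours for independent tasks.

    Ideal parallelism is assumed: all agents start together and merging is free.
    """
    sequential = sum(task_hours)  # one session, one task after another
    parallel = max(task_hours)    # the slowest agent sets the pace
    return sequential, parallel


for tasks in ([1.0, 1.0, 1.0], [2.0, 0.5, 0.5]):
    seq, par = wall_clock(tasks)
    print(f"{tasks}: {seq}h -> {par}h, speedup {seq / par:.1f}x")
# [1.0, 1.0, 1.0]: 3.0h -> 1.0h, speedup 3.0x
# [2.0, 0.5, 0.5]: 3.0h -> 2.0h, speedup 1.5x
```

Either way you pay roughly 3-4x the tokens, so the uneven case buys only a 1.5x speedup for the same extra spend. Split-and-merge pays off best when the parallel tasks are similar in size.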

When to use it: Multiple features that touch different files. Competing implementation approaches you want to test simultaneously. Bug fixes across isolated modules. Any task where the pieces don't depend on each other. For more on structuring parallel AI workflows, see our AI coding agent blueprints guide.
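Before splitting work across worktrees, it helps to check that the pieces really are independent. A quick heuristic is to list the files each task expects to touch and flag any file claimed by more than one task. The task names below reuse the worktree names from earlier, but the file paths are hypothetical. File overlap is only a proxy: two tasks can still conflict through a shared API or schema.

```python
from collections import defaultdict


def shared_files(plan: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each file to the tasks that touch it, keeping only files touched by 2+ tasks."""
    owners: dict[str, list[str]] = defaultdict(list)
    for task, files in plan.items():
        for path in files:
            owners[path].append(task)
    return {path: tasks for path, tasks in owners.items() if len(tasks) > 1}


plan = {
    "feature-auth": {"src/auth/login.py", "src/auth/session.py"},
    "bugfix-payments": {"src/payments/charge.py"},
    "refactor-api": {"src/api/routes.py", "src/auth/session.py"},
}
conflicts = shared_files(plan)
print(conflicts)  # {'src/auth/session.py': ['feature-auth', 'refactor-api']}
```

An empty result suggests split-and-merge is a reasonable fit. Here, the two tasks sharing src/auth/session.py would be better run sequentially or folded into one task.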

Pattern 4: Agent Teams (Autonomous Collaboration)

Agent teams take the split-and-merge concept further. Instead of isolated agents that just report results back to a parent, you get independent Claude Code instances that communicate with each other. They share a task list, claim work independently, and send messages peer-to-peer.

This is an experimental feature (v2.1.32+). Enable it with:

```bash
# Enable agent teams
export CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS=1
```

Or in settings.json:

```json
{
  "env": {
    "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
  }
}
```

The architecture has four components:

Team Lead

Your main session. Creates the team, spawns teammates, coordinates high-level strategy.

Teammates

Separate Claude Code instances, each with their own context window and tools.

Shared Task List

Work items with states (pending, in progress, completed) and dependency tracking.

Mailbox

Peer-to-peer messaging. Any agent can message any other agent or broadcast to all.
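To make the shared task list and mailbox concrete, here is a minimal in-memory model. It rests on our own assumptions and is not Claude Code's implementation or file format. TaskList, claim_next and Mailbox are hypothetical names. It shows the two rules that matter. A task becomes claimable only when its dependencies are completed, and once claimed, no other teammate can take it.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    PENDING = "pending"
    IN_PROGRESS = "in progress"
    COMPLETED = "completed"


@dataclass
class Task:
    name: str
    depends_on: tuple[str, ...] = ()
    state: State = State.PENDING
    owner: str | None = None


class TaskList:
    """Shared work items; every teammate claims from the same list."""

    def __init__(self, tasks: list[Task]):
        self.tasks = {t.name: t for t in tasks}

    def _ready(self, task: Task) -> bool:
        # Claimable only if still pending and every dependency is completed.
        return task.state is State.PENDING and all(
            self.tasks[dep].state is State.COMPLETED for dep in task.depends_on
        )

    def claim_next(self, teammate: str) -> Task | None:
        """Hand the teammate the first ready task, or None if all are blocked or taken."""
        for task in self.tasks.values():
            if self._ready(task):
                task.state, task.owner = State.IN_PROGRESS, teammate
                return task
        return None

    def complete(self, name: str) -> None:
        self.tasks[name].state = State.COMPLETED


@dataclass
class Mailbox:
    """Peer-to-peer messages, keyed by recipient."""
    inbox: dict[str, list[str]] = field(default_factory=dict)

    def send(self, sender: str, to: str, text: str) -> None:
        self.inbox.setdefault(to, []).append(f"{sender}: {text}")

    def broadcast(self, sender: str, text: str, team: list[str]) -> None:
        for name in team:
            if name != sender:
                self.send(sender, name, text)


team = ["teammate-1", "teammate-2", "teammate-3"]
tasks = TaskList([
    Task("reproduce-bug"),
    Task("check-proxy-config"),
    Task("write-fix", depends_on=("reproduce-bug",)),
])
first = tasks.claim_next("teammate-1")    # reproduce-bug
second = tasks.claim_next("teammate-2")   # check-proxy-config
blocked = tasks.claim_next("teammate-3")  # None: write-fix waits on reproduce-bug
tasks.complete("reproduce-bug")
third = tasks.claim_next("teammate-3")    # write-fix is now claimable

mail = Mailbox()
mail.broadcast("teammate-1", "reproduced the disconnect locally", team)
```

A real shared list also needs atomic claims, for example a lock or compare-and-set, so two teammates can't grab the same task at the same moment. This single-threaded sketch sidesteps that.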

We tested agent teams on a real project: investigating a WebSocket disconnection bug. We spawned 5 teammates, each exploring a different hypothesis (server timeout, client reconnection logic, proxy buffering, load balancer config, and DNS resolution). They debated with each other, disproved theories, and converged on the actual cause in under 20 minutes. That same investigation would have taken us most of a morning working solo.

Practical limits to keep in mind: start with 3-5 teammates (diminishing returns beyond that), aim for 5-6 tasks per teammate, and break work so each teammate owns different files. Navigate teammates with Shift+Down/Up and view the shared task list with Ctrl+T.

For a deep dive into agent team configuration and advanced patterns, check out our Claude Code agent teams guide.

Pattern 5: Headless (Fully Autonomous)

Headless mode removes the human from the loop entirely. You give Claude Code a prompt with the -p flag, it processes the task, outputs results to stdout, and exits. No interactive session, no approval prompts.

This is what unlocks CI/CD integration, cron-scheduled tasks, and GitHub Actions automation.

```bash
# Basic headless execution
claude -p "Find and fix lint errors in src/" --allowedTools "Read,Edit,Bash"

# Structured JSON output
claude -p "List all TODO comments" --output-format json

# Budget control (never spend more than $5); edits must be pre-approved in -p mode
claude -p "Refactor the auth module" --allowedTools "Read,Edit" --max-budget-usd 5.00

# Pipe input for analysis
cat build-error.txt | claude -p "Explain the root cause"

# Bare mode for CI (skips hooks, plugins, MCP, CLAUDE.md)
claude --bare -p "Run the test suite" --allowedTools "Bash,Read"
```

The GitHub Actions integration is where headless gets really practical. The official anthropics/claude-code-action@v1 action lets Claude respond to PR comments, review code on push, and run scheduled reports:

```yaml
# .github/workflows/claude-review.yml
name: Claude Code Review

on:
  pull_request:
    types: [opened, synchronize]

jobs:
  review:
    runs-on: ubuntu-latest
    # Permissions to read the code and post review comments on the PR
    permissions:
      contents: read
      pull-requests: write
      id-token: write
    steps:
      # Check out the PR so Claude has the code to review
      - uses: actions/checkout@v4
      - uses: anthropics/claude-code-action@v1
        with:
          anthropic_api_key: ${{ secrets.ANTHROPIC_API_KEY }}
          prompt: "Review this PR for bugs and security issues"
```

Beyond GitHub Actions, Claude Code offers three scheduling options:

- Cloud Scheduled Tasks run on Anthropic infrastructure, even when your machine is off, with a minimum 1-hour interval.
- Desktop Scheduled Tasks run locally, with a minimum 1-minute interval.
- /loop does session-scoped polling, which is great for monitoring during active work.
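The three scheduling options differ mainly in minimum interval and in whether your machine has to stay on. The helper below encodes just those two constraints as described above. The function and its return strings are our own illustration. It ignores other factors, such as whether the job needs local files or services.

```python
from datetime import timedelta


def pick_scheduler(interval: timedelta, machine_stays_on: bool,
                   during_active_session: bool = False) -> str:
    """Suggest a scheduling option using only the constraints listed in this article."""
    if during_active_session:
        return "/loop"  # session-scoped polling while you work
    if interval >= timedelta(hours=1):
        return "Cloud Scheduled Task"  # runs on Anthropic infrastructure; machine may be off
    if interval >= timedelta(minutes=1):
        if machine_stays_on:
            return "Desktop Scheduled Task"  # runs locally; 1-minute minimum
        raise ValueError("sub-hourly schedules need a machine that stays on")
    raise ValueError("cloud and desktop tasks run at most once a minute")


print(pick_scheduler(timedelta(days=1), machine_stays_on=False))    # Cloud Scheduled Task
print(pick_scheduler(timedelta(minutes=5), machine_stays_on=True))  # Desktop Scheduled Task
```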

When to use it: Automated PR reviews, scheduled code quality reports, CI/CD pipeline integration, nightly test runs, monitoring jobs. Anything that should happen without you being at the keyboard. For more automation patterns, see our guide to Claude Code loops and skills.
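In CI you usually want a script to act on the headless run rather than a person reading stdout. Below is a minimal Python wrapper around the flags shown above: -p, --allowedTools, --output-format json and --max-budget-usd. Treat the JSON field names it reads (is_error, result, total_cost_usd) as assumptions to verify against the output of your Claude Code version. The parsing is split out so it can be tested without calling the CLI. build_argv, summarize and run_headless are hypothetical names.

```python
import json
import subprocess


def build_argv(prompt: str, allowed_tools: list[str], max_budget_usd: float) -> list[str]:
    """Assemble a headless invocation using the flags shown in this section."""
    return [
        "claude", "-p", prompt,
        "--allowedTools", ",".join(allowed_tools),
        "--output-format", "json",
        "--max-budget-usd", f"{max_budget_usd:.2f}",
    ]


def summarize(raw: str) -> tuple[bool, str]:
    """Return (ok, message) from the JSON printed by a headless run.

    Field names are assumptions; .get() keeps this from crashing if they differ.
    """
    data = json.loads(raw)
    ok = not data.get("is_error", False)
    cost = data.get("total_cost_usd")
    cost_note = f" (cost ${cost:.2f})" if cost is not None else ""
    return ok, f"{data.get('result', '')}{cost_note}"


def run_headless(prompt: str) -> tuple[bool, str]:
    """Run Claude Code headlessly and summarize the result; needs `claude` on PATH."""
    argv = build_argv(prompt, ["Read", "Edit", "Bash"], max_budget_usd=5.00)
    # The 30-minute timeout is an arbitrary choice for this sketch.
    proc = subprocess.run(argv, capture_output=True, text=True, timeout=1800)
    if proc.returncode != 0:
        return False, proc.stderr.strip()
    return summarize(proc.stdout)
```

In a CI step you might call run_headless("Find and fix lint errors in src/"), print the message, and exit non-zero when ok is false so the job fails visibly.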

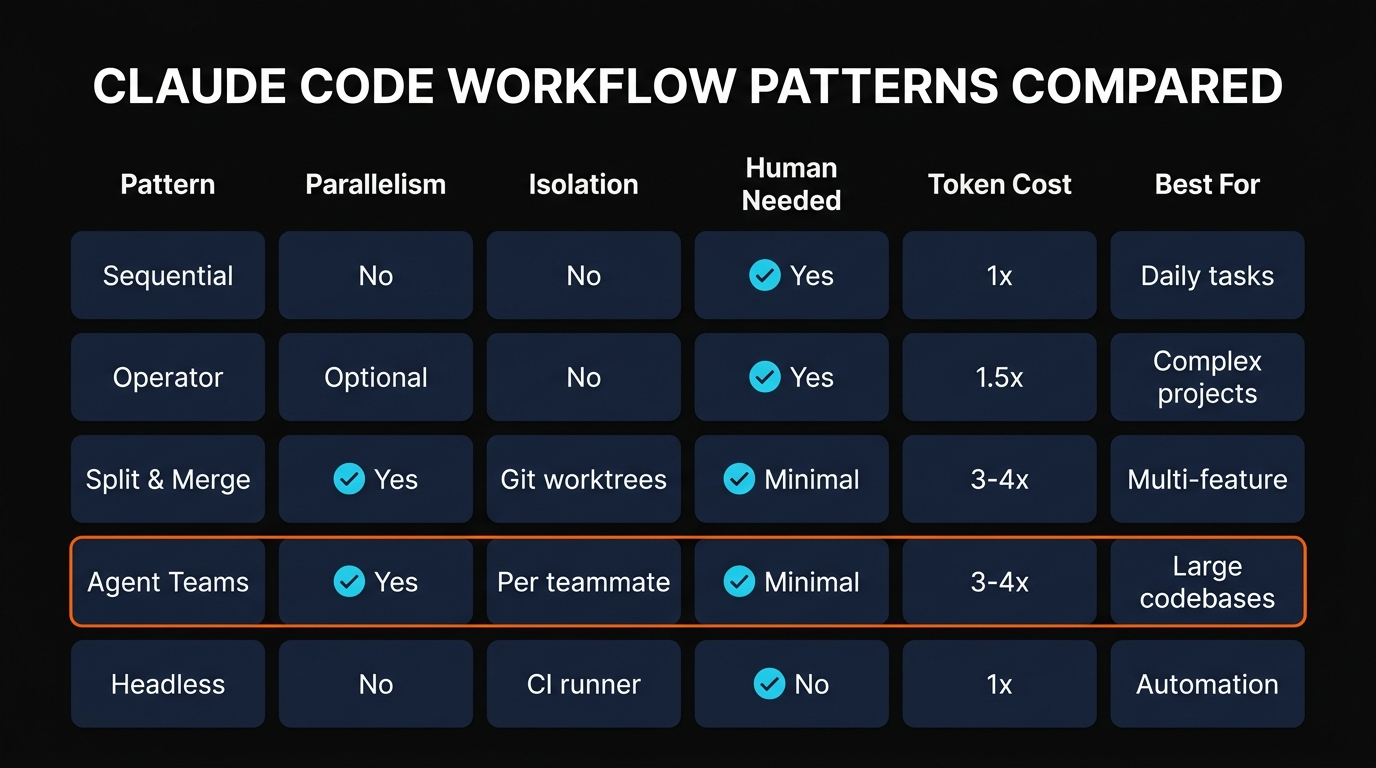

Which Pattern Should You Use?

Here's the decision framework we use after testing all five patterns across real projects:

| Pattern | Best For | Token Cost | Human Oversight |
|---|---|---|---|
| Sequential Flow | Daily tasks, debugging, refactors | 1x (baseline) | High |
| Operator | Multi-perspective reviews, complex projects | 1.5x | Medium |
| Split & Merge | Independent features, parallel bug fixes | 3-4x | Low |
| Agent Teams | Large codebases, competing investigations | 3-4x | Low |
| Headless | CI/CD, cron jobs, GitHub Actions | 1x | None |

Our recommendation: Start with sequential flow for everything. Once you're comfortable, add headless for your CI pipeline. Only reach for agent teams or split-and-merge when you have genuinely independent parallel work that justifies the 3-4x token cost.

The biggest mistake we see is developers jumping straight to agent teams for tasks that a single sequential session handles perfectly. A well-crafted single prompt with Plan Mode often beats a poorly coordinated team of agents.
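The decision framework above can be written down as a few ordered checks. This is our reading of the table and recommendation, not an official rule. The boolean inputs are simplifications you would judge per task, and choose_pattern is a hypothetical name.

```python
def choose_pattern(
    *,
    unattended: bool = False,
    parallel_workstreams: int = 1,
    workers_must_talk: bool = False,
    needs_multiple_perspectives: bool = False,
) -> str:
    """Map a task profile to one of the five patterns, following the table above."""
    if unattended:
        return "Headless"  # CI/CD, cron, GitHub Actions: no human in the loop
    if parallel_workstreams >= 2:
        # Both parallel patterns cost ~3-4x tokens, so require genuinely parallel work.
        return "Agent Teams" if workers_must_talk else "Split & Merge"
    if needs_multiple_perspectives:
        return "Operator"  # ~1.5x: specialized sub-agents under one coordinator
    return "Sequential Flow"  # the default for daily work


print(choose_pattern())  # Sequential Flow
print(choose_pattern(parallel_workstreams=5, workers_must_talk=True))  # Agent Teams
```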

For more advanced patterns — including custom skills, MCP server integration, and slash commands — browse our full Claude Code power user kit.

Frequently Asked Questions

- Custom sub-agents: add Markdown files with YAML frontmatter to .claude/agents/.

- Headless mode: run claude -p "your prompt" --allowedTools "Read,Edit,Bash". Add --output-format json for structured output, --max-budget-usd 5.00 for cost control, or --bare for CI environments.

- Parallel agents without conflicts: start sessions with --worktree or give sub-agents isolation: worktree. They modify files freely in isolation. When done, you merge the branches back. Use .worktreeinclude for gitignored files that need to be copied.

- Scheduling: /loop 5m /command polls within the current session. GitHub Actions automation is available via anthropics/claude-code-action@v1.
