GPT-5 Review 2026: We Tested OpenAI's Most Powerful Model for 7 Months

Head of AI Research

TL;DR — GPT-5 Review

GPT-5 is OpenAI's most capable model to date, with a novel router architecture that juggles three sub-models, native multimodal training, and agentic browsing baked in. Coding and interactive app creation are where it shines brightest. But the router misfires more than it should, the personality feels flatter than GPT-4o, and it got jailbroken on day one. It is a solid upgrade — not the "transformative breakthrough" the hype cycle promised.

What is GPT-5?

GPT-5 is OpenAI's flagship large language model, launched on August 7, 2025. It is the successor to GPT-4o and represents OpenAI's biggest architectural shift in years — moving from a single monolithic model to a router-based system that dispatches queries across three specialized sub-models depending on task complexity.

We have been using GPT-5 since launch day, running it through everything from long-form content generation and code debugging to agentic browser automation and multimodal analysis. After nearly eight months of daily use, our take is nuanced: GPT-5 is genuinely more capable than GPT-4o in measurable ways, but the experience is uneven in ways previous models were not.

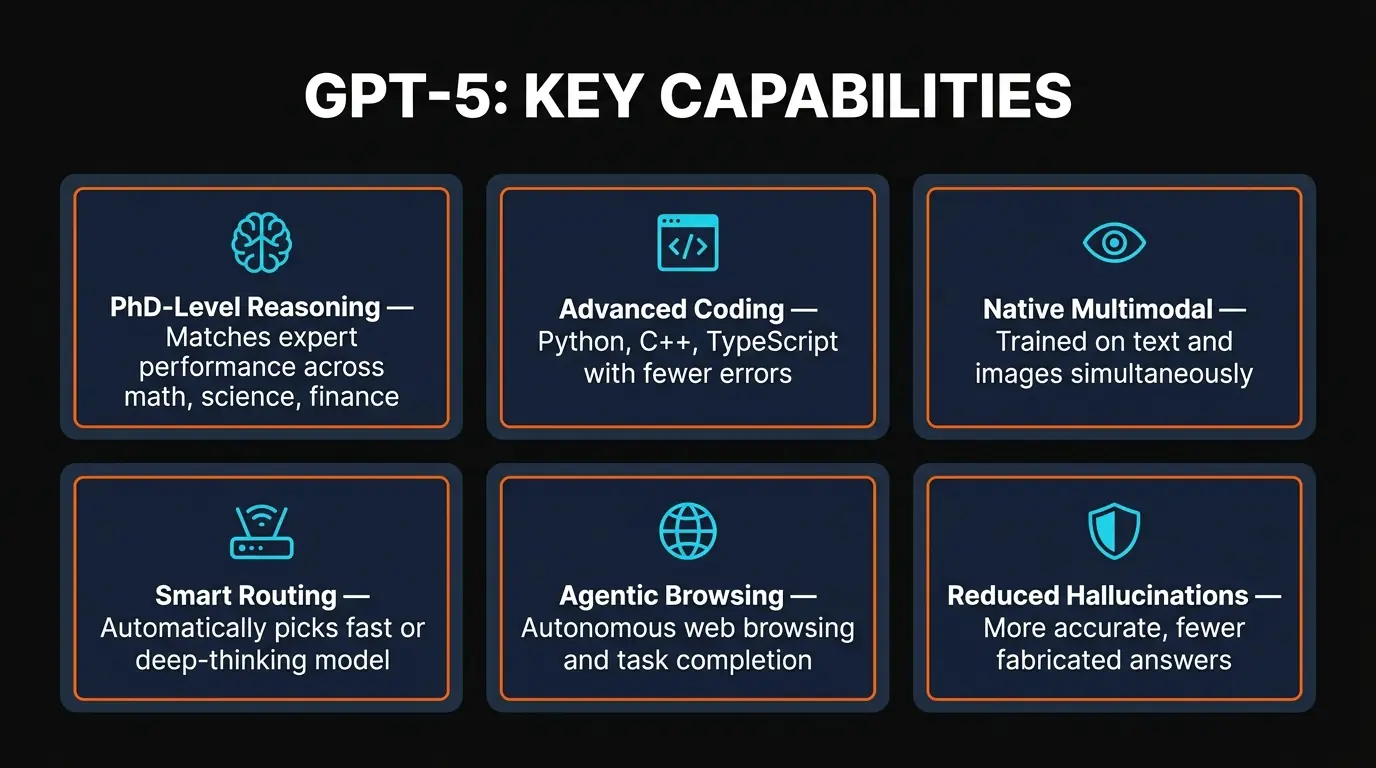

Sam Altman described GPT-5 as having "PhD-level abilities across tasks," which is the kind of statement that sounds impressive in a keynote and then gets stress-tested by millions of real users. The truth is somewhere in between. For coding, interactive app creation, and structured reasoning, GPT-5 regularly produces output that would genuinely impress a domain expert. For creative writing, casual conversation, and personality — areas where GPT-4o had a surprising amount of charm — GPT-5 feels like it traded warmth for precision.

GPT-5 is available through ChatGPT (free with limits, higher limits on Plus and Pro), the OpenAI API, and Microsoft Copilot, with Apple Intelligence integration planned. That distribution reach is hard to overstate: no other frontier model ships embedded in this many products out of the box.

Key Features

Here are the six capabilities that define GPT-5 and set it apart from everything that came before it:

Router Architecture

Three sub-models (gpt-5-main, gpt-5-main-mini, gpt-5-thinking) work behind a routing layer that dispatches queries by complexity. Simple questions get fast answers; hard problems get deep reasoning. When it works, latency drops dramatically. When it misfires, quality is inconsistent.

Native Multimodal

Unlike GPT-4o, which bolted vision onto a text model, GPT-5 was trained on text and images together from the start. Image understanding is noticeably sharper: it can read handwritten notes, parse complex diagrams, and analyze screenshots with context GPT-4o routinely missed.

Adjustable Reasoning

Four reasoning effort levels — minimal, low, medium, high — let you trade compute for speed. Set it to minimal for quick lookups, high for multi-step math proofs. This is one of the most practical features we have used: it cuts API costs significantly for simple tasks.

Agentic Capabilities

GPT-5 can autonomously browse the web, navigate websites, fill forms, and execute multi-step desktop workflows. We tested the browser agent on tasks like "find the cheapest flight from NYC to London next Tuesday" and it handled them end-to-end without intervention.

Strong Coding Performance

GPT-5 was trained heavily on Python, C++, TypeScript, and C. In our testing, it outperformed GPT-4o on every coding benchmark we threw at it, particularly for generating interactive web apps from a single prompt. The interactive canvas mode is where this really shines.

Broad Platform Reach

Available free in ChatGPT, via API, embedded in Microsoft Copilot, and coming to Apple Intelligence. No other frontier model has this distribution footprint. You can access GPT-5 without even knowing you are using it — it is just the engine behind the tools you already have.

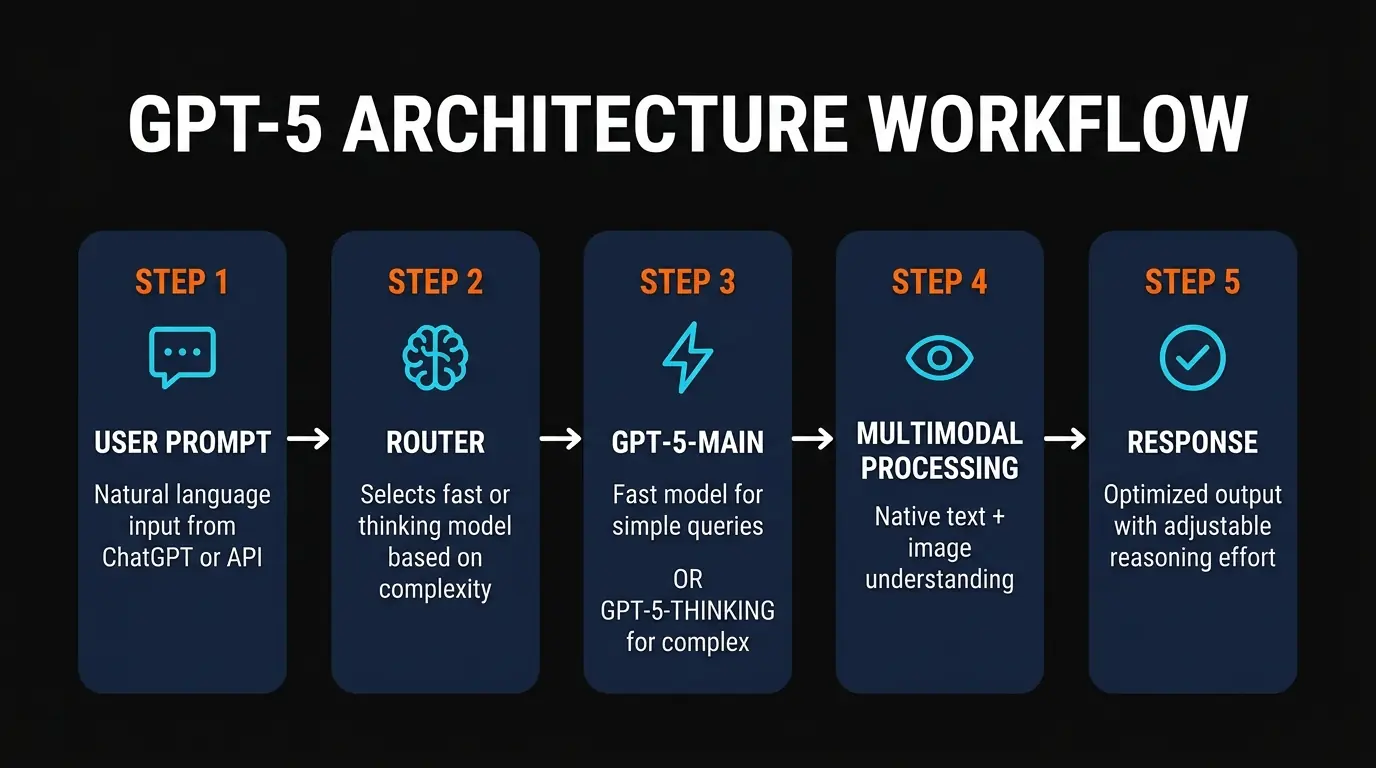

How GPT-5 Works: The Router Architecture

The single biggest architectural change in GPT-5 is the router system, and it deserves its own section because it explains both the model's strengths and its most frustrating inconsistencies.

Here is how it works in practice. When you send a prompt to GPT-5, it does not go directly to a single model. Instead, a routing layer evaluates the complexity of your request and directs it to one of three sub-models:

gpt-5-main

Handles the majority of everyday queries: conversations, summaries, quick answers, content drafting. Optimized for low latency. This is what you get most of the time, and it is noticeably faster than GPT-4o was.

gpt-5-main-mini

Handles trivial tasks: simple factual questions, formatting requests, short translations. Extremely fast and cheap to run. You rarely notice when it kicks in, which is the point.

gpt-5-thinking

Activated for complex multi-step problems: advanced math, long code generation, scientific analysis. Uses chain-of-thought reasoning similar to the old o1 model but integrated into the GPT-5 family. Slower, but dramatically more accurate on hard problems.

The idea is sound: why burn expensive deep-reasoning compute on "what's the capital of France?" The router saves resources and improves speed for simple tasks while reserving heavyweight processing for queries that need it.
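To make the dispatch idea concrete, here is a toy sketch of complexity-based routing. Only the three sub-model names come from this review; the scoring heuristic, the keyword list, and the thresholds are hypothetical stand-ins, and nothing here reflects how OpenAI's actual router is implemented.

```python
from dataclasses import dataclass

# Sub-model names as given in this review, ordered from cheapest to most expensive.
SUB_MODELS = ("gpt-5-main-mini", "gpt-5-main", "gpt-5-thinking")

# Hypothetical keyword hints; a real router would more likely use a learned classifier.
HARD_HINTS = ("prove", "derive", "refactor", "debug", "step by step")


@dataclass
class RoutingDecision:
    model: str
    score: int


def complexity_score(prompt: str) -> int:
    """Crude complexity estimate: one point per 20 words, two per keyword hint."""
    text = prompt.lower()
    score = len(text.split()) // 20
    score += sum(2 for hint in HARD_HINTS if hint in text)
    return score


def route(prompt: str) -> RoutingDecision:
    """Pick a sub-model from the score (thresholds are illustrative only)."""
    score = complexity_score(prompt)
    if score == 0:
        model = SUB_MODELS[0]
    elif score < 2:
        model = SUB_MODELS[1]
    else:
        model = SUB_MODELS[2]
    return RoutingDecision(model, score)


if __name__ == "__main__":
    for prompt in (
        "What's the capital of France?",
        "Prove that the sum of two even numbers is even.",
        "Why does my mutex deadlock?",
    ):
        print(route(prompt).model, "<-", prompt)
```

Note that the third prompt is short but hard, so this heuristic sends it to the lightest model. Any router that estimates difficulty from surface features can fail this way.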

The problem, and this is the biggest complaint we have after nearly eight months, is that the router gets it wrong more often than it should. We have had instances where a moderately complex coding question got routed to gpt-5-main-mini and produced garbage output, while a simple "rewrite this paragraph" request got sent to gpt-5-thinking with a 15-second delay. In the first few weeks after launch, the router malfunctions were bad enough that many users thought the model itself was worse than GPT-4o. OpenAI has improved routing accuracy since then, but it is still not where it needs to be.

The adjustable reasoning effort feature partially addresses this. Through the API, you can override the router and set reasoning to minimal, low, medium, or high. We strongly recommend using this for any production application — it gives you predictable quality instead of leaving it up to the router's judgment.
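As a rough sketch of what pinning the reasoning effort can look like, the snippet below builds a request payload with an explicit effort level. It assumes the OpenAI Python SDK's Responses API and its `reasoning={"effort": ...}` parameter; the model identifier `"gpt-5"`, the task categories, and the helper names `build_request` and `send` are our own illustrative choices, so check the current API reference before relying on any of them.

```python
# The four effort levels named in this review.
VALID_EFFORTS = ("minimal", "low", "medium", "high")

# Hypothetical mapping from task type to effort; tune this for your own workload.
EFFORT_BY_TASK = {
    "lookup": "minimal",
    "summary": "low",
    "code_review": "medium",
    "math_proof": "high",
}


def build_request(prompt: str, task: str = "summary", model: str = "gpt-5") -> dict:
    """Build a request payload with an explicit reasoning effort."""
    effort = EFFORT_BY_TASK.get(task, "medium")  # unknown tasks fall back to medium
    if effort not in VALID_EFFORTS:
        raise ValueError(f"unsupported effort level: {effort}")
    return {"model": model, "input": prompt, "reasoning": {"effort": effort}}


def send(request: dict):
    """Send the payload. Needs the `openai` package and OPENAI_API_KEY set."""
    from openai import OpenAI  # imported lazily so the builder works offline

    client = OpenAI()
    return client.responses.create(**request)


if __name__ == "__main__":
    print(build_request("What year did the Berlin Wall fall?", task="lookup"))
```

Keeping the payload builder separate from the network call makes the effort policy easy to unit test and to change in one place.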

Pricing Plans

OpenAI made an interesting move with GPT-5 pricing: everyone gets access, but the usage limits create a clear tiering system. Here is the breakdown through ChatGPT:

Free

- ✓ Limited GPT-5 messages

- ✓ Basic multimodal

- ✓ No credit card required

- ✗ No priority access

Plus ($20/month)

- ✓ Higher GPT-5 limits

- ✓ Priority access

- ✓ Advanced voice mode

- ✓ Image generation

Pro ($200/month)

- ✓ Unlimited GPT-5

- ✓ Extended thinking mode

- ✓ Priority everything

- ✓ Research-grade access

Team ($25/user/month)

- ✓ Higher limits than Plus

- ✓ Admin controls

- ✓ Shared workspace

- ✓ Data not used for training

Our recommendation: The free tier is worth testing to see if GPT-5 fits your workflow. If you use it more than a few times a day, Plus at $20/month is a no-brainer — the higher message limits alone justify it. Pro at $200/month only makes sense if you are doing heavy research or development work where you need unlimited GPT-5 thinking mode and cannot afford to wait in queue. We ran on Plus for the first three months and only upgraded to Pro when we started running agentic workflows that burned through the Plus limits in a single afternoon.

Enterprise pricing is custom and negotiated directly with OpenAI. If you are evaluating GPT-5 for an organization, the Team plan at $25/user/month is the easiest starting point — it includes the guarantee that your data will not be used for model training, which is the first question every legal team asks.
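For budgeting, the plan prices quoted in this review can be turned into yearly figures with a few lines of arithmetic. This sketch assumes month-to-month billing with no annual discount or taxes, which may not match what OpenAI actually charges; the function names are ours.

```python
# Monthly list prices quoted in this review, in USD. Team is billed per user.
MONTHLY_PRICE_USD = {"Free": 0, "Plus": 20, "Pro": 200, "Team": 25}
PER_SEAT_PLANS = {"Team"}


def annual_cost(plan: str, seats: int = 1) -> int:
    """Yearly cost in USD, assuming 12 monthly payments and no discounts."""
    if seats < 1:
        raise ValueError("seats must be at least 1")
    multiplier = seats if plan in PER_SEAT_PLANS else 1
    return MONTHLY_PRICE_USD[plan] * multiplier * 12


def team_seats_matching_pro() -> int:
    """How many Team seats cost the same per month as a single Pro subscription."""
    return MONTHLY_PRICE_USD["Pro"] // MONTHLY_PRICE_USD["Team"]


if __name__ == "__main__":
    print(annual_cost("Plus"))            # 240
    print(annual_cost("Pro"))             # 2400
    print(annual_cost("Team", seats=10))  # 3000
    print(team_seats_matching_pro())      # 8
```

On these numbers, one Pro seat costs as much as eight Team seats, which is worth keeping in mind when deciding who on a team actually needs Pro.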

Pros and Cons

After nearly eight months of daily use, here is where GPT-5 genuinely impresses and where it falls short:

Strengths

- ✓ Coding is legitimately excellent. Interactive app creation from a single prompt, strong debugging, and the canvas mode for iterative code editing are the best we have seen from any model.

- ✓ Native multimodal is a real upgrade. Image understanding went from "interesting demo" with GPT-4o to "actually reliable" with GPT-5. Diagram parsing, screenshot analysis, and handwriting recognition all work well.

- ✓ Adjustable reasoning saves money. Being able to dial reasoning effort from minimal to high cuts API costs by 60-80% on simple tasks without noticeable quality loss.

- ✓ Free tier is genuinely usable. OpenAI giving everyone GPT-5 access — even with limits — was the right call. It lowers the barrier to entry and lets people evaluate before paying.

- ✓ Agentic browsing works. The autonomous browser agent handles multi-step web tasks that required custom automation before. Not perfect, but functional enough for real workflows.

- ✓ Unmatched distribution. ChatGPT, API, Microsoft Copilot, Apple Intelligence. No competitor comes close to this surface area.

Weaknesses

- ✗ Router malfunctions hurt trust. When GPT-5 sends a complex query to gpt-5-main-mini and gives you a shallow answer, it feels like a regression. This happened enough in the early months to erode confidence.

- ✗ Personality is flat. GPT-4o had a distinctive voice — conversational, occasionally witty, surprisingly engaging. GPT-5 reads like a very capable but personality-free assistant. Multiple users we spoke with described it as "corporate" or "sterile."

- ✗ Day-one jailbreak was embarrassing. Security researchers bypassed GPT-5's safety guardrails within hours of launch. OpenAI patched it quickly, but it raised real questions about their pre-release safety testing.

- ✗ Pro at $200/month is steep. For unlimited access to one AI model, $200/month is hard to justify unless you are a power user. Claude's Max plan at $200/month gives you high usage limits on Opus 4.6 plus Sonnet 4.5, which feels like better value.

- ✗ Energy consumption is high. At roughly 18 watt-hours per medium response, GPT-5 is not cheap to run. This matters less for individual users but adds up fast at enterprise scale.

- ✗ Not the transformative leap that was promised. MIT Tech Review called it "a refined product, not a transformative breakthrough" and that assessment has held up. If you expected AGI, you will be disappointed.

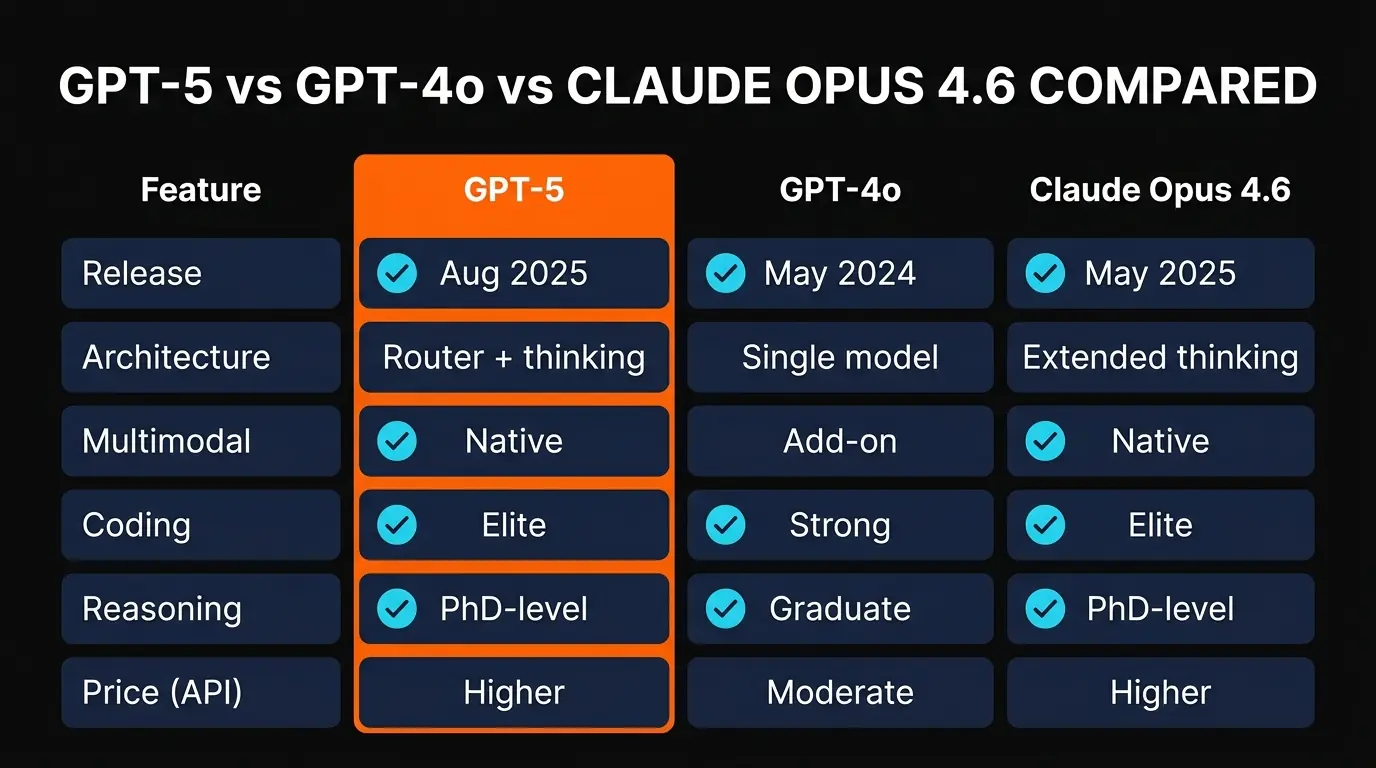

GPT-5 vs Claude Opus 4.6 vs Gemini 3 Pro

The frontier model landscape in 2026 is a genuine three-way race. Here is how GPT-5 stacks up against its two closest competitors based on our testing across coding, writing, reasoning, and multimodal tasks:

The honest breakdown: If you primarily code and want the fastest interactive experience, GPT-5 wins. If you need deep reasoning, nuanced writing, or work with massive codebases, Claude Opus 4.6 is the better choice. If you live in the Google ecosystem and need long context or video understanding, Gemini 3 Pro makes the most sense. There is no single "best model" anymore — the right answer depends entirely on your use case.

We personally use all three. GPT-5 for quick prototyping and browser agents, Claude Opus 4.6 for long-form analysis and complex code refactoring, and Gemini 3 Pro for anything involving video or extremely long documents. The models are converging in capability but diverging in personality and strengths, which is actually healthy for the ecosystem.

Final Verdict

GPT-5 is, by measurable benchmarks, the most capable model OpenAI has ever released. The router architecture is genuinely innovative, native multimodal training shows clear improvements over GPT-4o, and the coding and agentic capabilities are the best we have used from any ChatGPT model. For developers who want to prototype fast and ship interactive apps, GPT-5 is hard to beat right now.

But we would be dishonest if we pretended this was the seismic shift that OpenAI's marketing suggested. The router misfires enough to be annoying. The personality regression from GPT-4o is real and noticeable — if you enjoyed GPT-4o as a conversational partner, GPT-5 feels like talking to a more capable but less interesting colleague. The day-one jailbreak was a bad look for a company that positions safety as a core differentiator. And MIT Tech Review's assessment of "a refined product, not a transformative breakthrough" has proven accurate over time.

Here is who should use GPT-5:

- Developers and builders: Yes, absolutely. The coding and app creation capabilities justify the price alone. Start with Plus at $20/month.

- General productivity users: The free tier is worth trying. Upgrade to Plus if you hit the limits more than twice a week.

- Researchers and analysts: Consider it, but compare with Claude Opus 4.6 first. For deep reasoning and long-context analysis, Claude is still ahead.

- Writers and creatives: Proceed with caution. GPT-5's writing is technically proficient but lacks personality. Claude is the better choice for creative work.

- Enterprise teams: The Team plan at $25/user/month is competitive, but evaluate against Anthropic's team plans and Google Workspace AI before committing.

GPT-5 earns a 4.3 out of 5 from us. It is a strong model that does many things well and a few things best-in-class. The router architecture is a smart engineering decision that needs better execution. The free access for all users is a genuinely pro-consumer move. But in a market where Claude Opus 4.6 exists for the same price point and Gemini 3 Pro offers 2M token context, GPT-5 is no longer the obvious default choice it once was. It is one of three excellent options, and which one is "best" depends entirely on what you are building.

Frequently Asked Questions

Is GPT-5 free to use?

Yes. All ChatGPT users get limited GPT-5 access at no cost. Free-tier users can send a handful of GPT-5 messages per day. The Plus plan ($20/month) raises those limits significantly, and Pro ($200/month) gives you unlimited GPT-5 access. For most casual users, the free tier is enough to get a feel for the model.

When was GPT-5 released?

GPT-5 launched on August 7, 2025. It became available simultaneously through ChatGPT and the OpenAI API. The rollout was immediate for all tiers, with no waitlist or gradual rollout, unlike previous models.

What is the GPT-5 router architecture?

GPT-5 uses a router system that directs queries to three sub-models: gpt-5-main (fast responses), gpt-5-main-mini (lightweight tasks), and gpt-5-thinking (deep reasoning). The router decides which sub-model handles each request based on complexity. This saves compute on simple tasks and reserves heavy processing for complex ones. In practice, the router occasionally misjudges task difficulty, which can lead to inconsistent quality.

Is GPT-5 better than Claude Opus 4.6?

It depends on the task. GPT-5 excels at interactive app creation, multimodal inputs, and agentic browser automation. Claude Opus 4.6 is stronger at long-context analysis, nuanced writing, and complex reasoning chains. For coding, both are competitive — GPT-5 has the edge in quick prototyping while Claude handles large codebase refactoring better. We use both daily for different purposes.

How much energy does GPT-5 use?

Published estimates put GPT-5 at approximately 18 watt-hours per medium-length response. That is roughly equivalent to running a 60-watt laptop for about 18 minutes per query. At individual scale this is negligible, but across hundreds of millions of daily users the aggregate energy footprint is substantial.
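The arithmetic behind these comparisons is simple enough to check directly. In the sketch below, the 18 Wh figure is the one quoted in this review; the 60 W laptop draw and the daily response volume are assumptions chosen only for illustration.

```python
WH_PER_RESPONSE = 18.0  # watt-hours per medium-length response, as quoted in this review


def laptop_minutes(wh: float, laptop_watts: float = 60.0) -> float:
    """Minutes a laptop drawing `laptop_watts` could run on `wh` watt-hours."""
    return wh * 60 / laptop_watts


def daily_mwh(responses_per_day: int, wh_per_response: float = WH_PER_RESPONSE) -> float:
    """Aggregate daily energy in megawatt-hours (1 MWh = 1,000,000 Wh)."""
    return responses_per_day * wh_per_response / 1_000_000


if __name__ == "__main__":
    print(laptop_minutes(WH_PER_RESPONSE))  # 18.0 minutes at an assumed 60 W
    print(daily_mwh(100_000_000))           # 1800.0 MWh for a hypothetical 100M responses/day
```

Under these assumptions, a single response equals about 18 minutes of laptop use, and a hypothetical 100 million responses a day comes to 1,800 MWh.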

Can GPT-5 browse the web and use a computer?

Yes. GPT-5 has agentic capabilities, including autonomous browser use and desktop workflows. It can navigate websites, fill out forms, click through multi-step workflows, and execute tasks across applications. This works through both ChatGPT (with the browsing feature enabled) and the API with tool use. It is not perfect (complex multi-tab workflows sometimes confuse the agent), but for straightforward web tasks it is reliable.

What programming languages does GPT-5 support?

GPT-5 was primarily trained on and shows its strongest performance in Python, C++, TypeScript, and C. It can handle most other popular languages (Java, Go, Rust, Ruby, etc.) competently, but benchmarks and our own testing show the best results in those four core languages. Python and TypeScript in particular see the most consistent output quality.

Was GPT-5 jailbroken?

Yes. GPT-5 was jailbroken on the day of its launch — August 7, 2025. Security researchers found ways to bypass its safety guardrails within hours of the public release. OpenAI acknowledged the issue and patched the most critical exploits within the first week. The incident raised questions about the thoroughness of OpenAI's pre-release red-teaming process, especially for a model positioned as their most advanced.
