How to Run Claude Code From a USB Drive (Free, Portable, No GPU)

AI Infrastructure Lead

⚡ Key Takeaways

- OpenClaude Portable runs a full AI coding agent from any USB drive — no installation, no trace on the host machine

- Works on Windows, Mac, and Linux from the same folder with shared config and chat history

- Supports 6 AI providers including free options (NVIDIA NIM, OpenRouter) and fully offline local models (Ollama)

- Includes a web dashboard UI, session resume, and a speed proxy that cuts first-token latency on local models by up to 90%

- The entire setup takes under 15 minutes and needs only ~150 MB of disk space for the base install

- What Is OpenClaude Portable?

- Why Run an AI Agent From a USB Drive?

- What You Need Before Starting

- Setup on Windows (Step-by-Step)

- Setup on Linux and Mac

- Choosing Your AI Provider (Free Options)

- Running Fully Offline With Ollama

- The Web Dashboard

- OpenClaude vs Official Claude Code

- Security and Privacy

- FAQ

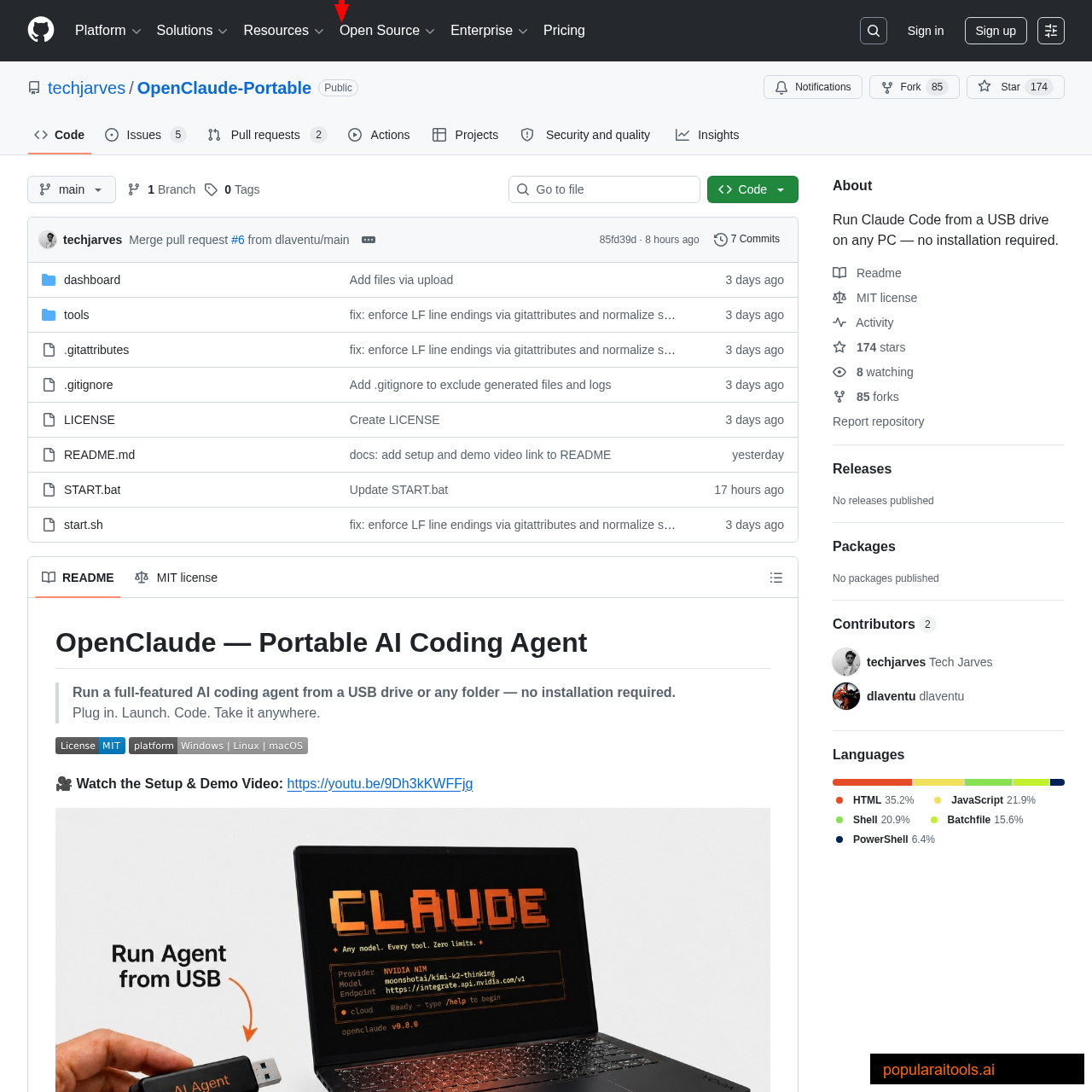

What Is OpenClaude Portable?

We tested something this week that felt almost absurd: running a full Claude Code-style AI coding agent from a USB thumb drive. No installation. No subscription. Plug it into any Windows, Mac, or Linux machine, run one file, and you've got an AI pair programmer ready to write code, run commands, and manage files — all from a device smaller than your house key.

OpenClaude Portable is an open-source project that packages the OpenClaude engine (a community fork of Claude Code) into a self-contained folder with a bundled Node.js runtime, a smart system-prompt proxy, and a web-based dashboard. Everything runs strictly inside the project folder. The host machine never knows you were there.

The project gained immediate traction — the demo video hit 39,000 views in its first day. The appeal is obvious: a portable AI coding agent that costs nothing, requires no GPU, and leaves zero traces. Whether you're a student using lab computers, a consultant working on client machines, or just someone who wants AI assistance without the subscription overhead, this solves a real problem.

Why Run an AI Agent From a USB Drive?

The standard Claude Code setup requires Node.js, npm, a global install, and an Anthropic API key or subscription. That's fine for your personal dev machine. But there are plenty of situations where a traditional installation isn't practical:

- University/library computers — you can't install software, but you can run portable apps from USB

- Client sites and shared workstations — you don't want to leave API keys or config files behind

- Quick coding on borrowed machines — friend's laptop, hotel business center, any machine you don't own

- Privacy-first developers — everything stays on your drive, nothing touches the host OS

- Cost-conscious setup — use free API providers instead of paying for an Anthropic subscription

OpenClaude Portable solves all of these. Same USB, same config, same chat history — on any machine you plug it into. When you unplug, there's no evidence you were there.

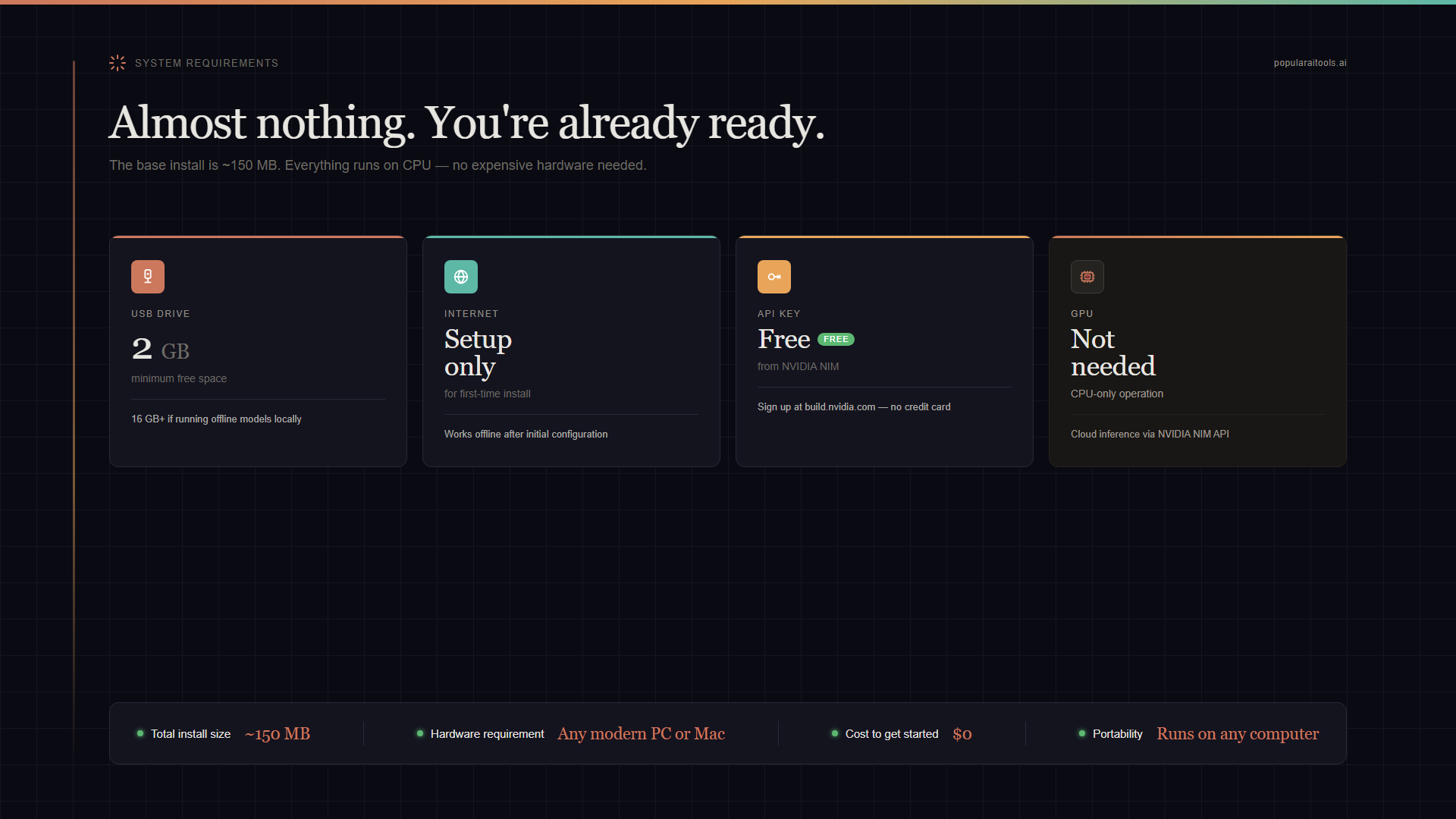

What You Need Before Starting

The requirements are refreshingly minimal:

| Item | Details |
|---|---|
| USB Drive / SSD | Minimum 2 GB free (16 GB+ if you want offline models) |
| Internet | Required for first-time setup + cloud providers. Not needed for Ollama offline mode. |
| API Key (free) | NVIDIA NIM (1,000 free credits/month) or OpenRouter (free tier models) |
| Host OS | Windows 10+, any modern Linux, macOS (all supported from the same folder) |
| GPU | NOT required. Cloud providers handle inference. Only helpful for large local models. |

The base install (Node.js + OpenClaude engine) takes about 150 MB. If you add offline models via Ollama, budget anywhere from about 800 MB for the smallest model (gemma3:1b) to 8 GB for larger ones.

Setup on Windows (Step-by-Step)

We tested this on a fresh Windows 11 machine with nothing pre-installed. The entire process took about 8 minutes.

Step 1: Download the Repository

Go to the OpenClaude Portable GitHub repo and download the ZIP. Extract it to your USB drive. You should see a folder structure with START.bat at the root.

Step 2: Run START.bat

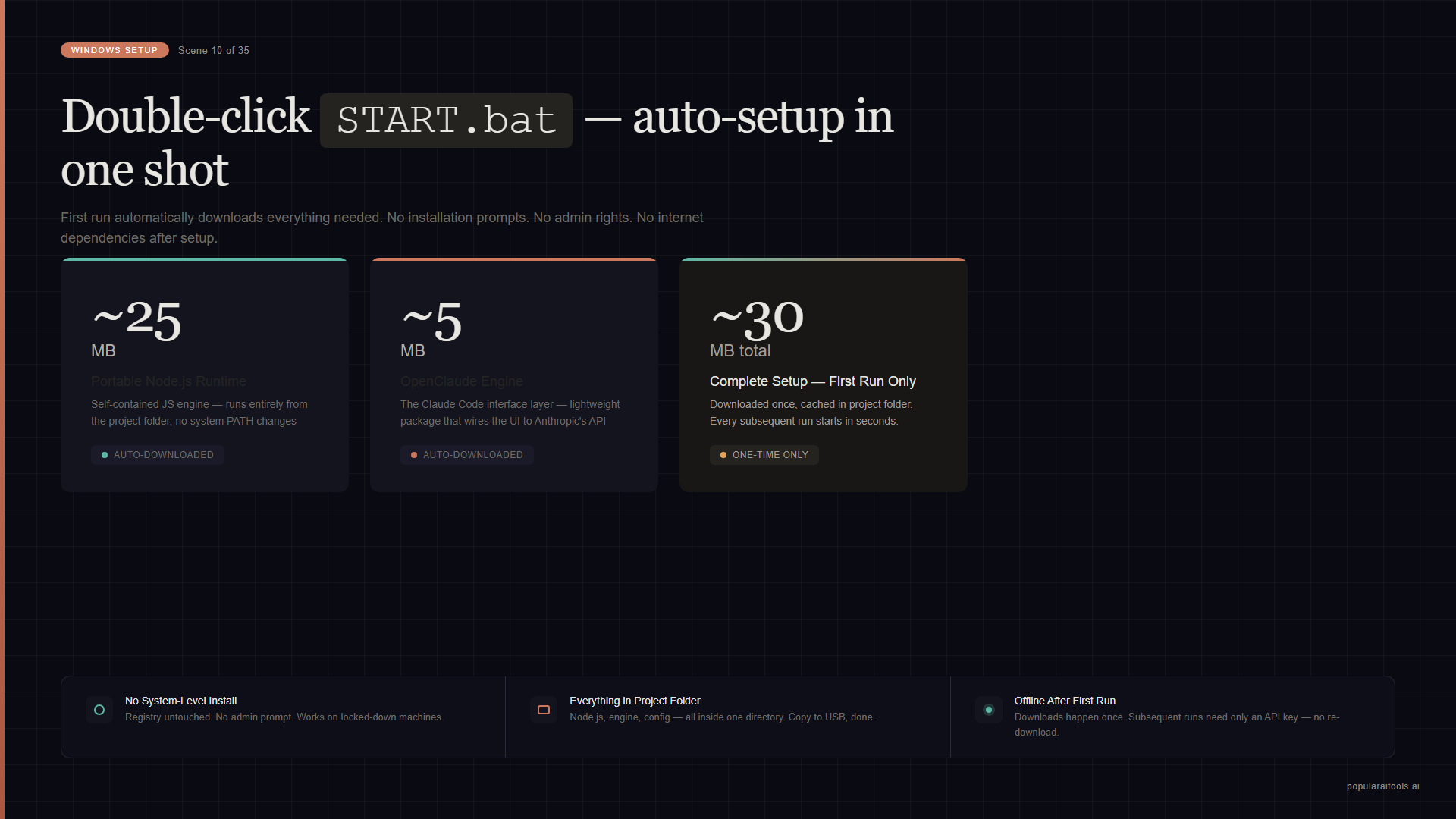

Double-click START.bat. On the first run, it automatically downloads a portable Node.js runtime (~25 MB) and the OpenClaude engine (~5 MB). No system-level install happens — everything goes into the project folder on your USB.

E:\OpenClaude-Multi-Platform> .\START.bat

[+] Downloading Node.js portable... Done (25 MB)

[+] Installing OpenClaude engine... Done (5 MB)

=== OpenClaude Portable ===

1) Launch AI — Normal Mode

2) Limitless Mode — Auto-executes

3) Open Dashboard — Web UI at http://localhost:3000

4) Change Provider — Switch model or API key

5) Setup Offline — Download local Ollama models

Select [1-5]:

Step 3: Choose Your Provider

On first run, it walks you through provider selection. Pick NVIDIA NIM for the easiest free setup (we'll cover all options in the providers section below). Paste your API key when prompted — it's stored only in data/ai_settings.env on your USB.
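The article doesn't describe the exact format of data/ai_settings.env. Files with a .env extension conventionally hold one KEY=VALUE pair per line, so here is a minimal Python sketch that reads such a file straight from the drive, assuming that convention. The key names used in any example (PROVIDER, API_KEY) are hypothetical, not OpenClaude's actual keys.

```python
from pathlib import Path
from typing import Dict


def parse_env_text(text: str) -> Dict[str, str]:
    """Parse KEY=VALUE lines (assumed .env convention) into a dict.

    Blank lines, '#' comments and lines without '=' are skipped;
    one pair of matching surrounding quotes is stripped from values.
    """
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")  # split on the first '=' only
        value = value.strip()
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        settings[key.strip()] = value
    return settings


def load_settings(drive_root: Path) -> Dict[str, str]:
    """Read data/ai_settings.env relative to the drive folder, not the host."""
    env_file = drive_root / "data" / "ai_settings.env"
    return parse_env_text(env_file.read_text(encoding="utf-8"))
```

Returning a plain dict, rather than exporting the values into the host's environment, keeps the key inside the one process that needs it.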

Step 4: Start Coding

Select option 1 (Normal Mode) and you're in. The agent launches in your terminal with full coding capabilities — file creation, editing, bash commands, and multi-step reasoning. If you close mid-session, use RESUME.bat <session-id> to pick up where you left off.
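The menu separates Normal Mode from Limitless Mode, which auto-executes, and Normal Mode asks before any file write or shell command. As a hypothetical sketch of that permission gate (not OpenClaude's code; the Action type and its kind values are made-up names), the policy fits in a few lines of Python:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    kind: str    # hypothetical action type, e.g. "write_file" or "run_shell"
    detail: str  # the path or command shown to the user before it runs


def may_run(action: Action, limitless: bool, approve: Callable[[Action], bool]) -> bool:
    """Limitless Mode runs everything; Normal Mode defers to the approver."""
    if limitless:
        return True
    return approve(action)


def ask_in_terminal(action: Action) -> bool:
    """Approver for Normal Mode: a y/N prompt that defaults to 'no'."""
    answer = input(f"Allow {action.kind}: {action.detail}? [y/N] ")
    return answer.strip().lower() in ("y", "yes")
```

A call such as `may_run(Action("run_shell", "npm test"), limitless=False, approve=ask_in_terminal)` prompts before running. Passing the approver in as a function keeps the policy testable without a real terminal.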

Setup on Linux and Mac

The same USB folder works on all three platforms. On Linux or macOS, use the shell script instead of the batch file:

chmod +x start.sh

./start.sh

The only system dependency is curl, which is pre-installed on virtually every modern Linux distro and macOS. The script handles the rest, downloading the appropriate Node.js binary for your architecture and setting up the engine.
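The logic inside start.sh isn't shown in the article. As a hedged illustration of what "the appropriate Node.js binary for your architecture" means, this Python sketch maps the detected OS and CPU onto the file names published under nodejs.org/dist. The version number is a placeholder, not the one OpenClaude pins, and the real script may pick a different archive type.

```python
import platform
from typing import Optional

# nodejs.org/dist naming: node-v<version>-<os>-<arch>.<archive>
OS_ARCHIVE = {"Windows": ("win", "zip"), "Linux": ("linux", "tar.xz"), "Darwin": ("darwin", "tar.gz")}
# platform.machine() reports e.g. "x86_64" (Linux/macOS), "AMD64" (Windows), "arm64"/"aarch64" (ARM)
ARCH = {"x86_64": "x64", "amd64": "x64", "arm64": "arm64", "aarch64": "arm64"}


def node_download_url(version: str, system: Optional[str] = None, machine: Optional[str] = None) -> str:
    """Build the nodejs.org download URL for this (or the given) OS and CPU."""
    system = system or platform.system()
    machine = (machine or platform.machine()).lower()
    if system not in OS_ARCHIVE or machine not in ARCH:
        raise ValueError(f"unsupported platform: {system}/{machine}")
    os_name, archive = OS_ARCHIVE[system]
    filename = f"node-v{version}-{os_name}-{ARCH[machine]}.{archive}"
    return f"https://nodejs.org/dist/v{version}/{filename}"
```

A shell script would then fetch that URL with curl and unpack it into the project folder instead of installing Node.js system-wide.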

The data/ folder is shared across platforms. This means you can set up on Windows, plug the same USB into a Linux machine, and your chat history, provider config, and session data are all there. No re-configuration needed.

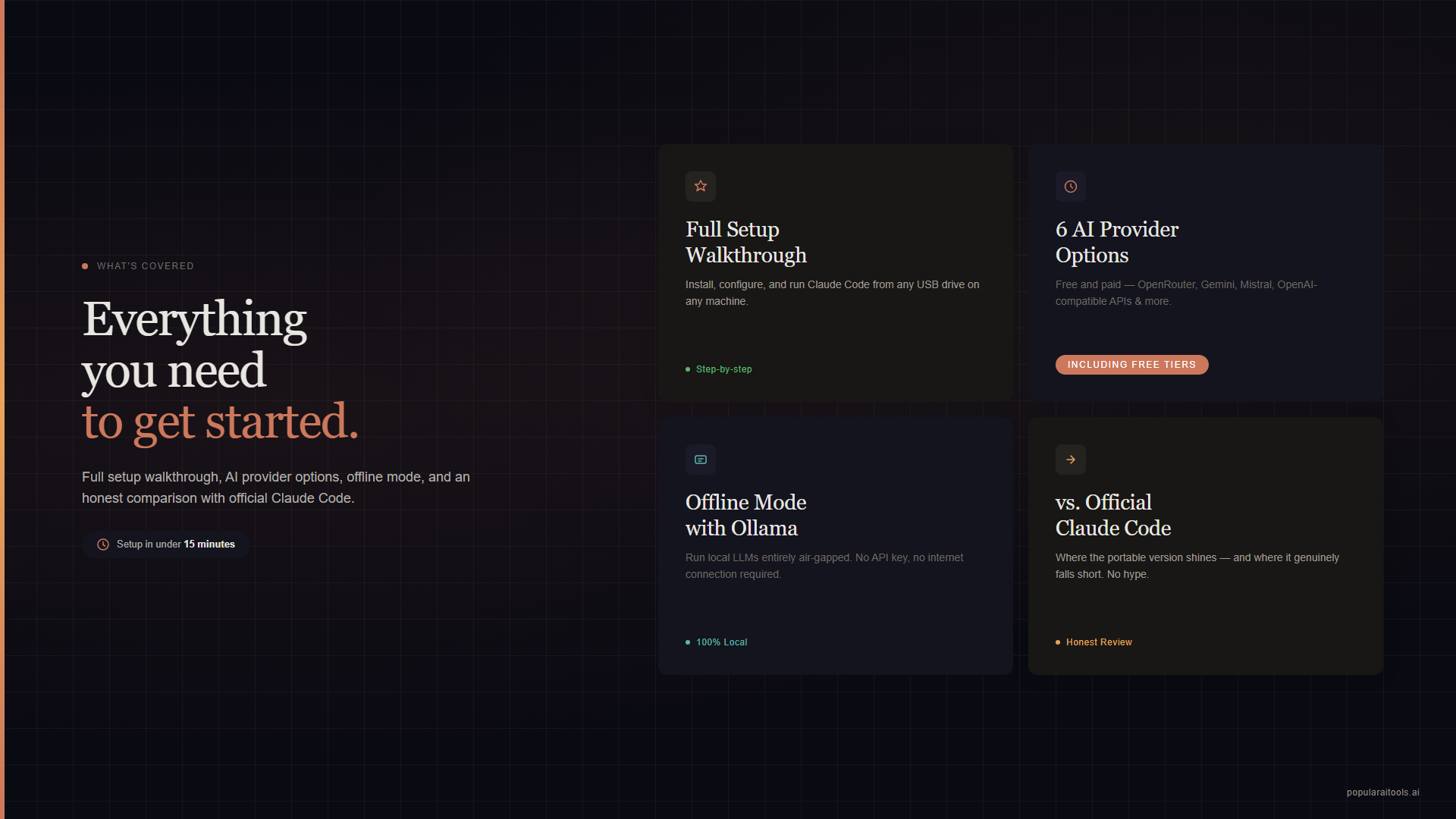

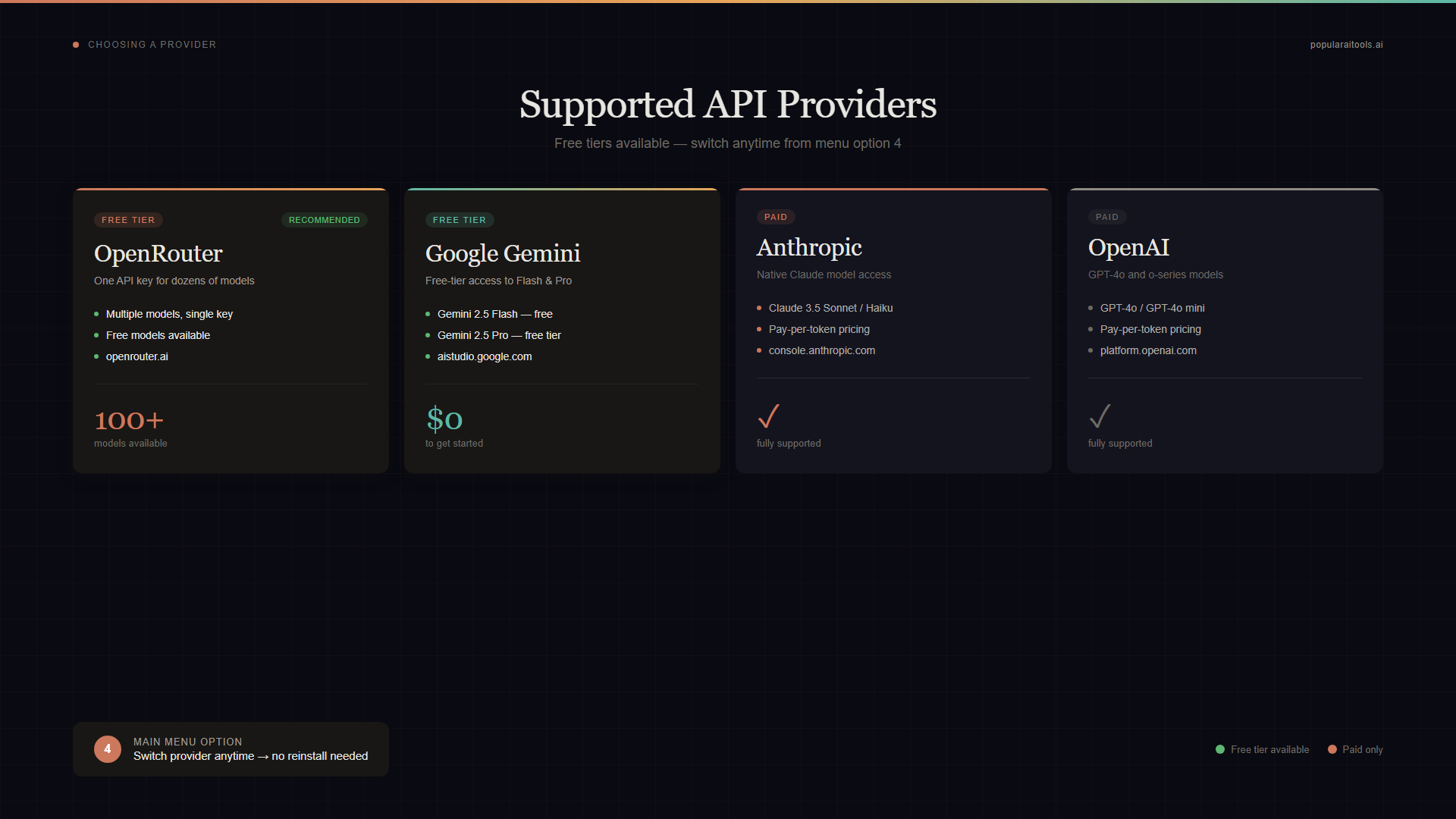

Choosing Your AI Provider (Free Options)

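OpenClaude Portable supports 6 AI providers, four of which can be used for free. NVIDIA NIM and OpenRouter are the two best free options; Google Gemini and Ollama round out the list.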

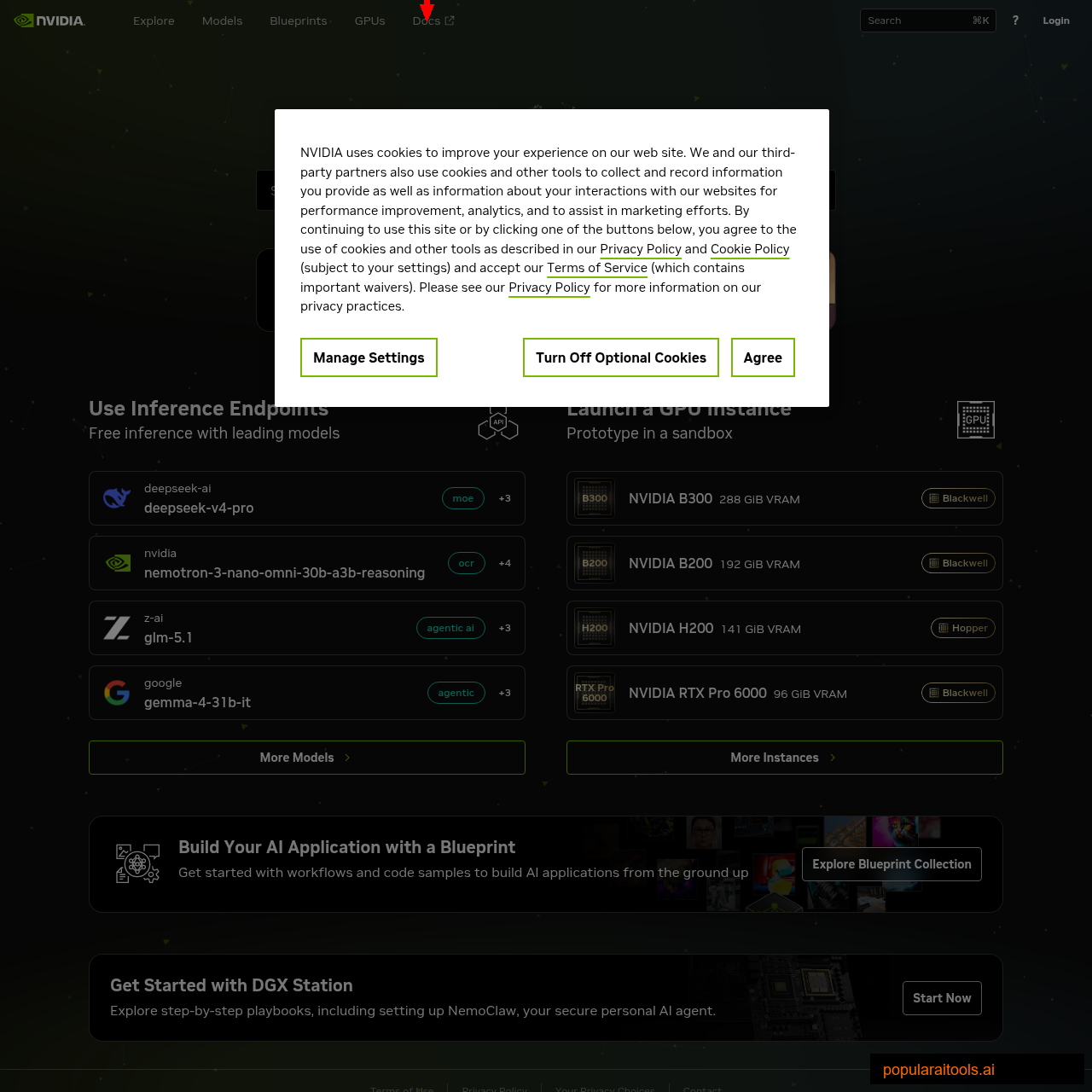

NVIDIA NIM (Recommended Free)

1,000 free credits per month. Sign up at build.nvidia.com, generate an API key, and paste it into OpenClaude. You get free access to models such as GLM-4 and Nemotron. No credit card required.

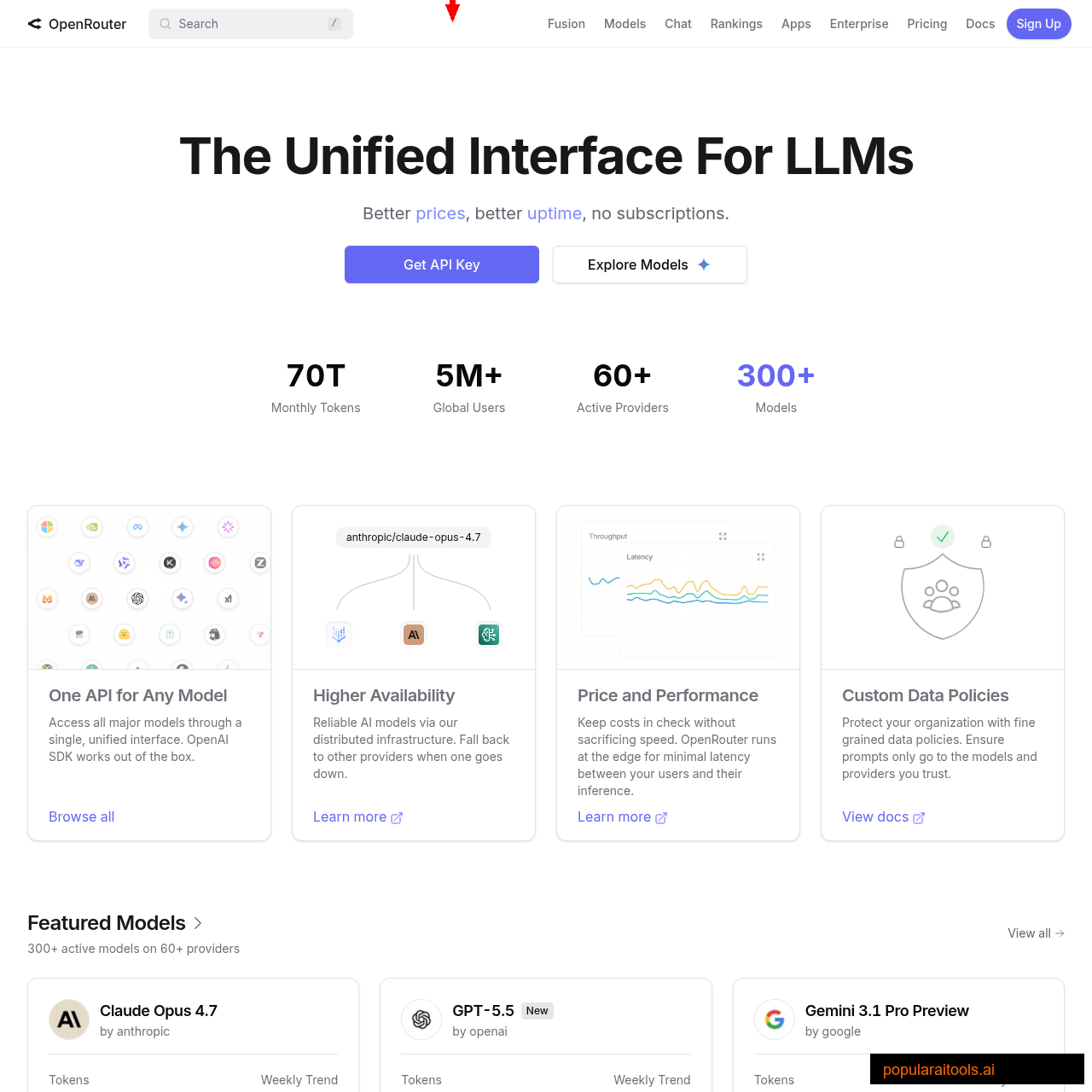

OpenRouter (Free Tier)

Access multiple models through one API key. Free tier includes smaller models with rate limits. Sign up at openrouter.ai. Good for experimenting with different models.

Google Gemini (Free Tier)

Free API tier available through aistudio.google.com. Good rate limits for personal use. Supports Gemini 2.5 Flash and Pro models.

Ollama (Fully Offline)

Run models entirely on your hardware with zero internet. Free forever. We cover this in detail in the next section.

The paid providers (Anthropic Claude, OpenAI) also work if you have existing API keys. You can switch providers at any time by using option 4 in the main menu or by running Change_Provider.bat.
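Several of these providers expose an OpenAI-compatible chat-completions endpoint, which is one plausible reason switching between them can be a menu option. The base URLs in this Python sketch are the providers' documented OpenAI-compatible endpoints; how OpenClaude actually maps its menu choices onto them is an assumption. Anthropic's own API uses a different Messages format and headers, so it is left out.

```python
import json
import urllib.request
from typing import Optional

# Documented OpenAI-compatible base URLs (OpenClaude's internal mapping is assumed).
BASE_URLS = {
    "nvidia": "https://integrate.api.nvidia.com/v1",
    "openrouter": "https://openrouter.ai/api/v1",
    "gemini": "https://generativelanguage.googleapis.com/v1beta/openai/",
    "openai": "https://api.openai.com/v1",
    "ollama": "http://localhost:11434/v1",
}


def chat_request(provider: str, model: str, prompt: str,
                 api_key: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) a chat-completions POST for the chosen provider."""
    url = BASE_URLS[provider].rstrip("/") + "/chat/completions"
    headers = {"Content-Type": "application/json"}
    if api_key:  # a local Ollama server needs no key
        headers["Authorization"] = f"Bearer {api_key}"
    body = json.dumps({"model": model, "messages": [{"role": "user", "content": prompt}]})
    return urllib.request.Request(url, data=body.encode("utf-8"), headers=headers, method="POST")
```

Sending is a single `urllib.request.urlopen(req)`. With this shape, changing provider only changes the base URL, the model name, and the key.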

Running Fully Offline With Ollama

This is where things get interesting. Select option 5 (Setup Offline) from the main menu, and OpenClaude downloads a portable Ollama binary plus your chosen model directly to the USB drive. After the initial download, you never need internet again.
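The article doesn't say how OpenClaude keeps the downloaded models on the drive. One documented mechanism in Ollama itself is the OLLAMA_MODELS environment variable, which the Ollama server reads to decide where models live. This Python sketch assumes a hypothetical data/ollama/ layout holding the binary plus a models/ subfolder.

```python
import os
from pathlib import Path
from typing import Dict, List, Tuple


def ollama_commands(drive_root: Path, model: str) -> Tuple[List[str], List[str], Dict[str, str]]:
    """Return `serve` and `pull` command lines plus an env that keeps models on the drive."""
    ollama_dir = drive_root / "data" / "ollama"  # hypothetical layout
    binary = ollama_dir / ("ollama.exe" if os.name == "nt" else "ollama")
    env = dict(os.environ)  # copy; the host's environment is not modified
    env["OLLAMA_MODELS"] = str(ollama_dir / "models")  # read by the `ollama serve` process
    serve = [str(binary), "serve"]
    pull = [str(binary), "pull", model]
    return serve, pull, env
```

To use it, start the serve command in the background with that environment (for example via `subprocess.Popen(serve, env=env)`), wait until the server answers on its default port 11434, then run the pull command once. The variable matters for the server process, because that is where models get written.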

The built-in speed proxy is what makes this actually usable. OpenClaude's system prompt is roughly 10,000 tokens — way too much for a small model running on CPU. The proxy intercepts every request and trims that down to ~300 tokens before it reaches Ollama. The result: first-token latency drops from 60–120 seconds to 5–20 seconds on CPU-only hardware.
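Going from ~10,000 to ~300 system-prompt tokens means about 97% fewer tokens the CPU must process before the first output token, which is where the latency win comes from. The actual local-proxy.js isn't reproduced here; this is a hypothetical Python sketch of the rewriting step only, handling both an Anthropic-style top-level "system" field and OpenAI-style system messages. The replacement prompt text is made up.

```python
import copy
from typing import Any, Dict

# Hypothetical short prompt; the proxy's real ~300-token prompt is not shown in the article.
SHORT_PROMPT = "You are a coding assistant. Answer briefly and show code when useful."


def trim_system_prompt(payload: Dict[str, Any], short_prompt: str = SHORT_PROMPT) -> Dict[str, Any]:
    """Return a copy of a chat request with the long system prompt swapped out.

    Handles a top-level "system" field (Anthropic Messages style) and
    role="system" messages (OpenAI chat style); the input is not modified.
    """
    out = copy.deepcopy(payload)
    messages = [m for m in out.get("messages", []) if m.get("role") != "system"]
    if "system" in out:
        out["system"] = short_prompt
    else:
        messages.insert(0, {"role": "system", "content": short_prompt})
    out["messages"] = messages
    return out
```

The trade-off is that whatever the long prompt described (for example, tool-use instructions) is no longer sent, so the short prompt has to carry everything the small model still needs.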

| Model | Size | Speed | Best For |
|---|---|---|---|
| gemma3:1b | ~800 MB | Fastest | Quick tasks, simple code generation |
| qwen2.5:1.5b | ~1 GB | Fast | General coding, good instruction following |
| phi3:mini | ~2.3 GB | Moderate | Complex reasoning, multi-step tasks |
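When free space on the drive is the deciding factor, the sizes in the table are enough to choose a model. This Python sketch uses those approximate sizes; the 500 MB of headroom left free is an arbitrary assumption, and capability (the "Best For" column) may matter more in practice.

```python
import shutil
from typing import Optional

# Approximate on-disk sizes from the table above, smallest first (MB).
MODELS = [("gemma3:1b", 800), ("qwen2.5:1.5b", 1000), ("phi3:mini", 2300)]


def largest_model_that_fits(free_mb: float, headroom_mb: float = 500) -> Optional[str]:
    """Pick the biggest model from the table that still leaves `headroom_mb` free."""
    best = None
    for name, size_mb in MODELS:
        if size_mb + headroom_mb <= free_mb:
            best = name
    return best


def free_mb_on(path: str) -> float:
    """Free space on the volume containing `path`, in MB (10**6 bytes)."""
    return shutil.disk_usage(path).free / 1_000_000
```

On Windows, `largest_model_that_fits(free_mb_on("E:/"))` would check the USB drive from the earlier example.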

Performance tip: If your USB drive is USB 2.0 and model inference feels sluggish, copy the data/ollama/ folder to the host machine's SSD temporarily. The bottleneck is usually disk read speed, not CPU.
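The disk-speed point is easy to check with arithmetic on the model load step. The read speeds below are rough assumptions (USB 2.0 signals at 480 Mbit/s, or 60 MB/s at most, and flash drives usually read slower than that), so treat the results as orders of magnitude. At 35 MB/s, the 2.3 GB phi3:mini takes about 66 seconds just to read; at 500 MB/s, about 4.6 seconds.

```python
# Assumed sustained read speeds in MB/s; real drives vary widely.
READ_SPEEDS = {"USB 2.0 flash drive": 35, "USB 3.x flash drive": 150, "SATA SSD": 500}
# Approximate model sizes from the table above, in MB.
MODEL_SIZES_MB = {"gemma3:1b": 800, "qwen2.5:1.5b": 1000, "phi3:mini": 2300}


def load_seconds(size_mb: float, read_mb_per_s: float) -> float:
    """Time to read a model file once, ignoring OS file caching."""
    return size_mb / read_mb_per_s


if __name__ == "__main__":
    for model, size in MODEL_SIZES_MB.items():
        row = ", ".join(f"{drive}: {load_seconds(size, speed):.0f} s"
                        for drive, speed in READ_SPEEDS.items())
        print(f"{model} -> {row}")
```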

The Web Dashboard

OpenClaude Portable isn't just a terminal tool. Select option 3 from the menu (or run Open_Dashboard.bat) and it launches a browser-based UI at http://localhost:3000.
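The article doesn't say how Open_Dashboard.bat decides the UI is ready. A common pattern is to poll the port and open the browser only once the server answers. Here is a Python sketch of that pattern; the 30-second wait is an arbitrary choice, and nothing here is taken from OpenClaude's scripts.

```python
import socket
import time
import webbrowser


def port_is_open(host: str, port: int, timeout: float = 0.5) -> bool:
    """True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def open_dashboard_when_ready(url: str = "http://localhost:3000", port: int = 3000,
                              wait_s: float = 30.0) -> bool:
    """Poll the dashboard port, then open the browser; False if it never came up."""
    deadline = time.monotonic() + wait_s
    while time.monotonic() < deadline:
        if port_is_open("localhost", port):
            webbrowser.open(url)
            return True
        time.sleep(0.5)
    return False
```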

The dashboard gives you a ChatGPT-style interface with:

- Agent mode with tool cards showing what the AI is doing

- Thinking visualization — see the model's reasoning process

- File browser integration for navigating project structures

- Chat history that persists between sessions on the USB

For people who find terminal-based AI tools intimidating, this changes the equation entirely. You get the power of a coding agent in a familiar chat interface — no command line knowledge required.

OpenClaude vs Official Claude Code

Let's be clear about what you're getting and what you're giving up:

| Feature | OpenClaude Portable | Official Claude Code |
|---|---|---|
| Installation | None (portable) | Node.js + npm global |
| Cost | Free (with free providers) | $20/mo Pro, $100+/mo Max, or API credits |
| AI Providers | 6 (NVIDIA, OpenRouter, Gemini, etc.) | Anthropic only |
| Offline Mode | ✓ (Ollama) | ✗ |
| Portability | USB/SSD, cross-platform | Requires local install |
| Web Dashboard | ✓ (localhost:3000) | Terminal + IDE extensions (VS Code, JetBrains) |
| Model Quality | Varies by provider | Claude Opus/Sonnet (best-in-class) |
| MCP Support | Basic | Full ecosystem |

Our take: If you're doing serious production work and can afford it, official Claude Code with Opus is still the gold standard for code quality. But if you want a free, portable, zero-commitment way to get AI coding assistance — especially on machines you don't control — OpenClaude Portable is genuinely impressive for the price (free).

If you're already invested in the Claude Code ecosystem with custom skills, hooks, and MCP servers, the official version is where you'll get the most value. OpenClaude Portable is better suited as a secondary portable tool or a way to experiment without financial commitment.

Security and Privacy

The zero-footprint design deserves a closer look. OpenClaude Portable redirects all standard config directories (XDG_CONFIG_HOME, XDG_DATA_HOME, CLAUDE_CONFIG_DIR) to the data/ folder on your drive. This means:

- API keys are stored only in data/ai_settings.env on your USB, never in the host's environment

- Chat history and sessions live in data/openclaude/ and travel with the drive

- No telemetry: nothing is sent anywhere except your chosen AI provider

- Normal Mode asks before any file write or shell command, so you approve every action
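Redirecting those directories amounts to launching the engine with a modified copy of the environment. This Python sketch shows the idea; the variable names come from the article, but the config/ and share/ subfolder names are hypothetical. Only programs that read these variables are affected: CLAUDE_CONFIG_DIR is read by Claude Code, while the XDG variables are a freedesktop.org convention honored mainly on Linux.

```python
import os
from pathlib import Path
from typing import Dict, Mapping, Optional


def portable_env(drive_root: Path, base: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Copy the current environment and point config/data dirs at <drive>/data."""
    data = drive_root.resolve() / "data"
    env = dict(os.environ if base is None else base)  # os.environ itself is left untouched
    env["XDG_CONFIG_HOME"] = str(data / "config")     # hypothetical subfolder name
    env["XDG_DATA_HOME"] = str(data / "share")        # hypothetical subfolder name
    env["CLAUDE_CONFIG_DIR"] = str(data / "openclaude")
    return env
```

The returned dict would be passed as `env=` to `subprocess.run` or `subprocess.Popen` when starting the bundled Node.js. Because only the child process sees it, the host's own settings are never rewritten.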

Security note: This is an open-source community project, not officially affiliated with Anthropic. Always review code before running it, especially scripts from GitHub. The MIT license means no warranty. If you're handling sensitive code, inspect the START.bat and local-proxy.js source before trusting it with your API keys.

Frequently Asked Questions
