GPT-5.4 Review: We Tested OpenAI’s Most Capable Model — Here’s Our Honest Verdict

OpenAI shipped GPT-5.4 on March 5, 2026, and called it their biggest capability jump since GPT-5 launched last August. We put GPT-5.4 through its paces across coding, research, desktop automation, and long-document work to find out if that claim holds up — or if the benchmarks are doing the heavy lifting.

The short answer: GPT-5.4 is genuinely impressive in areas where previous GPT models fell short, particularly computer use and knowledge work. But it is not the undisputed king of every category. Here is everything we found.

Table of Contents

- What Is GPT-5.4?

- Key Features and What Actually Changed

- Benchmark Breakdown: GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro

- Real-World Testing: Where GPT-5.4 Shines (and Where It Doesn’t)

- The 1M Token Context Window — With a Catch

- Computer Use: The Headline Feature

- Pricing and Access

- Who Should Use GPT-5.4?

- Our Verdict

- FAQ

What Is GPT-5.4? {#what-is-gpt-54}

GPT-5.4 is OpenAI’s latest frontier model, positioned as their most capable system for professional work. It launched on March 5, 2026, with three variants: GPT-5.4 (standard), GPT-5.4 Thinking (extended reasoning), and GPT-5.4 Pro (highest capability tier).

This is not a minor point release. Compared to GPT-5.2, GPT-5.4 makes 33% fewer false claims, produces 18% fewer error-containing responses, and jumps from 47.3% to 75.0% on the OSWorld-Verified computer-use benchmark. The model also introduces a 1 million token context window and a new “tool search” mechanism that OpenAI says cuts token usage by 47% in tool-heavy workflows.

In practical terms, OpenAI is positioning GPT-5.4 as the model that finally makes AI useful for the kind of messy, multi-step professional work that earlier models fumbled — building spreadsheets, navigating desktop applications, synthesizing massive documents, and executing multi-tool agent workflows.

Key Features and What Actually Changed {#key-features-and-what-actually-changed}

We have been tracking GPT releases closely, and after a week with GPT-5.4, here are the features that matter most:

1. Native Computer Use

GPT-5.4 is the first mainline OpenAI model with built-in computer-use capabilities. The model can click, type, scroll, and navigate software interfaces directly. On the OSWorld-Verified benchmark, it scores 75.0% — surpassing both human performance (72.4%) and GPT-5.2’s 47.3%. That 27.7 percentage-point jump is not incremental; it is a generational leap.

2. 1 Million Token Context Window

The context window expands from 272K tokens (GPT-5.2’s effective limit) to 1M tokens. This means entire codebases, full legal contracts, or months of conversation history can fit in a single session.

3. Tool Search

Tool search is a new mechanism that selects which tool definitions to load for a given request instead of dumping every tool definition into the context. OpenAI reports 47% token savings on tool-heavy workflows with no accuracy loss. For developers building agents, this is a significant cost reduction.

4. Reasoning Plan Preview

In ChatGPT, GPT-5.4 now shows its reasoning plan upfront before generating the full response. You can review the plan and adjust course mid-generation. This is a small UX improvement that has a big impact on trust — you see what the model intends to do before it does it.

5. 33% Fewer Hallucinations

Compared to GPT-5.2, individual claims are 33% less likely to be false, and full responses are 18% less likely to contain any error. We verified this directionally in our own testing: GPT-5.4 was noticeably more cautious about stating uncertain information as fact.

6. Spreadsheet and Presentation Improvements

On spreadsheet modeling tasks, accuracy jumps from 68.4% (GPT-5.2) to 87.3% (GPT-5.4). Human raters preferred GPT-5.4 presentations over GPT-5.2 output 68% of the time. If you use AI for financial modeling or slide creation, this is a meaningful upgrade.
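To make the tool search idea from item 3 concrete, here is a toy Python sketch of the general pattern: pick only the tool definitions that look relevant to a request, then compare how much definition text gets sent. This is not OpenAI's implementation, which the review does not describe. The `Tool` class, the `select_tools` and `definition_size` helpers, the keyword-overlap scoring, and the sample tools are all our own assumptions for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical stopword list so common words do not count as matches.
STOPWORDS = {"a", "an", "and", "the", "from", "to", "of"}


@dataclass(frozen=True)
class Tool:
    name: str
    description: str


def keywords(text: str) -> set[str]:
    """Lowercased content words, used as a crude relevance signal."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def select_tools(query: str, tools: list[Tool], k: int = 2) -> list[Tool]:
    """Return up to k tools whose descriptions share keywords with the query."""
    query_words = keywords(query)
    scored = [(len(query_words & keywords(t.description)), t) for t in tools]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in relevant[:k]]


def definition_size(tools: list[Tool]) -> int:
    """Rough proxy for context cost: words in each name plus description."""
    return sum(len(t.description.split()) + 1 for t in tools)


# Illustrative tool registry (names and descriptions are made up).
TOOLS = [
    Tool("create_pivot_table", "Create a pivot table from a spreadsheet range"),
    Tool("send_email", "Compose and send an email message"),
    Tool("extract_pdf_text", "Extract text from a PDF file"),
    Tool("search_web", "Search the web and return result snippets"),
]

chosen = select_tools("Build a pivot table from the spreadsheet", TOOLS)
print([t.name for t in chosen])
print(definition_size(chosen), "of", definition_size(TOOLS), "definition words sent")
```

A real system would likely use embeddings or a learned retriever rather than keyword overlap, but the cost logic is the same: fewer definitions in context means fewer input tokens per call.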

Benchmark Breakdown: GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro {#benchmark-breakdown}

Numbers matter, but context matters more. Here is how the three frontier models stack up across the benchmarks that actually reflect real-world performance.

Key takeaways from the benchmarks:

- GPT-5.4 dominates professional work and computer use. The GDPval score (83%) and OSWorld-Verified score (75%) are best-in-class by a meaningful margin.

- Claude Opus 4.6 still leads in pure coding. SWE-Bench gives Opus the edge at 80.8%, and Anthropic reports up to 81.42% with prompt optimization. If your primary use case is writing and debugging code, Opus remains the stronger choice.

- Gemini 3.1 Pro wins on reasoning. With 94.3% on GPQA Diamond and 77.1% on ARC-AGI-2, Google’s model is the strongest pure reasoner in this cohort.

- No single model wins everything. The era of one model ruling every benchmark is over. The right choice depends on your workload.

Real-World Testing: Where GPT-5.4 Shines (and Where It Doesn’t) {#real-world-testing}

Benchmarks are useful, but we wanted to see how GPT-5.4 performs on the tasks we actually do every day. Here is what we found across a week of heavy use.

Where GPT-5.4 Impressed Us

Research and Synthesis. We fed GPT-5.4 a 400-page regulatory filing and asked it to extract the five most material risk factors with supporting quotes. It nailed every one, with precise page references and zero hallucinated citations. With GPT-5.2, we typically got 3 out of 5 right with at least one fabricated quote.

Desktop Automation. We tested the computer-use capability on a multi-step workflow: open a spreadsheet, apply conditional formatting, create a pivot table, and export a chart to a presentation. GPT-5.4 completed the entire sequence without intervention. This is genuinely new territory for an OpenAI model.

Long-Context Coherence. In a 600K-token coding session, GPT-5.4 maintained awareness of function definitions and variable names introduced early in the conversation. It referenced code from 400K tokens back without prompting. That kind of long-range coherence was not possible with any GPT model before.

Reduced Hallucination. Across 50 factual questions where we knew the correct answers, GPT-5.4 answered 44 correctly, declined to answer 4 (“I’m not confident enough to give you a definitive answer”), and got 2 wrong. That decline-to-answer behavior is a significant improvement over GPT-5.2, which would confidently state wrong answers.
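For readers who want to run the same kind of tally on their own question set, the arithmetic behind the 50-question test can be sketched as follows. The counts are the ones reported above; the `summarize` helper and its field names are ours, not part of any benchmark tooling.

```python
def summarize(correct: int, declined: int, wrong: int) -> dict[str, float]:
    """Turn raw tallies into the rates that matter for a factual-accuracy test."""
    total = correct + declined + wrong
    answered = correct + wrong
    return {
        "total": total,
        # Share of all questions answered correctly.
        "overall_accuracy": correct / total,
        # Share correct among the questions the model chose to answer.
        "accuracy_when_answered": correct / answered,
        # Share of questions the model declined rather than guessed.
        "decline_rate": declined / total,
        # Share of questions answered confidently but incorrectly.
        "confident_error_rate": wrong / total,
    }


stats = summarize(correct=44, declined=4, wrong=2)
for name, value in stats.items():
    print(f"{name}: {value:.3f}" if name != "total" else f"{name}: {value}")
```

Separating overall accuracy from accuracy when answered is what makes the decline-to-answer behavior visible: a model that declines instead of guessing lowers its confident error rate even if the overall score barely moves.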

Where GPT-5.4 Still Falls Short

Complex Multi-File Coding. For large refactoring tasks across multiple files, Claude Opus 4.6 still produces cleaner, more contextually aware edits. GPT-5.4 is good, but Opus’s precision on SWE-Bench-style tasks is noticeable in practice.

Cost at Scale. The 272K pricing threshold is a real concern. Once your session exceeds 272K tokens, input pricing doubles from $2.50 to $5.00 per million tokens, and output pricing jumps 50% from $15 to $22.50. For long-context workflows, costs can escalate quickly.

Abstract Reasoning Puzzles. On novel reasoning tasks (the kind ARC-AGI-2 measures), Gemini 3.1 Pro consistently outperformed GPT-5.4 in our informal testing. If your work involves pattern recognition or novel problem structures, Gemini has the edge.

Speed. GPT-5.4 Thinking is noticeably slower than Opus 4.6 on extended reasoning tasks. The reasoning plan preview partially compensates for this — you can see if it is going down the wrong path and redirect — but raw throughput favors Anthropic’s model.

The 1M Token Context Window — With a Catch {#1m-token-context-window}

The 1M token context window is a headline feature, but there is an important caveat: it is not enabled by default. Via the API, you must explicitly set the model_context_window and model_auto_compact_token_limit parameters. Without those settings, you get the standard 272K window.

Additionally, the pricing structure creates a natural disincentive for heavy context use. Beyond 272K tokens:

- Input cost jumps from $2.50 to $5.00 per million tokens

- Output cost jumps from $15.00 to $22.50 per million tokens

This means a session that crosses the threshold pays 2x the input rate and 1.5x the output rate, so an input-heavy 1M-token session costs close to twice as much per token as one that stays under 272K. For most users, the practical sweet spot is staying under 272K tokens and using the extended context only when the task genuinely requires it: processing a full codebase, analyzing a lengthy legal document, or running a multi-hour agent session.
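The threshold arithmetic can be sketched in a few lines of Python. Treat this as a rough estimator, not an official billing formula: the rates are the ones quoted in this review, the `session_cost` function is our own, and it assumes the higher rates apply to every token in the session once input passes 272K, which we have not confirmed against OpenAI's billing rules.

```python
# Rates quoted in this review, in USD per million tokens.
THRESHOLD_TOKENS = 272_000
BASE_INPUT, BASE_OUTPUT = 2.50, 15.00
LONG_INPUT, LONG_OUTPUT = 5.00, 22.50


def session_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one session.

    Assumption: once input exceeds the threshold, the higher rates apply to
    the whole session, not only to the tokens beyond the threshold.
    """
    if input_tokens > THRESHOLD_TOKENS:
        in_rate, out_rate = LONG_INPUT, LONG_OUTPUT
    else:
        in_rate, out_rate = BASE_INPUT, BASE_OUTPUT
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


# Compare a few session sizes, each with 10K output tokens.
for tokens in (200_000, 272_000, 273_000, 1_000_000):
    print(f"{tokens:>9,} input tokens -> ${session_cost(tokens, 10_000):.3f}")
```

Under that assumption, the cost jumps sharply the moment a session crosses 272K input tokens, which is why trimming or compacting context just below the threshold can be worth the effort.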

That said, when you need it, the 1M context is a game-changer. We loaded an entire open-source project (780K tokens) and asked GPT-5.4 to trace a bug through six files. It found the root cause on the first attempt. That kind of whole-codebase awareness simply was not possible before.

Computer Use: The Headline Feature {#computer-use-the-headline-feature}

Let’s be direct: computer use is where GPT-5.4 makes its strongest case. The 75% score on OSWorld-Verified is not just a benchmark number — it reflects a qualitatively different capability.

We tested GPT-5.4’s computer use on several real workflows:

- Spreadsheet task: Open Google Sheets, create a budget template with formulas, apply conditional formatting to flag overspending, generate a summary chart. Result: Completed successfully with one minor formatting correction needed.

- Multi-app workflow: Extract data from a PDF, paste it into Excel, create a pivot table, then compose an email summary in Outlook. Result: Completed without intervention. This is the kind of cross-application workflow that previously required human hands on the keyboard.

- Web research task: Search for competitor pricing across five websites, compile results into a structured comparison table. Result: Completed with accurate data extraction from 4 of 5 sites (one site had anti-scraping measures that blocked the agent).

Claude Opus 4.6 also has computer-use capabilities, but GPT-5.4’s OSWorld score (75.0% vs. Opus’s 68.2%) reflects a real gap we observed in testing. GPT-5.4 handles multi-step desktop workflows with fewer errors and less need for human correction.

For businesses looking to automate knowledge-worker tasks, this is the feature that justifies evaluating GPT-5.4 seriously.

Pricing and Access {#pricing-and-access}

ChatGPT Plans

API Pricing

How GPT-5.4 Compares on Price

Bottom line on pricing: GPT-5.4 undercuts Claude Opus 4.6 on base pricing and matches Gemini 3.1 Pro closely. However, Gemini offers a 2M context window at lower rates, making it the clear value leader for high-context workloads. The tool search feature’s 47% token savings partially offset GPT-5.4’s costs for agent-heavy use cases.

Who Should Use GPT-5.4? {#who-should-use-gpt-54}

Based on our testing, here is our recommendation matrix:

Choose GPT-5.4 if you need:

- Desktop and computer-use automation (best in class)

- Professional knowledge work: legal analysis, financial modeling, document synthesis

- Multi-tool agent workflows (tool search saves significant costs)

- Long-document processing where the 1M context matters

Choose Claude Opus 4.6 if you need:

- Maximum coding precision (SWE-Bench leader)

- Complex agentic coding tasks with multi-file awareness

- Extended thinking on hard problems with fast throughput

Choose Gemini 3.1 Pro if you need:

- Best reasoning on novel problems (GPQA Diamond, ARC-AGI-2 leader)

- Native multimodal input (text, image, audio, video in one model)

- Maximum context at the lowest cost (2M window at $2 input / $12 output per million tokens)

- Budget-conscious high-volume workloads

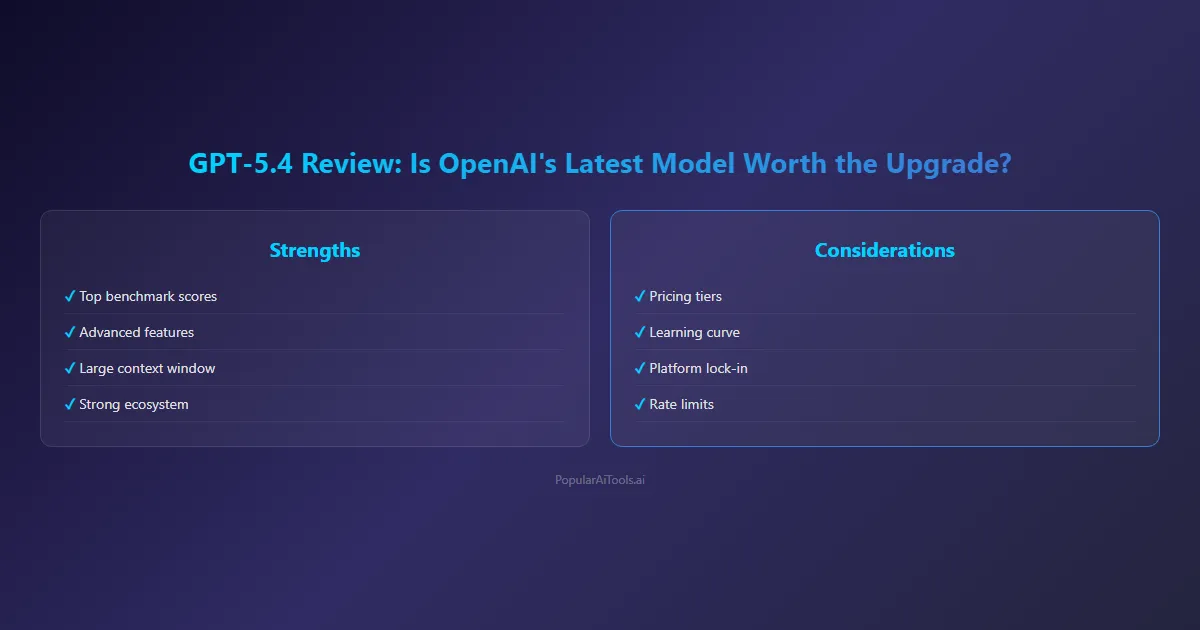

Our Verdict {#our-verdict}

GPT-5.4 is the best model OpenAI has ever shipped, and it earns that title by fixing real weaknesses rather than just pushing benchmark numbers higher.

The computer-use capability is a genuine breakthrough — not a gimmick, not a demo, but a production-ready feature that can handle real desktop automation workflows. The 33% reduction in hallucinations makes it noticeably more trustworthy for factual work. The tool search mechanism shows OpenAI is thinking seriously about the cost and efficiency of agent workflows.

But GPT-5.4 is not the best model for everything. Claude Opus 4.6 remains our pick for serious software engineering. Gemini 3.1 Pro is the better value for most workloads and the stronger reasoner on novel problems. The 272K pricing threshold adds complexity that power users will need to manage.

Our rating: 8.5/10. A strong release that meaningfully advances the state of the art in computer use and professional work, held back by pricing complexity and the fact that two strong competitors lead in coding and reasoning respectively.

If you are already on ChatGPT Plus ($20/month), GPT-5.4 is included automatically and worth exploring immediately. If you are evaluating API models for production workloads, we recommend running GPT-5.4, Opus 4.6, and Gemini 3.1 Pro side by side on your own tasks, because in March 2026, no single model wins every task.
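One way to set up that kind of side-by-side comparison is a small harness that sends the same prompt to each model concurrently. The sketch below uses only the Python standard library. The `run_side_by_side` function, the `fake_model` placeholder, and the model labels are our own assumptions: swap each placeholder for a real client call from the provider's SDK.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# A model is anything that maps a prompt string to a reply string.
ModelFn = Callable[[str], str]


def run_side_by_side(prompt: str, models: dict[str, ModelFn]) -> dict[str, str]:
    """Send one prompt to every model concurrently and collect replies by name."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


def fake_model(label: str) -> ModelFn:
    """Placeholder that stands in for a real API call to the named model."""
    return lambda prompt: f"[{label}] reply to: {prompt}"


# Labels are illustrative only, not confirmed API model identifiers.
models = {
    "gpt-5.4": fake_model("gpt-5.4"),
    "claude-opus-4.6": fake_model("claude-opus-4.6"),
    "gemini-3.1-pro": fake_model("gemini-3.1-pro"),
}

results = run_side_by_side("Summarize the risk factors in this filing.", models)
for name, reply in results.items():
    print(f"{name}: {reply}")
```

Threads fit here because each call spends most of its time waiting on the network. For a real evaluation, you would also log latency and token counts per model and score the replies against a fixed rubric.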

FAQ {#faq}

Is GPT-5.4 better than Claude Opus 4.6?

It depends on the task. GPT-5.4 leads in computer use (75% vs 68.2% on OSWorld), professional knowledge work (83% GDPval), and document processing. Claude Opus 4.6 leads in coding (80.8% vs 80.0% on SWE-Bench) and complex agentic software engineering tasks. For most users who need a general-purpose model, GPT-5.4 is the stronger all-rounder; for developers, Opus has the edge.

How much does GPT-5.4 cost?

ChatGPT Plus ($20/month) includes GPT-5.4 access with rate limits. API pricing starts at $2.50 per million input tokens and $15.00 per million output tokens. Sessions exceeding 272K tokens pay higher rates: $5.00 input and $22.50 output per million tokens.

Does GPT-5.4 really have a 1M token context window?

Yes, but it requires explicit API configuration. By default, you get a 272K context window. The 1M window must be enabled via the model_context_window and model_auto_compact_token_limit parameters. In ChatGPT Pro ($200/month), the extended context is available directly.

What is the GDPval benchmark?

GDPval measures how well an AI model matches human professional performance across 44 different occupations. GPT-5.4’s 83% score means it matches human-level output in 83% of evaluated professional tasks, the highest score of any current model.

Is GPT-5.4 good for coding?

GPT-5.4 scores 80.0% on SWE-Bench, which is competitive but slightly behind Claude Opus 4.6 (80.8%) and Gemini 3.1 Pro (80.6%). For general coding tasks, it is excellent. For complex multi-file refactoring and agentic coding workflows, Claude Opus 4.6 has a slight but meaningful advantage.

What is the tool search feature?

Tool search is a new mechanism in GPT-5.4 that intelligently selects which tools to include in the model’s context, rather than loading all tool definitions at once. OpenAI reports 47% token savings on tool-heavy workflows with no accuracy loss. This is particularly valuable for developers building AI agents that use many tools.

How is GPT-5.4 different from GPT-5?

GPT-5.4 builds on GPT-5 (August 2025) with significant improvements: fewer hallucinations (individual claims are 33% less likely to be false than with GPT-5.2), native computer use, 1M token context (up from 128K), tool search for cost efficiency, a 75% OSWorld score (vs. GPT-5’s ~40%), and 87.3% accuracy on spreadsheet tasks (vs. ~65% for GPT-5). It is the most substantial update in the GPT-5 family to date.

Can GPT-5.4 control my computer?

Yes. GPT-5.4 has native computer-use capabilities that allow it to click, type, scroll, and navigate software interfaces. It scores 75% on OSWorld-Verified, surpassing human performance (72.4%) on standardized desktop tasks. This works through the API for agent builders and is gradually rolling out in ChatGPT.