Conway: What 512,000 Lines of Leaked Code Reveal About Anthropic's AI Platform Strategy

AI Infrastructure Lead

Key Takeaways

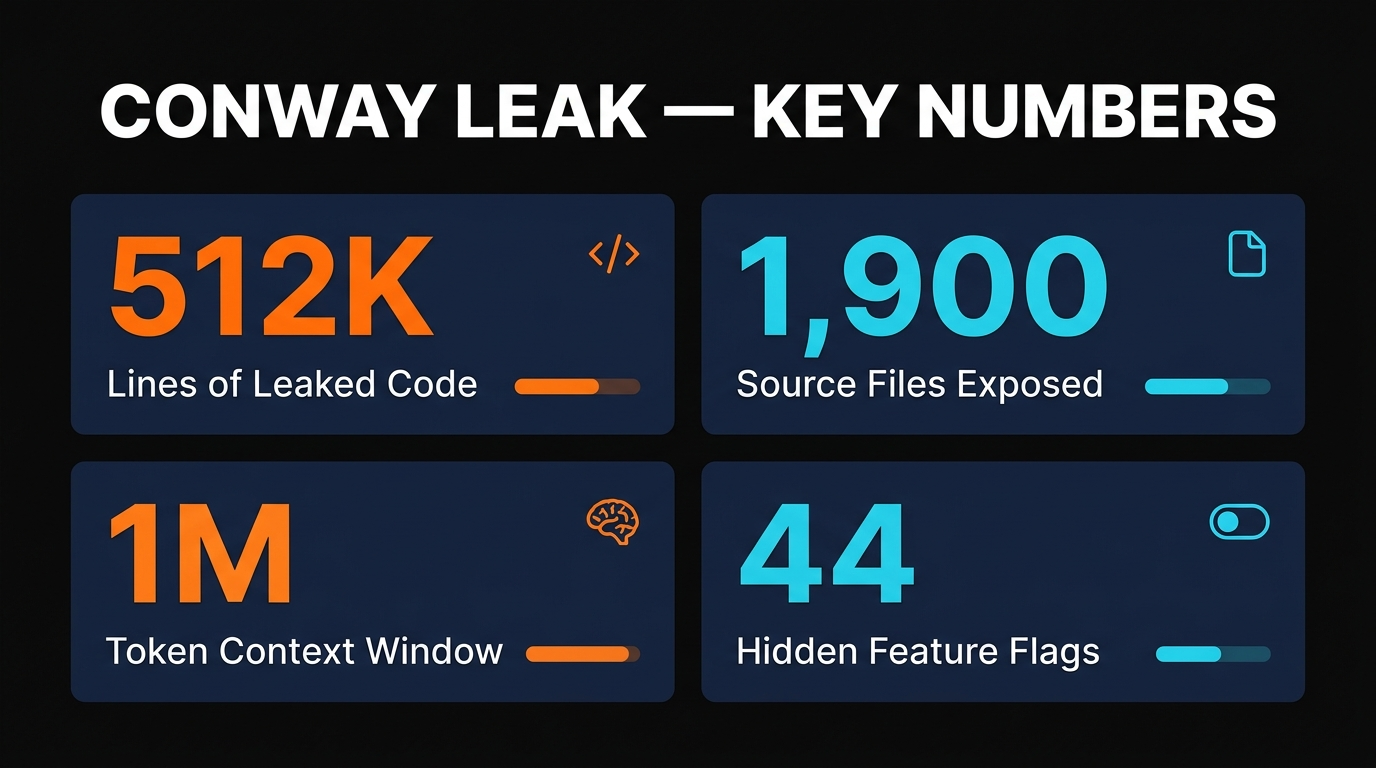

- The Claude Code source leak (512K lines, 1,900 files) revealed Conway — Anthropic's unreleased always-on AI agent that runs continuously without human prompting

- Conway flips the AI interaction model: instead of "you prompt, AI responds," it's "AI watches triggers, AI acts automatically"

- Anthropic is executing a five-surface platform strategy: Claude Code → Cowork → Managed Agents → Conway → MCP ecosystem

- The CNW extension format creates an app-store-like ecosystem — but it's proprietary, not built on the open MCP standard

- Behavioral lock-in is the real risk: once an always-on agent learns your workflows over months, there's no framework to migrate that "intelligence" to a competitor

On March 31, 2026, a routine npm package update quietly exposed something Anthropic didn't intend anyone to see. The Claude Code source code — 512,000 lines across 1,900 files — leaked through a simple packaging error. Most coverage focused on the security implications and the source code itself. But buried in those files was something far more interesting: evidence of a comprehensive platform strategy that goes well beyond a coding assistant.

The biggest reveal? An unreleased product called Conway — an always-on AI agent that doesn't wait for your prompts. It runs continuously in the background, watching for triggers and acting autonomously. And when you connect the dots between Conway, Claude Code, Cowork, the new Managed Agents API, and the MCP ecosystem, a much bigger picture emerges.

We spent the past week analyzing the leaked code, reading every published analysis, and mapping the strategy. Here's what we found.

The Leak Nobody Noticed

The Claude Code leak happened on March 31 — a source map in a public npm package exposed the full TypeScript codebase. Anthropic filed a DMCA takedown on GitHub, but by then the code had been mirrored, analyzed, and discussed across multiple platforms.
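Here is why a source map can leak a whole codebase. A JavaScript source map (format version 3) is a JSON file. Its `sources` array lists the original file paths, and its optional `sourcesContent` array can embed each file's full original text. If the `.map` file ships inside a published package, anyone who downloads the package can rebuild the sources. The sketch below assumes the map is already loaded as a string. The `extract_sources` helper and the example file names and contents are hypothetical. They are not taken from the Claude Code package.

```python
import json


def extract_sources(source_map_json: str) -> dict[str, str]:
    """Return {original_path: original_text} for every source embedded in a v3 source map.

    Hypothetical helper for illustration. Entries whose content is null are skipped,
    because in that case only the file path is exposed, not the code.
    """
    source_map = json.loads(source_map_json)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered: dict[str, str] = {}
    # "sources" and "sourcesContent" are parallel arrays: index i in one matches index i in the other
    for path, content in zip(paths, contents):
        if content is not None:
            recovered[path] = content
    return recovered


# Hypothetical example map; real maps also carry "mappings", "names", and often "sourceRoot"
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/flags.ts"],
    "sourcesContent": ["export const main = () => {};", None],
    "mappings": "",
})

for path, text in extract_sources(example_map).items():
    print(path, len(text))
```

On the publishing side, the usual safeguard is to keep `*.map` files out of the package, for example through the `files` field in `package.json` or an `.npmignore` file. Another option is to build maps without `sourcesContent`.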

The headlines focused on security: exposed source code, potential prompt injection vectors, and the fact that Anthropic's own security practices had a gap. But the strategically important discovery was what the code revealed about Anthropic's product roadmap.

Security researchers found 44 hidden feature flags. Among them: references to Conway instances, a CNW extension framework, webhook configurations, and persistent memory stores that suggested an agent designed to run for days or weeks without human input.

What Conway Actually Is

Every AI tool you've used works the same way: you open it, type something, get a response, close the tab. The AI stops. Conway changes that fundamental interaction model.

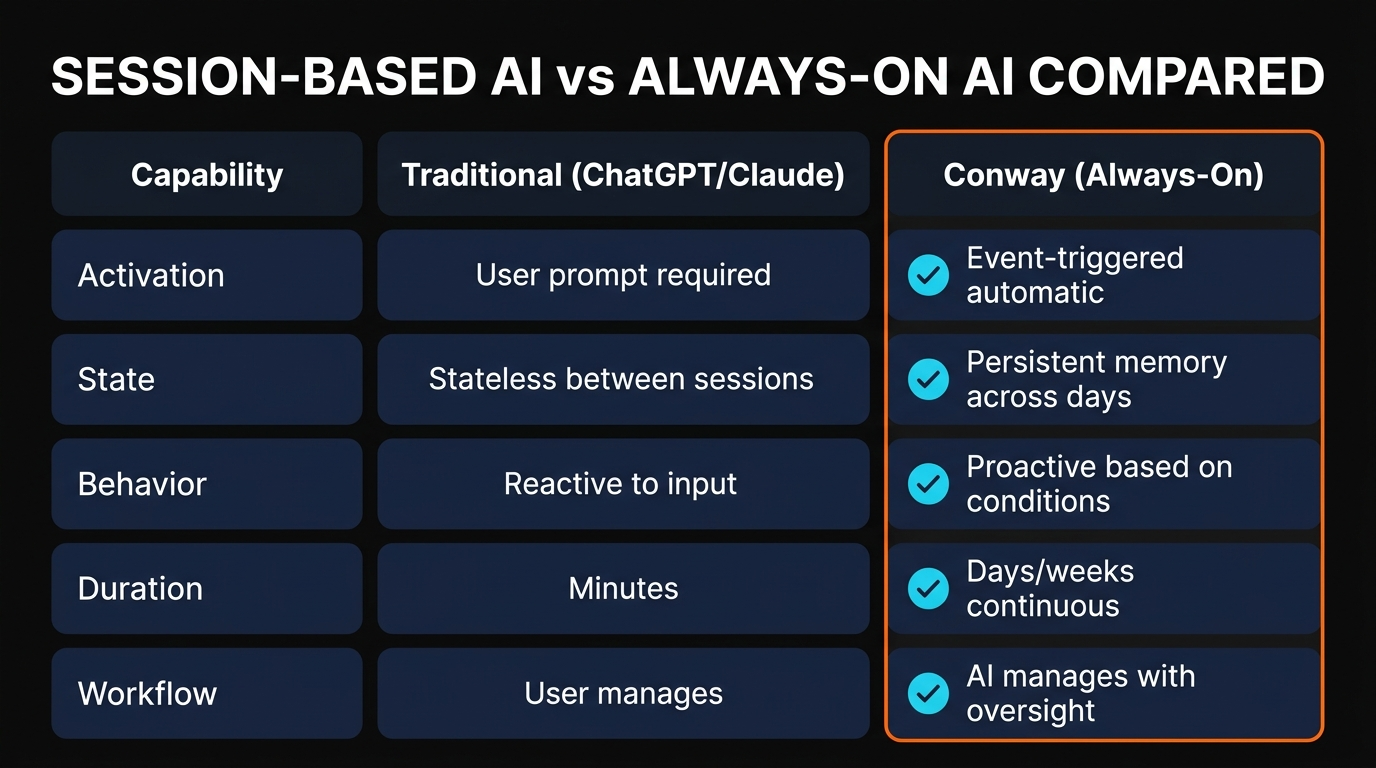

| Aspect | Traditional AI (ChatGPT, Claude) | Conway (Always-On) |
|---|---|---|
| Activation | You type a prompt | Triggered by events automatically |
| State | Stateless between sessions | Persistent memory across days/weeks |
| Behavior | Reactive to input | Proactive based on conditions |
| Duration | Minutes per conversation | Continuous for days or weeks |
| Workflow | User manages each step | AI manages with human oversight |
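The "State" row is the technical crux. Below is a minimal sketch of what "persistent memory across days/weeks" could mean in practice. It assumes a simple key-value store backed by SQLite, so that stored facts survive a process restart. The `AgentMemory` class and its schema are hypothetical and do not come from the leaked code.

```python
import os
import sqlite3
import tempfile
import time
from typing import Optional


class AgentMemory:
    """Hypothetical key-value memory that outlives a single session (stored in SQLite)."""

    def __init__(self, path: str) -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS memory ("
            " key TEXT PRIMARY KEY,"
            " value TEXT NOT NULL,"
            " updated_at REAL NOT NULL)"
        )
        self.conn.commit()

    def remember(self, key: str, value: str) -> None:
        # INSERT OR REPLACE: a newer value for the same key overwrites the older one
        self.conn.execute(
            "INSERT OR REPLACE INTO memory (key, value, updated_at) VALUES (?, ?, ?)",
            (key, value, time.time()),
        )
        self.conn.commit()

    def recall(self, key: str) -> Optional[str]:
        row = self.conn.execute("SELECT value FROM memory WHERE key = ?", (key,)).fetchone()
        return row[0] if row else None

    def close(self) -> None:
        self.conn.close()


db_path = os.path.join(tempfile.mkdtemp(), "agent_memory.db")

session_1 = AgentMemory(db_path)
session_1.remember("escalation_contact", "ops-oncall")
session_1.close()

# A later session (new connection, possibly a new process) still sees the stored fact
session_2 = AgentMemory(db_path)
print(session_2.recall("escalation_contact"))
session_2.close()
```

A conversational tool discards this kind of state when the tab closes. An always-on agent keeps accumulating it, which is the source of both its usefulness and the lock-in concern discussed later.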

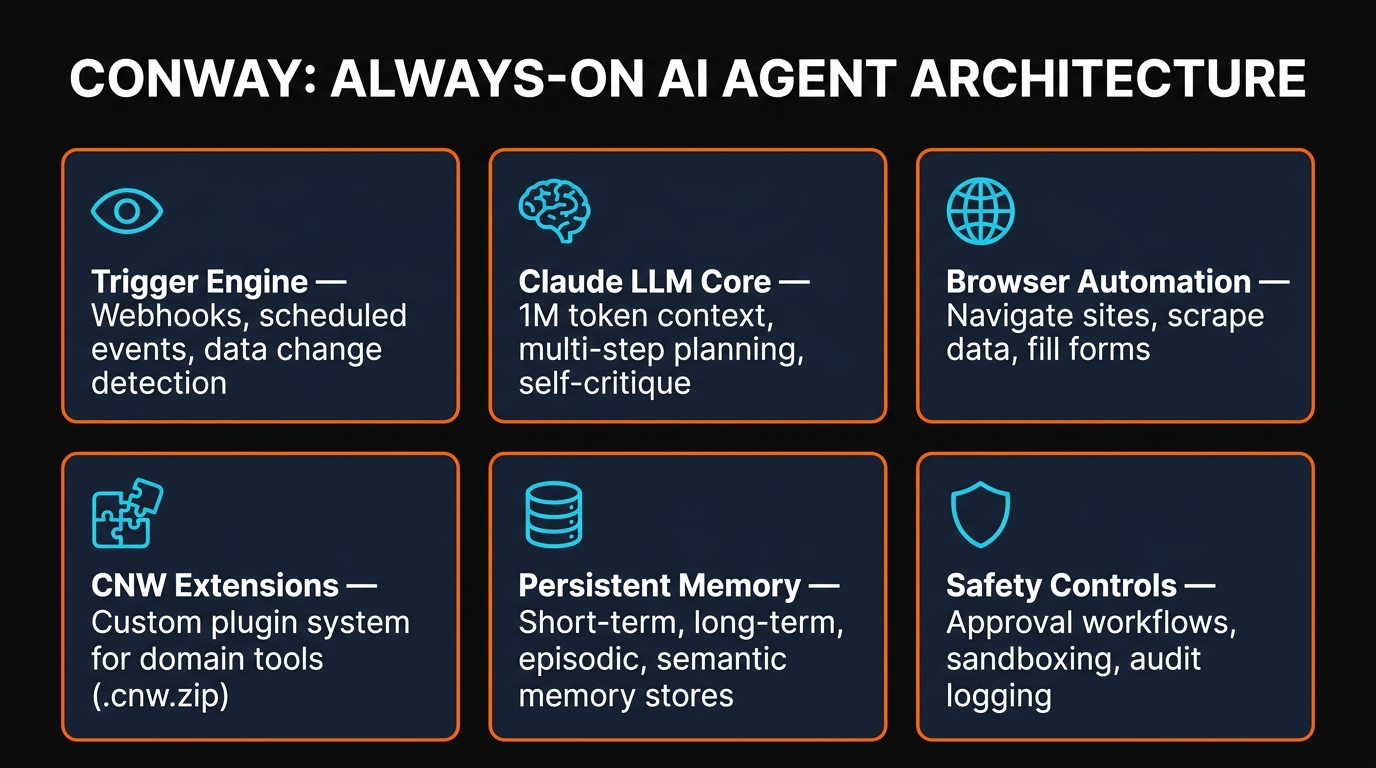

According to the leaked code and subsequent analysis, Conway has a three-panel UI: Search (query agent activities and memories), Chat (direct interaction and overrides), and System (configure triggers, extensions, webhooks, and safety guardrails).

The trigger engine is the key innovation. Conway monitors webhooks, polls APIs and databases on schedules, processes event streams, and supports conditional logic. When a condition fires, Claude starts working — no human prompt needed. An email arrives, a data feed updates, a calendar event fires, and the agent takes action.
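To make the trigger model concrete, here is a minimal sketch of an event-driven dispatcher. It assumes each trigger is a source name plus a condition predicate plus an action. The `Trigger` and `TriggerEngine` names, the event shape, and the example rule are hypothetical illustrations of the pattern described above. They are not Conway's implementation. Webhook deliveries, scheduled polls, and stream consumers would all feed events into the same `dispatch` call.

```python
from dataclasses import dataclass
from typing import Any, Callable

Event = dict[str, Any]


@dataclass
class Trigger:
    """Hypothetical trigger definition: where events come from, when to act, what to do."""
    name: str
    source: str                         # e.g. "email", "calendar", "webhook" (hypothetical labels)
    condition: Callable[[Event], bool]  # conditional logic evaluated per event
    action: Callable[[Event], str]      # work the agent starts without a user prompt


class TriggerEngine:
    def __init__(self) -> None:
        self.triggers: list[Trigger] = []

    def register(self, trigger: Trigger) -> None:
        self.triggers.append(trigger)

    def dispatch(self, source: str, event: Event) -> list[str]:
        """Run every trigger whose source matches and whose condition holds; return action results."""
        results = []
        for trigger in self.triggers:
            if trigger.source == source and trigger.condition(event):
                results.append(trigger.action(event))
        return results


engine = TriggerEngine()
engine.register(Trigger(
    name="urgent-email",
    source="email",
    condition=lambda e: "urgent" in e.get("subject", "").lower(),
    action=lambda e: f"drafting reply to {e['sender']}",
))

# A webhook handler or polling loop would call dispatch(); here events are fed in by hand
print(engine.dispatch("email", {"subject": "URGENT: invoice", "sender": "billing@example.com"}))
print(engine.dispatch("email", {"subject": "newsletter", "sender": "news@example.com"}))
```

The design point is that no prompt appears anywhere. The event plus a stored condition is enough to start work. This is why the human-oversight and guardrail settings in the System panel carry so much weight.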

For context on how Claude Code's existing agent system works, see our guide to 76+ Claude Code agents.

Anthropic's Five-Surface Platform Strategy

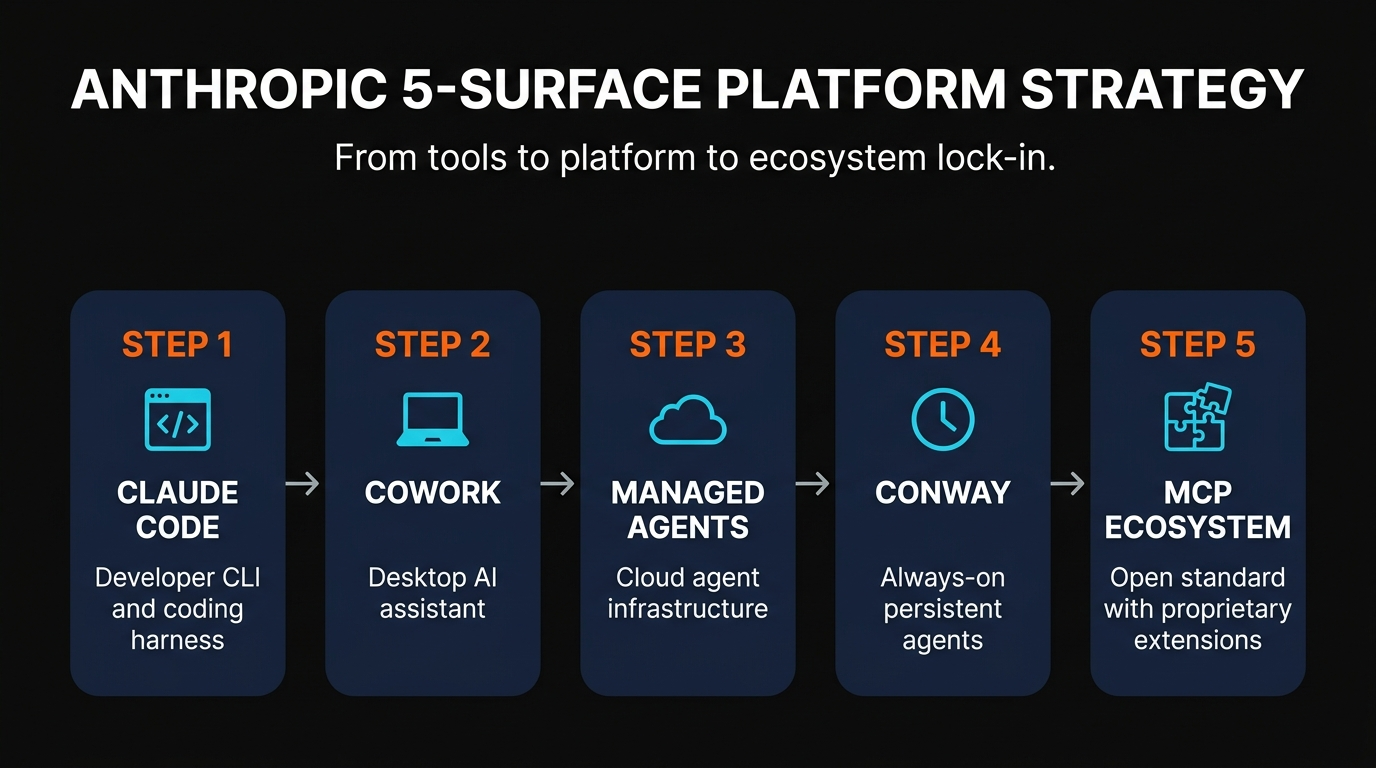

When you connect Conway with everything else Anthropic has shipped in the past 90 days, a clear platform strategy emerges. This isn't just about building better models — it's the same move Microsoft made with Windows and Apple made with iOS.

1. Claude Code

The developer CLI, with 1M+ active developers. This is the entry point: it gets developers building on Claude's tooling and conventions.

2. Cowork

The desktop AI assistant. Brings Claude into non-developer workflows — writing, research, project management.

3. Managed Agents

Cloud infrastructure for production agents. Launched April 8 — the commercial API layer for enterprises deploying autonomous agents.

4. Conway

The always-on agent. Not yet released publicly. Adds persistent operation, event-driven triggers, and browser automation.

5. MCP Ecosystem

The "app store" layer. Open standard for tool connectors — but CNW extensions are proprietary, creating an inner ecosystem.

The strategy is clear: capture developers with Claude Code, expand to knowledge workers with Cowork, monetize enterprise with Managed Agents, create stickiness with Conway's persistent state, and build network effects with the MCP/CNW ecosystem. Each surface reinforces the others.

For a guide to the MCP ecosystem, check our 1,600+ MCP servers directory and MCP starter pack.

MCP Open Standard vs Proprietary Layer

Here's where the strategy gets subtle. MCP (Model Context Protocol) is genuinely open — it's a standard for connecting AI models to tools, and competitors like OpenAI and Google have started adopting it. That's the portable layer. But the leaked code reveals a second layer on top: the CNW extension format.
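MCP's portability comes from its wire format. MCP messages are JSON-RPC 2.0, so any client that speaks the protocol can discover a server's tools and call them. The sketch below only builds the two request messages a client sends to list a server's tools and to invoke one (`tools/list` and `tools/call`). It leaves out the initialization handshake and the transport. The `jsonrpc_request` helper, the tool name `search_tickets`, and its arguments are hypothetical.

```python
import json
from itertools import count
from typing import Any, Optional

_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched to them


def jsonrpc_request(method: str, params: Optional[dict[str, Any]] = None) -> str:
    """Hypothetical helper that serializes one JSON-RPC 2.0 request."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


list_tools = jsonrpc_request("tools/list")
call_tool = jsonrpc_request(
    "tools/call",
    # "search_tickets" and its arguments are made up for illustration
    {"name": "search_tickets", "arguments": {"query": "refund", "limit": 5}},
)
print(list_tools)
print(call_tool)
```

None of these fields is specific to one model vendor. As a result, the same MCP server can back Claude or any other MCP-capable client.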

CNW extensions use .cnw.zip files — a proprietary plugin format. Developers build custom tools, UI tabs, and context handlers that only work inside Conway's environment. If you invest in building CNW extensions, your tooling is locked to Anthropic's platform.

This is the classic platform playbook: offer an open standard at the base layer (MCP) to attract the ecosystem, then add a proprietary layer on top (CNW) where the real value accumulates. The open layer creates adoption. The proprietary layer creates lock-in.

"The question isn't 'is Claude smart enough?' — it's 'what lives inside Claude's operating environment?' That's the same move Apple made with iOS and Microsoft made with Windows."

Why Behavioral Lock-In Is Different

Data portability laws like GDPR give you the right to export your data. But there's no equivalent for behavioral lock-in — and that's the deeper risk with always-on agents.

When Conway runs for weeks monitoring your systems, it builds up layered context: your decision patterns, workflow preferences, business rules it's inferred, edge cases it's learned to handle. That's not "data" in the traditional sense — it's operational intelligence. You can export your files and API configurations, but you can't export the agent's understanding of how you work.

Consider the cost of switching: a Conway agent that's been managing your customer support for six months has learned your escalation patterns, your tone preferences, your holiday policies, and hundreds of edge cases. Moving to a competitor means retraining all of that from scratch — months of operational knowledge, gone.

There's currently no "intelligence portability" framework in the industry. No standard for exporting an agent's learned behavior. And Anthropic — along with every other AI platform provider — has little incentive to create one.
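Until such a standard exists, the practical hedge is to keep inferred behavior in a form you own. The sketch below assumes you can capture the rules an agent follows, from its logs or through review, and record them as plain data. The `LearnedRule` schema, its fields, and the example rule are hypothetical and do not follow any published format.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class LearnedRule:
    """Hypothetical record for one piece of operational knowledge, readable by any agent or human."""
    rule_id: str
    when: str    # the situation, in plain language
    then: str    # the expected action
    source: str  # where the rule came from, e.g. a decision log or a reviewer
    examples: list[str] = field(default_factory=list)


def export_rules(rules: list[LearnedRule]) -> str:
    # Plain JSON keeps the export readable and independent of any vendor's memory format
    return json.dumps([asdict(r) for r in rules], indent=2)


def import_rules(payload: str) -> list[LearnedRule]:
    return [LearnedRule(**item) for item in json.loads(payload)]


rules = [
    LearnedRule(
        rule_id="support-holiday-policy",
        when="A support ticket arrives on a public holiday",
        then="Acknowledge it, and defer non-critical fixes to the next business day",
        source="reviewed decision log",
        examples=["refund request received on a holiday"],
    ),
]
payload = export_rules(rules)
print(payload)
```

An export like this does not carry the agent's full learned context. It does turn the most important parts into something you can review, version, and hand to a different system.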

For a broader look at how the AI coding tool landscape is evolving, see our best AI coding tools comparison and the OpenSWE guide to building your own Claude Code.

What Developers Should Do Now

If you're choosing an AI platform for your business, the decision you're making now is harder to reverse than any software migration you've faced before. Here's our practical advice:

- Use MCP connectors wherever possible. They're the portable layer: integrations you build on MCP work with Claude and, increasingly, with other platforms. Avoid proprietary extension formats unless the value is overwhelming.

- Document your agent's learned behavior. If an always-on agent is managing workflows, periodically export its decision logs, preferences, and business rules into platform-independent documentation.

- Diversify your AI stack. Don't put everything on one provider. Use Claude for coding, maybe a different provider for customer support, another for data analysis. Concentration risk is real.

- Watch the regulatory landscape. Intelligence portability regulation is inevitable — probably 2-3 years out. Position yourself to comply early.

- Build abstractions. If you're writing code that calls Anthropic APIs, wrap them in interfaces that could swap to another provider. The migration cost drops from months to days.
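The "diversify" and "build abstractions" recommendations can share one design. Application code depends on a small interface, and each provider gets an adapter behind it. The sketch below assumes a single text-completion operation is all the application needs. `ChatProvider`, `EchoProvider`, and `ProviderRouter` are hypothetical names. The stand-in provider simply echoes its input so the example runs without credentials. A real adapter would wrap a vendor SDK inside `complete`.

```python
from typing import Protocol


class ChatProvider(Protocol):
    """Hypothetical interface: the only surface application code depends on."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Hypothetical stand-in adapter; a real one would call a vendor SDK here."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


class ProviderRouter:
    """Maps each task type to a provider, so swapping vendors is a configuration change."""

    def __init__(self, routes: dict[str, ChatProvider], default: ChatProvider) -> None:
        self.routes = routes
        self.default = default

    def complete(self, task: str, prompt: str) -> str:
        # Unknown task types fall back to the default provider
        return self.routes.get(task, self.default).complete(prompt)


router = ProviderRouter(
    routes={"coding": EchoProvider("provider-a"), "support": EchoProvider("provider-b")},
    default=EchoProvider("provider-a"),
)
print(router.complete("support", "Where is my order?"))
```

With this structure, moving a task to another provider means writing one new adapter and changing one entry in `routes`, with no change at each call site.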

Anthropic is building an excellent platform. Claude Code is genuinely the best coding assistant we've used. Managed Agents is a significant step forward for production agent deployments. Conway — when it ships — will be impressive. The question isn't whether the technology is good. It's whether you understand the trade-offs of deep integration with any single provider.

For the complete guide to getting started with Claude Code, see our Claude Code setup guide.
