The AI Music Detection Arms Race: How Platforms Catch AI Tracks and How Creators Fight Back

AI Creative Tools Specialist

Key Takeaways

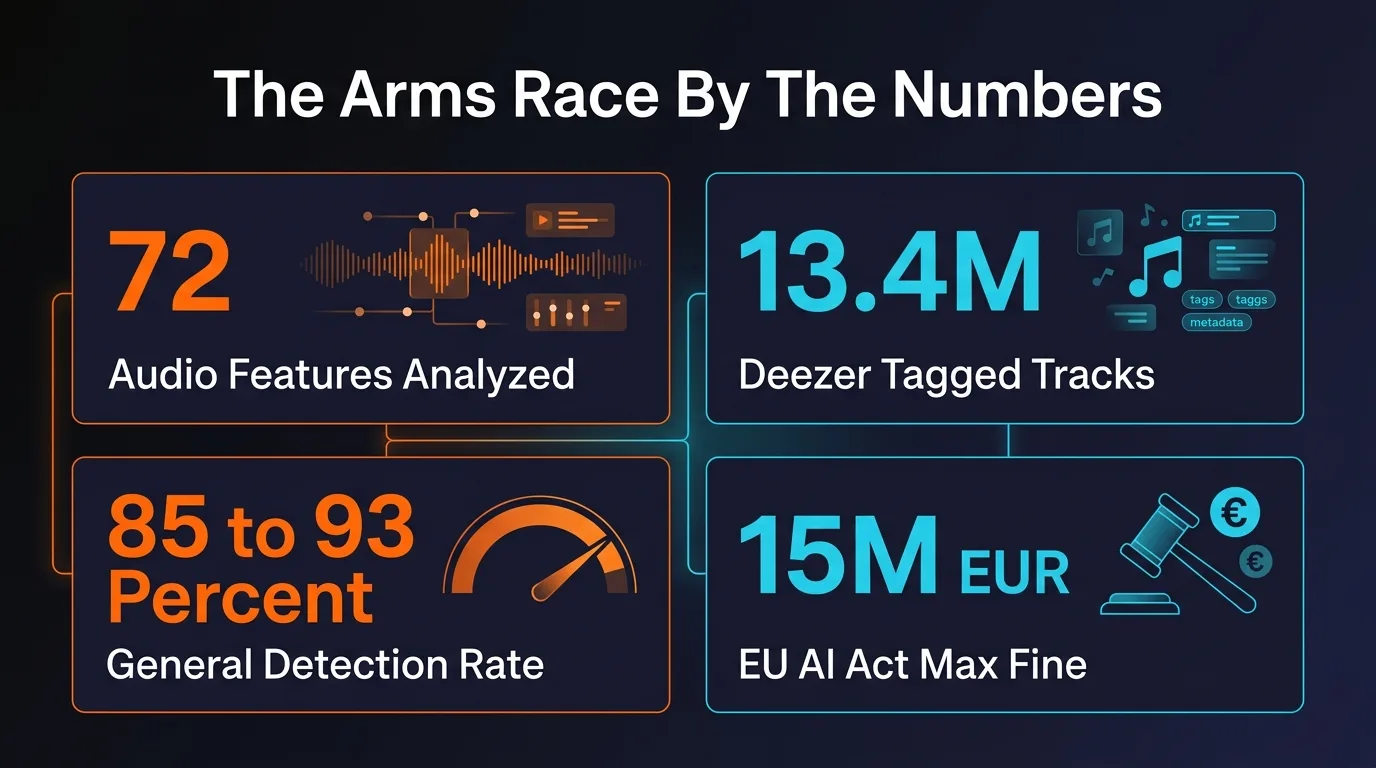

- Detection systems now analyze 72 distinct audio features including MFCCs, spectral contrast, chroma, and rhythmic patterns to identify AI-generated music

- Suno tracks leave identifiable fingerprints in the 2-8 kHz frequency range with regular harmonic series that scanners flag instantly

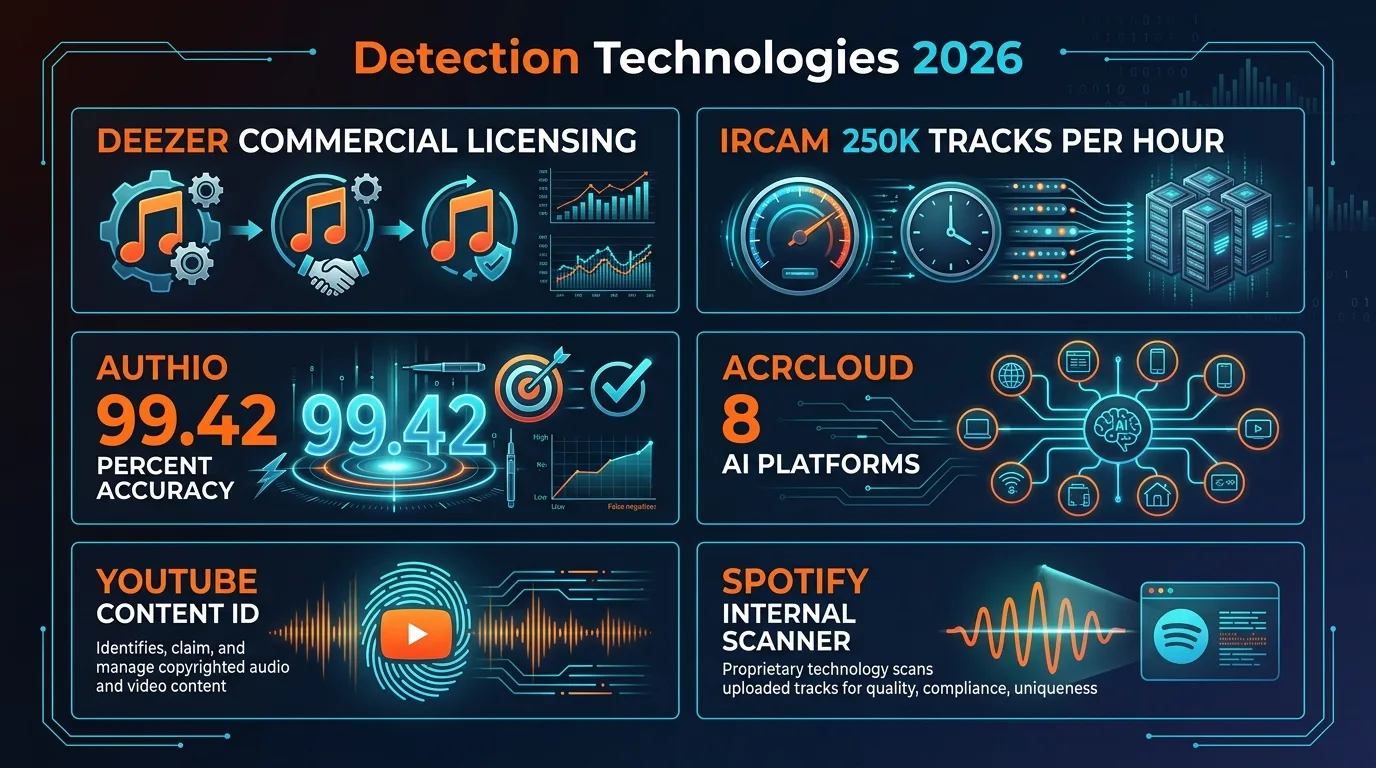

- Authio's 12-model ensemble achieves 99.42% detection accuracy with a false positive rate under 0.6%

- IRCAM Amplify can scan 250,000+ tracks per hour — industrial-scale detection is here

- Cross-platform intelligence sharing means one flag = scrutiny everywhere your track was distributed

- The EU AI Act (Article 50) becomes enforceable August 2, 2026, mandating watermarks with fines up to 15M EUR

- Only two artifact removal tools remain after Spectrahertz shut down March 10, 2026

Table of Contents

- The Scale of the Problem: 100 Million Creators vs. the Machine

- How Detection Actually Works: 72 Features, One Verdict

- Generator Fingerprints: Why Suno Is the Easiest to Catch

- The Detection Players: Who Is Scanning Your Music

- Cross-Platform Intelligence Sharing

- The EU AI Act: Mandatory Watermarks by August 2026

- The Other Side: Artifact Removal Tools

- Where This Arms Race Is Headed

- FAQ

Somewhere in a data center right now, a neural network is listening to your track. It is not listening for melody. It is not evaluating your chord progressions or judging your lyrics. It is counting spectral peaks in the 2-8 kHz range, measuring the millisecond-scale gaps between your drum hits, and comparing your harmonic series against a database of known AI generator fingerprints.

This is the AI music detection arms race of 2026 — and it is accelerating faster than most creators realize. On one side, a billion-dollar detection industry backed by Spotify, Deezer, and the major labels. On the other, over 100 million people who have used AI to generate music and want to distribute it. The technology on both sides evolves weekly. What worked last month to get tracks past scanners fails today. What detectors missed yesterday, they catch tomorrow.

This is a technical deep-dive into how the detection side operates, who the major players are, what specific features they analyze, and what the regulatory landscape looks like as the EU prepares to make watermarking mandatory. Whether you are building detection systems or trying to understand why your tracks keep getting flagged, this is the full picture.

The Scale of the Problem: 100 Million Creators vs. the Machine

The numbers tell the story of an industry colliding with itself. Suno alone has attracted over 100 million users, converted 2 million of them into paid subscribers, and reached a $300 million annual run rate as of February 2026. The company is valued at $2.4 billion. It is, by any measure, a wildly successful consumer product.

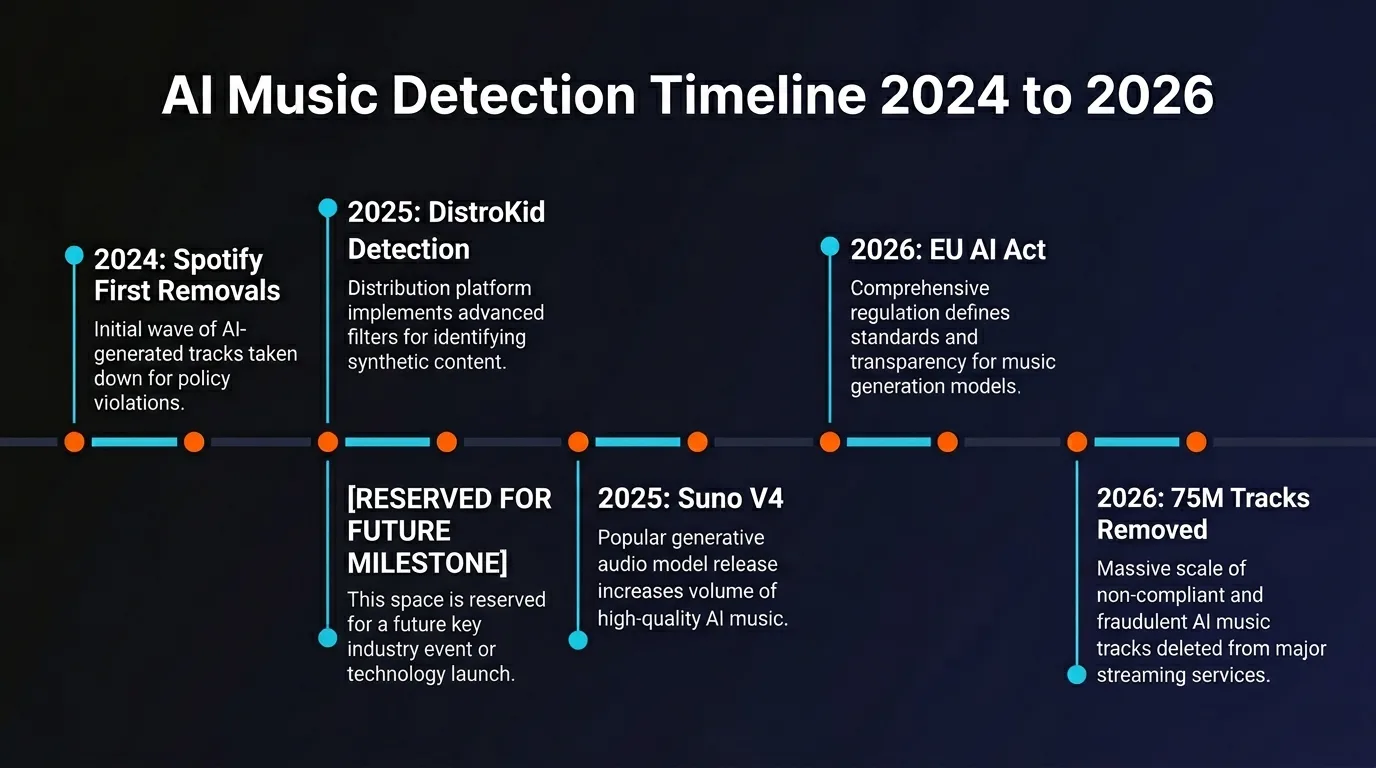

Meanwhile, on the platform side, Spotify reports removing over 75 million spammy tracks over a 12-month period, a category that includes mass-uploaded AI content but is not limited to it. Deezer has tagged 13.4 million AI tracks on its platform and filed two detection patents in December 2024. DistroKid now runs automated AI screening on every upload. TuneCore refuses to distribute anything that is 100% AI-generated. CD Baby, the strictest of the group, will not accept any AI-generated content, even if you contributed original sounds alongside the AI output.

This is the fundamental tension. One side of the industry is building tools that make it effortless for anyone to create music. The other side is building tools to identify and block that music from reaching listeners. Both sides are spending hundreds of millions of dollars. Both sides are deploying increasingly sophisticated AI. And the creators — the 100 million people who just want their tracks on Spotify — are caught in the middle.

How Detection Actually Works: 72 Features, One Verdict

AI music detection is not a single check. It is a layered analysis system that examines your audio across multiple domains simultaneously. Research presented at ISMIR 2025 demonstrated that modern detection systems analyze 72 distinct audio features to reach a single AI-or-human verdict. Here is what those systems are actually measuring.

Layer 1: Spectral Analysis

Every AI music generator leaves microscopic fingerprints in the frequency domain. These are not intentional watermarks — they are architectural artifacts. The deconvolution layers that are core components of neural audio generators produce systematic spectral peaks at predictable frequency intervals. Detection systems extract MFCCs (Mel-Frequency Cepstral Coefficients), which capture the timbral characteristics of the audio. They measure spectral contrast — the difference between peaks and valleys across frequency bands. They analyze chroma features, which represent pitch class distributions independent of octave. And they examine overall spectral shape for the telltale flatness that AI generators produce.
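As a rough illustration of one such measurement, the sketch below computes spectral flatness (geometric mean of the power spectrum divided by its arithmetic mean) with a naive DFT in plain Python. It is not any vendor's pipeline; the signal length, the test tone, and the function names are assumptions chosen for the example. Production systems would more likely use a library such as librosa, which exposes `librosa.feature.mfcc`, `spectral_contrast`, `chroma_stft`, and `spectral_flatness`.

```python
import cmath
import math
import random


def power_spectrum(samples):
    """Naive O(n^2) DFT power for bins 1 .. n/2 - 1 (DC and Nyquist skipped)."""
    n = len(samples)
    spectrum = []
    for k in range(1, n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                  for t, x in enumerate(samples))
        spectrum.append(abs(acc) ** 2)
    return spectrum


def spectral_flatness(samples, eps=1e-12):
    """Geometric mean over arithmetic mean of the power spectrum, in (0, 1]."""
    power = [p + eps for p in power_spectrum(samples)]  # eps avoids log(0)
    log_mean = sum(math.log(p) for p in power) / len(power)
    return math.exp(log_mean) / (sum(power) / len(power))


n = 256
# A single pure tone: almost all energy sits in one bin, so flatness is near 0.
tone = [math.sin(2 * math.pi * 8 * t / n) for t in range(n)]
# Broadband noise: energy is spread across bins, so flatness is much higher.
rng = random.Random(0)
noise = [rng.uniform(-1.0, 1.0) for _ in range(n)]

tone_flatness = spectral_flatness(tone)
noise_flatness = spectral_flatness(noise)
print(f"tone flatness:  {tone_flatness:.6f}")
print(f"noise flatness: {noise_flatness:.6f}")
```

A single number like this is only one input among many; a classifier would combine it with the other spectral features rather than threshold it directly.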

Layer 2: Temporal Analysis

Human musicians are not metronomes. Even the tightest drummer introduces micro-timing variations measured in single-digit milliseconds. AI generators produce rhythmic patterns with machine precision — and detectors exploit this difference ruthlessly. They measure inter-onset intervals between beats, looking for the absence of natural swing and drift. They analyze attack transients — the way a note begins — which in human performance vary subtly from note to note. In AI output, attack profiles are more consistent than any human could achieve.
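The inter-onset measurement can be sketched in a few lines. This is a minimal example, assuming onset times have already been extracted by some upstream onset detector; the 120 BPM grid and the 4 ms Gaussian jitter used to simulate a human take are illustrative assumptions, not measured values.

```python
import random
import statistics


def inter_onset_intervals(onsets_s):
    """Differences between consecutive onset times, in seconds."""
    return [b - a for a, b in zip(onsets_s, onsets_s[1:])]


def timing_jitter_ms(onsets_s):
    """Standard deviation of the inter-onset intervals, in milliseconds."""
    return statistics.pstdev(inter_onset_intervals(onsets_s)) * 1000.0


beat = 0.5  # seconds per beat at 120 BPM
grid_onsets = [i * beat for i in range(16)]  # perfectly quantized hits

rng = random.Random(1)
# Hypothetical human take: each hit lands a few milliseconds off the grid.
human_onsets = [t + rng.gauss(0.0, 0.004) for t in grid_onsets]

grid_jitter = timing_jitter_ms(grid_onsets)
human_jitter = timing_jitter_ms(human_onsets)
print(f"quantized jitter: {grid_jitter:.3f} ms")
print(f"human-like jitter: {human_jitter:.3f} ms")
```

Jitter near zero is not proof of AI generation on its own, since quantized MIDI and drum machines produce the same result, which is one reason timing is treated as a single feature rather than a verdict.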

Layer 3: Metadata and Watermark Inspection

Beyond the audio itself, detection systems read the invisible data embedded in files. They check for C2PA (Coalition for Content Provenance and Authenticity) cryptographic signatures — standardized metadata that documents authorship, source, and AI involvement. They scan for Google SynthID watermarks — imperceptible signals embedded directly into audio waveforms that survive compression, format conversion, and basic processing. They examine encoding characteristics, container metadata, and even statistical properties of the binary data for patterns associated with specific AI pipelines.
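Container inspection usually starts with the first few bytes of the file. The sketch below covers only that first step, using the well-known signatures for ID3v2-tagged MP3, WAV, and FLAC files; it does not parse C2PA manifests or detect SynthID watermarks, both of which need dedicated tooling. The function names are hypothetical.

```python
def sniff_container(header: bytes) -> str:
    """Guess the audio container from the first bytes of a file."""
    if header[:3] == b"ID3":
        return "mp3-with-id3v2"  # ID3v2 tag placed before the audio frames
    if header[:4] == b"RIFF" and header[8:12] == b"WAVE":
        return "wav"
    if header[:4] == b"fLaC":
        return "flac"
    # A bare MPEG audio frame starts with 11 set bits (frame sync).
    if len(header) >= 2 and header[0] == 0xFF and (header[1] & 0xE0) == 0xE0:
        return "mpeg-audio"
    return "unknown"


def id3v2_tag_size(header: bytes) -> int:
    """Decode the synchsafe tag size stored in bytes 6..9 of an ID3v2 header.

    Each of the four bytes contributes only its low 7 bits.
    """
    size = 0
    for byte in header[6:10]:
        size = (size << 7) | (byte & 0x7F)
    return size


example_header = b"ID3\x04\x00\x00\x00\x00\x02\x01"
print(sniff_container(example_header))  # mp3-with-id3v2
print(id3v2_tag_size(example_header))   # 257
```

Knowing the container and tag size tells a scanner where the metadata ends and the audio begins, which is the starting point for reading the tags themselves.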

Layer 4: Ensemble Classification

The final layer combines all features into a probability score. The most advanced systems do not rely on a single model. They use ensemble architectures — multiple specialized neural networks, each trained to detect different aspects of AI generation, whose outputs are combined for a final verdict. This approach dramatically reduces both false positives and false negatives compared to single-model detection.
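One simple way to combine model outputs is a weighted average of per-model probabilities followed by a threshold. The sketch below shows that soft-voting scheme; the probabilities, the 0.5 threshold, and the equal weights are assumptions for illustration, and real systems may use a different combiner, such as a trained meta-classifier.

```python
def ensemble_score(model_probs, weights=None):
    """Weighted average of per-model P(track is AI-generated) scores."""
    if weights is None:
        weights = [1.0] * len(model_probs)
    return sum(p * w for p, w in zip(model_probs, weights)) / sum(weights)


def ensemble_verdict(model_probs, threshold=0.5, weights=None):
    """Return ("ai" or "human", combined score) for one track."""
    score = ensemble_score(model_probs, weights)
    return ("ai" if score >= threshold else "human"), score


# Hypothetical outputs from four specialized models for the same track.
probs = [0.91, 0.84, 0.35, 0.77]
verdict, score = ensemble_verdict(probs)
print(verdict, round(score, 4))  # ai 0.7175
```

Note that the third model disagrees with the others, yet the combined score still lands well above the threshold. That tolerance for a single dissenting model is what makes ensembles more stable than any one classifier.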

These Are the Exact Artifacts Undetectr Removes

Spectral smoothing. Timing humanization. Metadata cleanup. One upload, every layer addressed.

See how Undetectr removes these exact artifacts →

Generator Fingerprints: Why Suno Is the Easiest to Catch

Not all AI generators are equally detectable. Each platform uses a different neural architecture, which produces a different spectral signature — and some are far more conspicuous than others.

Suno: The Most Detectable

Suno tracks are, by industry consensus, among the easiest to identify. The generator embeds both intentional identification markers and architecture-specific spectral signatures that create a multi-layer detection surface. The characteristic patterns are concentrated in the 2-8 kHz frequency range, where Suno's deconvolution architecture produces a highly regular harmonic series. Combined with machine-precise timing and proprietary inaudible watermarking, Suno output offers detectors multiple independent signals to analyze.

This is significant because Suno is the dominant generator — with 2 million paying subscribers, it accounts for the vast majority of AI tracks entering the distribution pipeline. And every one of those tracks carries the same architectural DNA.
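To make "a highly regular harmonic series" concrete, the sketch below scores how evenly spaced a set of spectral peaks is inside the 2-8 kHz band, using the coefficient of variation of the gaps between neighboring peaks. The peak lists are invented for the example, the function name is hypothetical, and nothing here reflects how any particular detector actually scores Suno output.

```python
import statistics


def spacing_regularity(peak_freqs_hz, low_hz=2000.0, high_hz=8000.0):
    """Coefficient of variation of gaps between spectral peaks inside a band.

    Values near 0 mean the peaks are almost evenly spaced.
    Returns None when fewer than three peaks fall inside the band.
    """
    in_band = sorted(f for f in peak_freqs_hz if low_hz <= f <= high_hz)
    if len(in_band) < 3:
        return None
    gaps = [b - a for a, b in zip(in_band, in_band[1:])]
    return statistics.pstdev(gaps) / statistics.fmean(gaps)


# Hypothetical peak lists (Hz), as if produced by an upstream peak picker.
regular_peaks = [2000.0 + 500.0 * i for i in range(13)]  # evenly spaced
irregular_peaks = [2100.0, 2450.0, 3300.0, 3520.0,
                   4800.0, 5050.0, 6900.0, 7400.0]

regular_score = spacing_regularity(regular_peaks)
irregular_score = spacing_regularity(irregular_peaks)
print(f"regular:   {regular_score:.3f}")
print(f"irregular: {irregular_score:.3f}")
```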

Udio: Different Architecture, Different Tells

Udio uses a fundamentally different generation architecture, which produces a different fingerprint profile. Rather than the spectral peaks characteristic of Suno, Udio tracks exhibit identifiable inpainting artifacts — visible in spectrograms where the model filled gaps in the audio. They also have a characteristically flat noise floor (real recordings always have some noise variation) and a stereo field uniformity that is more consistent than organic recordings. Since October 2025, Udio has disabled download functionality entirely, but tracks generated before that date remain in circulation and continue to require processing for distribution.

The Expanding Detection Surface

ACRCloud, which launched its detection platform in January 2026, now identifies content from eight distinct AI platforms: Suno, Udio, Sonauto, ElevenLabs, Seed Music, MiniMax, Mureka, and Riffusion. Each platform's neural architecture produces unique artifacts, and detection models are trained specifically on each generator's output. The detection surface expands with every new generator that enters the market — and it never contracts.

The Detection Players: Who Is Scanning Your Music

Detection is no longer a feature buried in a platform's moderation pipeline. It is a standalone industry with dedicated companies, commercial licensing deals, and processing capacity that would have seemed absurd two years ago.

| System | Accuracy | Scale | Notable Detail |
|---|---|---|---|
| Authio | 99.42% | Per-track analysis | 12-model ensemble, <0.6% false positives |
| IRCAM Amplify | 99% | 250,000+ tracks/hour | <1% false positives, industrial-scale |
| Deezer (In-House) | ~100% (Suno/Udio) | 13.4M tracks tagged | 2 patents filed, commercially licensed |
| ACRCloud | Not disclosed | 8 AI platforms | Launched Jan 2026, multi-generator |
| Spotify (In-House) | Not disclosed | 75M+ tracks removed | Voice cloning detection, spam filtering |
| YouTube Content ID | Not disclosed | Platform-wide | Evolved to AI pattern recognition |

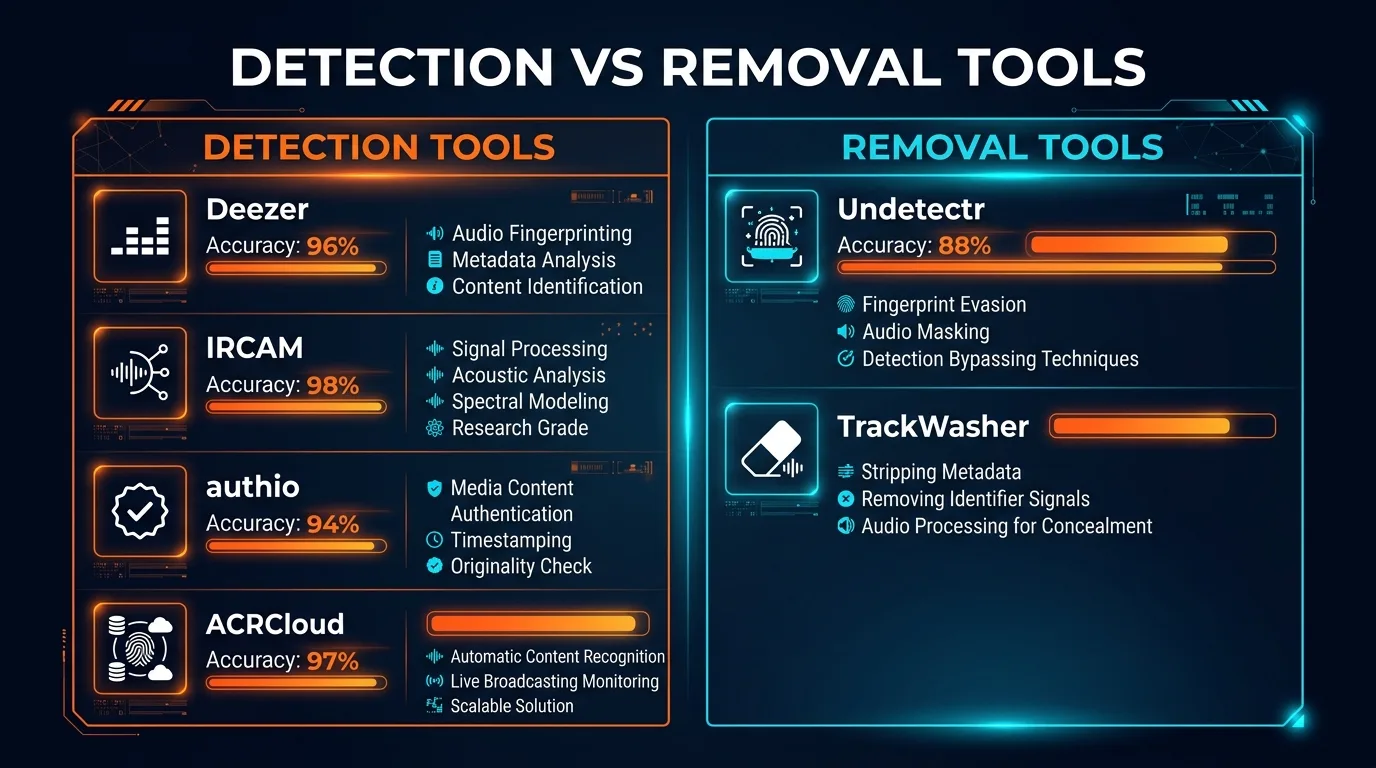

Deezer deserves special attention. The French streaming platform has not only built what may be the most aggressive in-house detection system — claiming 100% accuracy on fully AI-generated Suno and Udio tracks — but it has also begun commercially licensing that technology to other platforms. Deezer filed two detection-related patents in December 2024 and is actively selling its scanning capabilities to industry partners. This means Deezer's detection algorithms may be running behind the scenes at platforms you would not expect.

IRCAM Amplify represents the industrialization of detection. Processing 250,000 tracks per hour works out to roughly 69 tracks per second, or 6 million per day, enough to scan a distributor's upload volume as it arrives. At that throughput there is no need to queue tracks for batch processing; each one can be analyzed before it reaches a single listener.

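Authio is the accuracy benchmark. Its 12-model ensemble architecture, in which twelve specialized neural networks each analyze different aspects of the audio and vote on the final classification, achieves 99.42% accuracy with a false positive rate under 0.6%. That means fewer than 6 in 1,000 human tracks get incorrectly flagged. The share of AI tracks that slip through (the false negative rate) is not stated separately: the accuracy figure implies about 6 misclassifications per 1,000 tracks overall, and how those split between missed AI tracks and wrongly flagged human tracks depends on the mix of tracks tested.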
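A headline accuracy figure and a false positive rate do not, on their own, pin down how many AI tracks get through; that also depends on the mix of tracks in the test set. The sketch below works through two hypothetical confusion matrices that both yield 99.42% accuracy with a false positive rate under 0.6%, yet have very different miss rates. All counts are invented for the arithmetic and are not Authio's data.

```python
def confusion_rates(tp, fp, tn, fn):
    """Accuracy, false positive rate, and false negative rate from raw counts.

    tp/fn count AI tracks (caught / missed); tn/fp count human tracks
    (cleared / wrongly flagged).
    """
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "false_positive_rate": fp / (fp + tn),
        "false_negative_rate": fn / (fn + tp),
    }


# Hypothetical test set A: 5,000 AI tracks and 5,000 human tracks.
balanced = confusion_rates(tp=4971, fp=29, tn=4971, fn=29)

# Hypothetical test set B: 1,000 AI tracks and 9,000 human tracks.
skewed = confusion_rates(tp=960, fp=18, tn=8982, fn=40)

for name, rates in (("balanced", balanced), ("skewed", skewed)):
    print(name, {key: round(value, 4) for key, value in rates.items()})
```

In set A about 0.6% of AI tracks are missed; in set B the miss rate is 4%, even though the accuracy is identical. This is why per-class error rates matter more than a single accuracy number when comparing detectors.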

Cross-Platform Intelligence Sharing

This is the detail most creators miss, and it is arguably the most consequential development in the detection landscape. When Spotify, Apple Music, or Deezer flags a track, that information does not stay siloed. It flows back to the distributor.

DistroKid participates in cross-platform intelligence sharing. A track that gets pulled from Spotify can trigger scrutiny on every platform where it was distributed — Apple Music, Amazon Music, YouTube Music, Deezer, Tidal. One flag does not mean one takedown. It means simultaneous investigation across your entire distribution footprint.

Worse, DistroKid applies policy changes retroactively. Tracks that were accepted under previous, more lenient rules can be flagged and removed during routine sweeps under updated policies. There is no grandfather clause. If the detection systems improve — and they improve continuously — your previously approved tracks are not safe.

The implications are severe. A single detection event on one platform can cascade into account-wide consequences: song removals, revenue withholdings, account suspension, and permanent distribution bans. The intelligence sharing creates a unified detection net that is far more powerful than any individual platform's system.

The EU AI Act: Mandatory Watermarks by August 2026

If the detection technology were not enough, regulation is about to force the issue. Article 50 of the EU AI Act becomes enforceable on August 2, 2026, less than five months from now. It mandates that all AI-generated audio, video, image, and text content be labeled as artificially generated in a machine-readable format.

The requirements are specific. AI providers must ensure their outputs are marked using a "multilayered" approach that includes:

- Metadata identifiers: Machine-readable tags embedded in file metadata documenting AI generation, model used, and provenance chain.

- Interwoven watermarks: Imperceptible signals embedded directly into audio waveforms that survive compression, conversion, and processing.

- Digital signatures: Cryptographic signatures (C2PA standard) that provide tamper-evident proof of AI origin and creation chain.

Technical solutions must be "effective, interoperable, robust, and reliable." The Code of Practice is expected to be finalized in May-June 2026. Non-compliance carries penalties of up to 15 million EUR or 3% of global annual turnover — whichever is higher.
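The "whichever is higher" rule means the ceiling scales with company size. The sketch below computes that ceiling for two hypothetical turnover figures; it shows only the upper bound stated above, not how a regulator would set an actual fine.

```python
def max_fine_eur(global_turnover_eur: int) -> int:
    """Upper bound on the fine: the higher of EUR 15 million or 3% of turnover."""
    three_percent = global_turnover_eur * 3 // 100  # integer math avoids float rounding
    return max(15_000_000, three_percent)


# Hypothetical companies, by global annual turnover in EUR.
small_cap = max_fine_eur(300_000_000)    # 3% is 9M, so the 15M floor applies
large_cap = max_fine_eur(1_000_000_000)  # 3% is 30M, which exceeds 15M
print(small_cap, large_cap)  # 15000000 30000000
```

The crossover sits at EUR 500 million in turnover: below it the fixed EUR 15 million figure is the ceiling, above it the 3% figure takes over.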

The regulatory dynamic creates an interesting paradox. The EU AI Act will require AI music generators to embed even more identification markers into their output — more watermarks, more metadata, more signals for detectors to find. This means the detection surface will expand dramatically after August 2026. Every AI-generated track will carry mandatory, legally required identification that platforms can use to flag and restrict distribution.

For creators, this means the window is closing. The arms race is not trending toward equilibrium. It is trending toward a regulatory-technical environment where AI-generated audio becomes increasingly identifiable by design.

The Other Side: Artifact Removal Tools

On the opposite side of this arms race sit the tools designed to help creators get their music past detection systems. The landscape here is significantly smaller — and it just got smaller.

Spectrahertz, which offered professional AI-powered audio restoration including watermark detection and artifact removal, permanently shut down on March 10, 2026. The closure was attributed to legal pressure. Its website is dark. Its users are orphaned.

The open-source alternative — the ai-audio-fingerprint-remover GitHub project — was deprecated in December 2025 with an explicit note that it "cannot address content-based fingerprinting." It was a four-pass system (metadata stripping, spectral analysis, statistical normalization, humanization) that worked against earlier, simpler detection methods. Against 2026-era ensemble classifiers analyzing 72 features, it is inadequate.

That leaves two tools in the artifact removal space:

| Feature | Undetectr | TrackWasher |
|---|---|---|
| Spectral Smoothing | Yes | Yes |
| Timing Humanization | Yes | Unknown |
| Pitch Variation | Yes | Unknown |
| Metadata Cleanup | Yes | Unknown |
| Dynamic Range Restoration | Yes | Unknown |
| Integrated Mastering | Yes (-14 LUFS) | Yes |
| Pricing | One-time from $19 | One-time payment |
| Browser-Based | Yes | Yes |
| Format Support | MP3, WAV, FLAC | MP3, WAV, FLAC |
| Published Content/SEO | 28 blog posts | Minimal |

The Spectrahertz shutdown is a cautionary signal. Legal pressure eliminated one of only three tools in this space in a single stroke. The tools that remain operate in a gray zone — technically performing audio processing (which is legal), but doing so specifically to circumvent detection systems (which invites scrutiny). As the EU AI Act approaches enforcement, the legal environment for these tools becomes increasingly uncertain.

The Last Tool Standing

Spectrahertz is gone. The open-source option is deprecated. Don't wait for the next shutdown.

Try Undetectr before it's gone →

Where This Arms Race Is Headed

The trajectory is clear. Detection systems are getting more accurate, faster, and more widely deployed. Regulatory requirements will force generators to embed more identification signals. Intelligence sharing between platforms creates a unified net that is harder to navigate with each passing month.

But the arms race is not one-sided. Detection systems have known vulnerabilities. Researchers have demonstrated that simply resampling audio to 22.05 kHz caused one commercial detector to misclassify all Suno samples. Models trained on one platform's output can struggle with others. Some detectors identify production pipeline characteristics rather than genuine AI qualities — meaning DAW post-processing, effects chains, and analog re-recording can alter the signals they rely on.

The cat-and-mouse dynamic will persist because the incentives on both sides are enormous. The music industry sees AI-generated content as an existential threat to the economic model that sustains it — if anyone can generate professional-quality music for free, the entire value chain from songwriters to labels to publishers faces disruption. Platforms like Spotify are caught between two imperatives: they need content volume to retain subscribers, but they need content quality and authenticity to retain artist relationships and label deals.

Creators, meanwhile, have a simpler motivation: they made something they want people to hear. Whether it was generated by an AI, assisted by an AI, or produced entirely by a human is — to many of them — a distinction without a difference. The music exists. They want it on Spotify. The rest is politics.

Several developments to watch over the next 12 months:

- August 2, 2026: EU AI Act Article 50 enforcement begins. Expect mandatory watermarking compliance from all major generators.

- Deezer licensing expansion: As Deezer sells its detection tech to more platforms, expect uniform detection capabilities across previously independent systems.

- Suno V5 detection adaptation: V5's upgrade to 44.1 kHz audio changes the spectral fingerprint. Detection systems will need to retrain. There will be a window.

- YouTube Content ID evolution: YouTube is developing tools to detect AI-generated voices and faces. Audio detection will follow the same trajectory.

- DDEX metadata standards: Spotify's new DDEX metadata fields for AI disclosure (vocals, instruments, post-production) will become industry standard.

The question is not whether AI music will coexist with human music on streaming platforms. It will — Ditto Music already permits it, and the economic incentives for platforms to host more content are too strong. The question is under what conditions, with what disclosures, and after what processing. The arms race is not about whether AI music exists. It is about who controls the terms of its distribution.

Frequently Asked Questions

Don't Wait for the Next Shutdown

Detection is getting smarter. Regulation is arriving. Competitors are disappearing. Secure your music distribution pipeline while you still can.

Secure your music pipeline at Undetectr.com →