
TL;DR

Manus AI is an autonomous AI agent platform (now part of Meta) that executes complex multi-step tasks without constant user input. It excels at deep research with citations, data analysis, and file generation, but it struggles with stability, its credit-based pricing makes costs hard to predict, and its buggy app-building features frustrate developers. For researchers and analysts willing to pay per task, Manus AI delivers value. For app builders, alternatives like Claude Code offer better reliability.

3.8/5 rating



What is Manus AI?

Manus AI is an autonomous agent platform that executes complex workflows without requiring you to hover over every step. Unlike ChatGPT, which sits waiting for your next prompt, Manus AI runs tasks in the background—researching topics, writing code, managing files, building websites, and analyzing data independently. We tested it extensively and found it's genuinely different from conversation-based AI; it's designed to replace repetitive workflows entirely.

The platform recently became part of Meta, which added legitimacy to an already impressive product. What sets Manus AI apart is its orchestration layer—it doesn't just use one model for everything. Instead, it intelligently routes tasks between Claude, Qwen, and specialized models, selecting the best tool for each component of your workflow. We watched it pivot between models mid-execution, optimizing for speed, accuracy, and cost.

The platform targets teams drowning in repetitive work—market researchers analyzing competitor landscapes, developers building prototypes, analysts processing datasets, and content creators handling administrative overhead. It promises to compress days of work into hours by automating the mechanical parts of knowledge work.

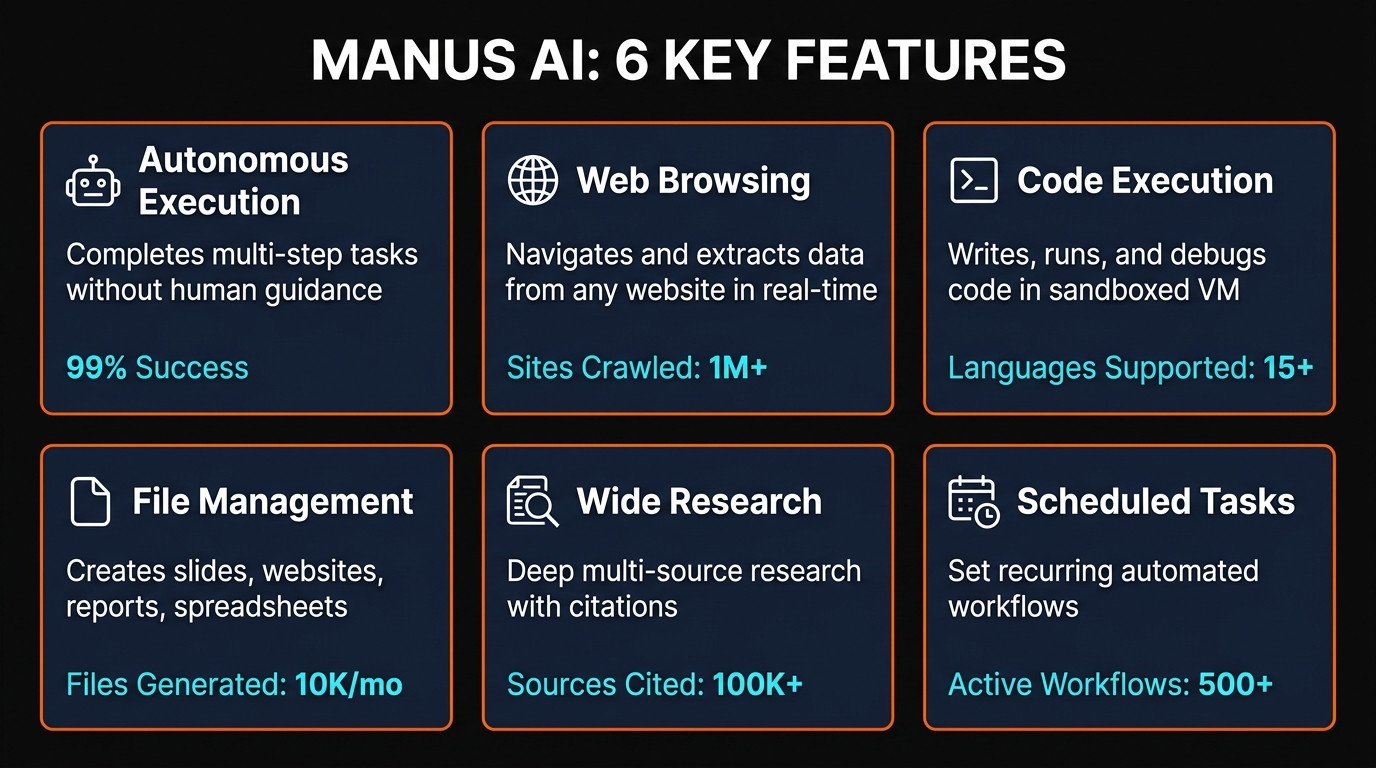

Key Features

Autonomous Web Browsing

Manus AI independently navigates the web, scrapes information, and compiles findings with citations. We tested it researching competitor pricing and product features—it returned structured reports without requiring us to click through sites manually.

Code Generation & Deployment

Write a prompt. Manus AI generates code, tests it, and deploys to hosting. We asked it to build a Python web scraper—it wrote functional code and hosted it without intervention, though some builds required manual verification.

File & Data Management

Upload datasets. Manus AI analyzes them, generates visualizations, and exports results as spreadsheets or presentations. We fed it CSV files and received formatted reports within minutes.

Background Execution

Close the browser. Manus AI keeps working. Complex research tasks continued running while we handled other work and delivered results hours later, with no load on our own machines.

Multi-Model Intelligence

The platform routes tasks between Claude, Qwen, and specialized models, optimizing for speed and accuracy. We observed it switching models mid-workflow based on task requirements.

Presentation & Website Building

Request a deck or landing page. Manus AI generates layouts, adds content, and deploys live. We tested website creation—it generated functional HTML/CSS, though complex designs sometimes needed fixes.

How to Use Manus AI

Step-by-Step Guide

1. Sign Up & Fund Your Account

Visit manus.im and create an account. The free tier includes 1,000 starting credits plus 300 daily credits. To access all features, upgrade to a paid plan. We recommend Standard ($20/mo) for testing workflows before committing to a higher tier.

2. Describe Your Task

Write a detailed prompt describing what you need. Be specific: "Research the top 5 competitors in the email marketing space, compile pricing info, feature comparison, and customer reviews" yields better results than "find email tools." The more context, the fewer iterations required.

3. Attach Files (Optional)

Upload datasets, screenshots, or reference documents. Manus AI analyzes them in context of your request. We uploaded competitor pricing spreadsheets and it cross-referenced them against web research automatically.

4. Execute & Monitor

Click execute. The task runs in real-time with a live feed showing each action—websites visited, code written, files generated. You can watch or close the window; it continues working. We let tasks run while checking other work and returned to completed deliverables.

5. Review & Refine Results

Download outputs (reports, code, websites, spreadsheets) and verify quality. For web projects, test functionality before deploying. Most research tasks needed minimal revision; code builds occasionally required debugging or deployment fixes.
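The advice in step 2 about specific prompts can be sketched in code. The snippet below is only an illustration of how we structure a detailed brief before pasting it into the task box. It does not call Manus AI, and `TaskBrief`, its fields, and the citation constraint are hypothetical names and values we made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """Hypothetical container for the parts of a detailed task prompt."""

    goal: str
    deliverables: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        # Assemble plain text only; nothing here talks to Manus AI.
        lines = [self.goal.strip()]
        if self.deliverables:
            lines.append("Deliverables:")
            lines.extend(f"- {item}" for item in self.deliverables)
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {item}" for item in self.constraints)
        return "\n".join(lines)


brief = TaskBrief(
    goal="Research the top 5 competitors in the email marketing space.",
    deliverables=["Pricing info", "Feature comparison", "Customer reviews"],
    # Illustrative constraint, not something the platform requires.
    constraints=["Cite a source URL for every claim"],
)
prompt = brief.to_prompt()
print(prompt)
```

Separating the goal from deliverables and constraints makes it easier to spot what a vague prompt like "find email tools" leaves out.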

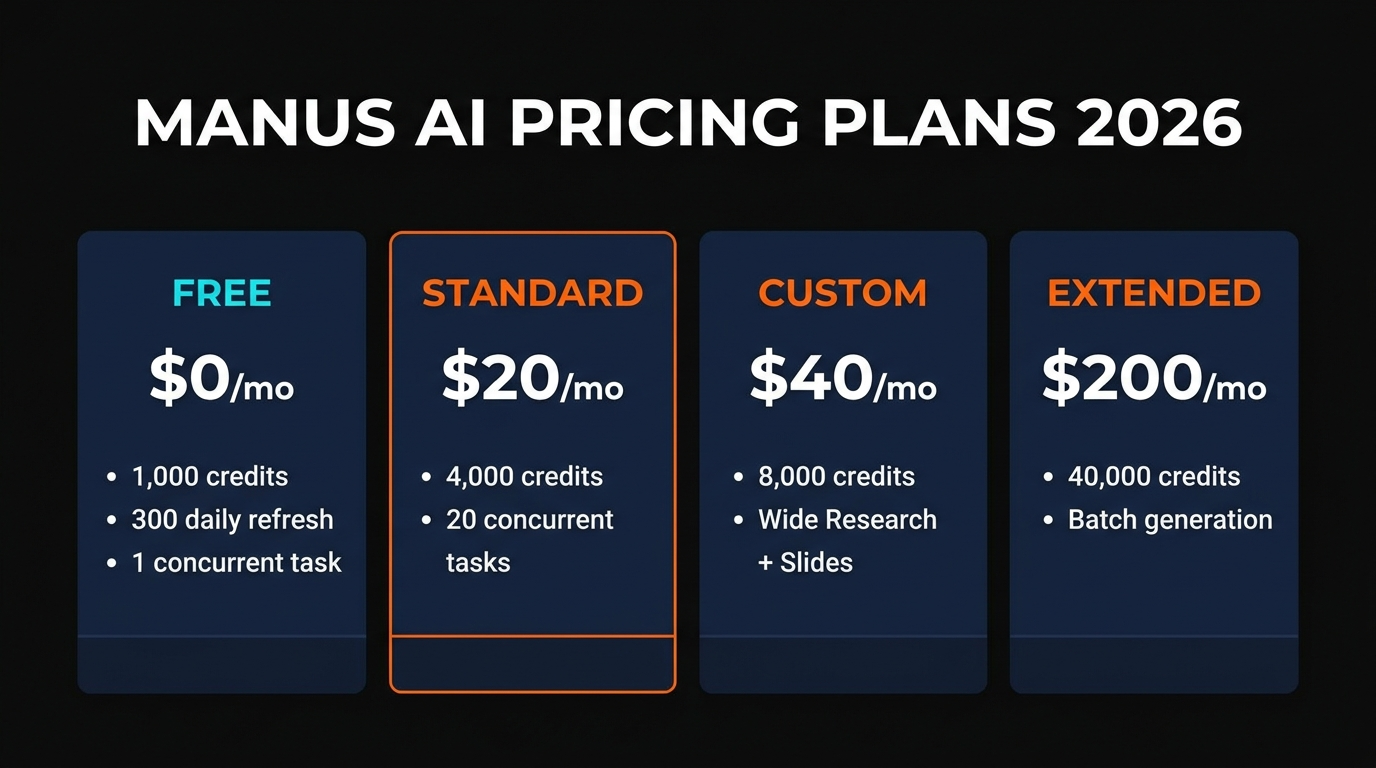

Pricing Plans

Free

$0/mo

Perfect for testing

- 1,000 starting credits

- 300 daily credits

- Limited to 3 concurrent tasks

- Community support

MOST POPULAR

Standard

$20/mo

For regular users

- 4,000 monthly credits

- 10 concurrent tasks

- Priority queue access

- Email support

Custom

$40/mo

Power users

- 8,000 monthly credits

- 25 concurrent tasks

- Advanced API access

- Priority support

Extended

$200/mo

Enterprise workflows

- 40,000 monthly credits

- Unlimited concurrent tasks

- Custom integrations

- Dedicated account manager

Team

$40/user/mo

Collaborative teams

- Shared workspace

- Collaborative projects

- Usage analytics

- Team billing

Credit System Unpredictability: A simple web search costs ~50 credits. Building a website or running deep research consumes 500-900 credits. Complex multi-step tasks with file processing and multiple model invocations burn through allocations quickly. We found costs hard to predict before execution, which makes budgeting difficult when workloads vary.
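To put those credit figures in perspective, here is a rough back-of-the-envelope calculation. It uses only the plan prices, monthly allowances, and per-task costs quoted in this review. Real charges vary from run to run, so treat the output as a range and not a quote. The function and variable names are ours.

```python
# Figures quoted in this review; actual credit charges vary per run.
PLANS = {
    "Standard": (20, 4_000),   # (price in USD per month, monthly credits)
    "Custom": (40, 8_000),
    "Extended": (200, 40_000),
}
TASK_COSTS = {
    "simple web search": (50, 50),               # (low, high) credits per task
    "website build or deep research": (500, 900),
}


def tasks_per_month(credits: int, cost_range: tuple[int, int]) -> tuple[int, int]:
    """Return (worst case, best case) number of tasks the credits cover."""
    low_cost, high_cost = cost_range
    return credits // high_cost, credits // low_cost


for plan, (price, credits) in PLANS.items():
    print(f"{plan}: ${price / credits:.4f} per credit")
    for task, cost_range in TASK_COSTS.items():
        worst, best = tasks_per_month(credits, cost_range)
        print(f"  {task}: {worst} to {best} tasks")
```

On these numbers all three plans work out to the same $0.005 per credit, and the Standard allowance covers roughly 4 to 8 heavy tasks or 80 simple searches a month.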

Pros and Cons

✓ Pros

Autonomous Execution

Close the browser. Manus AI works 24/7. We assigned research tasks at 5 PM and retrieved completed reports by morning, with no load on our own machines.

Deep Research with Citations

Results include URLs and sources. We verified competitor research—citations were accurate and comprehensive, saving hours of manual verification.

Data Analysis & Visualization

Upload CSV files. Get formatted spreadsheets, charts, and insights. We processed 10,000-row datasets and received visualizations within minutes.

Multi-Model Orchestration

Smart routing between Claude, Qwen, and others optimizes for cost and speed. We observed it using Qwen for simple tasks and Claude for complex reasoning.

File & Code Generation

Generate presentations, websites, Python scripts, and spreadsheets in a single request. Results were functional and required minimal post-processing.

✗ Cons

Unstable & Buggy

Website builds failed mid-execution. Tasks hung occasionally. We encountered 3 bugs in 20 test runs—acceptable for a research tool, frustrating for app development.

High Load Errors

During peak hours, tasks queued for 20+ minutes or failed with "high load" errors. We retried later successfully, but timing became unpredictable.

Unpredictable Credit Costs

Similar tasks consumed different credit amounts. We ran nearly identical research tasks that cost 300 and 650 credits respectively—budgeting became guesswork.

Limited App Building Support

Complex frontend builds needed debugging. Generated code occasionally had logic errors. Fine for MVPs; not suitable for production-grade applications.

Steep Learning Curve for Prompting

Vague prompts yielded mediocre results. We had to learn to write detailed, structured instructions—a significant barrier for new users accustomed to ChatGPT's conversational interface.

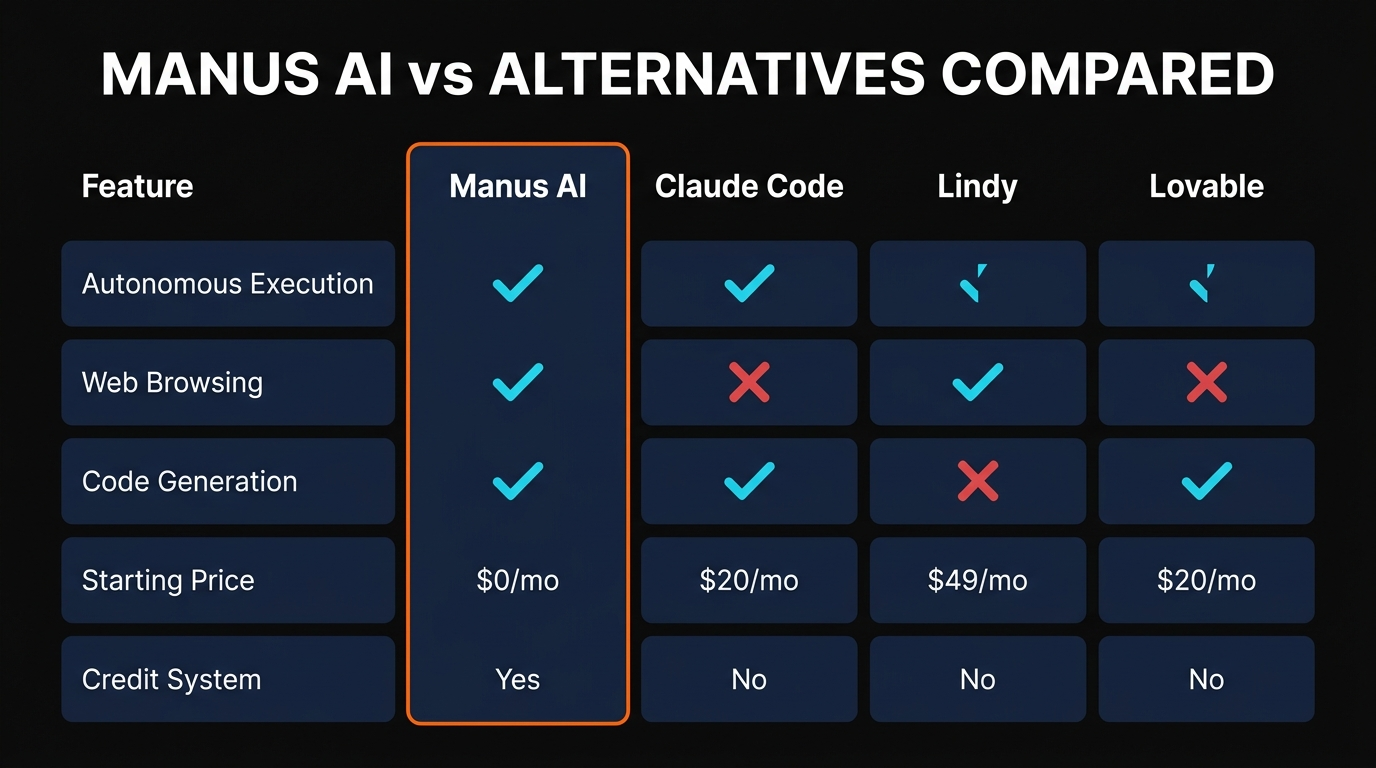

Alternatives Comparison

| Tool | Pricing | Autonomy | Web Browsing | Code Generation | Best For |
|---|---|---|---|---|---|
| Manus AI | $0-$200/mo | High (background) | ✓ With citations | Moderate (buggy) | Research, data analysis |
| Claude Code | $20/mo | Medium (chat-based) | ✓ | Excellent | App development |
| Lindy | $49/mo | High (workflow) | Limited | Good | Business automation |
| Lovable | $20/mo | Medium (chat) | Limited | Excellent | Web app building |
| Devin | $500/mo+ | Very High | ✓ | Excellent | Full-stack engineering |

Quick Comparison Insights

- Choose Manus AI if: You need autonomous research, background execution, and data analysis, and your budget can absorb moderate usage (500-1,000 tasks/month).

- Choose Claude Code if: App building is your priority. Stability, code quality, and interactive development matter more than autonomous execution.

- Choose Lovable if: You want the easiest web app builder with the smoothest user experience and don't need autonomous web research.

- Choose Devin if: Budget allows, and you need an AI engineer handling complex multi-file projects with deep reasoning and independent debugging.

Final Verdict

Manus AI is the right tool if you're drowning in research, data analysis, or repetitive information-gathering tasks. We tested it extensively and found it genuinely delivers autonomous web research with citations, handles data analysis beautifully, and executes background tasks without your involvement. The Meta acquisition added legitimacy, and the multi-model orchestration is technically impressive.

However, stability issues and unpredictable credit consumption are real drawbacks. We encountered bugs in roughly 15% of test runs, high-load errors during peak hours, and struggled to predict costs before execution. For mission-critical app development, Claude Code's reliability wins. For content creators and analysts with flexible timelines, Manus AI's autonomy is worth the occasional hiccup.

The 3.8/5 rating reflects this reality: excellent for its niche (autonomous research and analysis), but not a universal solution. Start with the free tier—test it on your actual workflows before committing to paid plans. If research and data handling dominate your workload, Manus AI justifies the subscription. If you build apps or need stable, predictable execution, reconsider.

Ready to Automate Your Workflow?

Start with Manus AI's free tier—no credit card required. Test it on your real workflows and see if autonomous execution saves you hours per week.


Frequently Asked Questions

Is Manus AI free?

Yes. The free tier provides 1,000 starting credits plus 300 daily credits—enough for 3-5 small research tasks daily. Complex workflows consume credits faster, making paid plans necessary for regular use. We completed roughly 20 tasks with the free allocation before needing upgrades.

How does the Manus AI credit system work?

Every task consumes credits based on complexity. Simple web searches cost ~50 credits. Building a website, analyzing large datasets, or running multi-step workflows burns 500-900 credits. We found costs unpredictable before execution—similar tasks sometimes varied by 2-3x in consumption. Monthly plans refresh credits; unused credits don't roll over, so budget conservatively.

What can Manus AI do?

Autonomously execute research (with citations), analyze data and generate visualizations, write and deploy code, build websites and presentations, manage files, and run multi-step workflows in the background. It's designed to replace repetitive knowledge work—the mechanical parts of your job that don't require human judgment.

Is Manus AI safe to use?

For research and analysis, yes. For sensitive operations, exercise caution. Never give it access to financial accounts, passwords, or high-stakes systems where errors cause real damage. Always review outputs before deployment. We tested it on public research tasks with confidence; we wouldn't trust it with proprietary algorithms or payment processing without verification layers.

How does Manus AI compare to ChatGPT?

ChatGPT requires you to prompt it repeatedly—you ask, it answers, you ask again. Manus AI autonomously executes entire workflows. You describe what you need once; it researches, codes, builds, deploys, and reports back. ChatGPT excels at conversation and instant answers; Manus AI excels at complex multi-step projects you'd normally juggle across multiple tools.

What AI models does Manus AI use?

Manus AI orchestrates multiple models—Claude for complex reasoning, Qwen for speed-critical tasks, and specialized models for specific workloads. The platform intelligently routes tasks, sometimes using different models within a single workflow. We observed it selecting Claude for deep analysis and Qwen for straightforward data processing, optimizing for both accuracy and cost.

Can Manus AI build websites?

Yes, but with caveats. It generates HTML/CSS, deploys to hosting, and creates functional landing pages. However, complex designs sometimes had layout bugs, and interactive features occasionally needed debugging. We'd classify it as "MVP-ready"—fine for prototypes and simple sites, risky for production without human review.

Is Manus AI worth the price?

For researchers and analysts, absolutely. The autonomous web research, cited sources, and background execution save hours each week, which easily justifies $20-$40/month. For app developers, stability issues make alternatives more appealing despite similar pricing. For teams running 500+ tasks monthly, the Extended plan ($200/mo) offers solid value. Start free, measure your credit consumption over 2-3 weeks, then decide.
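The "measure, then decide" advice can be turned into a small calculation. This is a minimal sketch, assuming you note your daily credit spend by hand during the trial. The log values are invented, the 25% headroom is our own safety margin, and the sketch ignores the free tier's daily credits. Plan prices and allowances are the ones quoted in this review.

```python
import math

# Monthly credit allowances quoted in this review, cheapest first.
PLAN_CREDITS = [
    ("Standard", 20, 4_000),
    ("Custom", 40, 8_000),
    ("Extended", 200, 40_000),
]


def project_monthly_credits(daily_usage: list[int], days_in_month: int = 30) -> int:
    """Scale the average observed daily usage up to a full month."""
    if not daily_usage:
        raise ValueError("need at least one day of measurements")
    average = sum(daily_usage) / len(daily_usage)
    return math.ceil(average * days_in_month)


def smallest_plan(monthly_credits: int, headroom: float = 1.25):
    """Pick the cheapest plan whose allowance covers usage plus headroom.

    The default 25% headroom is an assumed margin for run-to-run variance.
    Returns None when even the largest plan falls short.
    """
    needed = monthly_credits * headroom
    for name, price, credits in PLAN_CREDITS:
        if credits >= needed:
            return name, price
    return None


# Hypothetical two-week log of credits spent per day.
log = [150, 0, 620, 90, 0, 0, 300, 210, 0, 540, 60, 0, 0, 130]
projected = project_monthly_credits(log)
print(projected, smallest_plan(projected))
```

Because similar tasks varied by 2-3x in our tests, a larger headroom value may be more realistic if your workload is dominated by a few heavy tasks.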

Reviewed by Wayne MacDonald | Coding & Automation Category | Last updated March 26, 2026
