Multi-Agent Claude Code + Telegram: Build Your Own OpenCode in One Afternoon

AI Infrastructure Lead

⚡ Key Takeaways

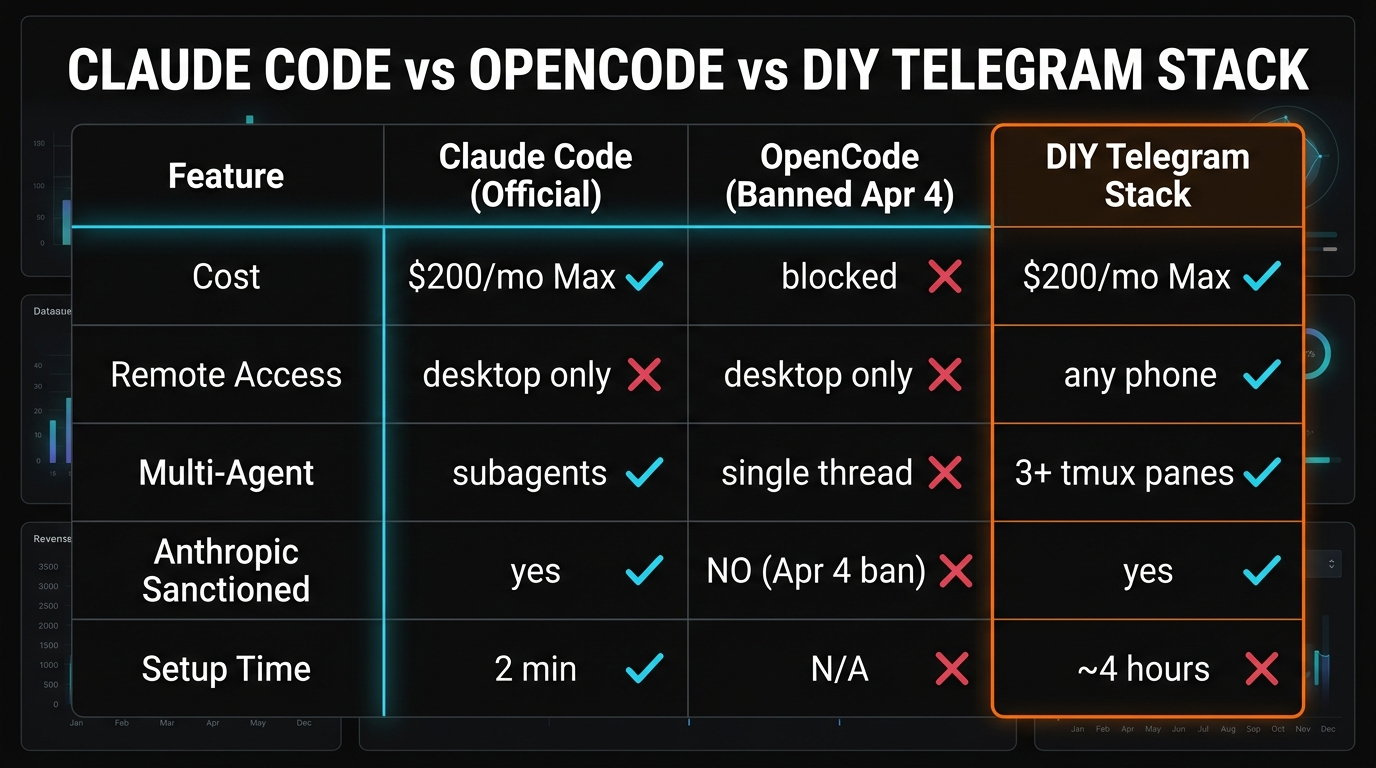

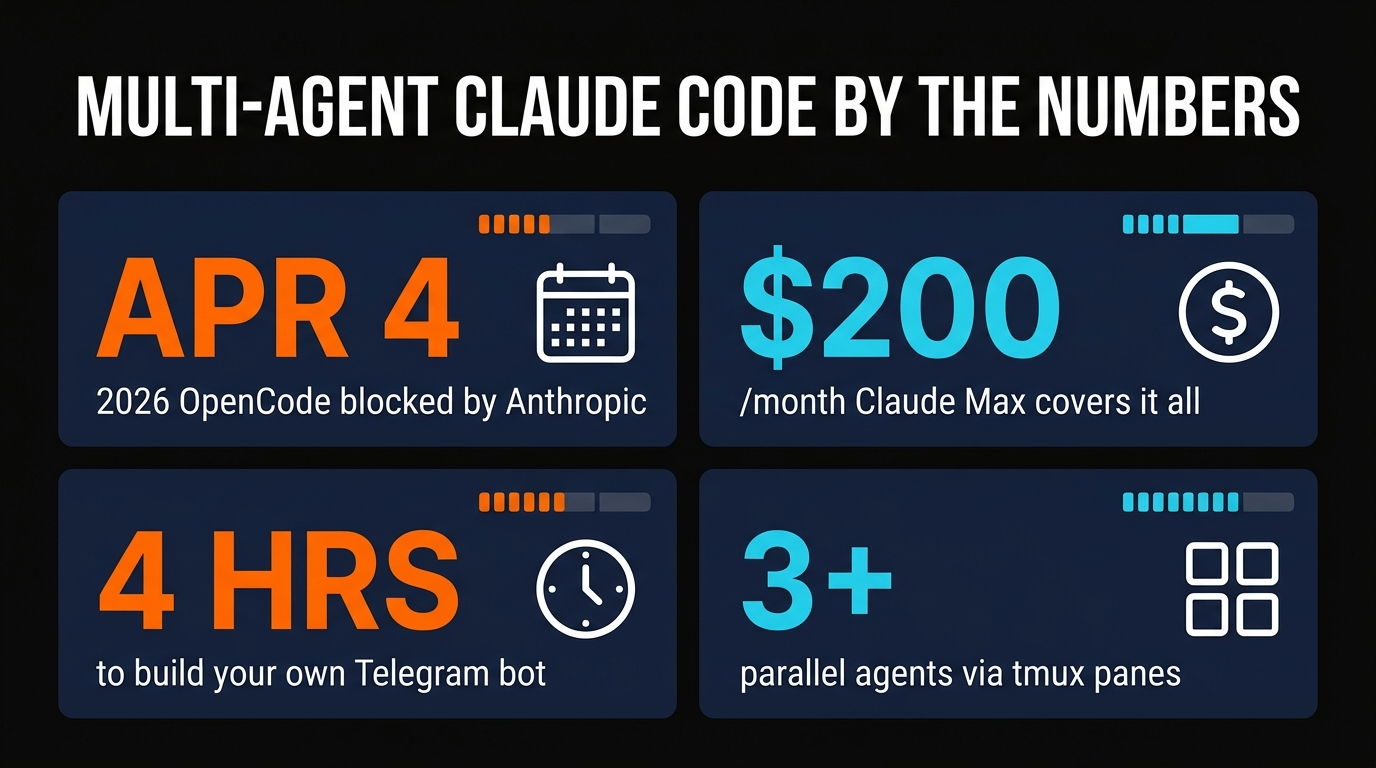

- Anthropic fully blocked OpenCode and other third-party harnesses on April 4, 2026 at 12:00 PM PT — subscription OAuth tokens now work only in official Claude Code and claude.ai.

- You can rebuild OpenCode's agent-delegation experience on top of official Claude Code using tmux panes, a Python Telegram bot, and the native /delegate subagent system.

- Everything runs under a single Claude Max subscription ($200/month): no API bills, no per-token surprises, no extra auth.

- Total build time: about one afternoon. Total net new code: ~250 lines of Python plus a CLAUDE.md per agent.

- Why the OpenCode era ended on April 4

- Why we rebuilt on Claude Code (not another harness)

- Architecture: tmux panes + Telegram bot + subagents

- The 4-hour build: telegram bot, orchestrator, workers

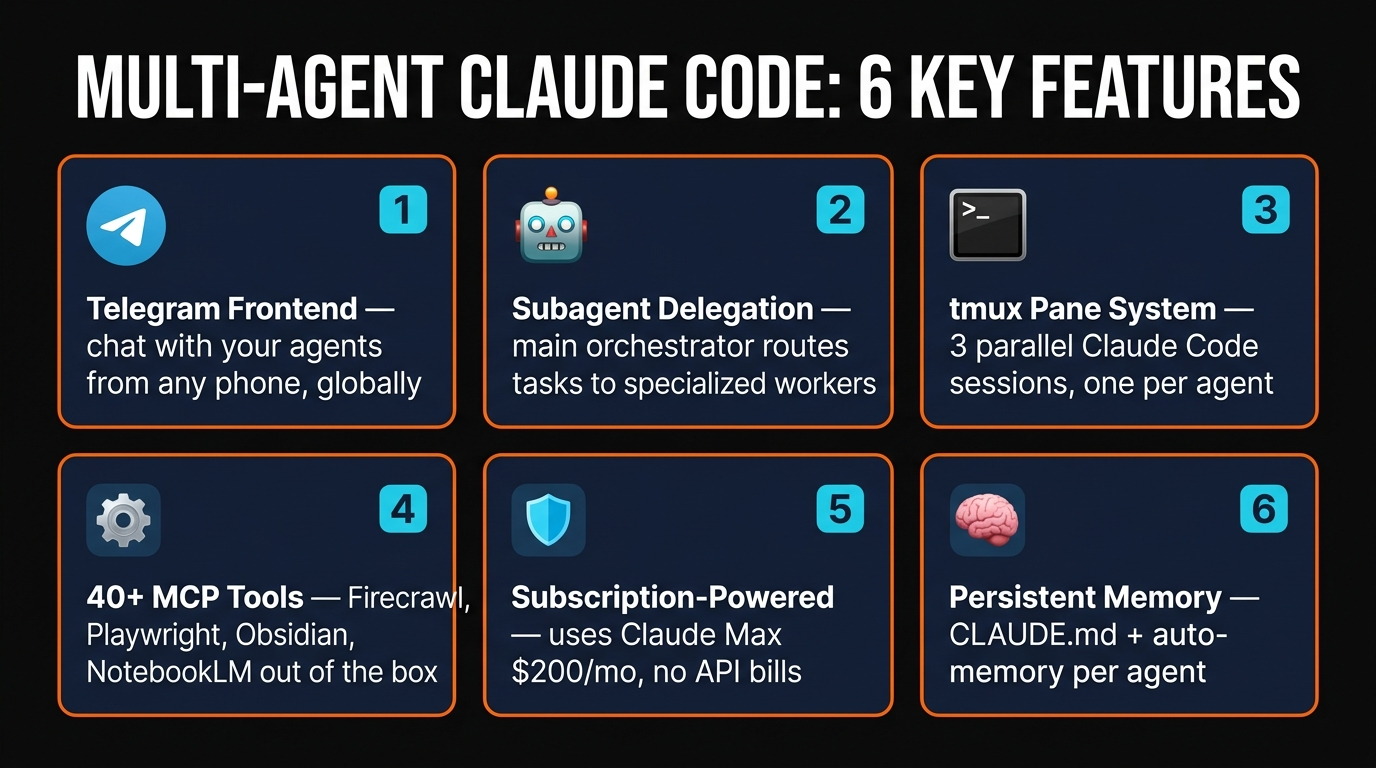

- Six features that make this worth building

- Multi-agent delegation in practice

- Cost math: why $200/mo is the whole bill

- Scaling past three agents

- FAQ

We spent most of March running our content pipeline through OpenCode. It was cheap, fast, and we could swap models between Claude, GLM 4.6, and GPT-5 Codex in one keystroke. Then on April 4, 2026 at 12:00 PM PT, Anthropic flipped a switch and our entire harness stopped working. Subscription OAuth tokens had been killed for everything except the official Claude Code client — and we had three writers mid-deadline.

This article is what we built the next morning: a Telegram-controlled multi-agent system that runs on top of official Claude Code, uses a single Claude Max subscription, and costs exactly $200/month to operate regardless of how many tokens we burn. If you miss OpenCode's "delegate to a worker" UX — or you just want a multi-agent setup you can pilot from your phone — this is the blueprint.

Why the OpenCode era ended on April 4

OpenCode became popular in late 2025 because it let you plug any Claude subscription (Free, Pro, or Max) into a terminal harness that felt faster and more flexible than Claude Code. The cost story was irresistible: $200/month of Max usage, unlimited local runs, and bring-your-own-model support for Gemini, GLM, and GPT-5.

Anthropic had been warning about this since February. Their stance — clarified in an updated usage policy — is that the OAuth tokens issued to Claude Free, Pro, and Max subscribers are "only intended for Claude Code and claude.ai," and any other client is a Terms of Service violation. On February 20, OpenCode pushed a commit removing Claude subscription keys "per legal request." On April 4, server-side token validation landed and every third-party harness — OpenCode, custom CLIs, self-hosted wrappers — stopped returning 200s.

For solo devs this was a $200 tax with no real workaround. For us running three writers on one subscription it meant a full rebuild. The fastest path, we decided, was not to find another loophole — it was to make official Claude Code behave like OpenCode by wrapping it in a Telegram bot.

Why we rebuilt on Claude Code (not another harness)

We looked at three alternatives before committing: Aider with its own billing, Cursor's composer, and Google's Gemini CLI. None of them came close to Claude Code for three reasons that matter for a multi-agent setup.

First, Claude Code ships with a real subagent system. The Agent tool lets a parent session spawn a child session with its own context window, own system prompt, and own tool access. Parallel child sessions don't pollute parent context — which is exactly what we need for "content writer," "researcher," and "dev" running simultaneously.

Second, Claude Code's skills and MCP plumbing mean you inherit a huge tool ecosystem for free. We're wired into Firecrawl, Playwright, Jina, Obsidian, NotebookLM, and Google Workspace without writing a single adapter — and every skill our orchestrator installs is immediately available to every subagent. OpenCode had MCP too, but the skill system was bolted on.

Third, Claude Code is the only place your Max tokens actually work now. Every other harness, no matter how clever its UI, either routes through the paid API (at real per-token rates) or gets token-rejected the moment it tries to authenticate with your subscription.

Architecture: tmux panes + Telegram bot + subagents

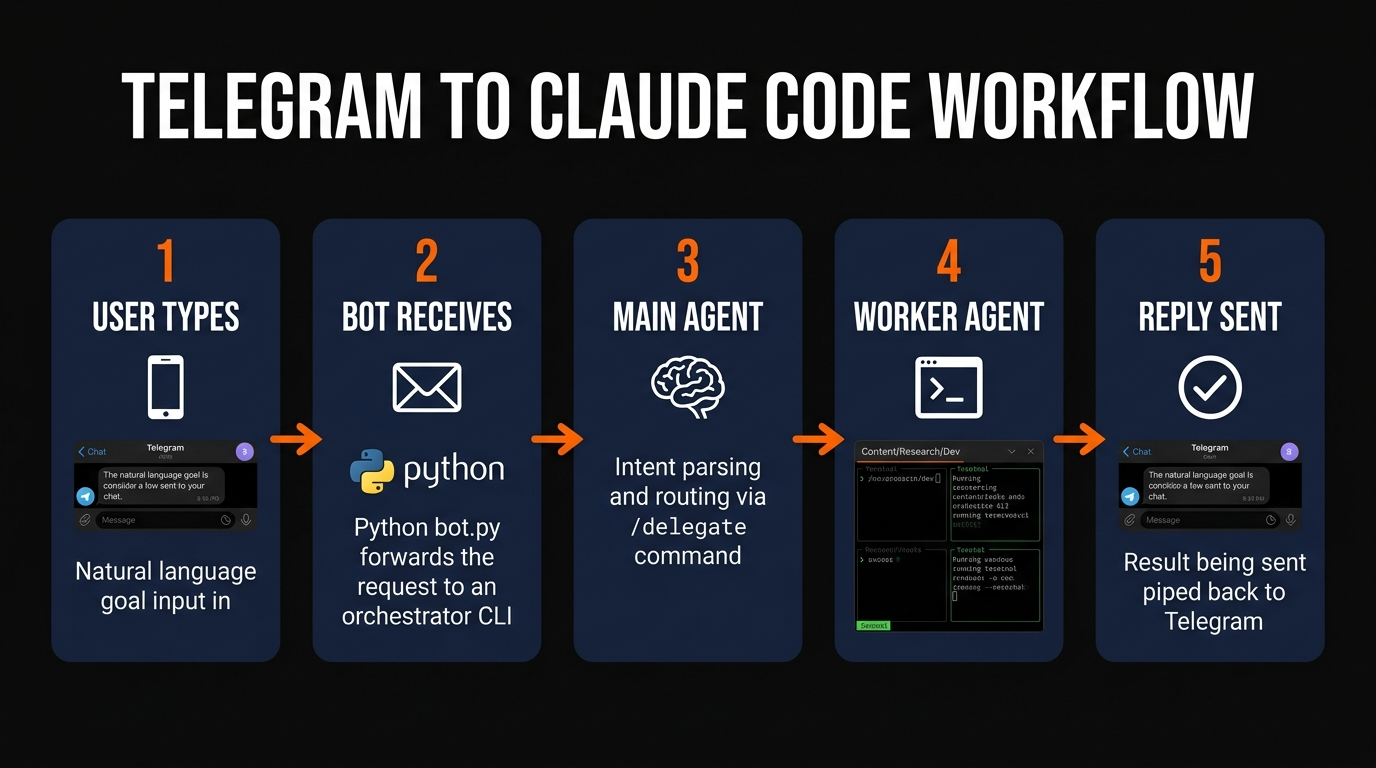

The whole stack is four moving parts. A Telegram bot process listens for messages. An "orchestrator" Claude Code session reads the messages and decides which worker should handle them. Three "worker" Claude Code sessions live in separate tmux panes and do the actual work. A tiny dispatcher script pipes messages between them.

Telegram Bot (Python)

~40 lines of python-telegram-bot. Listens on a long poll and types every inbound message into the orchestrator pane with tmux send-keys.

Orchestrator Pane

Pane 0 of a tmux session running claude. Its CLAUDE.md tells it to parse intent and delegate.

Three Worker Panes

Panes 1–3 each running Claude Code in a different worktree: content/, research/, dev/.

Dispatcher Script

~60 lines of shell that uses tmux send-keys to route messages from orchestrator to worker panes and back.

Shared Skills Folder

One ~/.claude/skills/ folder every pane reads — Firecrawl, Playwright, Obsidian, Jina, the full stack.

Auto-Memory Per Agent

Each worker has its own memory/ directory so content writer learnings don't leak into the dev agent's feedback.
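To make the routing concrete, here is a minimal Python sketch of what the dispatcher does. The article describes the dispatcher as a shell script, so this is only an illustration of the same idea. The `WORKER_PANES` mapping and all helper names are hypothetical, and the targets assume tmux's `session:window.pane` addressing with all four panes living in window 0 of a session named `agents`.

```python
import subprocess

# Hypothetical mapping from worker name to tmux pane target.
# Assumes session "agents", window 0, orchestrator in pane 0, workers in panes 1-3.
WORKER_PANES = {
    "CONTENT": "agents:0.1",
    "RESEARCH": "agents:0.2",
    "DEV": "agents:0.3",
}


def send_command(worker: str, msg: str) -> list[list[str]]:
    """Build the tmux commands that type msg into a worker pane and press Enter."""
    target = WORKER_PANES[worker]
    return [
        # -l sends the text literally; "--" stops option parsing for text starting with "-"
        ["tmux", "send-keys", "-t", target, "-l", "--", msg],
        ["tmux", "send-keys", "-t", target, "Enter"],
    ]


def capture_command(worker: str) -> list[str]:
    """Build the tmux command that prints the visible contents of a worker pane."""
    return ["tmux", "capture-pane", "-t", WORKER_PANES[worker], "-p"]


def dispatch(worker: str, msg: str) -> None:
    """Run the send commands; requires a running tmux session."""
    for argv in send_command(worker, msg):
        subprocess.run(argv, check=True)


def tail(worker: str, lines: int = 30) -> str:
    """Return the last `lines` lines currently shown in the worker pane."""
    result = subprocess.run(capture_command(worker), check=True,
                            capture_output=True, text=True)
    return "\n".join(result.stdout.splitlines()[-lines:])
```

Keeping the command builders separate from the functions that run them means the routing logic can be checked without a live tmux session.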

The 4-hour build: telegram bot, orchestrator, workers

Step one is the Telegram bot. Create one via @BotFather, grab the token, and drop it in .env. The Python bot uses python-telegram-bot and types every text message into the orchestrator pane with tmux send-keys.

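    import os
    import subprocess

    from telegram import Update
    from telegram.ext import Application, MessageHandler, filters, ContextTypes

    # session "agents", window 0, pane 0 (tmux targets are session:window.pane)
    ORCHESTRATOR_PANE = "agents:0.0"

    async def relay(update: Update, ctx: ContextTypes.DEFAULT_TYPE):
        msg = update.message.text
        # -l sends the text literally, so a message like "Enter" is not read as a key name
        subprocess.run(["tmux", "send-keys", "-t", ORCHESTRATOR_PANE, "-l", "--", msg])
        subprocess.run(["tmux", "send-keys", "-t", ORCHESTRATOR_PANE, "Enter"])
        await update.message.reply_text("routed to orchestrator")

    app = Application.builder().token(os.environ["TG_TOKEN"]).build()
    # Skip /commands so only plain text reaches the orchestrator
    app.add_handler(MessageHandler(filters.TEXT & ~filters.COMMAND, relay))
    app.run_polling()

Step two is the orchestrator's CLAUDE.md. This is the entire prompt; everything else is Claude Code's standard tool suite.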

    # Orchestrator

    You are the main router for a multi-agent system. When a user message arrives:

    1. Decide which worker should handle it: CONTENT (pane 1), RESEARCH (pane 2), or DEV (pane 3).
    2. Forward it via `tmux send-keys -t agents:0.{pane} "{msg}" Enter`.
    3. Watch the worker pane via `tmux capture-pane -t agents:0.{pane} -p | tail -30`.
    4. When the worker finishes, summarize the result back to Telegram using
       `curl -s https://api.telegram.org/bot$TG_TOKEN/sendMessage ...`.

    Never try to do the task yourself. You are a router only.
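The reply step in the prompt leaves the request parameters elided. As a hedged illustration, the Telegram Bot API's `sendMessage` method takes a `chat_id` and a `text`, and the same call can be sketched in standard-library Python. The `TG_CHAT_ID` environment variable and both function names are assumptions for this sketch; the article does not say where the chat ID is stored.

```python
import json
import os
import urllib.parse
import urllib.request


def build_send_message(token: str, chat_id: str, text: str) -> urllib.request.Request:
    """Build a POST request for the Telegram Bot API sendMessage method."""
    url = f"https://api.telegram.org/bot{token}/sendMessage"
    body = urllib.parse.urlencode({"chat_id": chat_id, "text": text}).encode()
    return urllib.request.Request(url, data=body, method="POST")


def send_message(text: str) -> dict:
    """Send text to the configured chat and return the decoded API response."""
    # TG_CHAT_ID is a hypothetical variable name, not one the article defines.
    req = build_send_message(os.environ["TG_TOKEN"], os.environ["TG_CHAT_ID"], text)
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.load(resp)
```

Telegram caps a single text message at 4096 characters, so a long worker summary would need to be trimmed or split before sending.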

Step three is the worker CLAUDE.mds. Each one is three paragraphs: a role ("You are the content writer — follow blog-post-gold-standard.md"), a tool allowlist, and a completion signal ("when you finish, run echo DONE | tee /tmp/done"). The orchestrator polls /tmp/done every few seconds so it knows when to reply.
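The polling side of that completion signal can be sketched in a few lines of Python. This is an illustration, not the orchestrator's actual mechanism (which is Claude Code running shell commands). One assumption worth flagging: with three workers all writing to the same /tmp/done, the orchestrator cannot tell which one finished, so the sketch takes the marker path as a parameter and a per-worker file such as /tmp/done-content (a hypothetical name) could be passed in.

```python
import time
from pathlib import Path


def wait_for_done(marker: Path, timeout: float = 3600.0, interval: float = 3.0) -> bool:
    """Poll until marker exists, then delete it. Return False on timeout."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if marker.exists():
            marker.unlink()  # clear the signal so the next task starts clean
            return True
        time.sleep(interval)
    return False
```

Deleting the marker after reading it matters: a stale file left over from the previous task would otherwise make the next task look finished immediately.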

Step four is the tmux layout. Open a session with four panes and launch claude in each:

    # Targets use session:window.pane. A bare agents:1 would mean window 1,
    # which does not exist here because every pane lives in window 0.
    tmux new-session -d -s agents
    tmux send-keys -t agents:0.0 "cd ~/orchestrator && claude" Enter
    tmux split-window -h -t agents:0.0
    tmux send-keys -t agents:0.1 "cd ~/workers/content && claude" Enter
    tmux split-window -v -t agents:0.1
    tmux send-keys -t agents:0.2 "cd ~/workers/research && claude" Enter
    tmux split-window -v -t agents:0.2
    tmux send-keys -t agents:0.3 "cd ~/workers/dev && claude" Enter
    tmux attach -t agents

Step five is running python bot.py in its own pane (or under systemd if you want it always on). From then on, every Telegram message you send hits your orchestrator, which routes it to the right worker and replies to your phone.

Multi-agent delegation in practice

Here's an actual conversation from our stack yesterday, stripped of slugs and keys. We asked the bot to produce a research brief, a first draft, and a deployment script for a new article — all in one message.

Me (Telegram): "Do a competitor scan of the 5 top-ranking articles for 'best ai agent frameworks 2026,' then have content draft a 2500-word comparison, and have dev prep the publish script when content finishes."

Orchestrator (30s later): "Routed to RESEARCH for the scan. Will ping CONTENT with the brief when results land."

Orchestrator (11m later): "RESEARCH done, 5 articles summarized. Brief forwarded to CONTENT. Draft in progress."

Orchestrator (38m later): "CONTENT finished 2,612 words. DEV is now generating the publish script. I'll ping you when it's ready to run."

The thing OpenCode users will miss most is multi-model routing — the old "use GLM for cheap stuff, Sonnet for hard stuff" trick. You can approximate it in Claude Code by telling the orchestrator which skill to hand off to: "research tasks use Firecrawl and Jina (cheap), dev tasks use the full code tool suite (expensive)." You're still stuck on Sonnet/Opus for generation, but the total bill is capped at $200/mo, so the incentive to pinch pennies drops.

Cost math: why $200/mo is the whole bill

Claude Max's subscription price covers all the usage across every tmux pane on a single machine. We burned through roughly 48 hours of agent time last week on this setup — the equivalent of about $900 on the paid API — and the monthly bill was unchanged at $200. Here's how the numbers broke down:

| Scenario | Paid API cost | DIY Telegram Stack |
|---|---|---|
| Casual use (~4h/day) | ~$180/mo | $200/mo (flat) |
| Full-time multi-agent (~8h/day × 3 agents) | ~$1,200/mo | $200/mo (flat) |
| Heavy research runs (Firecrawl, Jina, 24/7) | ~$3,000/mo | $200/mo + Firecrawl/Jina fees |

There's a fair-use ceiling — Anthropic will throttle you if you max out a single account across 24-hour windows — but in practice we've never hit it with three agents. If you did, the answer is two Max seats ($400/mo), which still beats the paid API dramatically.
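The comparison is simple subtraction, and writing it out shows where the flat plan wins and where it does not. The figures below are the approximate estimates from the table; the function name is just for illustration, and the heavy-research row ignores Firecrawl/Jina fees, which are billed separately either way.

```python
MAX_SEAT_USD = 200  # Claude Max price per month, as quoted in the article

# Approximate paid-API estimates from the table (USD per month).
API_ESTIMATES = {
    "casual": 180,
    "full_time_multi_agent": 1200,
    "heavy_research": 3000,
}


def monthly_savings(api_cost: int, seats: int = 1) -> int:
    """Paid-API estimate minus the flat subscription; negative means the API is cheaper."""
    return api_cost - seats * MAX_SEAT_USD


for scenario, api_cost in API_ESTIMATES.items():
    print(f"{scenario}: {monthly_savings(api_cost):+d} USD/month vs the paid API")
```

On these numbers casual use comes out about $20/month cheaper on the paid API, full-time multi-agent use saves about $1,000/month, and even with two Max seats the heavy-research scenario saves about $2,600/month.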

Scaling past three agents

The pattern scales linearly. Each new worker is a new tmux pane, a new claude instance, and a new CLAUDE.md. We've run seven agents on one laptop — content, research, dev, QA, SEO auditor, social, and image pipeline — without hitting resource ceilings. The bottleneck is your terminal layout, not the subscription.
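Since each worker is the same three ingredients, the tmux layout can be generated rather than typed. The sketch below builds the commands for any list of workers. The function name, the `~/workers/<name>` directory convention beyond the three workers shown earlier, and the use of the `tiled` layout are assumptions, and it relies on tmux's default zero-based window and pane numbering.

```python
def layout_commands(workers: list[str], session: str = "agents",
                    root: str = "~/workers") -> list[list[str]]:
    """Return tmux argv lists that start an orchestrator plus one pane per worker."""
    window = f"{session}:0"
    cmds = [
        ["tmux", "new-session", "-d", "-s", session],
        ["tmux", "send-keys", "-t", f"{window}.0", "cd ~/orchestrator && claude", "Enter"],
    ]
    for i, name in enumerate(workers, start=1):
        # Split the most recently created pane; the new pane is placed right after it
        cmds.append(["tmux", "split-window", "-t", f"{window}.{i - 1}"])
        # Re-tile after each split so panes do not become too small to split again
        cmds.append(["tmux", "select-layout", "-t", window, "tiled"])
        cmds.append(["tmux", "send-keys", "-t", f"{window}.{i}",
                     f"cd {root}/{name} && claude", "Enter"])
    return cmds
```

Running it is a loop over `subprocess.run(argv, check=True)` for each returned command, followed by `tmux attach -t agents`. Adding a seventh agent then means adding one name to the list and one CLAUDE.md to its directory.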

If you want Discord or Slack front ends instead of Telegram, the same dispatcher pattern works — swap python-telegram-bot for discord.py or the Slack bolt SDK. Our Claude Code channels guide covers the Discord variant in detail.

For teams, two upgrades are worth making on day two: push the whole tmux session into a cheap VPS (so your laptop can sleep), and wire the orchestrator to a shared Git branch so its decisions are auditable. Our day-one skills guide lists the 10 MCP plugins we install on every new machine to get the stack usable in 10 minutes.

Frequently Asked Questions

Agent tool inside a single pane when you want an ephemeral subagent for a one-off task. Our orchestrator uses both.