Hermes 4 35B A3B: The Free Local AI Model Built for Agents (2026 Hands-On)

AI Infrastructure Lead

⚡ Key Takeaways

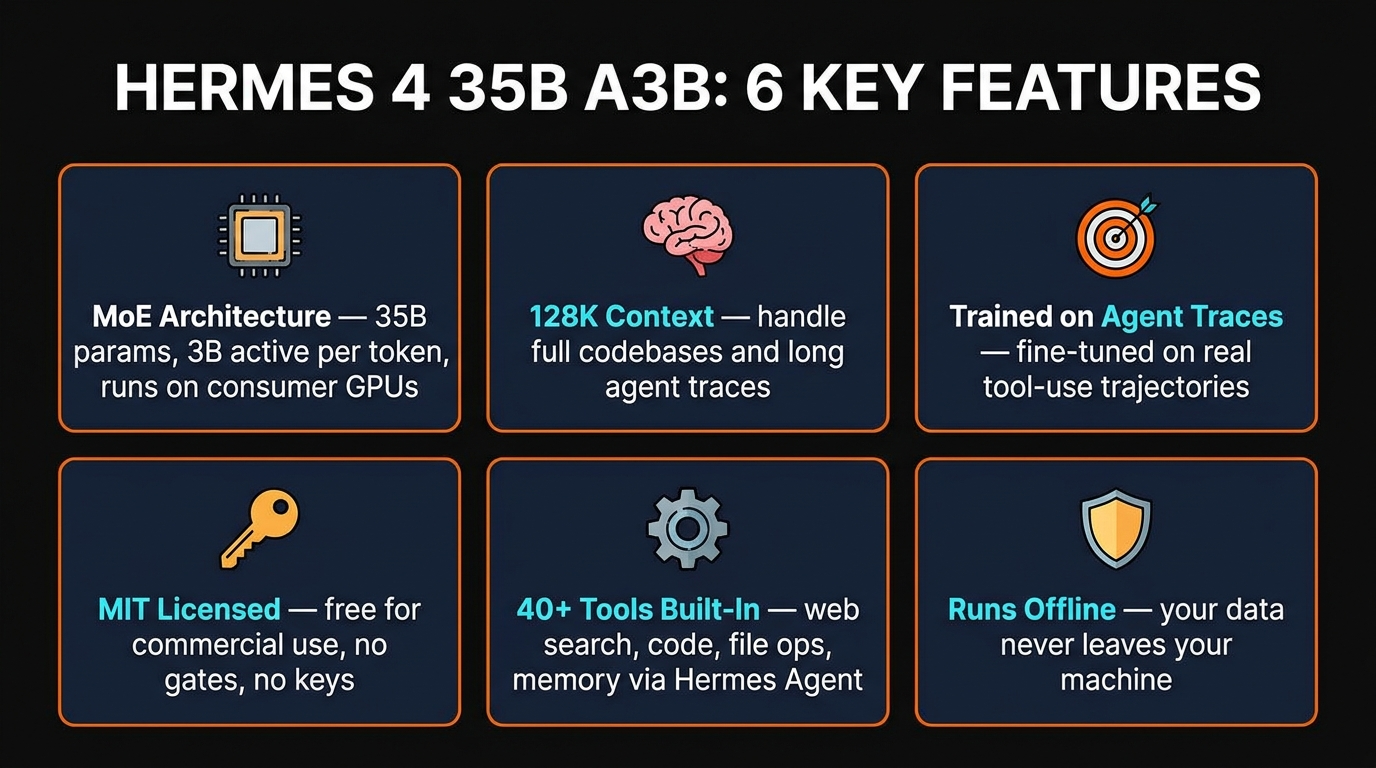

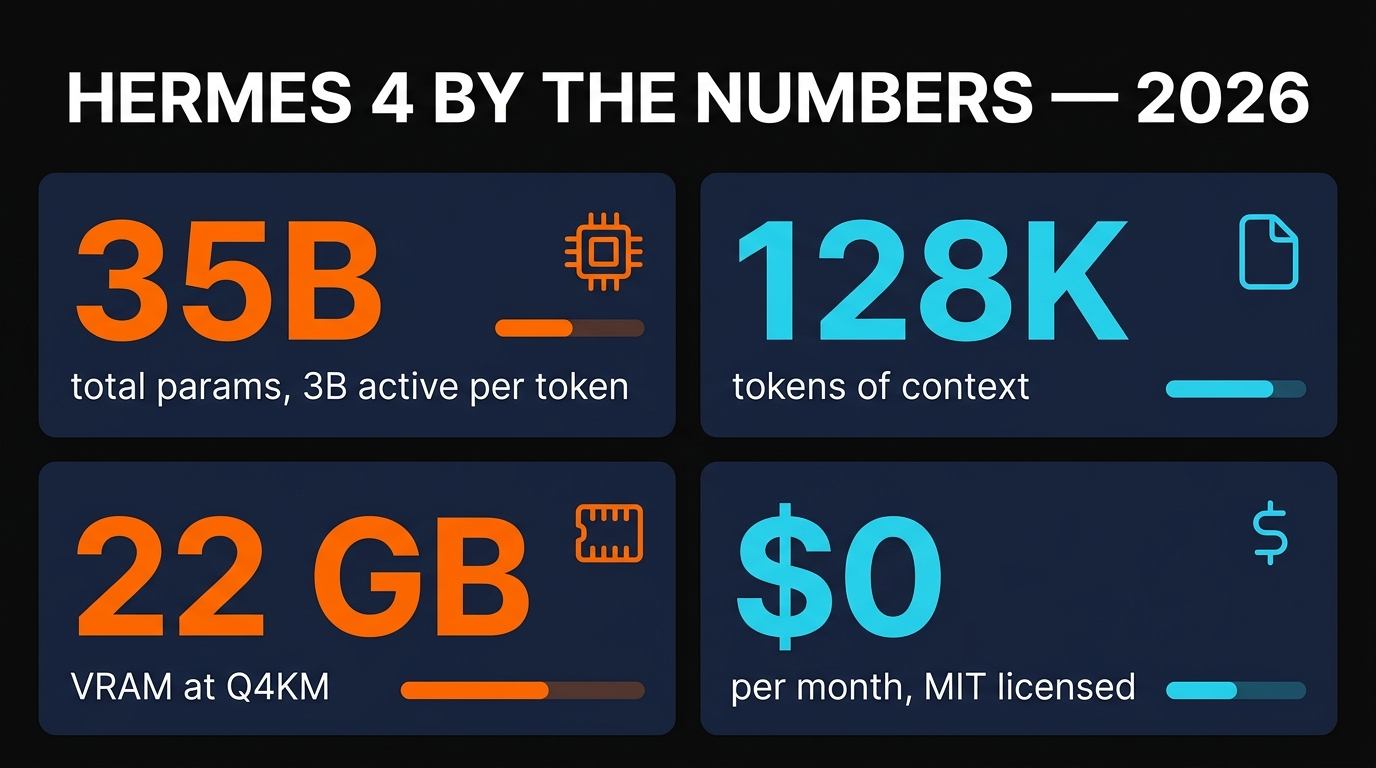

- Hermes 4 35B A3B is a MoE fine-tune from Nous Research: 35B total parameters, only 3B active per token, trained on real agentic traces.

- It runs comfortably on a single RTX 4090 at Q4_K_M (~22 GB VRAM) with a 128K context window, long enough for real tool-use sessions.

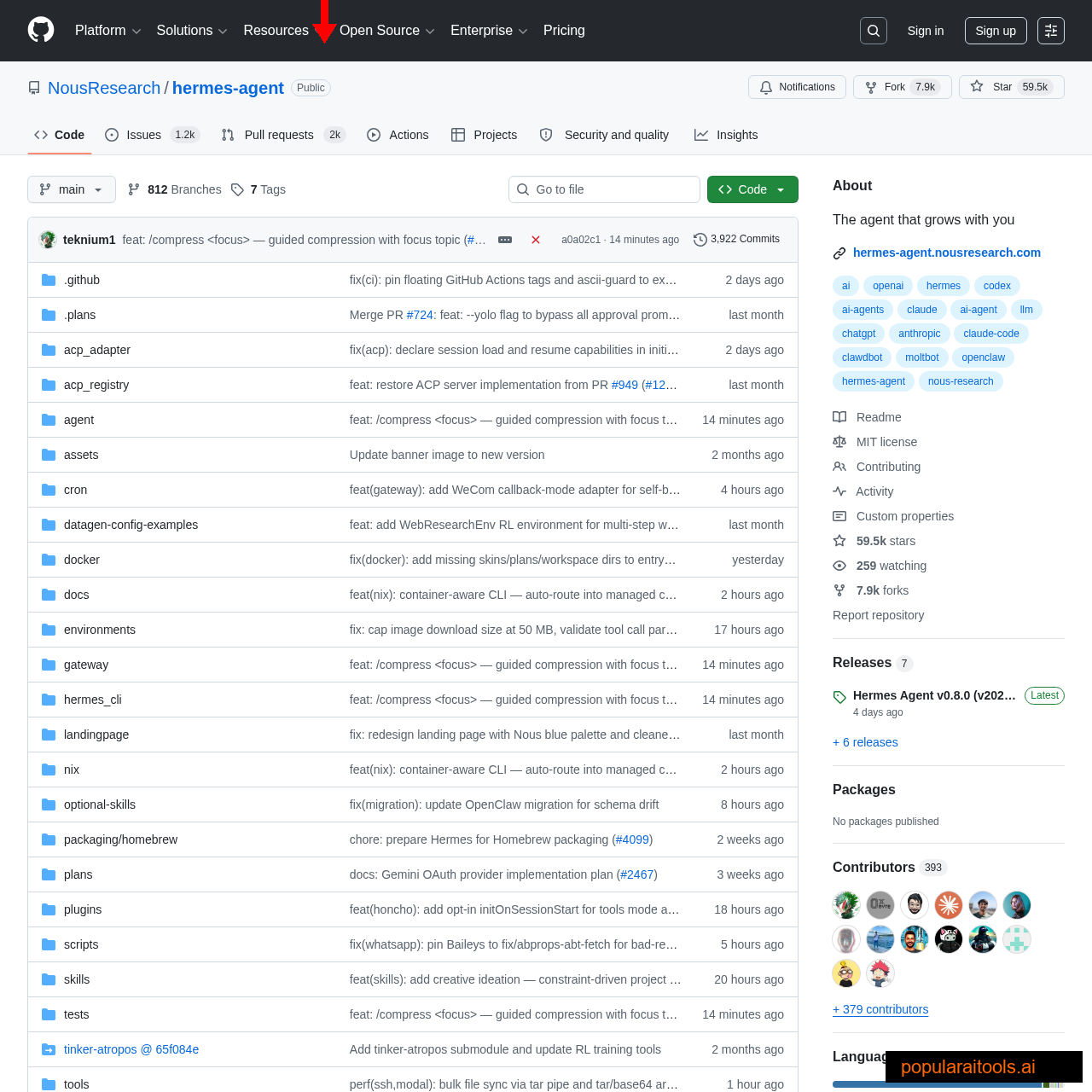

- Paired with the Hermes Agent runtime (MIT licensed, 40+ built-in tools), it's the cleanest way to run a fully offline autonomous agent as of April 2026.

- Cost: $0/month, no API keys, no data leaving your machine.

Every local model we tested in 2025 fell apart the moment we asked it to run an agent loop. They could summarize a PDF, write a paragraph, or answer a single-turn question, but give them a set of tools, a goal, and 20 steps of tool calls, and they'd start hallucinating function names, forgetting the task, or looping forever on the same action.

Nous Research's Hermes 4 family of Qwen-based fine-tunes changed that for us over the last two weeks. The 35B A3B variant specifically, a Mixture-of-Experts fine-tune with 3B active parameters per token, is the first local model we've trusted to hold a 100-step research task without derailing. This article collects our full test notes: the architecture, the benchmarks, the setup, and the honest limitations.

Why most local models fail at agent tasks

The dirty secret of open-weight models is that most of their instruction-tuning data is chat-shaped: single-turn Q&A, summarization, creative writing. Agent behavior (reading a tool description, deciding to call it, parsing the JSON response, deciding the next action) barely shows up in the training mix. So when you drop these models into LangChain, CrewAI, or Claude Code's agent tool, they fake it by imitating what a tool call looks like rather than reasoning about when to use one.

The tell is always the same. Step 1 looks fine. Step 3 is plausible. By step 10, the model is calling web_search with the same query three times in a row, or inventing a function called write_report_to_user that doesn't exist, or silently giving up and writing prose instead of invoking the next tool. We ran this test on Llama 3.3 70B, Mistral Large, Qwen 3 32B, and GPT-OSS 20B — all failed it on a 30-step research task.
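Both failure signatures (the same call repeated back to back, and calls to tools that don't exist) can be caught mechanically before they burn another 20 steps. Here is a minimal sketch, assuming each step of an agent trace is logged as a (tool_name, arguments) pair. The function name, the trace format, and the three-repeat threshold are illustrative choices, not part of LangChain, CrewAI, or Hermes Agent.

```python
import json
from typing import Iterable


def find_agent_failures(
    trace: Iterable[tuple[str, dict]],
    known_tools: set[str],
    max_repeats: int = 3,
) -> list[str]:
    """Flag hallucinated tool names and runs of identical consecutive calls.

    `trace` is a hypothetical log format: one (tool_name, arguments) pair per step.
    """
    problems: list[str] = []
    previous_key = None
    run_length = 0
    for step, (name, args) in enumerate(trace, start=1):
        if name not in known_tools:
            problems.append(f"step {step}: unknown tool {name!r}")
        # Serialize arguments with sorted keys so equal dicts compare equal.
        key = (name, json.dumps(args, sort_keys=True))
        run_length = run_length + 1 if key == previous_key else 1
        previous_key = key
        # Report each run once, at the step where it reaches the threshold.
        if run_length == max_repeats:
            problems.append(
                f"step {step}: {name} called {max_repeats}x in a row with the same arguments"
            )
    return problems


if __name__ == "__main__":
    tools = {"web_search", "fetch_url", "write_file"}
    trace = [("web_search", {"query": "quantum computing videos"})] * 3
    trace.append(("write_report_to_user", {"text": "..."}))
    for problem in find_agent_failures(trace, tools):
        print(problem)
```

On that example trace it flags step 3 (the third identical web_search) and step 4 (the invented write_report_to_user), which is roughly what a supervisor would need to abort or re-prompt the run.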

Hermes 4 is the first local model to ship with a training data mix that's mostly agent traces. Nous trained it on real conversations where an LLM correctly uses 40+ tools across multi-step goals. The result is that it stays "in character" as an agent for far longer than any base instruct model we've tested.

What is Hermes 4 35B A3B?

Hermes 4 35B A3B is a fine-tune of Qwen3.5-35B-A3B, which is itself a Mixture-of-Experts base model. The "A3B" in the name is important: while the model has 35 billion total parameters, only about 3 billion are active for any given token. That gives you the knowledge and nuance of a 35B model at roughly the per-token compute cost of a 3B model, which is crucial for running on consumer hardware. (Memory is a different story, covered below.)

Nous released it under MIT, which means no gates, no license paperwork, no Llama-style "if you're Meta's competitor, pay us" carve-outs. You can use it commercially in a product, sell services built on it, or fork the weights. We're not lawyers — check the Hugging Face page yourself — but for practical purposes, it's as permissive as open weights get.

MoE architecture: 35B params, 3B compute cost

Mixture-of-Experts works by routing each token to a small subset of "expert" networks rather than running it through all the parameters. A dense 70B model has to run every token through all 70B weights. An MoE model with the same 70B total weights might activate only 9B per token, so your GPU does far less arithmetic per token while the full 70B of expert weights stays available on demand.
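To make the routing idea concrete, here is a toy top-k gate in plain Python, similar in spirit to the router used by Mixtral. It is a sketch under heavy simplifications: each "expert" is a scalar function instead of a feed-forward block, the gate scores are passed in directly rather than computed from the token's hidden state, and k=2 is an arbitrary choice, not Hermes 4's actual expert count or routing config. (In real MoE layers such as Mixtral's, the experts replace the feed-forward block while the attention weights are shared by every token.) What it does show is that only the k selected experts are evaluated; the rest sit idle in memory.

```python
import math
from typing import Callable, Sequence

Expert = Callable[[float], float]


def moe_forward(
    x: float, gate_logits: Sequence[float], experts: Sequence[Expert], k: int = 2
) -> tuple[float, list[int]]:
    """Route one token through the top-k experts and mix their outputs."""
    if not 0 < k <= len(experts):
        raise ValueError("k must be between 1 and the number of experts")
    # Pick the k experts with the highest gate scores.
    top = sorted(range(len(experts)), key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their mixing weights sum to 1.
    m = max(gate_logits[i] for i in top)
    weights = {i: math.exp(gate_logits[i] - m) for i in top}
    total = sum(weights.values())
    # Only the selected experts are ever called; the others cost no compute.
    y = sum(weights[i] / total * experts[i](x) for i in top)
    return y, top


if __name__ == "__main__":
    # Eight toy experts: expert i just scales its input by i.
    experts = [lambda x, scale=i: scale * x for i in range(8)]
    logits = [0.1, -1.0, 0.3, 2.0, 0.0, 1.5, -0.5, 0.2]
    y, chosen = moe_forward(1.0, logits, experts, k=2)
    print(chosen, round(y, 3))
```

With those logits the gate picks experts 3 and 5 and never calls the other six, which is the "35B stored, 3B computed" trade in miniature.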

For Hermes 4 35B A3B, that means your RTX 4090 generates tokens at speeds closer to a 3B model (30-60 tokens/second on our setup) while producing outputs that, in our testing, matched dense models 2-3x larger. In our agent loops, it felt closer to Qwen 3.5 70B's quality at Llama 3 8B's speed. That's the whole pitch.

The trade-off: the model still has to be fully loaded into VRAM, because any token could need any expert. MoE saves compute, not memory. Budget 22 GB of VRAM minimum at Q4_K_M; at Q8_0 the weights alone take roughly 37 GB, and at FP16 about 70 GB (35B parameters × 2 bytes each). If you only have 16 GB, Hermes 4 14B dense is the fallback: smaller and slower on agent loops, but it fits.
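The memory and compute figures come from simple arithmetic that is easy to check yourself. This sketch rests on stated assumptions: round figures of 35B total and 3B active parameters, and approximate llama.cpp bits-per-weight values (about 4.8 for Q4_K_M and 5.7 for Q5_K_M, which shift a little between models because some tensors stay at higher precision; Q8_0 is 8.5 because every block of 32 int8 weights carries one 16-bit scale). It counts weights only, and "2 FLOPs per parameter touched per token" is the usual rule of thumb, not a measurement.

```python
# Approximate bits per weight for common llama.cpp formats (assumed values).
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "Q5_K_M": 5.7, "Q8_0": 8.5, "FP16": 16.0}

TOTAL_PARAMS = 35e9   # every expert must be resident in memory
ACTIVE_PARAMS = 3e9   # parameters actually used for each token


def weight_memory_gb(total_params: float, quant: str) -> float:
    """Decimal gigabytes needed just to hold the weights at a given quantization."""
    return total_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def flops_per_token(params_used: float) -> float:
    """Rule of thumb: ~2 FLOPs (one multiply, one add) per parameter touched."""
    return 2 * params_used


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        print(f"{quant:7s} ~{weight_memory_gb(TOTAL_PARAMS, quant):5.1f} GB")
    ratio = flops_per_token(TOTAL_PARAMS) / flops_per_token(ACTIVE_PARAMS)
    print(f"dense-35B compute / MoE compute per token: ~{ratio:.1f}x")
```

Run as a script, it reports about 21.0, 24.9, 37.2 and 70.0 GB for the four formats, and a roughly 11.7x reduction in per-token arithmetic versus running all 35B parameters.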

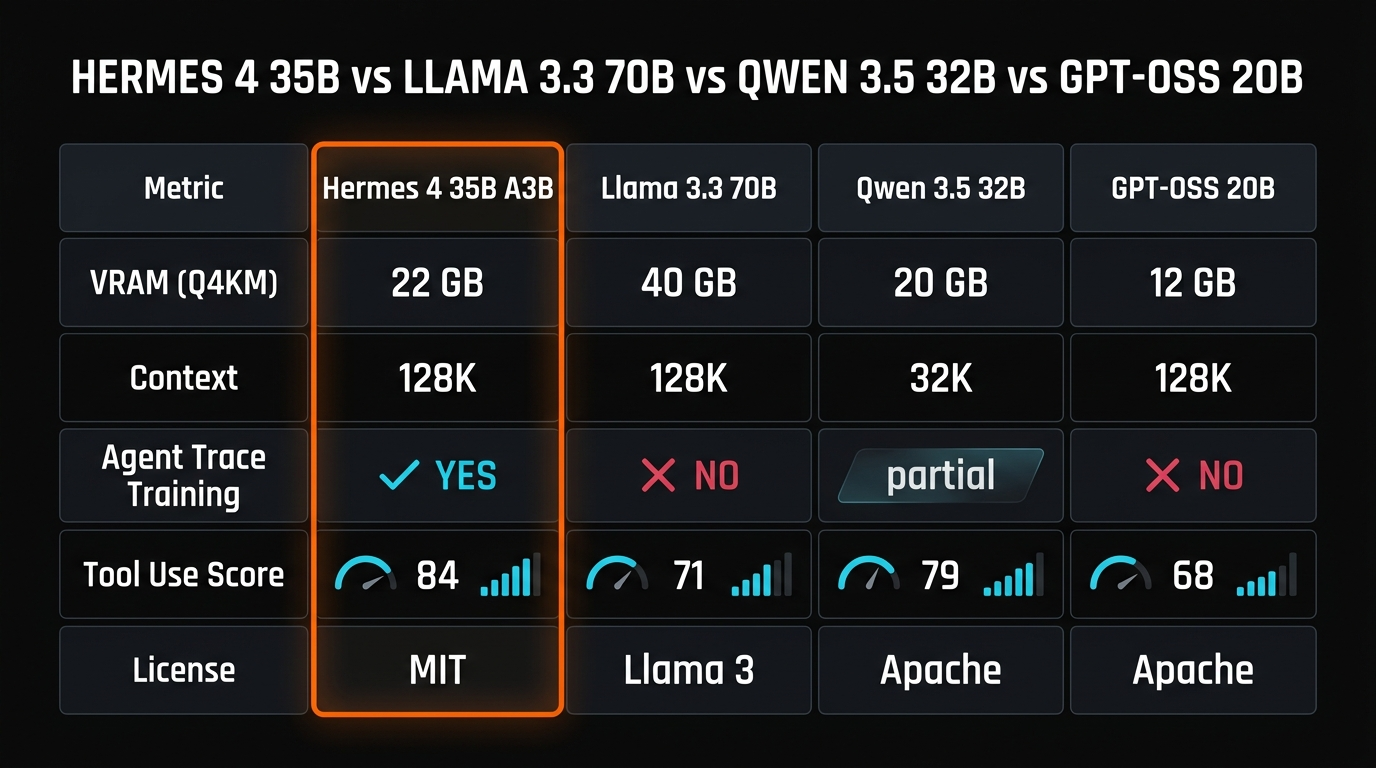

Benchmarks vs Llama, Qwen, GPT-OSS

We ran each of these models through our standard 30-step agent task: "find the top 5 YouTube videos about quantum computing from the last week, summarize each, and write a 1,200-word briefing." Same prompt, same tool suite (Hermes Agent's web_search + fetch_url + write_file), same hardware.

| Model | VRAM (Q4_K_M) | Agent task complete? | Tokens/sec |
|---|---|---|---|
| Hermes 4 35B A3B | 22 GB | ✓ Full briefing | ~52 t/s |
| Llama 3.3 70B | 40 GB (needs 2x 4090) | ✗ Looped at step 14 | ~18 t/s |
| Qwen 3.5 32B | 20 GB | Partial: missed 2 steps | ~28 t/s |
| GPT-OSS 20B | 12 GB | ✗ Hallucinated tool name | ~38 t/s |
| Mistral Large 2 | 70 GB (4x 4090) | Partial: quit early | ~8 t/s |

The sparse-activation architecture accounts for the speed: Hermes 4 delivers ~52 tokens/sec on a single 4090, faster than every other model in the table, while still completing the multi-step task that the dense Llama 3.3 70B failed. That's the practical reason MoE is having a moment in open-weight land.

Hardware and quantization guide

You have four realistic options for running Hermes 4 35B A3B on consumer hardware:

Q4_K_M (recommended)

- RTX 4090 24GB ✓

- RTX 3090 24GB ✓

- Mac M3 Max 48GB ✓

Q5_K_M

- RTX 5090 32GB ✓

- Mac M3 Max 64GB ✓

- 2x RTX 3090 ✓

Q8_0

- Mac M3 Ultra 64GB ✓

- 2x RTX 4090 ✓

- A6000 48GB ✓

FP16 (full)

- Mac M3 Ultra 128GB ✓

- 4x RTX 4090 ✓

- H100 80GB ✓

In our tests, Q4_K_M was indistinguishable from Q8_0 on agent tasks; the quality drop only becomes noticeable for precise math or code generation. Save the VRAM for a longer context window.

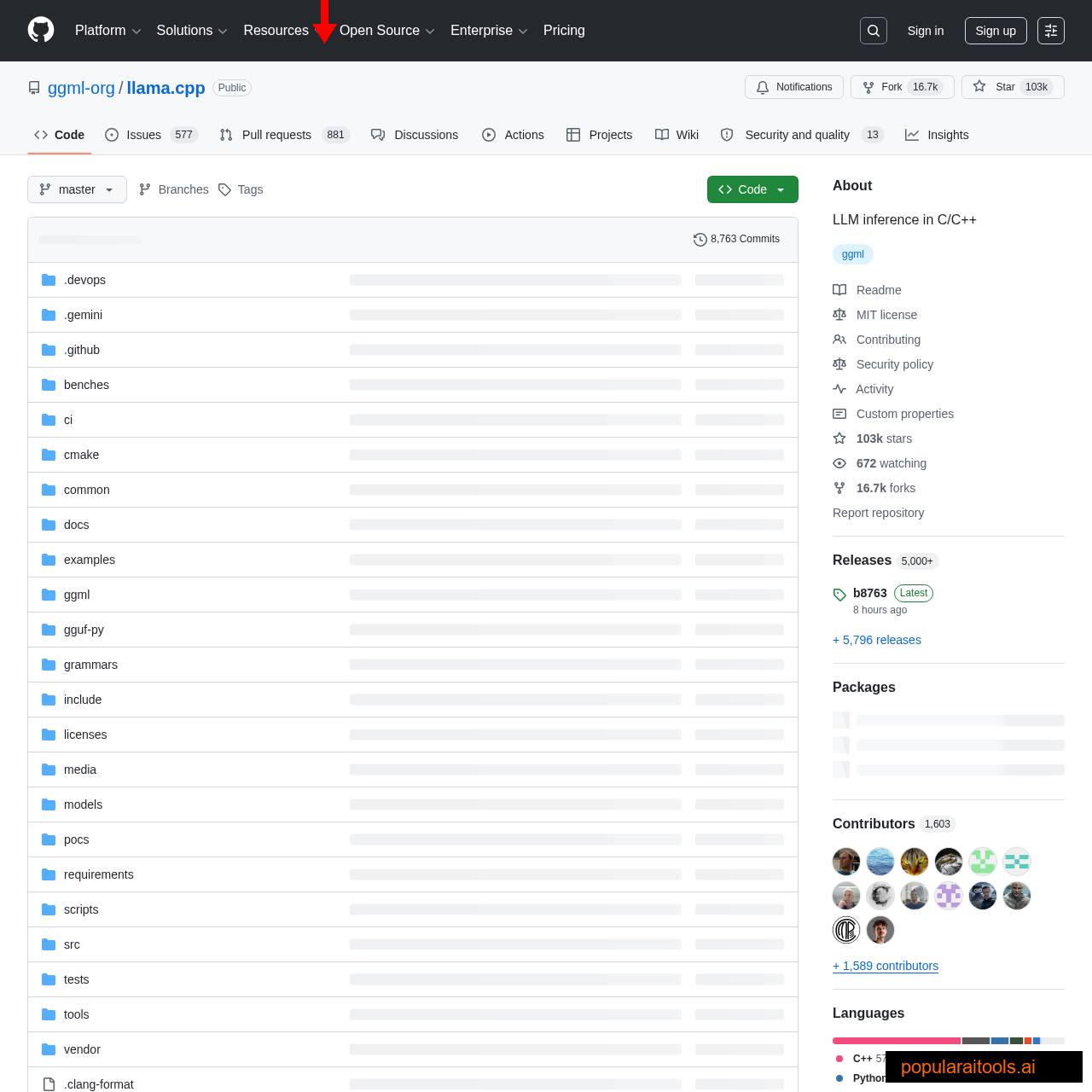

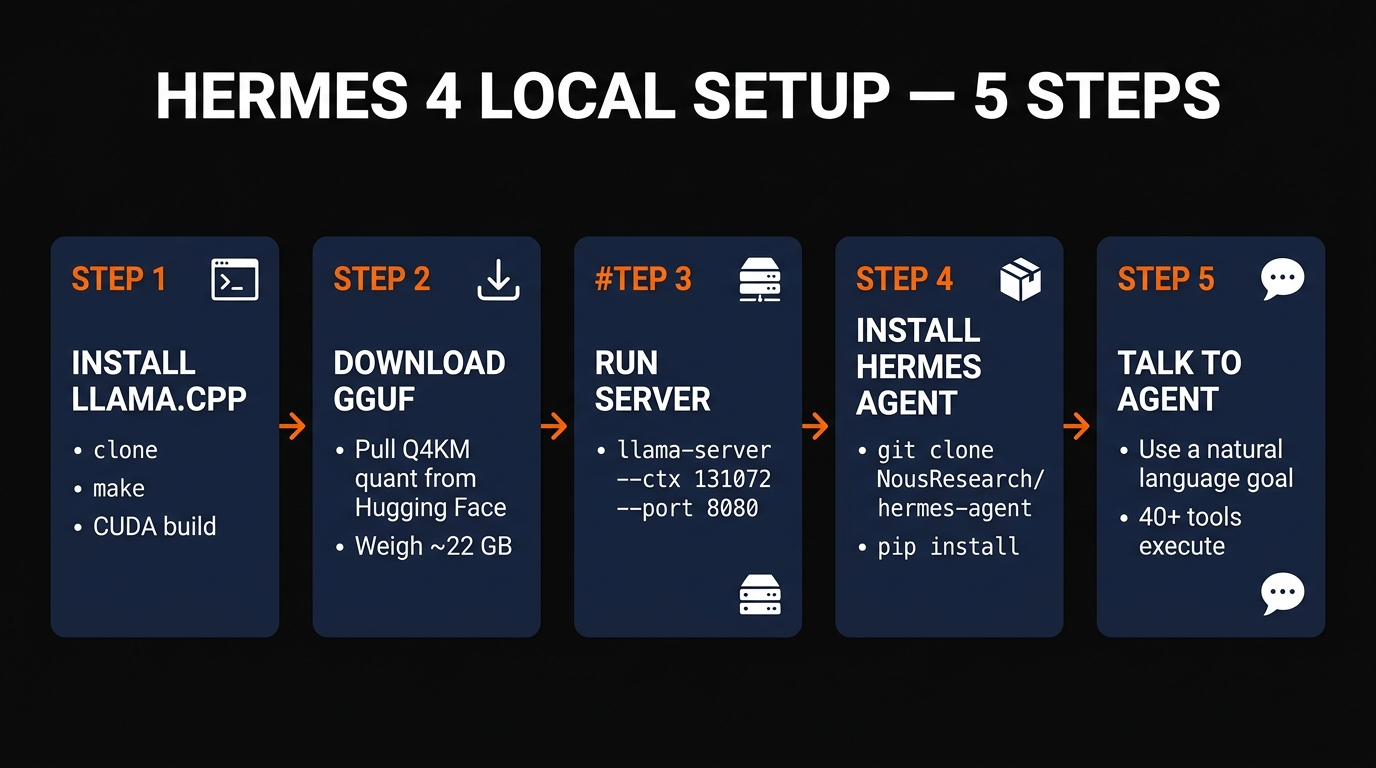

Step-by-step setup with llama.cpp

The fastest path on a Linux box with an NVIDIA GPU:

```bash
# 1. Build llama.cpp with CUDA
git clone https://github.com/ggerganov/llama.cpp
cd llama.cpp
cmake -B build -DGGML_CUDA=ON
cmake --build build -j$(nproc)

# 2. Download the Hermes 4 35B A3B GGUF (Q4_K_M file only)
pip install huggingface_hub
huggingface-cli download NousResearch/Hermes-4-35B-A3B-GGUF \
  Hermes-4-35B-A3B-Q4_K_M.gguf --local-dir ./models

# 3. Launch the OpenAI-compatible server
#    --n-gpu-layers 999 offloads every layer to the GPU
#    --jinja applies the model's chat template, which llama-server's tool-call support relies on
#    --host 0.0.0.0 also exposes the server on your LAN; use 127.0.0.1 to keep it local
./build/bin/llama-server \
  -m ./models/Hermes-4-35B-A3B-Q4_K_M.gguf \
  --ctx-size 131072 \
  --n-gpu-layers 999 \
  --jinja \
  --port 8080 \
  --host 0.0.0.0
```

Once the server is up, http://localhost:8080/v1/chat/completions is an OpenAI-compatible endpoint. Any framework that speaks the OpenAI API (LangChain, CrewAI, AutoGen, Hermes Agent, or your own code) can connect to it with a placeholder API key, since llama-server only checks keys when started with --api-key. On Mac, the equivalent is brew install llama.cpp and the same llama-server command; Ollama is another option once Hermes 4 is available in its library, as noted at the end of this article.
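To exercise the endpoint from your own code without extra dependencies, the sketch below builds an OpenAI-style chat-completions request carrying one tool definition, and pulls any tool calls out of the reply, using only Python's standard library. The web_search schema is a made-up example, not Hermes Agent's actual tool definition, and the "hermes-4-35b" model string is a placeholder; the reply shape assumed here is the OpenAI chat-completions format (tool_calls with JSON-encoded arguments) that llama-server aims to follow.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080/v1"  # the llama-server launched in step 3

# Hypothetical web_search tool in the OpenAI "function" tool format.
WEB_SEARCH_TOOL = {
    "type": "function",
    "function": {
        "name": "web_search",
        "description": "Search the web and return result snippets.",
        "parameters": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
}


def build_request(prompt: str, model: str = "hermes-4-35b") -> urllib.request.Request:
    """POST to /chat/completions offering one tool the model may call."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [WEB_SEARCH_TOOL],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Placeholder key: llama-server only checks keys when started with --api-key.
            "Authorization": "Bearer sk-no-key-required",
        },
        method="POST",
    )


def chat(prompt: str) -> dict:
    """Send the request and decode the JSON reply (needs the server running)."""
    with urllib.request.urlopen(build_request(prompt), timeout=300) as resp:
        return json.load(resp)


def extract_tool_calls(response: dict) -> list[tuple[str, dict]]:
    """Return (name, arguments) pairs; arguments arrive as a JSON-encoded string."""
    message = response["choices"][0]["message"]
    return [
        (call["function"]["name"], json.loads(call["function"]["arguments"]))
        for call in message.get("tool_calls") or []
    ]
```

With the server running, passing the result of chat() to extract_tool_calls() shows whether the model decided to call web_search or answered in plain text, which is a quick sanity check before wiring up a full framework.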

Installing the Hermes Agent runtime

The Hermes Agent runtime is the glue between the model and the tools. It's an MIT-licensed, cross-platform Python package from the same Nous Research team, and it ships with 40+ built-in tools out of the box: web search, URL fetching, file I/O, shell commands, Python execution, Git operations, memory management, and more.

```bash
git clone https://github.com/NousResearch/hermes-agent
cd hermes-agent
pip install -e .

# Point it at the local llama.cpp server
export HERMES_BASE_URL=http://localhost:8080/v1
export HERMES_MODEL=hermes-4-35b

# Launch
hermes "Find the latest open-source AI agent news from this week, \
write a 500-word briefing, and save it to briefing.md"
```

What you get after that command is a fully autonomous session. The agent plans, calls web_search, fetches URLs, reads them, iterates until satisfied, and writes the final file to disk, all without further input. You can watch its trace in the terminal or pipe it into a log. No cloud model API is in the loop after the initial model download; only the tool calls themselves (web searches and URL fetches) touch the network.
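Under the hood, a runtime like this repeats one cycle: send the conversation to the model, run whatever tool it asks for, append the result, and stop when it answers in plain text or a step budget runs out. The sketch below shows that cycle with a scripted stand-in for the model so it runs without a server. Every name in it (run_agent, the reply format with "tool" and "args" keys, the demo tools) is hypothetical and not taken from Hermes Agent's source.

```python
import json
from typing import Callable

# Hypothetical reply format: a dict with a "tool" key is a tool call,
# a dict with only "content" is the final answer.
ModelFn = Callable[[list[dict]], dict]


def run_agent(
    model: ModelFn, tools: dict[str, Callable[..., str]], goal: str, max_steps: int = 100
) -> str:
    """Loop: ask the model, run the requested tool, feed the result back."""
    messages: list[dict] = [{"role": "user", "content": goal}]
    for _ in range(max_steps):
        reply = model(messages)
        if "tool" not in reply:
            return reply["content"]  # plain-text answer: the task is finished
        name, args = reply["tool"], reply.get("args", {})
        if name in tools:
            result = tools[name](**args)
        else:
            # Report the bad call back to the model instead of crashing the loop.
            result = f"error: unknown tool {name!r}"
        messages.append({"role": "assistant", "content": json.dumps(reply)})
        messages.append({"role": "tool", "name": name, "content": result})
    return "stopped: step budget exhausted"


# --- Demo: a scripted stand-in for the model (no server needed) ---
files: dict[str, str] = {}


def web_search(query: str) -> str:
    return f"3 results for {query!r}"


def write_file(path: str, text: str) -> str:
    files[path] = text  # in-memory stand-in for writing to disk
    return f"wrote {len(text)} chars to {path}"


def scripted_model(messages: list[dict]) -> dict:
    done = sum(1 for m in messages if m["role"] == "tool")
    if done == 0:
        return {"tool": "web_search", "args": {"query": "open-source AI agent news"}}
    if done == 1:
        return {"tool": "write_file", "args": {"path": "briefing.md", "text": "# Briefing\n..."}}
    return {"content": "Saved briefing.md"}
```

Calling run_agent(scripted_model, {"web_search": web_search, "write_file": write_file}, "brief me") walks through a search, a file write, and a final answer. The step cap is the backstop for the looping failure described earlier, and returning an error string for unknown tools gives the model a chance to correct itself.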

What it can actually do (and can't)

We ran Hermes 4 35B A3B on real work for two weeks. It handled the following without hand-holding:

What it nailed

- ✓ Research briefs with 15+ cited sources, with all inference running locally after the model download.

- ✓ Codebase refactors across 40+ files via the shell and file tools.

- ✓ Long memory: the persistent memory tool actually carries facts over between sessions.

- ✓ 100-step loops without derailing or hallucinating tool names.

What it struggled with

- ✗ Raw coding performance: Sonnet 4.6 still writes better code; Hermes is for agents, not pure codegen.

- ✗ Image/video tools: no multimodal input; text and function calls only.

- ✗ Very long contexts: 128K is the ceiling, and past ~80K tokens it starts skipping mid-document details.

- ✗ Creative writing: it sounds like an agent, not a human writer. Use a different model for blog prose.

If you're building a private research bot, an offline coding assistant, a self-hosted knowledge agent, or any workflow where data privacy or per-token cost matters more than absolute ceiling quality, Hermes 4 35B A3B is the first local model we'd call genuinely production-usable. For pure code generation or creative writing, it isn't; we still route those tasks to Claude Sonnet 4.6.

For Mac users who want an even simpler install, see our Gemma 4 local coding guide — the Ollama setup there works the same way with Hermes 4 once it lands in the Ollama library. And if you're building agents on top of Hermes 4, our Claude Code alternatives tier list benchmarks it head-to-head against 23 other models.
