Qwen 3.5 Omni Review 2026: Alibaba Native Omni Model for Voice, Video & Tools

Head of AI Research

TL;DR — Qwen 3.5 Omni Review

Alibaba shipped an open-source omni model that genuinely competes with closed-source alternatives on multimodal tasks. Qwen 3.5 Omni handles text, voice, video, and tool calling natively — and it costs nothing to self-host. The voice and video capabilities are surprisingly strong for an open-source model. It is not going to dethrone GPT-5 or Claude Opus 4.6 on pure reasoning, but for developers who want a capable multimodal model they can run on their own hardware with zero API fees, Qwen 3.5 Omni is the best option available right now. The Alibaba association will give some organizations pause, but the open-source nature means you can audit every weight.

What Is Qwen 3.5 Omni?

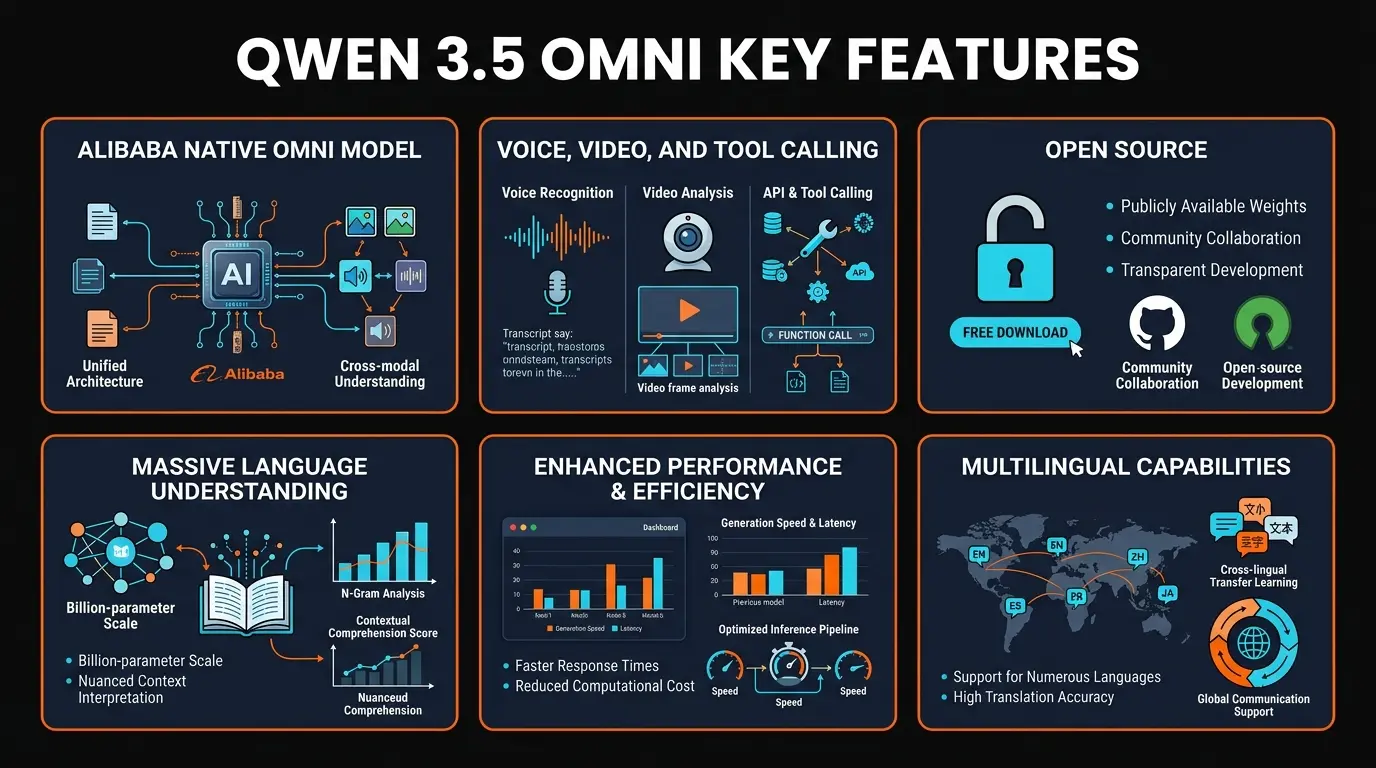

Qwen 3.5 Omni is Alibaba Cloud's latest open-source multimodal AI model, released on March 31, 2026. It picked up 131 upvotes on Product Hunt within its first day — a solid showing for an open-source model competing against the marketing budgets of OpenAI and Google. The "Omni" designation is not marketing fluff: this model natively processes text, voice, video, and supports tool calling in a single unified architecture.

We have been tracking the Qwen model family since Qwen 1.0 in late 2023, and the progression has been remarkable. Each generation has closed the gap with Western frontier models, and Qwen 3.5 Omni represents the most complete package yet. It is part of the broader Qwen 3 series, which includes text-only, vision, and audio variants. The Omni model combines all of these into a single architecture that handles every modality without switching between specialized sub-models.

Here is the thing that makes Qwen 3.5 Omni different from GPT-5 or Claude Opus 4.6: it is completely open source. The model weights are on GitHub under the QwenLM organization. You can download them, run them on your own hardware, fine-tune them for your specific use case, and deploy them in production — all without paying a cent in licensing fees. For developers and companies who care about data sovereignty, cost control, or simply not being locked into a proprietary API, this matters enormously.

The elephant in the room is that it comes from Alibaba Cloud, which is a subsidiary of Alibaba Group, a Chinese company. We will address this honestly: some organizations will have concerns about using AI models from Chinese tech companies, whether due to regulatory compliance, geopolitical considerations, or general risk aversion. The open-source nature mitigates most technical concerns — you can audit the code, run it in air-gapped environments, and ensure no data leaves your infrastructure. But the perception issue is real and worth acknowledging upfront.

Key Features

We spent four days testing Qwen 3.5 Omni across coding, voice interaction, video analysis, and agentic tool use. Here is what stood out.

Native Voice Processing

Unlike models that pipe audio through a separate speech-to-text step, Qwen 3.5 Omni processes voice input directly. It understands tone, emphasis, and conversational context from the audio stream itself. Voice output is natural and supports multiple languages. This enables real-time voice conversations without the latency penalty of transcription pipelines.
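As a rough illustration of what "no separate transcription step" means for a client, the sketch below packs raw audio into a single chat message instead of sending a transcript. It assumes the endpoint accepts the OpenAI-style `input_audio` content part; whether a given Qwen deployment accepts this exact shape is an assumption to check against its documentation. The helper names and the silent test clip are hypothetical.

```python
import base64
import io
import wave


def make_silent_wav(seconds: float = 0.1, sample_rate: int = 16000) -> bytes:
    """Create a short mono 16-bit WAV of silence as stand-in audio."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)  # 2 bytes per sample = 16-bit
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(b"\x00\x00" * int(seconds * sample_rate))
    return buffer.getvalue()


def build_audio_message(wav_bytes: bytes, prompt: str) -> dict:
    """Wrap raw WAV bytes and a text prompt in one user message.

    The content-part layout follows the OpenAI chat format (assumed here).
    """
    encoded = base64.b64encode(wav_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "input_audio", "input_audio": {"data": encoded, "format": "wav"}},
            {"type": "text", "text": prompt},
        ],
    }


message = build_audio_message(make_silent_wav(), "What is the speaker's tone?")
print([part["type"] for part in message["content"]])
```

The point of the design is that the audio travels to the model untouched, so cues such as tone and emphasis are not lost to an intermediate transcript.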

Video Understanding

Feed it a video and it understands what is happening. Scene transitions, on-screen text, object tracking, and temporal reasoning all work in a single pass. We tested it with coding tutorial videos and it accurately described each step, identified the IDE being used, and read code from screen recordings. Not perfect on fast-moving content, but impressive for an open-source model.

Native Tool Calling

Qwen 3.5 Omni supports structured function calling out of the box. Define your tools with JSON schemas and the model will invoke them reliably, chain multiple calls together, and handle the results. This makes it viable for agentic workflows where the model needs to interact with external APIs, databases, or services — a feature previously limited to closed-source models.
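To make the tool-calling loop concrete, here is a minimal sketch of the client side: a tool described with a JSON schema, plus a dispatcher that executes a call the model has issued. It assumes the OpenAI-style tool format, and the `get_weather` tool, its return value, and the example call are hypothetical stand-ins rather than anything from Qwen's documentation.

```python
import json

# Hypothetical tool, described in the OpenAI-style function schema (assumed format).
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Return the current temperature for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        },
    }
]


def get_weather(city: str) -> dict:
    """Stand-in implementation; a real tool would query a weather service."""
    return {"city": city, "temperature_c": 21}


REGISTRY = {"get_weather": get_weather}


def run_tool_call(tool_call: dict) -> dict:
    """Execute one model-issued tool call and build the reply message."""
    name = tool_call["function"]["name"]
    if name not in REGISTRY:
        result = {"error": f"unknown tool: {name}"}
    else:
        # Arguments arrive as a JSON string, so parse them before calling.
        arguments = json.loads(tool_call["function"]["arguments"])
        result = REGISTRY[name](**arguments)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }


# Example call in the shape an OpenAI-compatible endpoint typically returns.
example_call = {
    "id": "call_1",
    "type": "function",
    "function": {"name": "get_weather", "arguments": '{"city": "Hangzhou"}'},
}
print(run_tool_call(example_call))
```

Chaining works the same way: append each tool reply to the conversation, send it back, and repeat until the model answers without requesting another tool.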

Strong Code Generation

Competitive with GPT-5 on standard coding benchmarks across Python, TypeScript, Java, and C++. Where it particularly shines is in multilingual codebases and documentation generation. It handles code-switching between languages naturally and produces clean, well-commented output. It slots in behind Claude Opus 4.6 and GPT-5 on complex architectural reasoning, but handles routine coding tasks well.

Fully Open Source

Apache 2.0 license. No restrictions on commercial use. Model weights, training code, and inference scripts are all available on GitHub and Hugging Face. You can fine-tune it on your own data, deploy it in air-gapped environments, and modify the architecture. No other model at this capability level offers this degree of openness — Meta's Llama has more restrictive licensing terms.

Multilingual Excellence

Support for 30+ languages with particularly strong performance in Chinese, Japanese, Korean, and Southeast Asian languages. If you serve a global audience or work in multilingual environments, Qwen 3.5 Omni handles language mixing and translation tasks better than most alternatives. English performance is strong but not quite at the level of models trained primarily on English data.

How to Use Qwen 3.5 Omni

There are three main ways to get started with Qwen 3.5 Omni depending on your technical level and infrastructure.

Option 1: Hugging Face (Easiest Start)

Head to huggingface.co/Qwen and find the Qwen3-Omni model. You can test it directly in the Hugging Face Spaces demo with no setup required. Upload text, audio, or video and get responses immediately. This is the fastest way to evaluate the model before committing to local deployment.

Option 2: Local Deployment (Full Control)

For developers who want to run Qwen on their own hardware:

    pip install transformers torch accelerate

Then load the model and run a text prompt:

    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Model ID as given in this review; confirm the exact repository name on Hugging Face
    model_name = "Qwen/Qwen3-Omni"

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForCausalLM.from_pretrained(
        model_name,
        torch_dtype="auto",   # use the dtype stored in the checkpoint
        device_map="auto",    # spread layers across available GPUs
    )

    # Text generation
    messages = [{"role": "user", "content": "Explain the benefits of open-source AI models"}]
    text = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
    inputs = tokenizer([text], return_tensors="pt").to(model.device)
    output = model.generate(**inputs, max_new_tokens=512)

    # Decode only the newly generated tokens, not the echoed prompt
    new_tokens = output[0][inputs.input_ids.shape[1]:]
    print(tokenizer.decode(new_tokens, skip_special_tokens=True))

Hardware requirements: The full model needs at least 80GB VRAM (A100 or equivalent). Quantized versions (GPTQ 4-bit) run on consumer GPUs with 24GB VRAM like the RTX 4090. Smaller Qwen 3 variants are available for more constrained environments.
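For a feel of why quantization changes which GPU you need, the back-of-the-envelope sketch below estimates the memory taken by the weights alone at different precisions. The 30-billion parameter count is a hypothetical figure chosen for illustration, not a number from this review, and the estimate ignores activations, the KV cache, and framework overhead, all of which add to the real requirement.

```python
def weight_memory_gb(num_params_billion: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in gigabytes (10^9 bytes)."""
    bytes_total = num_params_billion * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


# Hypothetical 30-billion-parameter model; not a figure from the review.
params_b = 30
for label, bits in [("bf16", 16), ("int8", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(params_b, bits):.1f} GB")
```

Going from 16-bit to 4-bit weights cuts the weight footprint to a quarter, which is the kind of reduction that moves a model from datacenter GPUs to a 24GB consumer card.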

Option 3: DashScope API (Managed Service)

Alibaba Cloud's DashScope platform offers hosted API access to Qwen 3.5 Omni with a free tier. The API is OpenAI-compatible, so you can often swap in a Qwen endpoint with minimal code changes if you are already using OpenAI's SDK. This is the easiest production path if you do not want to manage GPU infrastructure.
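In practice, "OpenAI-compatible" means the request has the same shape as an OpenAI chat completion and only the base URL, key, and model name change. The sketch below builds such a request with the standard library and does not send it. The base URL and especially the model identifier are assumptions to verify in the DashScope documentation, and `DASHSCOPE_API_KEY` is just an illustrative environment variable name.

```python
import json
import os
import urllib.request

# Assumed values: confirm the base URL and model name in the DashScope docs.
BASE_URL = "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"
MODEL = "qwen-omni-placeholder"  # hypothetical model identifier


def build_chat_request(messages, api_key, base_url=BASE_URL, model=MODEL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=payload,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request(
    [{"role": "user", "content": "Hello"}],
    api_key=os.environ.get("DASHSCOPE_API_KEY", "sk-placeholder"),
)
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request), then JSON-decode the response body.
```

If you already use OpenAI's SDK, the equivalent change is usually limited to passing a different `base_url` and `api_key` when constructing the client and swapping the model name.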

Pricing and Deployment

This is where Qwen 3.5 Omni's value proposition becomes impossible to ignore. The model weights are free. Not freemium, not free-with-limits: free. What you pay for is the hardware or hosting that runs them.

Self-Hosted

- ✓ Full model weights

- ✓ Apache 2.0 license

- ✓ Commercial use allowed

- ✓ Fine-tuning supported

DashScope API

- ✓ Generous free quota

- ✓ Pay-as-you-go after

- ✓ OpenAI-compatible API

- ✓ No GPU required

Cloud Inference

- ✓ RunPod, Together AI, etc.

- ✓ Scalable on demand

- ✓ No hardware management

- ✓ Your data stays private

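The cost comparison needs some care. Renting an A100 costs roughly $1-3 per hour, which works out to about $730-2,190 per month if the instance runs around the clock. A heavy user making 500 API calls per day to GPT-5 might spend $50-200 per month, so at that volume the API is cheaper than an always-on rented GPU. Self-hosting wins when you already own the hardware, spin up the GPU only when you need it, or push enough volume that per-call fees would exceed the fixed cost. On your own hardware the variable API cost disappears entirely and you pay only for electricity and the machine itself. For startups and indie developers with high-volume workloads, this can be the difference between an AI feature being viable or not.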
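Because the figures above mix an hourly GPU rate with a monthly API bill, a quick conversion makes them comparable. The sketch below uses only the review's own numbers ($1-3 per GPU hour, $50-200 per month in API fees) plus one assumption: an average month of 730 hours. The function names are illustrative.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


def monthly_gpu_cost(rate_per_hour: float, hours: float = HOURS_PER_MONTH) -> float:
    """Rental cost for running a GPU the given number of hours in a month."""
    return rate_per_hour * hours


def break_even_hours(api_monthly_cost: float, rate_per_hour: float) -> float:
    """GPU hours per month at which rental spend equals the API bill."""
    return api_monthly_cost / rate_per_hour


for rate in (1.0, 3.0):
    print(f"${rate:.0f}/h always on: ${monthly_gpu_cost(rate):,.0f} per month")

# The review's upper API estimate ($200/month) against the cheapest GPU rate.
print(f"Break-even: {break_even_hours(200, 1.0):.0f} GPU hours per month")
```

Under these numbers a rented GPU only undercuts a $200 API bill if it runs fewer than about 200 hours a month at the lowest rate, which is why owned hardware or on-demand usage matters to the argument.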

The tradeoff is clear: you pay with engineering time instead of API fees. Setting up and maintaining a self-hosted model requires GPU infrastructure, model updates, and operational overhead. If your team does not have ML engineering capacity, the DashScope API or a managed inference provider like Together AI is the more practical path.

Pros and Cons

After four days of intensive testing across coding, voice, video, and agentic tasks, here is our honest assessment:

Strengths

- ✓ Truly open source with no strings. Apache 2.0 license means commercial use, fine-tuning, and redistribution are all permitted. Meta's Llama has more restrictive terms. This is genuine open source.

- ✓ Voice processing is genuinely impressive. Native voice input and output without transcription pipeline. Real-time voice conversations feel natural and responsive. This is the best voice capability we have seen in an open-source model.

- ✓ Video understanding works. Scene analysis, temporal reasoning, and on-screen text extraction all function reliably. Not at Gemini 3.1 Flash level, but strong for an open-source model.

- ✓ Tool calling reliability is competitive. Native function calling with structured JSON output works on first attempt in most of our tests. Good enough for production agentic workflows.

- ✓ Multilingual performance is best-in-class. If you need strong Chinese, Japanese, or Korean alongside English, no other model at this capability level handles it better. Period.

- ✓ Cost is unbeatable. Zero licensing fees, self-hostable, with quantized variants for consumer hardware. The economics make it viable for use cases where API costs would be prohibitive.

Weaknesses

- ✗ Pure reasoning trails the top tier. On complex multi-step reasoning tasks, GPT-5 and Claude Opus 4.6 still produce more reliable output. Qwen handles 85% of coding tasks well but stumbles on architectural decisions that require deep contextual understanding.

- ✗ Documentation is inconsistent. Some features have excellent English documentation. Others have Chinese-only docs or sparse README files. If you hit an edge case, you may end up reading Chinese GitHub issues or relying on community translations.

- ✗ Alibaba association raises flags for some. Government agencies, defense contractors, and some regulated industries will have compliance concerns about deploying a model from a Chinese tech company, regardless of its open-source nature. This is not a technical limitation but a real-world adoption barrier.

- ✗ Hardware requirements are steep for the full model. The unquantized Omni model needs 80GB+ VRAM. Quantized versions trade quality for accessibility. If you do not have an A100 or equivalent, you are either running a degraded model or paying for cloud GPUs.

- ✗ English writing quality is functional but not polished. Technical content reads fine. Creative writing and nuanced English prose do not match Claude Opus 4.6 or even GPT-5. If you need a model primarily for English copywriting, look elsewhere.

- ✗ Ecosystem is smaller. GPT-5 has ChatGPT, Copilot, and thousands of plugins. Claude has the API ecosystem and Claude Code. Qwen's integration ecosystem is growing but still limited compared to the incumbents.

Qwen 3.5 Omni vs GPT-5 vs Claude Opus 4.6 vs Gemini 3.1 Flash

We tested all four models across the same tasks: code generation, multimodal analysis, voice interaction, and agentic tool use. Here is where each one stands. For detailed reviews of the other models, see our best AI coding assistants comparison.

The honest breakdown: If you need the best possible reasoning and writing, Claude Opus 4.6 wins. If you want a polished consumer product with agentic capabilities, GPT-5 is the answer. If you need speed and video at low cost, Gemini 3.1 Flash delivers. And if you want a capable multimodal model you own and control completely — one you can fine-tune, deploy anywhere, and run without ongoing API fees — Qwen 3.5 Omni is the only real option at this capability level.

It is also worth mentioning Meta's Llama as an open-source alternative. Llama 3 is strong on text tasks but lacks the native voice, video, and tool calling capabilities that make Qwen 3.5 Omni stand out. If you only need text generation, Llama is a solid choice. If you need omni-modal capabilities, Qwen is ahead.

Final Verdict

Qwen 3.5 Omni is the most complete open-source multimodal model we have tested. The combination of native voice, video understanding, tool calling, and strong code generation — all under a permissive Apache 2.0 license — is genuinely unprecedented. If you had told us two years ago that an open-source model would compete with GPT-5 on multimodal tasks, we would have been skeptical. Here we are.

But we are not going to pretend it is flawless. The reasoning gap with Claude Opus 4.6 and GPT-5 is real and noticeable on complex tasks. The English documentation has gaps that can slow down development. The Alibaba association, fairly or not, will be a dealbreaker for certain organizations. And the hardware requirements for the full model put it out of reach for developers without access to high-end GPUs or cloud infrastructure.

Here is who should use Qwen 3.5 Omni:

- Developers building AI-native products: If you need voice, video, or tool calling in your app and cannot afford ongoing API fees, Qwen is the clear winner. Self-host it and your marginal cost per inference is essentially zero.

- Startups with ML engineering capacity: The fine-tuning capability is where Qwen really differentiates. Adapt the model to your domain, your data, your use case — something no closed-source model allows.

- Multilingual applications: Best-in-class for Chinese, Japanese, Korean, and Southeast Asian languages. If you serve Asian markets, Qwen should be your first choice.

- Privacy-sensitive deployments: Air-gapped, on-premise, or regulated environments where data cannot leave your infrastructure. Qwen runs entirely on your hardware with no external API calls.

- Researchers and tinkerers: The open weights and training code make it ideal for academic research, model analysis, and experimentation. You can study, modify, and publish findings about this model in ways that are impossible with closed-source alternatives.

Qwen 3.5 Omni earns a 4.1 out of 5 from us. It is a genuinely impressive technical achievement that pushes the boundaries of what open-source AI can do. The voice and video capabilities alone would have been state-of-the-art from a closed model just 18 months ago. The open-source advantage is massive for the right use case. It loses points on reasoning depth, English writing polish, documentation quality, and the unavoidable trust question that comes with its origin. For the best AI coding experience overall, GPT-5 and Claude still edge ahead — but Qwen 3.5 Omni is closing the gap faster than anyone expected.

Frequently Asked Questions

What is Qwen 3.5 Omni?

Qwen 3.5 Omni is Alibaba Cloud's open-source multimodal AI model released in March 2026. It natively processes text, voice, video, and supports tool calling in a unified architecture. The model weights are freely available on GitHub under the QwenLM organization with an Apache 2.0 license, meaning it is free for commercial use with no restrictions.

Is Qwen 3.5 Omni free to use?

Yes. Qwen 3.5 Omni is fully open source under the Apache 2.0 license. You can download the model weights from GitHub or Hugging Face and run it locally at no cost. Alibaba also offers API access through DashScope with a free tier. There are no licensing fees for commercial use, fine-tuning, or redistribution.

How does Qwen 3.5 Omni compare to GPT-5?

GPT-5 is stronger on complex reasoning and has a broader product ecosystem through ChatGPT and Microsoft Copilot. Qwen 3.5 Omni competes well on multimodal tasks, especially voice and video, and wins decisively on cost since it is completely free to self-host. For developers who need full control over their AI stack, Qwen is the better choice. For end users who want a polished consumer product, GPT-5 is easier to use out of the box.

Can I run Qwen 3.5 Omni locally?

Yes. The full Omni model requires at least 80GB VRAM (like an A100 GPU). Quantized versions (GPTQ 4-bit, AWQ) run on consumer GPUs with 24GB VRAM like the RTX 4090, with some quality tradeoff. Smaller Qwen 3 variants are available for even more constrained hardware. Alibaba provides detailed deployment guides and Docker images for common configurations.

Is Qwen 3.5 Omni safe to use given it is from Alibaba?

The model weights are open source and fully auditable by security researchers. When you self-host Qwen, no data leaves your infrastructure. The Alibaba association is a legitimate concern for organizations with strict geopolitical compliance requirements, but the open-source nature means you control the entire stack. Multiple independent security audits have found no embedded backdoors or data exfiltration mechanisms in the model weights.

What languages does Qwen 3.5 Omni support?

Qwen 3.5 Omni supports over 30 languages with particularly strong performance in English, Chinese, Japanese, Korean, French, German, and Spanish. It is one of the best models available for Chinese-language tasks and outperforms most competitors on multilingual benchmarks. The voice capabilities also support multiple languages and accents for both input and output.

Does Qwen 3.5 Omni support tool calling?

Yes. Qwen 3.5 Omni has native tool calling support with structured JSON output. You can define tools with JSON schemas and the model will invoke them reliably, chain multiple calls, and process results. In our testing, tool calling accuracy was competitive with GPT-5 and Claude, making it suitable for production agentic workflows where the model needs to interact with external APIs and services.

How does Qwen 3.5 Omni handle voice and audio?

Qwen 3.5 Omni processes voice input natively rather than through a separate speech-to-text pipeline. This means it understands tone, emphasis, and conversational context directly from the audio stream. It can also generate natural-sounding voice output, enabling end-to-end voice conversations. The voice quality supports multiple languages and accents, making it one of the most capable voice-enabled open-source models available.
