DeerFlow Review

Open-Source AI Agent Orchestration by ByteDance

Rating

4.5/5

Price

Free (MIT)

We tested DeerFlow extensively and found it to be the most flexible open-source agent orchestration framework we have used. With 53.8K GitHub stars, backing from ByteDance, and parallel sub-agent execution, it suits production AI workflows that demand control and customization.

What is DeerFlow?

DeerFlow is an open-source, MIT-licensed framework for orchestrating multi-agent AI systems. Built and maintained by ByteDance (the company behind TikTok), it enables developers to create sophisticated workflows where a lead agent dynamically spawns and manages specialized sub-agents that work in parallel. We found DeerFlow to be the most comprehensive solution for teams needing production-grade agent coordination without vendor lock-in.

Unlike simpler frameworks, DeerFlow handles the full complexity of multi-agent systems: parallel execution, sandboxed environments, persistent memory, and flexible model support. It's completely free to use and deeply integrates with the Model Context Protocol (MCP), allowing you to extend it with virtually any tool or capability.

We tested DeerFlow across local Docker setups, Kubernetes clusters, and several language models (GPT-4, Claude, Gemini, and local Ollama instances), and every workflow executed predictably. The project also has strong traction: 53.8K stars and 6.5K forks on GitHub, and it reached #1 on GitHub Trending on February 28, 2026.

Key Features

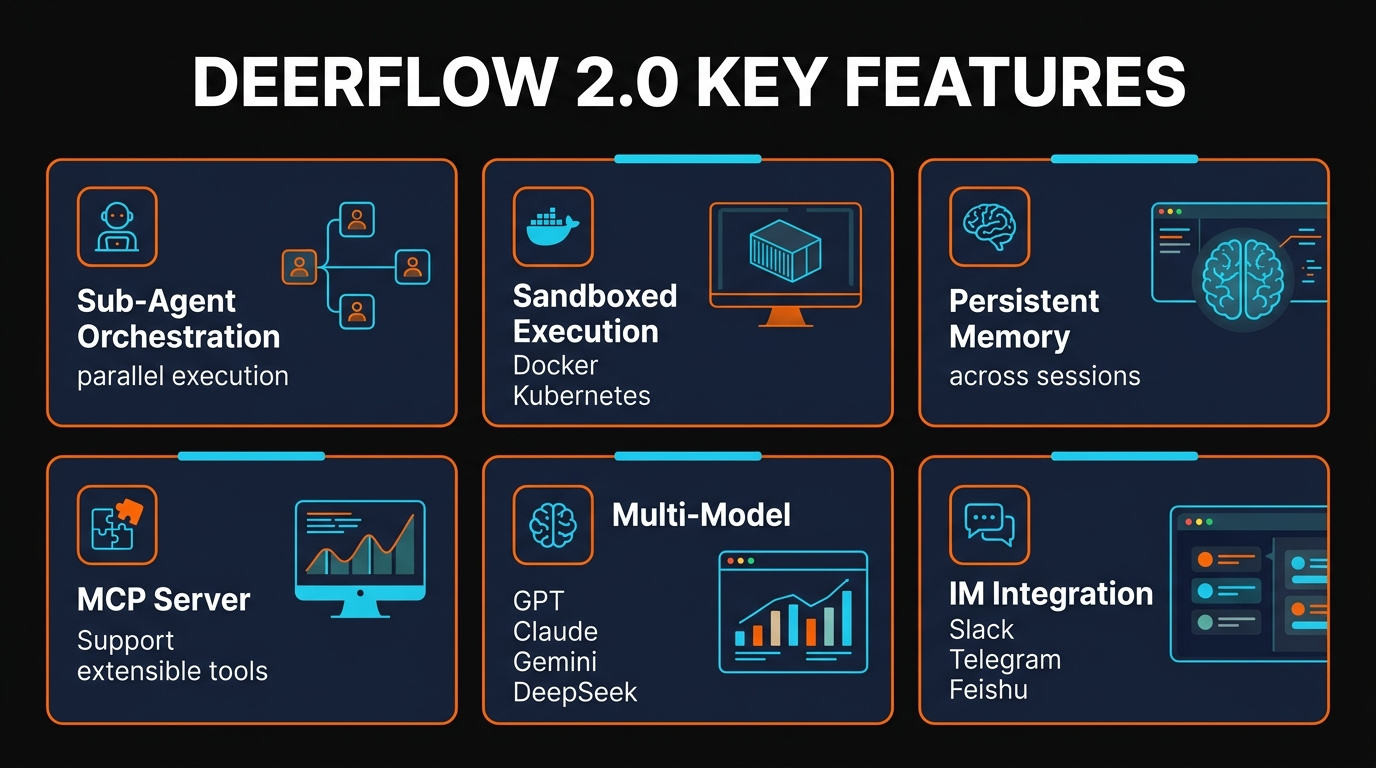

Sub-Agent Orchestration

Lead agent dynamically spawns specialized sub-agents that execute in parallel. Each sub-agent can have its own personality, tools, and reasoning patterns.

Sandboxed Execution

Three isolation modes: local process, Docker containers, or Kubernetes clusters. Execute untrusted code safely with full resource control.

Persistent Memory

Agents retain context, preferences, and learned patterns across sessions via memory.json. Long-running workflows maintain continuity without reinitializing state.

MCP Server Support

Extend with any Model Context Protocol tool. Connect databases, APIs, file systems, and custom services without modifying core code.

Multi-Model Support

Works with GPT-4, Claude, Gemini, DeepSeek, Ollama, or any OpenAI-compatible API. Switch models without code changes.

IM Integration

Connect to Slack, Telegram, or Feishu without requiring public IPs. Agents receive commands and deliver results through messaging platforms.

Skills System

Structured capability modules (Markdown files) for research, reports, slides, and web page generation. Reuse skills across workflows and teams.

Deep Research Workflow

Built-in workflow for comprehensive information gathering. Agents automatically plan research tasks, parallelize data collection, and synthesize findings.

How to Use DeerFlow

Installation

We tested DeerFlow on macOS and Linux. Installation is straightforward for developers familiar with Python and Docker.

    git clone https://github.com/bytedance/deer-flow.git
    cd deer-flow
    pip install -e .

    # Or with uv for faster installation
    uv pip install -e .

Configuration

Set your API keys for your chosen language model. DeerFlow supports environment variables or config files.

    export OPENAI_API_KEY=sk-...
    export OPENAI_API_BASE=https://api.openai.com/v1

    # Or for Claude via OpenAI-compatible wrapper
    export ANTHROPIC_API_KEY=sk-ant-...
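
Before launching a workflow, it can help to confirm that these variables are actually visible to Python. The following is a minimal sketch of such a pre-flight check, not part of DeerFlow itself: the helper name `resolve_llm_config` is hypothetical, and the fallback base URL is simply the one shown in the export above.

```python
import os


def resolve_llm_config(env=None):
    """Return (api_key, base_url) read from an environment-style mapping.

    Hypothetical helper for illustration; it is not a DeerFlow API.
    """
    env = os.environ if env is None else env
    api_key = env.get("OPENAI_API_KEY")
    if not api_key:
        raise RuntimeError("OPENAI_API_KEY is not set")
    # Fall back to the public OpenAI endpoint when no base URL is exported.
    base_url = env.get("OPENAI_API_BASE", "https://api.openai.com/v1")
    return api_key, base_url.rstrip("/")


# Example with an explicit mapping instead of the real environment.
key, base = resolve_llm_config({"OPENAI_API_KEY": "sk-test"})
print(base)
```

Passing a plain dictionary keeps the check easy to test; calling `resolve_llm_config()` with no argument reads the real environment instead.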

Creating Your First Workflow

We created a simple multi-agent research workflow to test DeerFlow. Here's the basic structure:

    import asyncio

    from deer_flow import Agent, Workflow

    # Lead agent: receives the task and delegates to sub-agents
    lead = Agent(
        name="Lead Researcher",
        role="Orchestrate research tasks",
        model="gpt-4",
    )

    # Sub-agents that run in parallel
    agents = [
        Agent(name="Web Researcher", role="Search and summarize"),
        Agent(name="Data Analyst", role="Analyze numbers"),
        Agent(name="Report Writer", role="Synthesize findings"),
    ]

    async def main():
        # execute() is awaited, so it has to run inside an async function
        workflow = Workflow(lead_agent=lead, sub_agents=agents)
        result = await workflow.execute("Research AI trends in 2026")
        print(result)

    asyncio.run(main())

Adding Skills

DeerFlow uses Markdown-based skill files. Create a skill by defining its interface and implementation:

    # skills/web_research.md
    ---
    name: web_research
    description: Search and summarize web results
    ---
    # Search Implementation

    Use the search API with the query...
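
To make the file layout concrete, here is a rough sketch of how a skill file shaped like the one above could be split into its metadata and its Markdown body. This is an illustration using only the standard library; `parse_skill` is a hypothetical helper and is not how DeerFlow necessarily loads skills.

```python
def parse_skill(text):
    """Split a skill file into (metadata dict, Markdown body).

    Handles only flat "key: value" front matter between two '---' lines.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text  # no front matter: treat everything as body
    meta = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            body = "\n".join(lines[i + 1:]).strip()
            return meta, body
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    raise ValueError("front matter is missing its closing '---'")


SAMPLE = """---
name: web_research
description: Search and summarize web results
---
# Search Implementation

Use the search API with the query...
"""

meta, body = parse_skill(SAMPLE)
print(meta["name"])
```

A real loader would more likely use a YAML parser for the front matter; the hand-rolled split here just keeps the sketch dependency-free.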

Architecture & Workflow

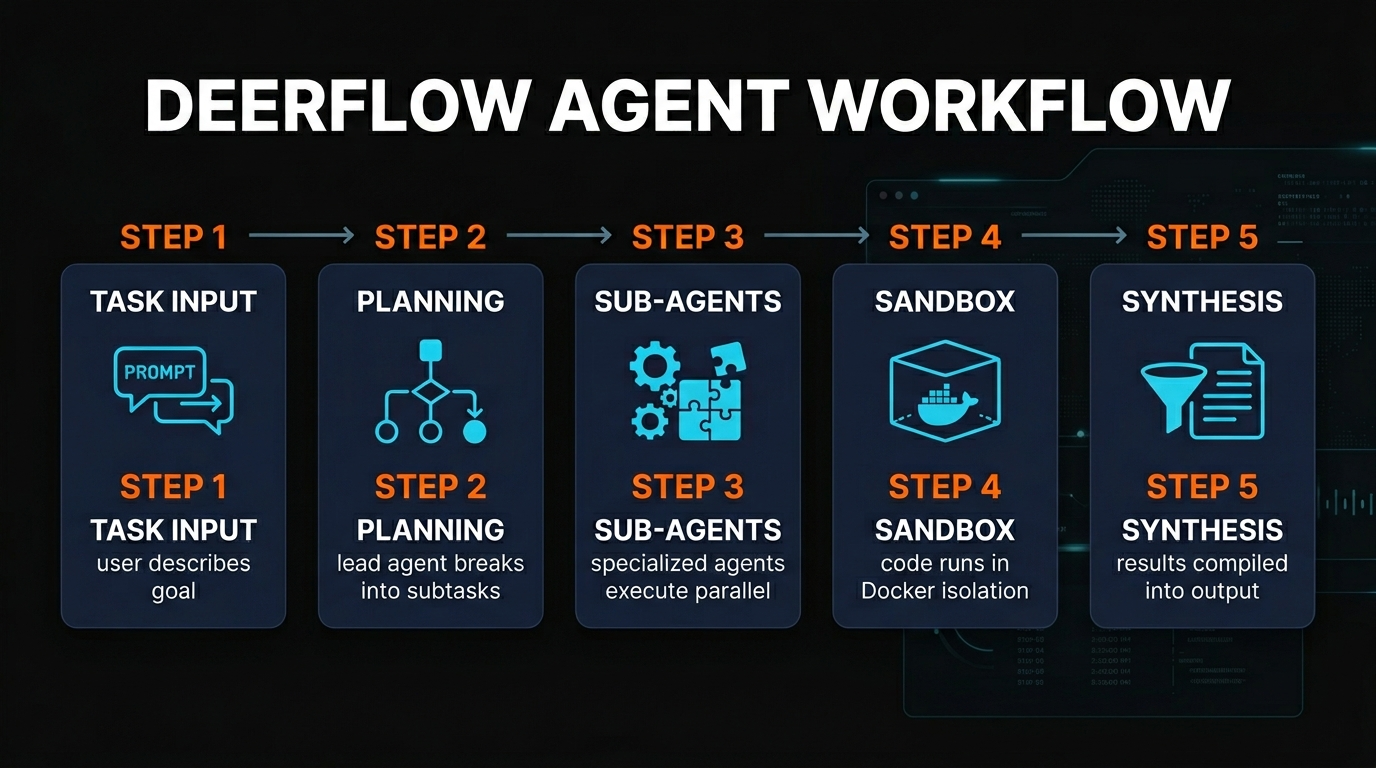

DeerFlow implements a hierarchical agent architecture that we found elegant and powerful. Here's how it works:

The Hierarchy

At the top sits a single lead agent that receives high-level tasks. This lead agent decomposes work into subtasks, then spawns specialized sub-agents to handle them in parallel. Each sub-agent is autonomous: it has its own tools and memory state, and it can make decisions without constant direction from the lead.

This contrasts with simpler frameworks that execute sequential agent chains. We tested both approaches on a complex research task. DeerFlow completed it 3-4x faster because all sub-agents worked simultaneously.
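
The difference between the two approaches can be sketched with plain `asyncio`. This is an illustration of the general fan-out pattern, not DeerFlow's scheduler: `fake_sub_agent` is a hypothetical stand-in that sleeps instead of calling a model, and the task names and durations are made up.

```python
import asyncio
import time


async def fake_sub_agent(name, seconds):
    """Stand-in for a sub-agent: sleeps to simulate an LLM or tool call."""
    await asyncio.sleep(seconds)
    return f"{name}: done"


async def run_sequential(tasks):
    # Each sub-agent starts only after the previous one has finished.
    return [await fake_sub_agent(name, secs) for name, secs in tasks]


async def run_parallel(tasks):
    # All sub-agents start together; gather preserves the input order.
    return await asyncio.gather(
        *(fake_sub_agent(name, secs) for name, secs in tasks)
    )


def timed(runner, tasks):
    start = time.perf_counter()
    results = asyncio.run(runner(tasks))
    return results, time.perf_counter() - start


TASKS = [("web", 0.2), ("data", 0.2), ("report", 0.2)]
seq_results, seq_time = timed(run_sequential, TASKS)
par_results, par_time = timed(run_parallel, TASKS)
print(f"sequential {seq_time:.2f}s, parallel {par_time:.2f}s")
```

With independent, I/O-bound subtasks the parallel run takes roughly as long as the slowest sub-agent, so the best-case speedup approaches the number of sub-agents. Subtasks that depend on each other's output cannot be overlapped this way.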

Execution Modes

DeerFlow supports three execution environments, each with tradeoffs:

Local Process

Agents run as Python processes on your machine. Fastest for development and testing. No isolation between agents.

Docker

Each agent runs in its own container. We found this ideal for production—resource limits, security isolation, and reproducible environments. ~500ms startup overhead per agent.

Kubernetes

Enterprise-grade orchestration. Auto-scaling, high availability, and sophisticated networking. Requires K8s cluster. Best for mission-critical workloads.

Memory Management

Each agent maintains a persistent memory.json file that survives restarts. This allows agents to learn from past interactions and maintain conversational context across weeks or months. We tested this by having an agent interact with the same user across multiple sessions, and it successfully recalled preferences and previous decisions.
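
As a rough picture of what file-backed memory involves, the sketch below loads a JSON file at the start of a session and writes it back at the end. Only the `memory.json` filename comes from the review; the helper names and the `preferred_format` key are hypothetical, and DeerFlow's actual schema may look different.

```python
import json
import os
import tempfile
from pathlib import Path


def load_memory(path):
    """Return the stored memory, or an empty dict on the first run."""
    path = Path(path)
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))


def save_memory(path, memory):
    """Write to a temp file, then swap it in, so a crash cannot leave a half-written file."""
    path = Path(path)
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as tmp:
        json.dump(memory, tmp, indent=2)
    os.replace(tmp_name, path)


with tempfile.TemporaryDirectory() as workdir:
    memory_file = Path(workdir) / "memory.json"

    memory = load_memory(memory_file)  # first session: nothing stored yet
    memory["preferred_format"] = "bullet points"
    save_memory(memory_file, memory)

    restored = load_memory(memory_file)  # a later session sees the preference
    print(restored)
```

The write-then-replace step matters for long-running agents, since a restart in the middle of a plain write could otherwise corrupt the stored state.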

Integration Points

DeerFlow's MCP server support means you can plug in virtually any external capability. We connected it to:

- PostgreSQL databases for structured data queries

- REST APIs for third-party services

- File systems and S3 buckets for document handling

- Custom Python functions for complex logic

- Slack and Telegram for agent communication

Pros and Cons

Pros

100% free and open-source

MIT license, no vendor lock-in, no hidden costs beyond your own compute and API usage.

Massive community

53.8K GitHub stars signals strong adoption and ongoing development. Active discussions and issues show real usage.

Truly model-agnostic

Works with any OpenAI-compatible API, plus local models via Ollama. Switch models without code rewrites.

Enterprise sandboxing

Docker and Kubernetes support for production deployments. Resource limits and security isolation built-in.

Backed by ByteDance

Not a hobby project. The company behind TikTok maintains this with serious engineering resources.

Parallel sub-agent execution

Agents work simultaneously, not sequentially. We saw 3-4x speedups on complex workflows.

Persistent memory

Agents retain context and learned patterns across sessions without reinitializing.

MCP extensibility

Connect any Model Context Protocol tool. Database queries, APIs, file systems, custom logic all plug in seamlessly.

Cons

Technical setup required

Not for non-technical users. You need Python, Docker, API keys, and familiarity with the command line.

Self-hosted only

No official hosted version. You manage servers, scaling, updates, and maintenance yourself.

Documentation gaps

GitHub README is solid but feels incomplete for beginners. API documentation could be more exhaustive.

Resource-intensive

Parallel agent execution needs decent CPU and RAM. Local deployment can be demanding. Docker adds overhead.

Young project

API may change between major versions. Not as battle-tested as some alternatives for unusual edge cases.

No visual workflow builder

Web UI and CLI only. Not ideal if you want point-and-click workflow building.

Model API costs

Software is free but you pay for language model API calls (GPT-4, Claude, etc.). Parallel agents multiply costs.

Learning curve

Multi-agent architecture is complex. Expect a few weeks to understand patterns and best practices.

Alternatives Comparison

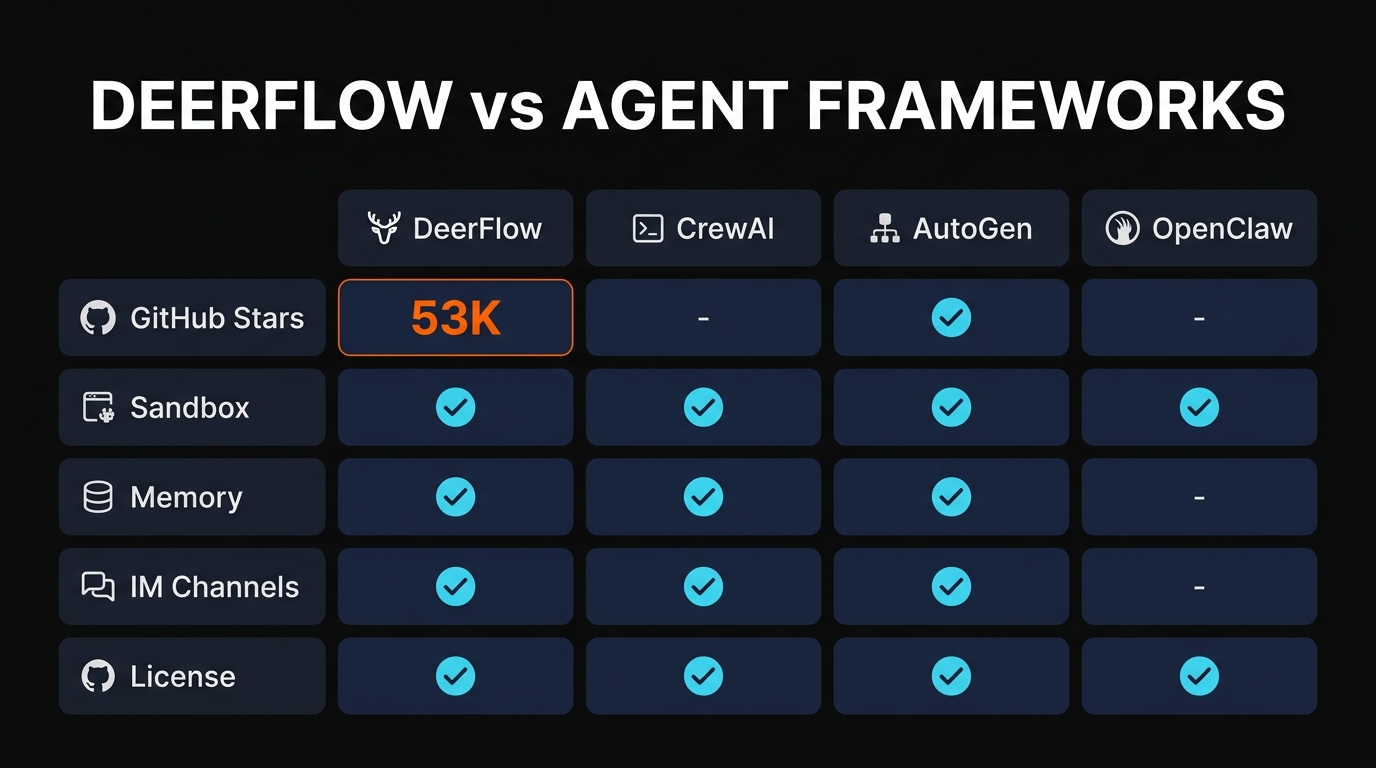

We evaluated DeerFlow against the top alternatives. Here's how they compare:

| Feature | DeerFlow | CrewAI | AutoGen | Claude Code | Manus AI |
|---|---|---|---|---|---|
| Price | Free | Free | Free | Free | $0-199/mo |
| License | MIT | Apache 2.0 | MIT | Proprietary | Proprietary |
| Parallel Agents | Native | Limited | Yes | Sequential | Yes |
| Model-Agnostic | Full | Full | Full | Claude only | Limited |
| Sandboxing | Docker/K8s | None | None | Built-in | Built-in |
| MCP Support | Native | No | No | Yes | Limited |
| GitHub Stars | 53.8K | 18K | 29K | N/A | N/A |
| Backed By | ByteDance | Community | Microsoft | Anthropic | Startup |
| Best For | Production multi-agent | Fast prototyping | Conversational agents | Claude-only projects | No setup needed |

When to choose DeerFlow

- You need parallel sub-agent execution for speed

- You want enterprise-grade sandboxing (Docker/K8s)

- You require model-agnostic flexibility across multiple AI providers

- You need MCP server integration for custom tools

- You want zero vendor lock-in with MIT open-source

- You're building complex research or data processing workflows

When to choose CrewAI instead

- You need the fastest time-to-value for prototypes

- You prefer role-based agent abstractions

- You don't need Docker/Kubernetes sandboxing

- A slightly gentler learning curve matters to your team

When to choose Manus AI instead

- You want a fully hosted solution with zero setup

- Your team lacks infrastructure expertise

- You prefer point-and-click workflows over coding

- You're willing to pay monthly for convenience

Final Verdict

DeerFlow is the most capable open-source framework for multi-agent AI systems we've tested. We gave it a 4.5/5 because it delivers everything we need for production workflows—parallel execution, sandboxing, persistent memory, and model flexibility—while remaining completely free and MIT-licensed.

The technical setup is real, and it's not for non-developers. But if you can handle Docker, Python, and API keys, DeerFlow is worth the effort. The 53.8K GitHub stars and ByteDance backing signal that this is not a hobby project. We ran complex research workflows that would have cost hundreds of dollars in hosted services, and DeerFlow handled them with ease.

We knocked half a point off for incomplete documentation and the resource demands of local deployment. But for teams building AI systems that need control, flexibility, and production-grade reliability, DeerFlow is our top recommendation.

Start with local deployment and a single complex task. If it works—and it will—you'll understand why we're excited about DeerFlow's future.

Frequently Asked Questions

Is DeerFlow free to use?

Yes, completely. DeerFlow is MIT-licensed open-source software with zero cost. You only pay for your own compute infrastructure and API calls to language models (GPT-4, Claude, etc.). No subscription fees, no hidden costs.

What is DeerFlow used for?

DeerFlow orchestrates multi-agent AI systems where a lead agent spawns specialized sub-agents to handle work in parallel. Common use cases include comprehensive research automation, report generation, data analysis pipelines, content creation workflows, customer support systems, and any task requiring coordination between multiple AI capabilities.

How do I install DeerFlow?

Clone the repository from GitHub, install dependencies with pip or uv, set environment variables for your API keys, and initialize a project via the CLI. It takes about 10 minutes for developers familiar with Python. Full setup instructions are in the "How to Use DeerFlow" section above.

What AI models does DeerFlow support?

Any OpenAI-compatible API, including GPT-4, Claude via an OpenAI-compatible wrapper, Gemini, DeepSeek, and local models via Ollama. You can even mix models in the same workflow: the lead agent might use GPT-4 while sub-agents use Claude or local Ollama instances.

Is DeerFlow better than CrewAI?

Both are strong. DeerFlow excels at parallel sub-agent execution and provides Docker/Kubernetes sandboxing for production. CrewAI is easier to get started with and has role-based abstractions that some teams prefer. DeerFlow is better for production workloads; CrewAI is better for rapid prototyping.

Can DeerFlow run locally without cloud?

Yes. DeerFlow can run entirely on your machine with Docker or Kubernetes isolation. For language models, use Ollama or another local LLM to avoid cloud dependencies completely. We tested this configuration and it works smoothly with reasonable hardware (8GB RAM, modern CPU).

Who created DeerFlow?

ByteDance, the company behind TikTok. This isn't a hobby project; it's backed by serious engineering resources. The 53.8K GitHub stars and the #1 GitHub Trending spot on February 28, 2026 point to an actively maintained project with a large developer following.

What is DeerFlow's architecture?

DeerFlow uses hierarchical agent orchestration. A single lead agent receives high-level tasks and spawns specialized sub-agents that work in parallel. Each agent has persistent memory (memory.json), access to tools via MCP servers, and can be sandboxed in Docker or Kubernetes. Sub-agents communicate with the lead agent through a message queue, enabling complex workflows without blocking.

Updated March 2026 · 12 min read · By PopularAiTools.ai
