TL;DR -- LLM Council Review

Rating: 4.1/5

Best For: Developers and organizations who need high-confidence AI outputs by synthesizing multiple model perspectives

Pricing: Open-source concept. Costs depend on API usage through OpenRouter or direct model providers.

Verdict: LLM Council is less a product and more an architecture pattern -- but an important one. For critical decisions where single-model bias is unacceptable, having multiple models evaluate each other's answers and a Chairman synthesize the result can produce more balanced outputs than a single model alone. The OpenRouter integration makes it practical to implement without managing multiple API credentials.

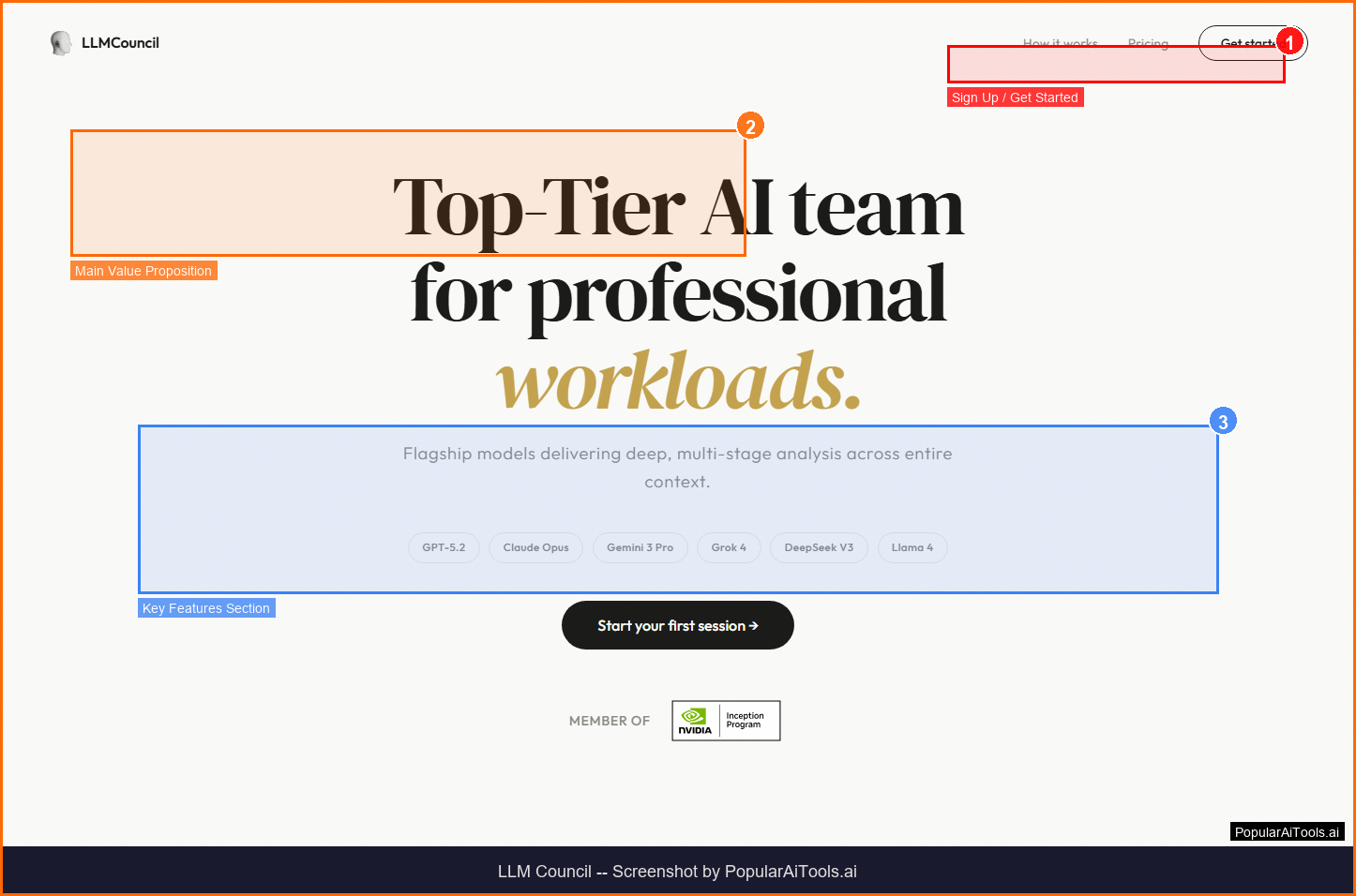

What is LLM Council?

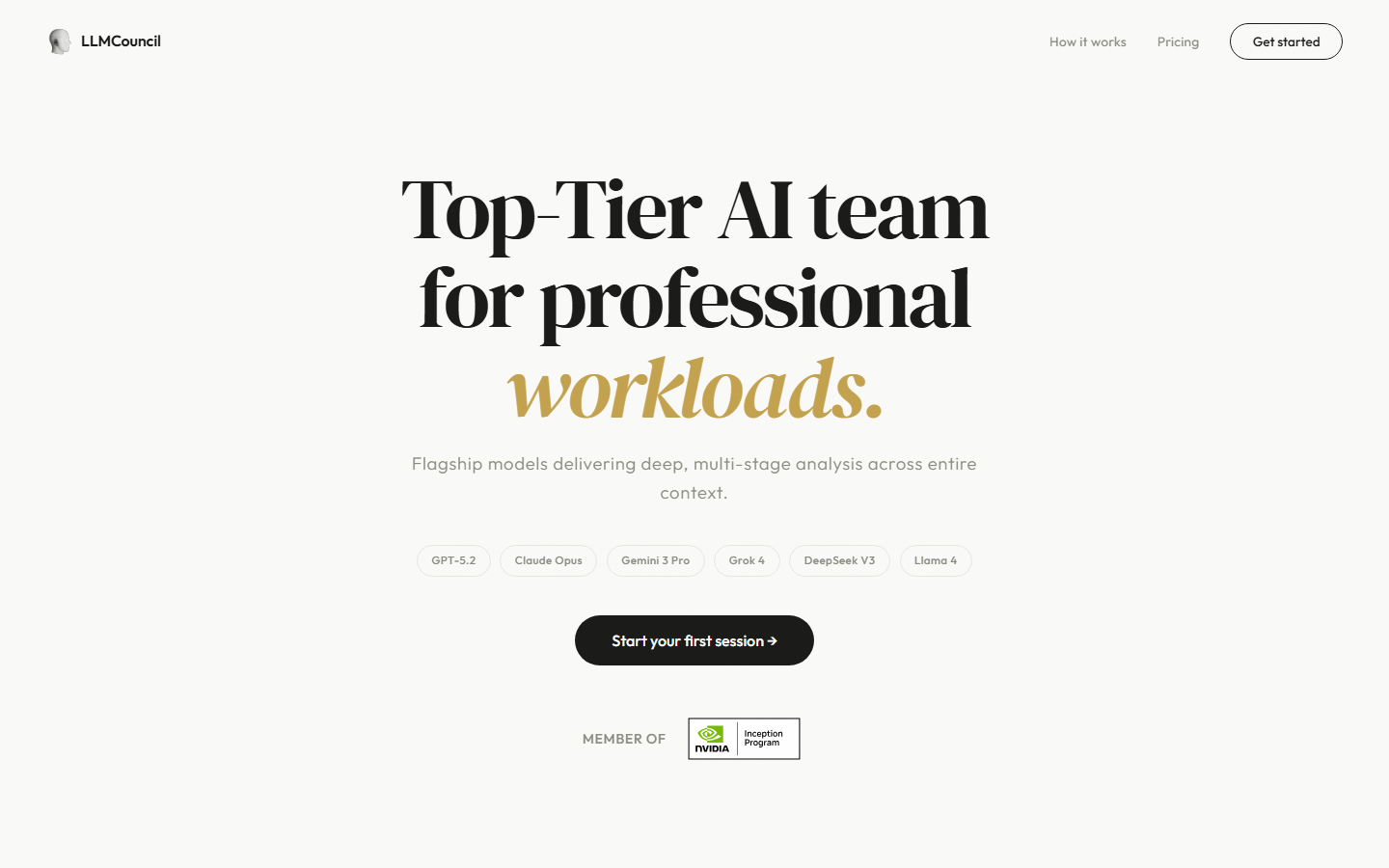

LLM Council implements Andrej Karpathy's concept of an AI moderation board where the same question is answered by multiple LLMs, all answers are anonymized, each model evaluates and ranks all responses, and a designated Chairman model synthesizes a final verdict. This reduces single-model bias and produces more balanced, objective answers.
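The workflow described above can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual code: `run_council`, the `Model` type, the prompt wording, and the `seed` parameter are all assumptions made for this example, and each "model" is simply a function that takes a prompt and returns text.

```python
import random
from typing import Callable, Dict

# Hypothetical type: a "model" is any function that maps a prompt to a reply.
Model = Callable[[str], str]


def run_council(question: str, members: Dict[str, Model],
                chairman: Model, seed: int = 0) -> str:
    """Run one council round and return the chairman's final answer."""
    # Stage 1: every member answers the same question independently.
    answers = {name: ask(question) for name, ask in members.items()}

    # Stage 2a: anonymize. Shuffle the order and replace model names
    # with neutral labels (A, B, C, ...; supports up to 26 members).
    names = list(answers)
    random.Random(seed).shuffle(names)
    ballot = "\n\n".join(
        f"Response {chr(ord('A') + i)}:\n{answers[name]}"
        for i, name in enumerate(names)
    )

    # Stage 2b: every member ranks the anonymized responses.
    review_prompt = (
        f"Question: {question}\n\n{ballot}\n\n"
        "Rank these responses from best to worst and explain briefly."
    )
    reviews = [ask(review_prompt) for ask in members.values()]

    # Stage 3: the chairman sees the responses and all reviews,
    # then writes the final verdict.
    chairman_prompt = (
        f"Question: {question}\n\n{ballot}\n\nReviews:\n"
        + "\n---\n".join(reviews)
        + "\n\nWrite the final answer."
    )
    return chairman(chairman_prompt)
```

In practice each entry in `members` would wrap a real API call. Passing plain functions keeps the sketch testable offline and makes the three stages easy to see.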

LLM Council falls into the AI Development category and is designed for developers and organizations who need high-confidence AI outputs by synthesizing multiple model perspectives. In this review, we explore its features, pricing, pros and cons, and how it compares to alternatives on the market.

Key Features

Here are the standout features that make LLM Council worth considering:

Multi-Model Querying

Send the same prompt to multiple LLMs simultaneously and collect independent responses.

Anonymized Evaluation

Responses are anonymized before cross-evaluation to prevent model favoritism.

Cross-Model Ranking

Each participating model evaluates and ranks all responses for quality.
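The review does not say how the individual rankings are combined. One common option is a Borda count, sketched below as an assumption rather than as LLM Council's actual method. The function name `borda_aggregate` and the response labels are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def borda_aggregate(rankings: List[List[str]]) -> List[str]:
    """Combine best-to-worst rankings into one consensus order."""
    scores: Dict[str, int] = defaultdict(int)
    for ranking in rankings:
        n = len(ranking)
        for position, label in enumerate(ranking):
            # Best response gets n-1 points, the worst gets 0.
            scores[label] += n - 1 - position
    # Highest score first; ties are broken alphabetically so the
    # result is deterministic.
    return sorted(scores, key=lambda label: (-scores[label], label))


# Three council members rank anonymized responses A, B, and C.
consensus = borda_aggregate([
    ["B", "A", "C"],
    ["B", "C", "A"],
    ["A", "B", "C"],
])
print(consensus)  # ['B', 'A', 'C']
```

A consensus order like this can be handed to the Chairman alongside the written reviews, so the final synthesis does not depend on parsing free-text opinions alone.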

Chairman Synthesis

A designated model synthesizes a final verdict based on evaluations from all council members.

OpenRouter Integration

Leverages OpenRouter for access to many LLMs through a single API credential.
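As a rough sketch of what "a single API credential" means in practice, the snippet below builds one request per council member against OpenRouter's OpenAI-compatible chat completions endpoint. It only constructs the requests and does not send them. The helper names and the model IDs in the usage line are placeholders chosen for this example, so check OpenRouter's model list for real identifiers.

```python
import json
import urllib.request
from typing import List

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) one chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            # The same key is reused for every model on the council.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def build_council_requests(api_key: str, models: List[str],
                           prompt: str) -> List[urllib.request.Request]:
    """One request per council member, all with the same prompt."""
    return [build_request(api_key, model, prompt) for model in models]


# Placeholder model IDs, for illustration only.
requests_to_send = build_council_requests(
    "YOUR_API_KEY", ["vendor/model-a", "vendor/model-b"], "Hello"
)
```

Each request could then be sent with `urllib.request.urlopen`, ideally from a thread pool so the members answer in parallel. The reply text is expected under `choices[0].message.content` in the JSON response.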

How to Use LLM Council

Getting started with LLM Council is straightforward. Here is the typical workflow:

1. Visit the Website

Go to https://llmcouncil.com and create your account. Most tools offer a free tier or trial to get started.

2. Explore the Dashboard

Familiarize yourself with LLM Council's interface, settings, and available features. The onboarding flow will guide you through initial setup.

3. Configure Your Workflow

Set up LLM Council for your specific use case. Connect integrations, customize settings, and configure any automations.

4. Start Using & Iterate

Begin using LLM Council for real tasks. Monitor results, adjust settings, and scale usage as you become comfortable.

Pricing Plans

Open-source concept. Costs depend on API usage through OpenRouter or direct model providers.

| Plan | Price | Includes |
|---|---|---|
| Self-Hosted | Free | Run your own council with your API keys |
| OpenRouter | Pay-per-use | Unified API access to 100+ models |
| Custom Implementation | Varies | Build custom council workflows |

Pros and Cons

Pros

- ✓ Reduces single-model bias for critical decisions

- ✓ Based on Andrej Karpathy's LLM Council concept

- ✓ Works with any LLMs through OpenRouter

- ✓ Open-source implementation available

Cons

- ✗ Multiple model calls increase cost per query

- ✗ Latency increases with more council members

- ✗ Requires understanding of LLM capabilities for best results
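The cost and latency trade-offs can be made concrete with a little arithmetic. The sketch below assumes each member makes exactly one answer call and one review call, and that the Chairman makes one separate synthesis call, which gives 2N + 1 calls for N members. The function names and the timing figures are made up for illustration.

```python
from typing import List


def council_calls(n_members: int) -> int:
    """API calls per question: N answers + N reviews + 1 chairman call."""
    return 2 * n_members + 1


def estimate_latency(answer_secs: List[float], review_secs: List[float],
                     chairman_secs: float, parallel: bool = True) -> float:
    """Rough end-to-end latency for one council round, in seconds."""
    if parallel:
        # Calls within a stage run concurrently, so each stage takes as
        # long as its slowest member.
        return max(answer_secs) + max(review_secs) + chairman_secs
    # Calls run one after another, so every call adds to the total.
    return sum(answer_secs) + sum(review_secs) + chairman_secs


print(council_calls(4))  # 9
print(estimate_latency([2.0, 3.0, 5.0], [1.0, 4.0, 2.0], 3.0))  # 12.0
print(estimate_latency([2.0, 3.0, 5.0], [1.0, 4.0, 2.0], 3.0, parallel=False))  # 20.0
```

Call count understates the real cost growth, because review and Chairman prompts contain every member's answer and so get longer as the council grows.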

LLM Council Alternatives

If LLM Council does not fit your needs, here are some alternatives worth considering:

| Alternative | Description |
|---|---|
| OpenRouter | Unified LLM API access |
| PromptLayer | LLM prompt management |
| Portkey | AI gateway for LLMs |
| Helicone | LLM observability platform |

Final Verdict

LLM Council is less a product and more an architecture pattern -- but an important one. For critical decisions where single-model bias is unacceptable, having multiple models evaluate each other's answers and a Chairman synthesize the result can produce more balanced outputs than a single model alone. The OpenRouter integration makes it practical to implement without managing multiple API credentials.

Frequently Asked Questions

What is LLM Council?

LLM Council is a system where multiple LLMs independently answer a question, evaluate each other's responses, and synthesize a final verdict.

Who created the LLM Council concept?

The concept is based on Andrej Karpathy's idea of using multiple models to reduce bias and improve answer quality.

How does it reduce bias?

Multiple models respond independently and then cross-evaluate anonymized answers, so no single model's bias dominates the final result.

What is the Chairman model?

A designated model that synthesizes the final verdict based on evaluations from all council members.

Is LLM Council expensive?

Costs scale with the number of models used per query, as each model call incurs API charges.

Does it work with any LLM?

Yes, through OpenRouter or direct API integration, it works with most available LLMs.

Is there an open-source implementation?

Yes, open-source implementations are available, including n8n workflow templates.

When should I use LLM Council?

For critical decisions, complex analysis, or any scenario where reducing AI bias is important.

Review by PopularAiTools.ai | Last updated: March 21, 2026
