TL;DR -- MagicArena Review

Rating: 4.0/5

Best For: AI researchers, designers, and content creators who need to compare visual AI models side-by-side

Pricing: Free with paid subscription options

Verdict: MagicArena, developed by ByteDance, fills an important gap in the AI tooling ecosystem: a community-driven platform for comparing visual generation models. The arena-style matchup format -- where users vote on the better output from two anonymous models -- creates genuinely useful benchmark data. It covers image generation, image-to-video, and text-to-video models including Midjourney, FLUX, Kling (Keling), and more. It is free to use for basic comparisons.

What is MagicArena?

MagicArena is a visual generation model comparison platform developed by ByteDance. It operates as a competitive arena where different AI models -- for text-to-image, image-to-video, and text-to-video generation -- are pitted against each other on the same prompts. Users evaluate the results through blind voting, creating a community-driven leaderboard that ranks models based on real-world output quality rather than synthetic benchmarks.

The concept follows the Elo rating approach that Chatbot Arena (now Arena.ai) popularized for large language models, but applies it to visual generation. When you submit a prompt, two randomly selected models generate outputs simultaneously. You vote for the winner without knowing which model produced which result. Each vote adjusts the two models' ratings, so over thousands of votes the leaderboard settles into increasingly reliable rankings.
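To make the rating idea concrete, here is a minimal sketch of a standard Elo update applied to blind pairwise votes. MagicArena does not publish its exact formula in this review, so the starting rating of 1000, the K-factor of 32, and the model names below are assumptions chosen purely for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one vote.

    k is an assumed K-factor; the platform's real value is not documented here.
    """
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    new_a = rating_a + k * (score_a - exp_a)
    # B's change is the mirror image of A's, so total rating is conserved.
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - exp_a))
    return new_a, new_b


# Hypothetical models and votes: (left model, right model, left model won?)
ratings = {"model_x": 1000.0, "model_y": 1000.0, "model_z": 1000.0}
votes = [
    ("model_x", "model_y", True),
    ("model_y", "model_z", False),
    ("model_x", "model_z", True),
]

for left, right, left_won in votes:
    ratings[left], ratings[right] = update_elo(ratings[left], ratings[right], left_won)

# Print the resulting leaderboard, highest rating first.
for name, rating in sorted(ratings.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: {rating:.1f}")
```

An upset (a low-rated model beating a high-rated one) moves both ratings more than an expected result does, which is why a model cannot climb the leaderboard simply by beating weak opponents.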

The platform covers major image generation models, including Midjourney, FLUX, ByteDance's own Jimeng, and Kuaishou's Kling (Keling), as well as video generation models. It is free to use for basic comparisons, with paid subscriptions available for higher usage limits.

Key Features

Model Battle Arena

Submit a text prompt and two AI models generate outputs simultaneously. Vote for the better result in a blind comparison. The arena format eliminates brand bias and surfaces genuine quality differences between models.

Community Leaderboard

A dynamic ranking system updated by thousands of user votes. The Elo-based scoring provides statistically meaningful rankings that reflect real-world output quality across diverse prompts and use cases.

Multi-Modal Coverage

Compare models across text-to-image, image-to-video, and text-to-video generation. This breadth of coverage makes MagicArena a one-stop evaluation platform for visual AI capabilities.

Model Explorer

Browse and explore generation results across different models. See how various models handle similar prompts, compare style tendencies, and identify which model best suits your specific use case.

Points & Rewards System

Earn points by participating in model comparisons and voting. The gamification element encourages consistent participation, which improves the statistical reliability of the leaderboard rankings.
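Why more votes mean more reliable rankings can be shown with a little arithmetic. The sketch below computes a 95% confidence interval for a model's win rate using the normal approximation; this is a generic statistical illustration, not MagicArena's documented method, and the vote counts are made up.

```python
import math


def win_rate_interval(wins: int, total: int, z: float = 1.96):
    """Approximate confidence interval for a win rate (normal approximation).

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    p = wins / total
    margin = z * math.sqrt(p * (1.0 - p) / total)
    return max(0.0, p - margin), min(1.0, p + margin)


# Same 60% win rate, but ten times as many votes in the second case.
for wins, total in [(60, 100), (600, 1000)]:
    low, high = win_rate_interval(wins, total)
    print(f"{wins}/{total} votes: win rate between {low:.3f} and {high:.3f}")
```

With 100 votes the interval spans roughly 0.50 to 0.70, so the model might be barely better than a coin flip; with 1,000 votes it narrows to roughly 0.57 to 0.63. The margin shrinks with the square root of the vote count, which is the effect the points system is meant to encourage.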

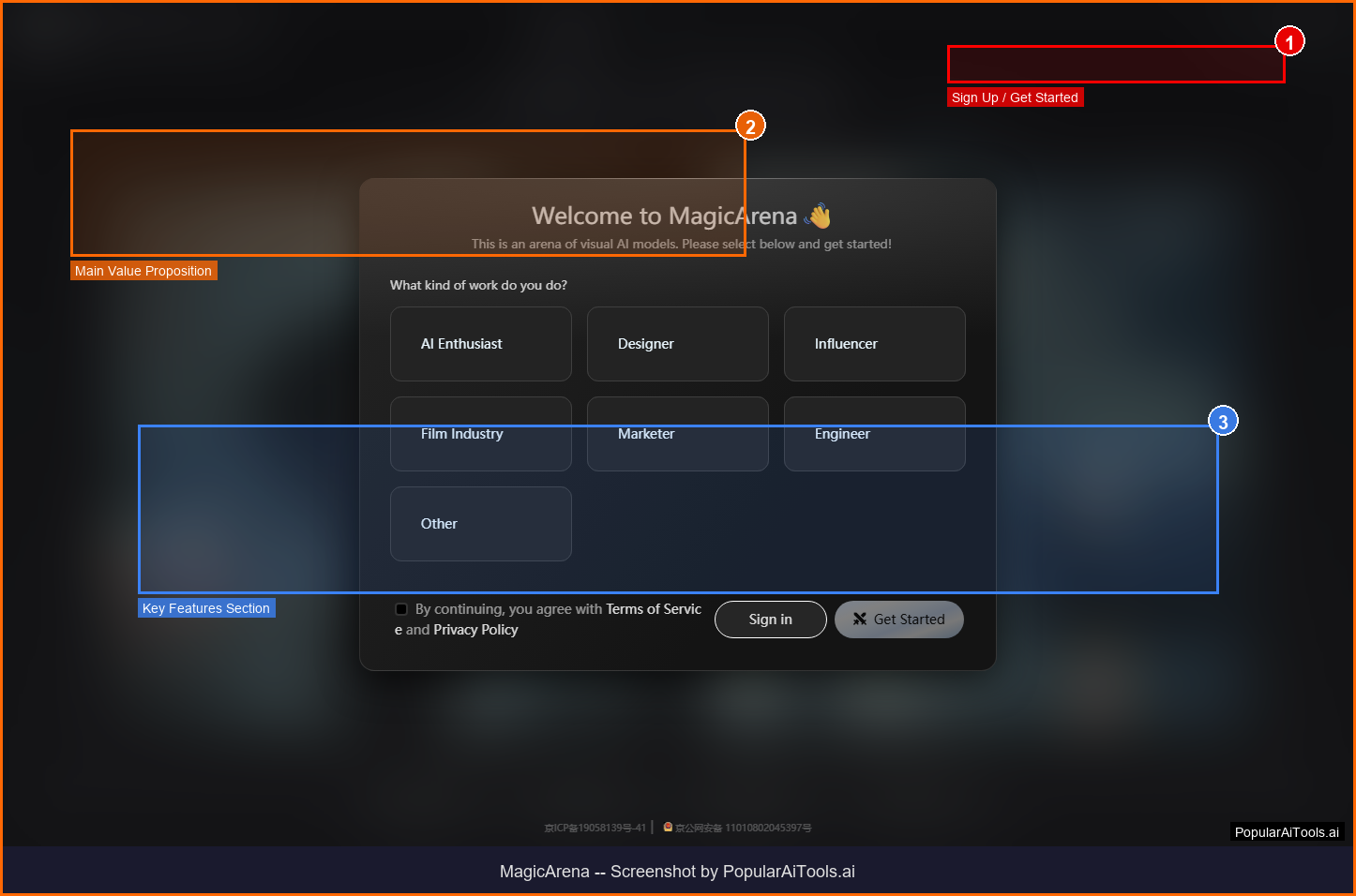

How to Use MagicArena

1. Choose Your Battle Type

Select text-to-image, image-to-video, or text-to-video comparison mode.

2. Enter Your Prompt

Type a text description of what you want generated. Two anonymous models will process the same prompt simultaneously.

3. Compare & Vote

Review both outputs side-by-side and vote for the one you prefer. After voting, the model names are revealed.

4. Explore the Leaderboard

Check the community-driven rankings to see which models are consistently preferred across thousands of comparisons.

Pricing

MagicArena offers free access for basic model comparisons and voting. Paid subscriptions are available for users who need higher usage limits and additional features. The platform is primarily designed as a community evaluation tool, so the free tier provides sufficient access for most users looking to compare models before committing to a paid generation service.

Pros and Cons

Pros

- Free one-stop model comparison

- Blind voting eliminates brand bias

- Covers image and video generation models

- Community-driven leaderboard with Elo ratings

- Backed by ByteDance infrastructure

Cons

- Interface primarily in Chinese (limited English)

- ByteDance ownership may introduce model bias

- Limited documentation in English

- Not a generation tool -- comparison only

- Newer platform with smaller vote sample sizes

MagicArena Alternatives

| Alternative | Best For | Price |
|---|---|---|
| Arena.ai (LMArena) | LLM and multimodal model benchmarking | Free |
| Artificial Analysis | AI model performance and pricing data | Free |
| LiveBench | Objective LLM benchmarking | Free |

Final Verdict

MagicArena earns a 4.0/5 for providing a genuinely useful service in the AI ecosystem: objective, community-driven comparison of visual generation models. The blind voting format and Elo-based leaderboard create reliable rankings that help users make informed decisions about which image or video generation tool to invest in.

The main limitations are the Chinese-language interface and the potential for bias given ByteDance's ownership, since its own models compete with others on the leaderboard. For English-speaking users, Arena.ai provides a more accessible alternative for model comparison. But for anyone serious about evaluating visual AI models, MagicArena offers a valuable and largely free resource.

Frequently Asked Questions

Is MagicArena free to use?

Yes. MagicArena offers free access for basic model comparisons and voting. Paid subscriptions are available for higher usage limits.

Who made MagicArena?

MagicArena is developed by ByteDance, the company behind TikTok. It serves as a platform for evaluating visual generation AI models across the industry.

Can I generate images with MagicArena?

MagicArena generates images as part of the comparison process, but it is primarily an evaluation and benchmarking tool, not a production image generation service. For regular image generation, use the individual tools like Midjourney or FLUX directly.
