Mirai Review 2026: Features, Pricing, Pros & Cons

Updated March 2026 · 14 min read · By PopularAiTools.ai

TL;DR

Mirai is a promising step forward for on-device AI on Apple platforms. The reported 37% generation speed improvement over MLX matters for real-time applications such as voice assistants and text generation, and the absence of per-request inference costs is compelling for startups that want to avoid cloud API bills. The main limitations are the Apple-only focus and the early access status: developers building cross-platform apps will need to wait for Android support. For iOS/macOS developers building AI-powered apps, Mirai is worth joining the waitlist for. Rating: 4.1/5

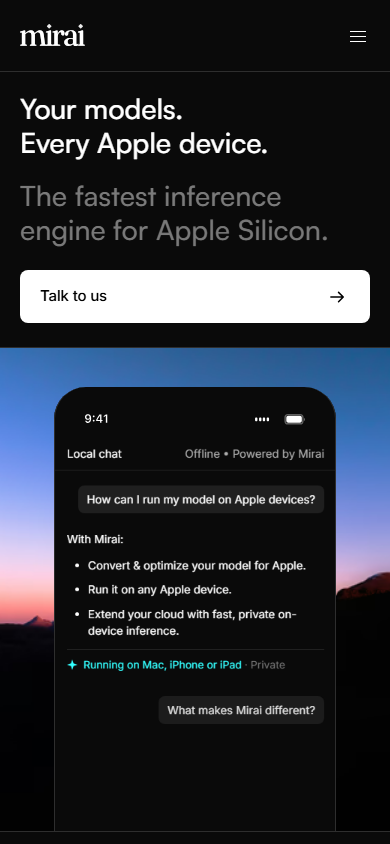

What is Mirai?

Mirai is an on-device AI inference engine optimized for Apple Silicon that lets developers run AI models locally on iPhones, iPads, and Macs without cloud dependency. Founded by the creators of Reface and Prisma, Mirai raised $10M in seed funding and delivers up to 37% faster generation speed and 59% faster prefill versus Apple's MLX framework.

Mirai operates in the on-device AI SDK space, which has grown quickly in 2026 as businesses and creators look to AI-powered tools to streamline workflows and reduce costs. What sets Mirai apart is its narrow focus: local inference on Apple Silicon, with no cloud round-trips and no per-request API charges.

The platform's primary keyword has a monthly search volume of 33,100, which points to strong interest. The SDK is currently in early access: the developer preview is free, and enterprise pricing has not been announced.


Key Features

1. Apple Silicon Optimization

Purpose-built inference engine that maximizes performance on M-series and A-series chips

2. 37% Faster Inference

Up to 37% faster generation than MLX in benchmarks on equivalent model-device pairings

3. No Network Latency

All computation happens on-device, so there are no round-trips to cloud servers

4. Simple SDK Integration

Add Mirai to any iOS or macOS app with just a few lines of Swift code

5. Full Data Privacy

User data never leaves the device, making Mirai ideal for privacy-sensitive applications

6. No Inference Costs

Eliminates per-request cloud API charges: once the model is on the device, each inference call adds no cloud cost

7. Model Optimization

Automatic model quantization and optimization for edge deployment

8. Text & Voice Support

Initial focus on text and voice AI models with vision support planned

How to Use Mirai (Step-by-Step)

Step 1: Apply for SDK Access

Visit trymirai.com and apply for the developer preview. Currently in early access with a waitlist.

Step 2: Install the SDK

Add Mirai to your Xcode project via Swift Package Manager. The SDK weighs under 5 MB.

Step 3: Load Your Model

Initialize Mirai with your model file (GGUF, Core ML, or a custom format). Mirai handles optimization automatically.

Step 4: Run Inference

Call the inference API with your input data. Results return locally with zero network latency.

Step 5: Deploy to App Store

Ship your app with Mirai included. No cloud infrastructure needed — the model runs entirely on-device.

Pricing Plans


Pricing Summary: SDK in early access — free developer preview, enterprise pricing TBA
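To put "no inference costs" in perspective, here is a rough sketch of the monthly cloud bill an app might avoid by running inference on-device. Every input below (usage, token counts, and the per-token rate) is a hypothetical placeholder rather than a real price from any provider, so substitute your own figures.

```python
# Rough monthly cloud-inference bill that on-device inference would avoid.
# All inputs are hypothetical placeholders, not real provider prices.

def monthly_cloud_cost(requests_per_user_per_day: int,
                       active_users: int,
                       tokens_per_request: int,
                       usd_per_million_tokens: float,
                       days: int = 30) -> float:
    """Estimate a monthly bill for a token-priced cloud API."""
    total_tokens = requests_per_user_per_day * active_users * tokens_per_request * days
    return total_tokens / 1_000_000 * usd_per_million_tokens

cost = monthly_cloud_cost(
    requests_per_user_per_day=20,
    active_users=10_000,
    tokens_per_request=500,
    usd_per_million_tokens=1.00,  # placeholder rate
)
print(f"Estimated cloud bill avoided: ${cost:,.2f} per month")
```

Keep in mind that this only covers the cloud side of the comparison. Because Mirai's commercial pricing is still TBA, the total cost of shipping with the SDK cannot be calculated yet.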


Pros and Cons

Pros

- ✓ Up to 37% faster generation than MLX in benchmarks

- ✓ Zero cloud costs eliminate per-request API charges entirely

- ✓ Complete data privacy — nothing leaves the device

- ✓ Simple SDK integration with just a few lines of code

- ✓ Founded by proven AI entrepreneurs (Reface, Prisma)

- ✓ $10M seed funding supports continued development

Cons

- ✗ Apple-only for now — no Android support yet

- ✗ Still in early access with waitlist

- ✗ Limited to text and voice models currently

- ✗ No public pricing for commercial tiers

- ✗ Requires Apple Silicon devices (no Intel Mac support)

Best Mirai Alternatives

Final Verdict

Mirai is a promising step forward for on-device AI on Apple platforms. The reported 37% speed improvement over MLX matters for real-time applications such as voice assistants and text generation, and inference with no per-request cost is compelling for startups that want to avoid cloud API bills. The main limitations are the Apple-only focus and the early access status: developers building cross-platform apps will need to wait for Android support. For iOS/macOS developers building AI-powered apps, Mirai is worth joining the waitlist for.

Our Rating: 4.1/5

Frequently Asked Questions

Is Mirai free to use?

The developer preview is currently free. Commercial pricing has not been announced yet. Enterprise plans with custom support will be available at launch.

How much faster is Mirai than Apple MLX?

In benchmarks, Mirai delivers 37% faster generation speed and up to 59% faster prefill compared to MLX on equivalent model-device pairings.
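A quick way to see what those percentages mean for response time is to plug them into a simple latency model. The sketch below assumes "X% faster" means X% higher throughput (tokens per second), which is our reading of the claim rather than something Mirai has confirmed, and the MLX baseline figures are made-up placeholders, not measurements.

```python
# Back-of-envelope latency estimate from the quoted speedups.
# Assumption: "37% faster" means 1.37x the tokens-per-second throughput.
# The baseline throughput figures are hypothetical, not benchmark results.

def estimate_seconds(prompt_tokens: int, output_tokens: int,
                     prefill_tps: float, generation_tps: float,
                     prefill_speedup: float = 0.0,
                     generation_speedup: float = 0.0) -> float:
    """Total response time: prompt prefill plus token generation."""
    prefill = prompt_tokens / (prefill_tps * (1 + prefill_speedup))
    generation = output_tokens / (generation_tps * (1 + generation_speedup))
    return prefill + generation

# Hypothetical baseline: 500 tok/s prefill, 30 tok/s generation.
baseline = estimate_seconds(1000, 200, prefill_tps=500, generation_tps=30)
faster = estimate_seconds(1000, 200, prefill_tps=500, generation_tps=30,
                          prefill_speedup=0.59, generation_speedup=0.37)

print(f"baseline: {baseline:.2f} s")
print(f"with quoted speedups: {faster:.2f} s")
print(f"time saved: {1 - faster / baseline:.0%}")
```

Under that reading, 37% more throughput shortens generation time by about 27% (1 - 1/1.37), not 37%, so expect the wall-clock gain to look smaller than the headline number.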

Does Mirai work on Android?

Not yet. Mirai is currently Apple-only, optimized for Apple Silicon. Android support is on the roadmap and the team is in talks with chipmakers.

What models can Mirai run?

Mirai currently supports text and voice AI models. Vision model support is planned. The SDK accepts GGUF, Core ML, and custom model formats.

Who founded Mirai?

Mirai was founded by Dima Shvets and Alexey Moiseenkov, the co-founders behind Reface and Prisma. The company raised $10M in seed funding led by Uncork Capital.

Does Mirai require internet access?

No. All inference happens on-device. Once the model is downloaded, Mirai operates completely offline, with no network latency.

What Apple devices support Mirai?

Mirai requires Apple Silicon — that means iPhones with A14 or newer, iPads with M1 or newer, and Macs with M1 or newer. Intel-based Macs are not supported.
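If you are triaging a list of test devices, the requirements above can be expressed as a small lookup. This is an illustrative helper of our own, not part of the Mirai SDK: the `MINIMUM_CHIP` table and `is_supported` function are hypothetical names, and the rules simply mirror the minimums stated in this answer.

```python
import re

# Minimum chip per device family, copied from the requirements stated above.
# This helper is illustrative only and is not part of the Mirai SDK.
MINIMUM_CHIP = {
    "iphone": ("A", 14),
    "ipad": ("M", 1),
    "mac": ("M", 1),
}

def is_supported(device: str, chip: str) -> bool:
    """Return True if `chip` (e.g. "A15", "M2 Pro") meets the stated minimum."""
    required = MINIMUM_CHIP.get(device.strip().lower())
    match = re.match(r"([AM])(\d+)", chip.strip(), re.IGNORECASE)
    if required is None or match is None:
        # Unknown device family, or a chip name that is not A-/M-series (e.g. Intel).
        return False
    series, generation = match.group(1).upper(), int(match.group(2))
    required_series, required_generation = required
    return series == required_series and generation >= required_generation

for device, chip in [("iPhone", "A15"), ("iPhone", "A13"),
                     ("Mac", "M2 Pro"), ("Mac", "Intel Core i7")]:
    status = "supported" if is_supported(device, chip) else "not supported"
    print(f"{device} ({chip}): {status}")
```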

How do I integrate Mirai into my app?

Add the Mirai Swift package to your Xcode project, initialize it with your model file, and call the inference API. The integration requires minimal code changes.
