DeepSeek V4 + Claude Code: How to Cut Your AI Coding Costs by 100X

AI Infrastructure Lead

If you use Claude Code for serious development work, you already know the pricing sting. Anthropic's Claude Max plan runs $100/month for 5x usage — that's $1,200/year before you even factor in API overages. For teams, it's worse. And if you've ever hit the rate limit mid-session on a complex refactor, you know the frustration of paying premium prices and still getting throttled.

DeepSeek V4 changes the math entirely. Released on April 24, 2026, it ships with an Anthropic-compatible API endpoint — meaning you can point Claude Code at DeepSeek's servers with a few environment variables and keep your exact same workflow. The cost difference? V4-Flash runs at $0.14 per million input tokens versus Claude Opus's $15. That's not a typo. That's roughly 100x cheaper.
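As a quick sanity check on the "roughly 100x" figure, the ratio of the two input prices quoted above can be computed directly. This is only a sketch of the arithmetic: `price_ratio` is a hypothetical helper name, and the prices are the ones stated in this article, which may have changed since.

```shell
# Hypothetical helper: ratio between two per-million-token prices, rounded.
price_ratio() {
  awk -v a="$1" -v b="$2" 'BEGIN { printf "%.0f\n", a / b }'
}

# Claude Opus input ($15/M) vs V4-Flash input ($0.14/M), as quoted above.
price_ratio 15 0.14   # prints 107
```

The comparison covers input tokens only. Output and cache-hit prices differ by other factors, so the blended ratio depends on your workload.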

What Is DeepSeek V4?

DeepSeek V4 is the fourth-generation open-source model family from DeepSeek AI, released April 24, 2026 under the MIT license. It comes in two variants that matter for Claude Code users:

V4-Pro

- 1.6 trillion total parameters (49B activated per token)

- 1M token context window

- SWE-bench: 80.6%

- Promo: $0.87/M output tokens (until May 31)

V4-Flash

- 284 billion total parameters (13B activated per token)

- 1M token context window

- Optimized for speed + cost

- Price: $0.28/M output tokens

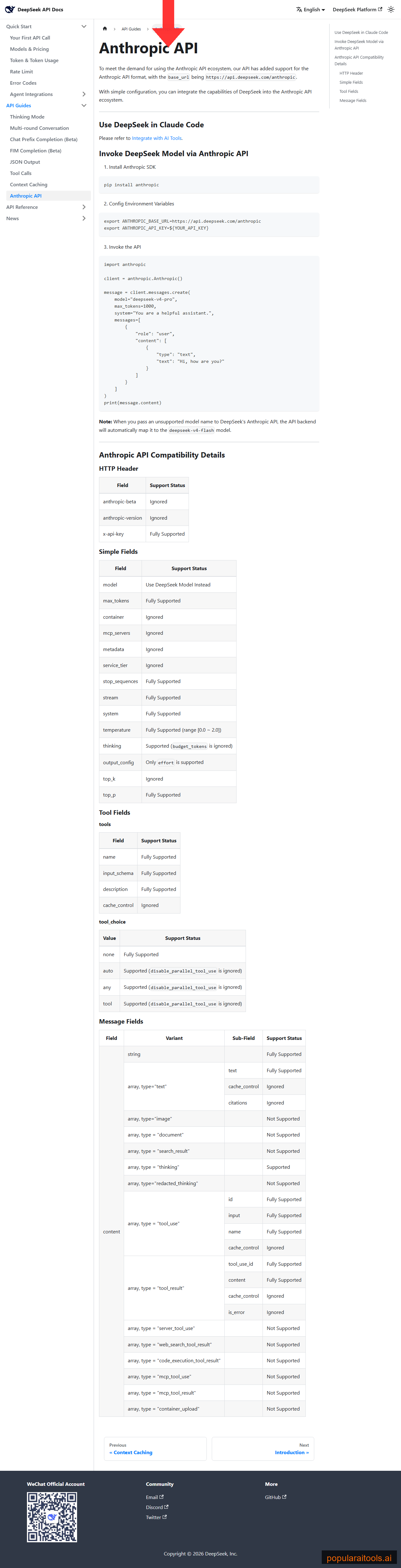

The key innovation for Claude Code users is DeepSeek's Anthropic-compatible API endpoint. Their server at api.deepseek.com/anthropic accepts the exact same request format that Claude Code sends to Anthropic. Claude Code doesn't know it's not talking to Anthropic — same SDK, same tool calls, same streaming. You just swap the base URL and auth token.

Both models support thinking mode, JSON output, tool calls, and the full tool_choice API. The only things not supported are image input, document content, and MCP content types — which are rarely used in typical Claude Code coding sessions anyway.

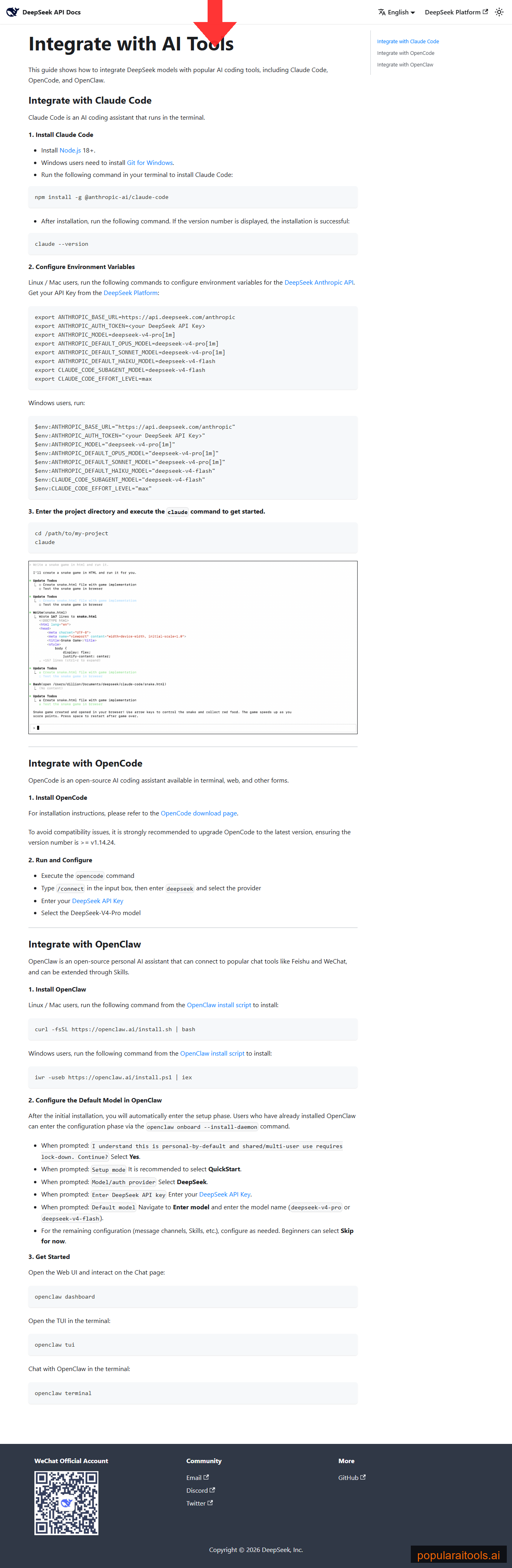

How to Set Up DeepSeek V4 with Claude Code (5 Minutes)

The entire setup is 3 steps. If you already have Claude Code installed, you can be running on DeepSeek in under 5 minutes.

Step 1: Get Your DeepSeek API Key

Go to platform.deepseek.com and create an account. Navigate to API Keys and generate one. New accounts get $5 in free credits — enough for several days of heavy coding.

Step 2: Set Environment Variables

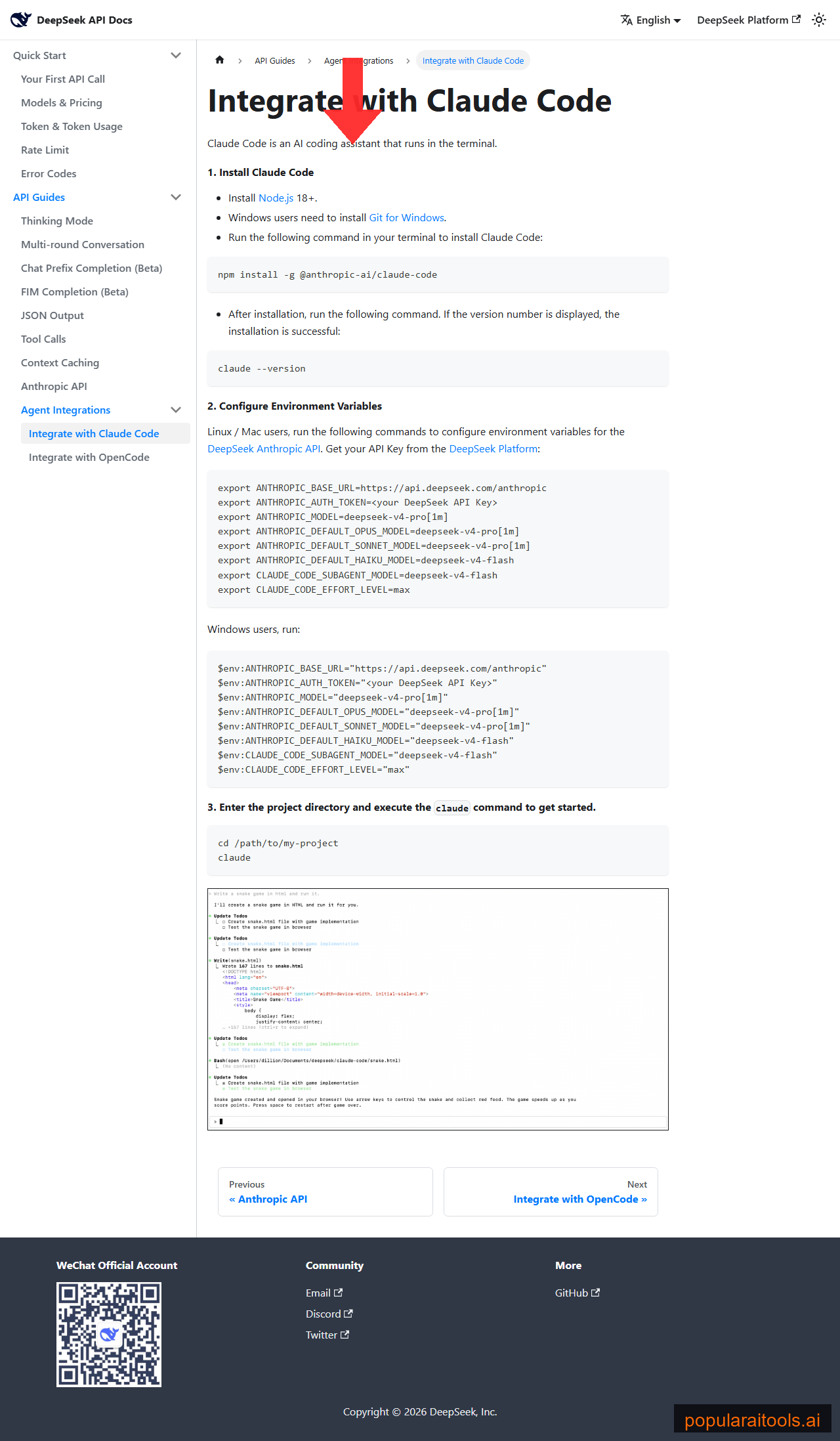

Add these to your .bashrc, .zshrc, or PowerShell profile:

export ANTHROPIC_BASE_URL="https://api.deepseek.com/anthropic"
export ANTHROPIC_AUTH_TOKEN="your_deepseek_api_key_here"
export ANTHROPIC_MODEL="deepseek-v4-pro[1m]"
export ANTHROPIC_DEFAULT_OPUS_MODEL="deepseek-v4-pro[1m]"
export ANTHROPIC_DEFAULT_SONNET_MODEL="deepseek-v4-pro"
export ANTHROPIC_DEFAULT_HAIKU_MODEL="deepseek-v4-flash"
export CLAUDE_CODE_SUBAGENT_MODEL="deepseek-v4-flash"
export CLAUDE_CODE_EFFORT_LEVEL="max"

Critical: The [1m] suffix on the model name activates the full 1 million token context window. Without it, you're limited to 200K context. For large codebases, this suffix is the entire reason to switch.

Windows PowerShell users — use $env:VARIABLE_NAME = "value" instead of export.

Step 3: Reload and Verify

Source your shell profile (source ~/.zshrc), navigate to your project directory, and run claude. Type /status inside Claude Code to confirm the base URL points to DeepSeek and the model shows deepseek-v4-pro[1m].
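Before launching claude, it can help to confirm that the variables from Step 2 are actually present in the current shell. The function below is a minimal sketch: `check_deepseek_env` is a hypothetical name, not part of Claude Code, and it only checks that each variable is exported and non-empty, not that the API key is valid.

```shell
# Hypothetical helper: report any Step 2 variable that is unset or empty.
# Returns 0 when everything is set, 1 otherwise.
check_deepseek_env() {
  missing=0
  for name in ANTHROPIC_BASE_URL ANTHROPIC_AUTH_TOKEN ANTHROPIC_MODEL \
              ANTHROPIC_DEFAULT_OPUS_MODEL ANTHROPIC_DEFAULT_SONNET_MODEL \
              ANTHROPIC_DEFAULT_HAIKU_MODEL CLAUDE_CODE_SUBAGENT_MODEL \
              CLAUDE_CODE_EFFORT_LEVEL; do
    # printenv prints the value of an exported variable, or nothing if unset.
    if [ -z "$(printenv "$name")" ]; then
      echo "missing: $name"
      missing=1
    fi
  done
  return "$missing"
}

if check_deepseek_env; then
  echo "all DeepSeek variables are set"
fi
```

If a name is reported as missing, the usual cause is that the profile was edited but not re-sourced in the current terminal.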

That's it. Your existing hooks, skills, and slash commands all work unchanged. MCP servers, agents, CLAUDE.md files — everything carries over.

What Each Variable Does

| Variable | Purpose |
|---|---|
| ANTHROPIC_BASE_URL | Routes all requests to DeepSeek's Anthropic-compatible endpoint |
| ANTHROPIC_AUTH_TOKEN | Your DeepSeek API key (replaces Anthropic key) |
| ANTHROPIC_MODEL | Primary model: V4-Pro with [1m] for full 1M context |
| ANTHROPIC_DEFAULT_HAIKU_MODEL | Routes lightweight Haiku calls to V4-Flash (cheapest option) |
| CLAUDE_CODE_SUBAGENT_MODEL | Sub-agents use V4-Flash, which saves the most money on parallel tasks |
| CLAUDE_CODE_EFFORT_LEVEL | Set to max for best quality results |

The Cost Math: $1,200/Year to $60/Year

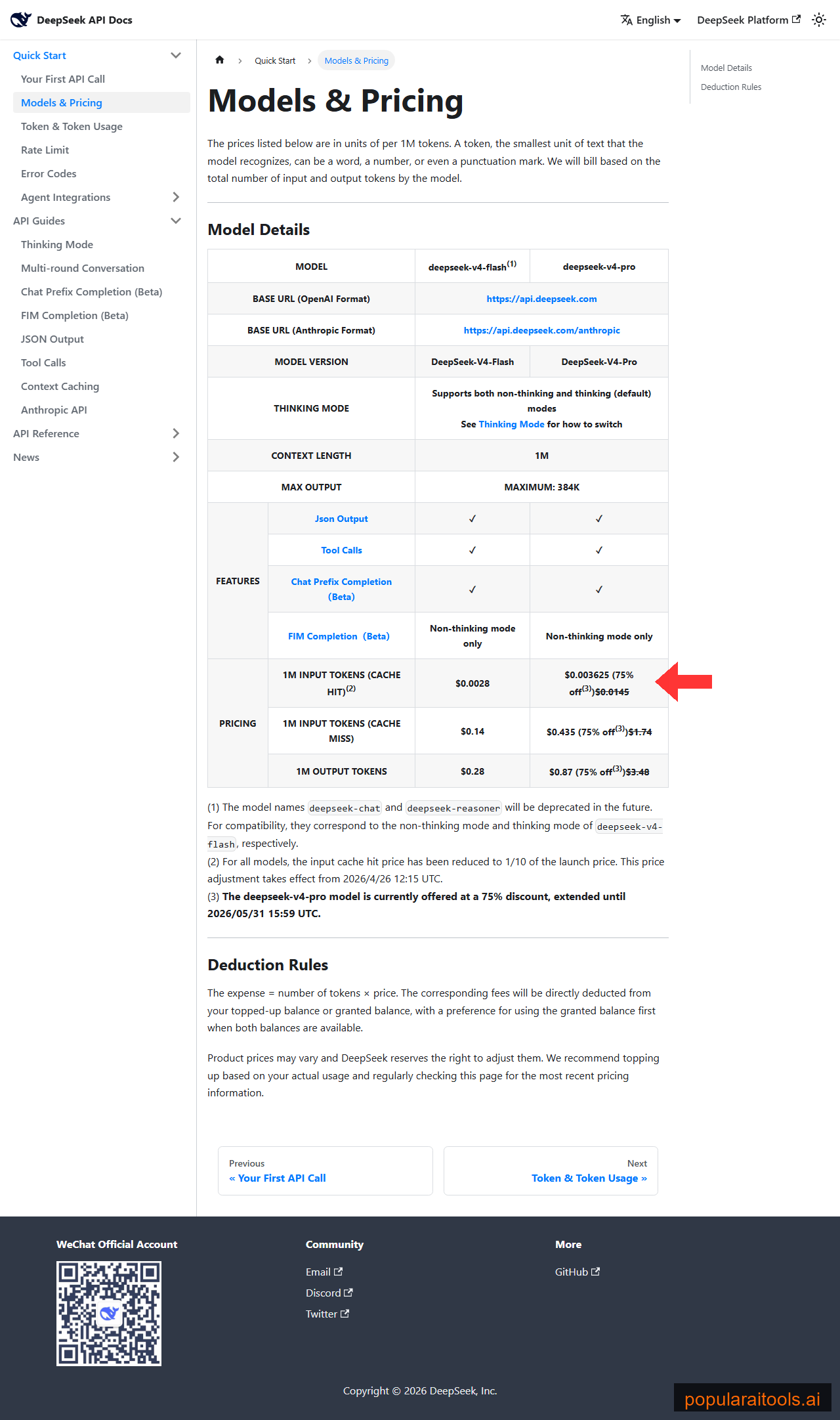

This is where it gets wild. Here's the per-million-token pricing side by side:

| Model | Input $/M | Output $/M | Cache Hit $/M |
|---|---|---|---|
| Claude Opus 4.6 | $15.00 | $75.00 | $1.50 |
| Claude Sonnet 4.6 | $3.00 | $15.00 | $0.30 |
| DeepSeek V4-Pro (promo) | $0.435 | $0.87 | $0.004 |
| DeepSeek V4-Flash | $0.14 | $0.28 | $0.003 |

Those aren't theoretical numbers. Real developers are reporting their actual spend:

- Antirez (Redis creator): "One dollar per hour" for intense agentic usage

- Tur24Tur: $6.84 for an entire day — 412 tool calls across security challenges and Android analysis

- koffuxu: $1 during promo pricing for Android AOSP-scale code analysis; projected ~$5 at full price

- One developer's real test project: $3.87 total — the same work estimated at $20-40 on Claude Opus
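To turn the per-million-token prices in the table above into a rough session cost, multiply each token count by its rate. The sketch below does that with awk. `session_cost` is a hypothetical helper, the token counts in the usage lines are made-up illustration values rather than measurements, and cache-hit pricing is ignored for simplicity.

```shell
# Hypothetical helper: estimated cost in dollars for one session.
# Arguments: input tokens, output tokens, input $/M, output $/M.
session_cost() {
  awk -v in_tok="$1" -v out_tok="$2" -v in_rate="$3" -v out_rate="$4" \
    'BEGIN { printf "%.2f\n", (in_tok * in_rate + out_tok * out_rate) / 1000000 }'
}

# Illustrative workload (assumed): 10M input tokens and 1M output tokens.
session_cost 10000000 1000000 15.00 75.00   # Claude Opus 4.6
session_cost 10000000 1000000 0.435 0.87    # DeepSeek V4-Pro (promo)
session_cost 10000000 1000000 0.14 0.28     # DeepSeek V4-Flash
```

With these illustrative counts the three lines print 225.00, 5.22, and 1.68. Real sessions with heavy cache reuse would come in lower on every model.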

The LLM cost calculator on this site tracks 200+ models in real time — check it for the latest pricing if the promo window has shifted by the time you read this.

Performance: How Good Is It Really?

The headline benchmark is SWE-bench Verified: V4-Pro scores 80.6% versus Claude Opus 4.6's 80.8%. That 0.2 percentage point gap is statistically meaningless — for standard coding tasks, they're functionally equivalent.

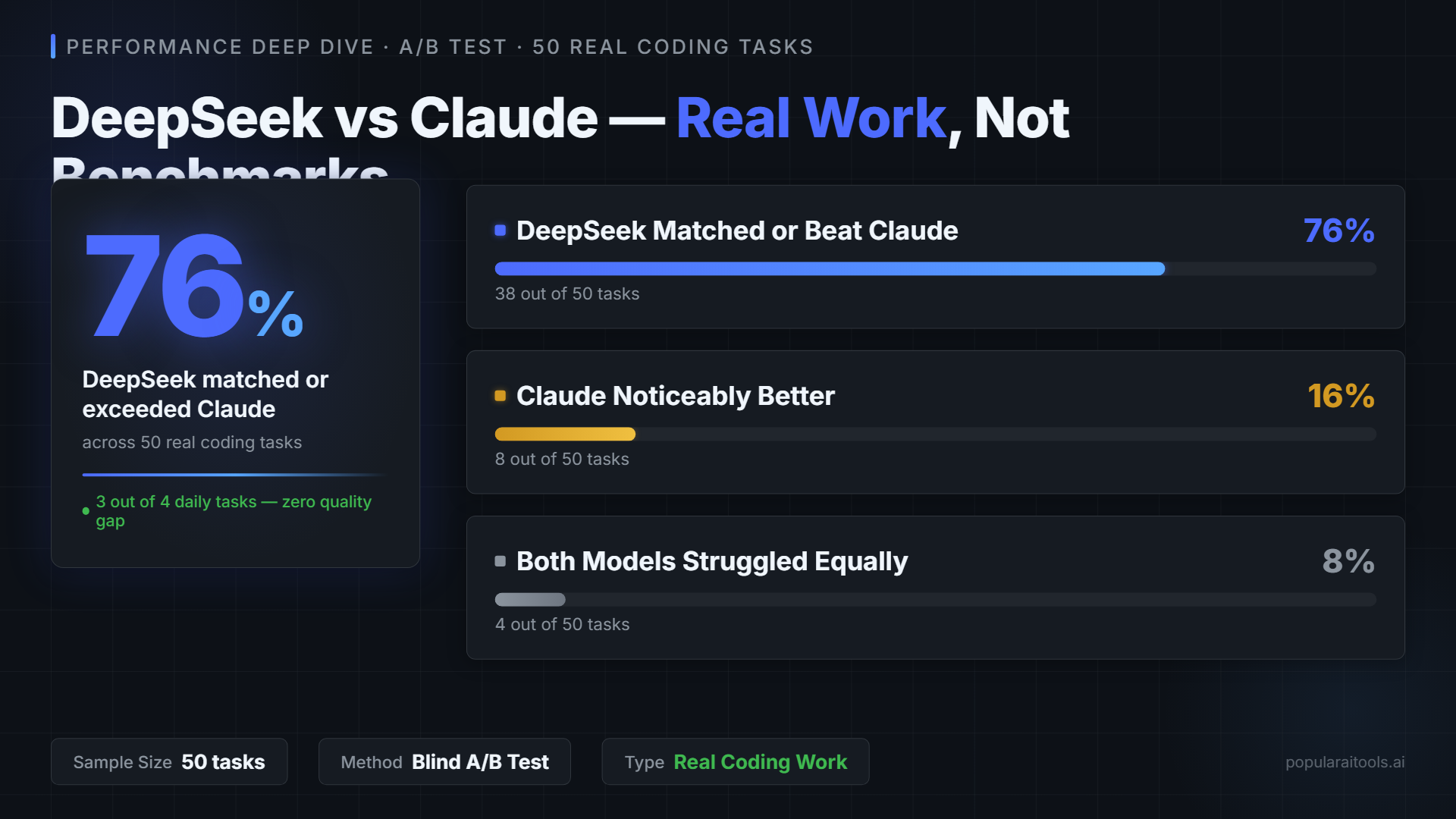

But benchmarks don't tell the full story. One developer ran an A/B test across 50 real coding tasks and sorted the outcomes into three groups:

- DeepSeek matched or exceeded Claude

- Claude noticeably better

- Both struggled equally

Where V4-Pro wins: Long-context monorepo refactors, agentic loops with 50+ tool calls, well-represented languages (Python, TypeScript, Go, Rust), and any cost-sensitive workload where you'd otherwise hit your Claude rate limit.

Where Claude remains stronger: Domain-specific or proprietary APIs (V4-Pro has been caught fabricating nonexistent API calls), safety-critical workflows, extended coherence beyond ~500K tokens, and tasks requiring nuanced "infer my intent" behavior. On the harder SWE-bench Pro benchmark, the gap widens — Claude Opus scores 64.3% versus V4-Pro's 55.4%.

Known Limitations and Gotchas

This is where honest reviews matter. V4 has real limitations you should know about before committing:

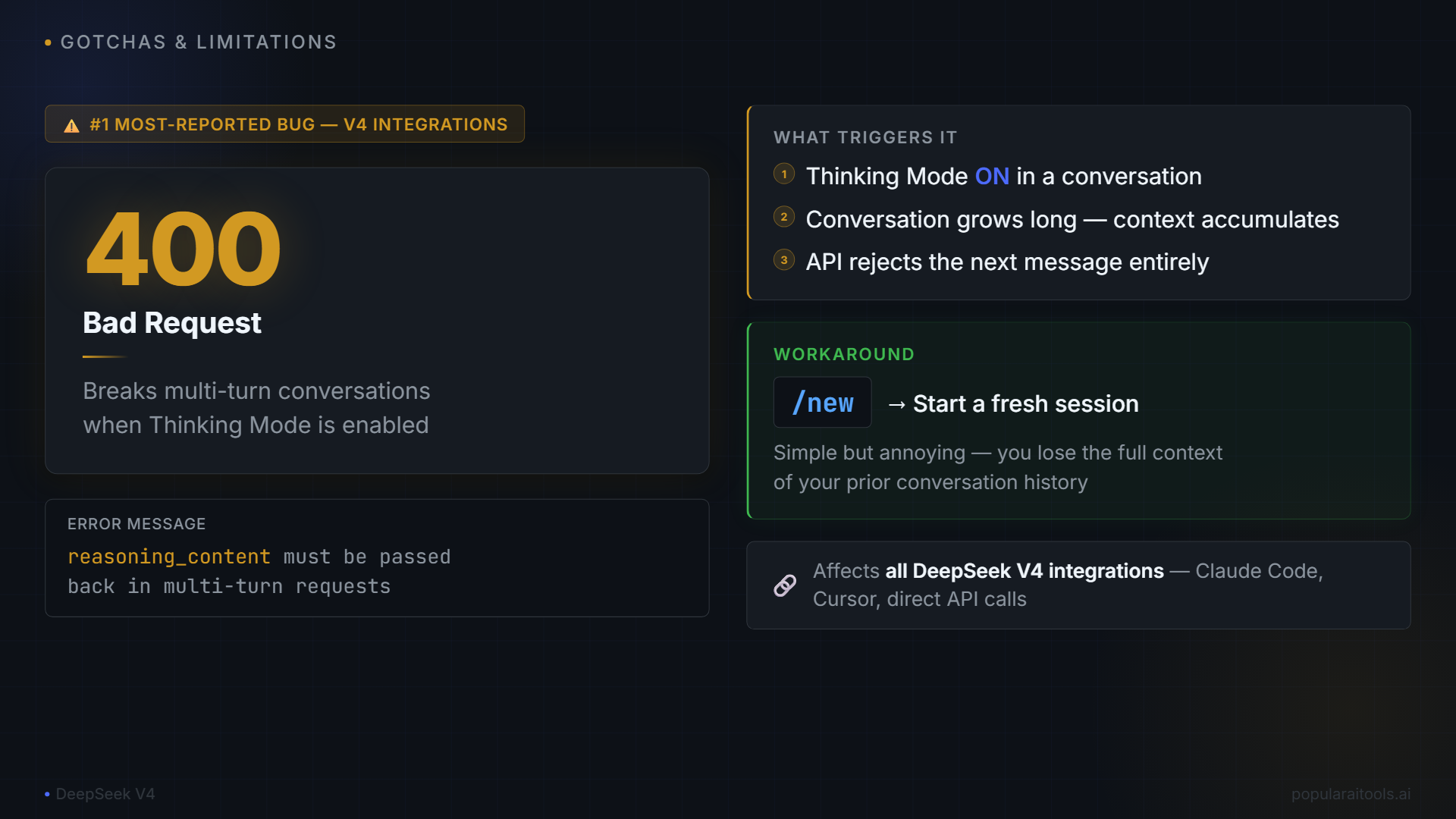

The reasoning_content 400 Error

The most-reported bug across all V4 integrations. When thinking mode is enabled, multi-turn conversations can trigger a 400 Bad Request: "reasoning_content must be passed back" error. Workaround: use /new to start fresh sessions if you hit this in long conversations.

Hallucination on Unknown APIs

V4-Pro scored a 94% hallucination rate on the AA-Omniscience benchmark — when it doesn't know an answer, it almost always makes one up rather than admitting uncertainty. In practice, this means it will confidently generate calls to APIs that don't exist. Double-check unfamiliar API calls.

No Image Input

V4 is text-only at launch. If your Claude Code workflow relies on screenshots, mockups, or vision-based UI validation, you'll need to keep a Claude session available for those tasks.

Higher Latency from Outside Asia

DeepSeek's servers are in China. Expect 200-400ms additional latency compared to Anthropic's US/EU infrastructure. Noticeable but rarely a dealbreaker for development work.

Prompt Sensitivity

V4 follows instructions more literally than Claude. Prompts that rely on Claude's "infer my intent" behavior may need to be made more explicit. System prompts in your CLAUDE.md might need minor tuning.

V4-Pro vs V4-Flash: When to Use Which

The environment variable setup above routes the primary agent to V4-Pro and sub-agents to V4-Flash. But you might want to adjust this based on your work:

| Task Type | V4-Pro | V4-Flash |
|---|---|---|
| Complex architecture decisions | ✓ | |
| Multi-file refactoring | ✓ | |
| Simple code generation | | ✓ |
| Writing tests | | ✓ |
| Documentation generation | | ✓ |
| Debugging race conditions | ✓ | |
| Sub-agent file searches | | ✓ |
| Large codebase analysis | ✓ | |

A good rule of thumb: if the task requires reasoning about relationships between multiple files, use Pro. If it's generating or transforming content within a single file, Flash is more than enough — and 3x cheaper.
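The rule of thumb above can be written as a tiny routing function. This is a sketch only: `pick_model` is a hypothetical helper, and using the number of files a task touches as the deciding signal is an assumption made here to approximate "reasoning about relationships between multiple files".

```shell
# Hypothetical helper: choose a model ID from the number of files a task touches.
pick_model() {
  if [ "$1" -gt 1 ]; then
    echo "deepseek-v4-pro"     # cross-file reasoning
  else
    echo "deepseek-v4-flash"   # single-file generation or transformation
  fi
}

pick_model 4   # prints deepseek-v4-pro
pick_model 1   # prints deepseek-v4-flash
```

In practice the file count is a crude proxy. A single-file race condition, for example, may still be worth sending to Pro.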

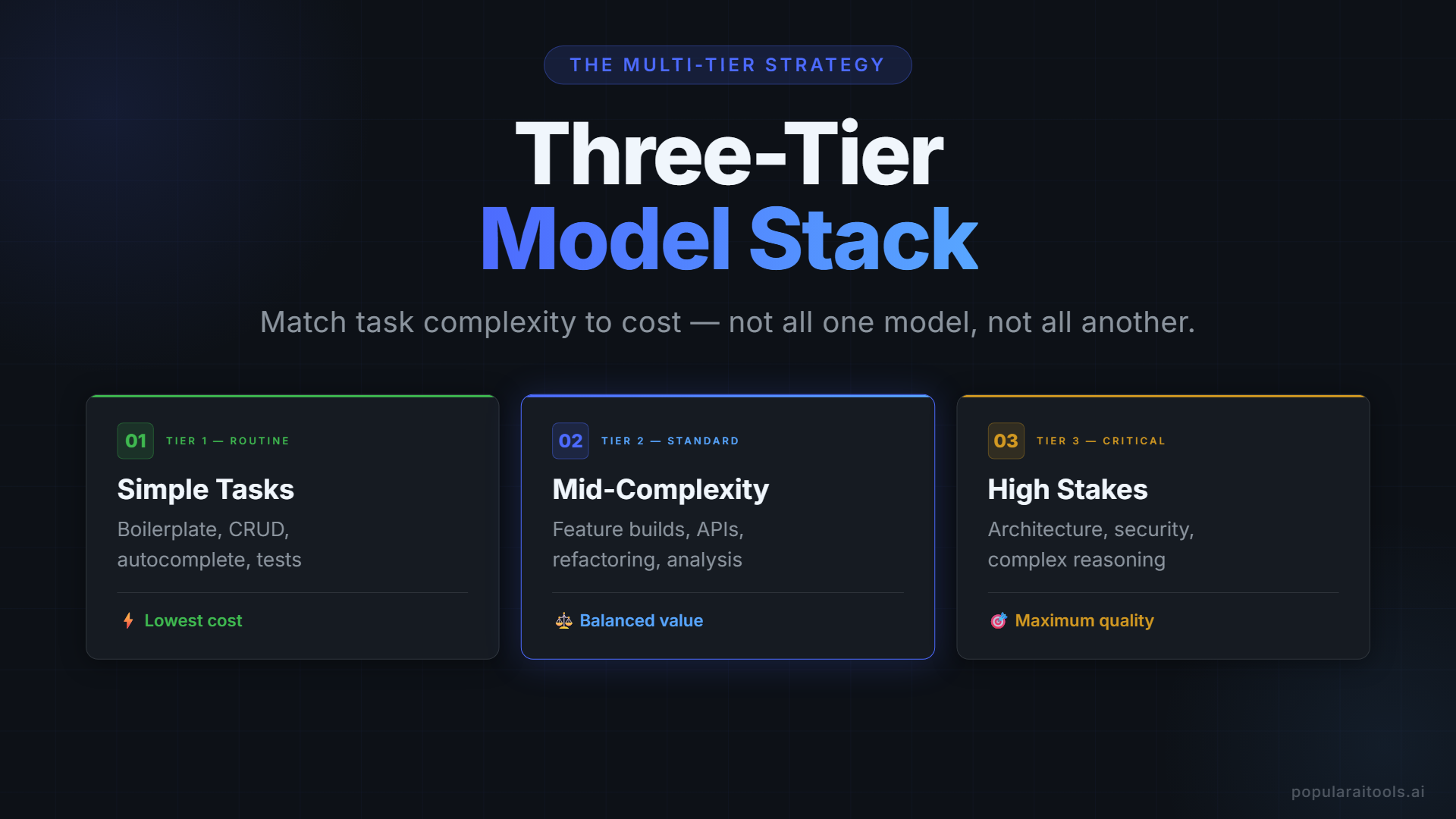

The Multi-Tier Strategy (Best of Both Worlds)

The smartest approach isn't all-DeepSeek or all-Claude. It's a tiered model stack where you match the model to the task complexity. Here's what experienced developers are converging on:

Tier 1 — DeepSeek Flash

- Code generation

- Simple refactoring

- Test writing

- Documentation

Tier 2 — DeepSeek Pro

- Architecture decisions

- Complex debugging

- Multi-file refactors

- Large codebase analysis

Tier 3 — Claude Opus

- Production-critical code

- Novel problem solving

- Safety-dependent workflows

- Proprietary API integrations

Estimated monthly savings: 70-80%. You can use dual terminal windows to keep both DeepSeek and Claude sessions running simultaneously — switching between them takes seconds. An 8-hour autonomous agent run that costs $50-200 on Opus drops to $1.50-6 on V4-Pro during the promo period.
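One way to make the two-terminal setup less error-prone is a pair of shell functions that switch the current terminal between backends. The sketch below is an assumption-laden illustration: `use_deepseek` and `use_claude` are hypothetical names, `DEEPSEEK_API_KEY` is an assumed variable holding your key, and clearing the overrides is expected (not guaranteed here) to return Claude Code to its normal Anthropic configuration.

```shell
# Hypothetical helper: point this terminal's Claude Code sessions at DeepSeek.
use_deepseek() {
  export ANTHROPIC_BASE_URL="https://api.deepseek.com/anthropic"
  export ANTHROPIC_AUTH_TOKEN="$DEEPSEEK_API_KEY"   # assumed variable name
  export ANTHROPIC_MODEL="deepseek-v4-pro[1m]"
  export ANTHROPIC_DEFAULT_OPUS_MODEL="deepseek-v4-pro[1m]"
  export ANTHROPIC_DEFAULT_SONNET_MODEL="deepseek-v4-pro"
  export ANTHROPIC_DEFAULT_HAIKU_MODEL="deepseek-v4-flash"
  export CLAUDE_CODE_SUBAGENT_MODEL="deepseek-v4-flash"
  export CLAUDE_CODE_EFFORT_LEVEL="max"
}

# Hypothetical helper: remove the overrides set by use_deepseek.
use_claude() {
  unset ANTHROPIC_BASE_URL ANTHROPIC_AUTH_TOKEN ANTHROPIC_MODEL \
        ANTHROPIC_DEFAULT_OPUS_MODEL ANTHROPIC_DEFAULT_SONNET_MODEL \
        ANTHROPIC_DEFAULT_HAIKU_MODEL CLAUDE_CODE_SUBAGENT_MODEL \
        CLAUDE_CODE_EFFORT_LEVEL
}
```

Run `use_deepseek` in one terminal and leave the other untouched, and each window keeps its own backend because exported variables are per-shell.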

For developers who want even more options, check out our full comparison of AI coding tools including Claude Code workflow patterns and how to set up 76+ AI agents for development.


Frequently Asked Questions

DeepSeek exposes an Anthropic-compatible endpoint at api.deepseek.com/anthropic. Set 7-8 environment variables and Claude Code routes all requests through DeepSeek without any code changes. Your hooks, skills, slash commands, and MCP servers all continue working.

Appending [1m] to the model name (e.g., deepseek-v4-pro[1m]) activates the full 1 million token context window. Without it, the model defaults to 200K context. This is the single most important detail in the setup: miss it and you lose the primary advantage for large codebases.

Final Verdict

DeepSeek V4's Claude Code integration isn't a compromise. It's a strategic choice that makes economic sense for the majority of coding work. The 0.2-point SWE-bench Verified gap is invisible in practice. The roughly 100x reduction in input-token price is not. If you're paying $100+/month for Claude Max and doing mostly standard development work (feature building, refactoring, test writing, debugging), you're overpaying.

The smart play is the multi-tier approach: run DeepSeek V4-Flash for everyday tasks, V4-Pro for complex work, and keep Claude Opus on standby for the 16% of cases where it genuinely outperforms. Your monthly AI coding bill drops from triple digits to single digits, and the quality difference is unnoticeable for most of it.

The 5-minute setup time makes this a no-brainer to at least try. Open a second terminal, set the environment variables, and run your next task on DeepSeek. You'll know within 10 minutes whether it works for your workflow. Given the promo pricing through May 31, there's never been a cheaper time to find out.
