TL;DR — Kubbi Review

Kubbi solves a problem I did not know I had until I started building multi-agent workflows: how do you pass sensitive data between agents without embedding it inline? The answer is a temporary, encrypted claim link with TTL and burn policies. Create a kubbi, hand over the URL, and let the next agent or human claim the payload whenever they are ready. Python and TypeScript SDKs are solid, MCP integration works out of the box, and the free tier is generous enough for prototyping. At $19/month for Pro, it is one of the cheapest infrastructure pieces in any agent stack.

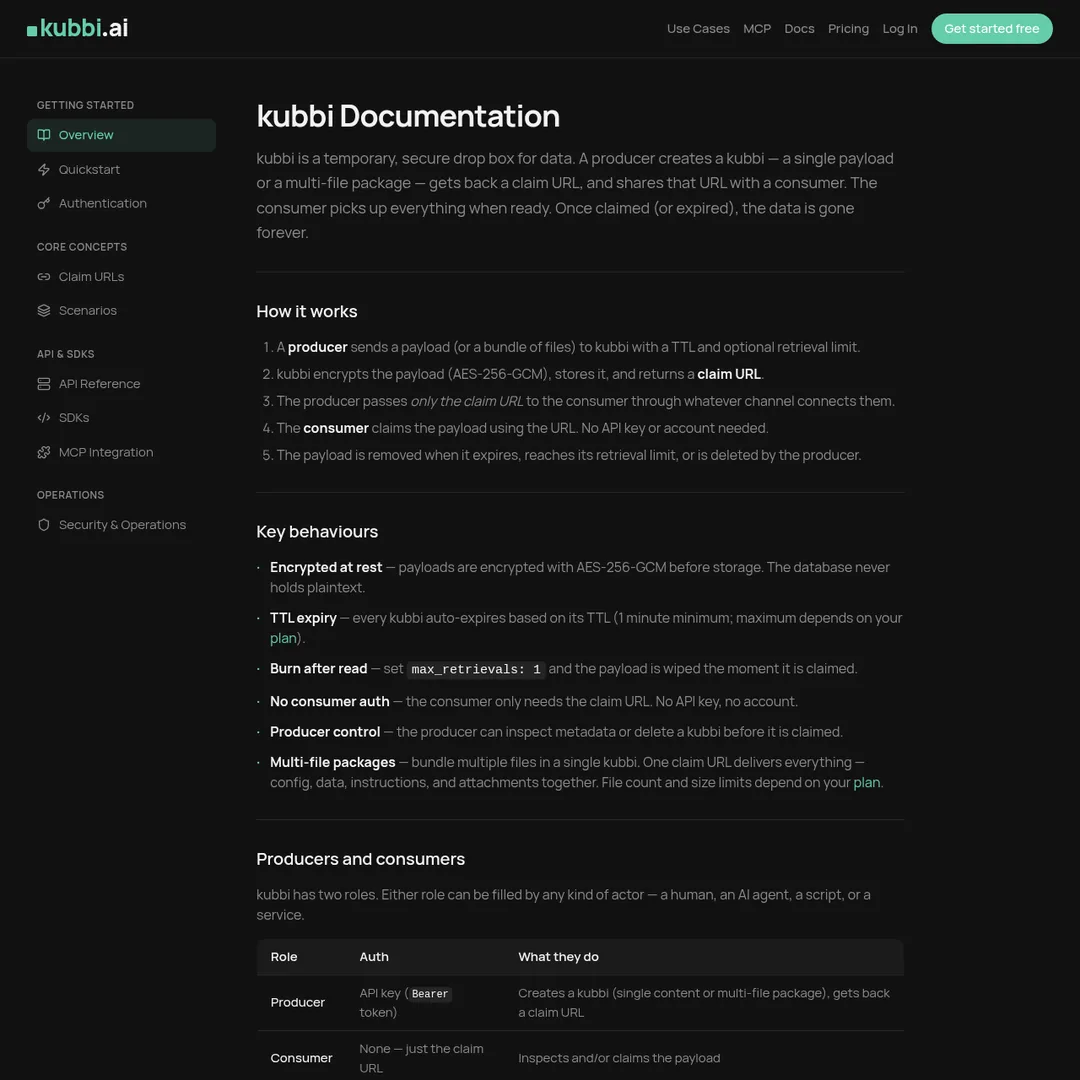

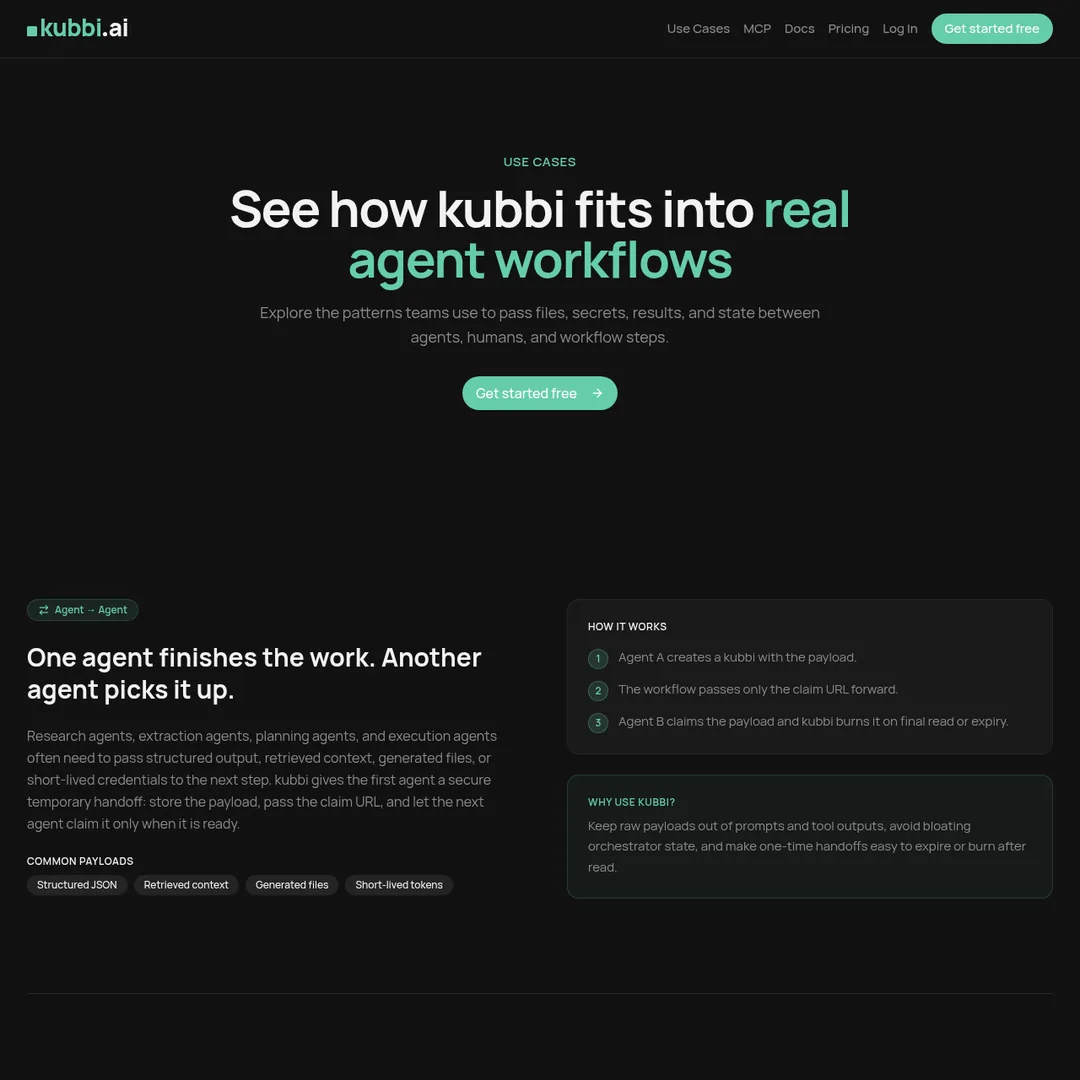

What is Kubbi?

Kubbi is a secure handoff layer purpose-built for AI agent workflows. If you have ever built a multi-step agent pipeline and wondered where to stash the API key, config file, or intermediate dataset between steps — without embedding it directly in the message payload — Kubbi is the answer.

The concept is straightforward. A producer creates a "kubbi" — a temporary encrypted container — with a payload, a TTL (time-to-live), and an optional burn-after-read policy. The workflow only carries the claim URL. The consumer picks up the payload on their own schedule. After the TTL expires or the retrieval limit is hit, the kubbi self-destructs.

Think of it as a dead drop for AI agents. The payload stays in Kubbi. Only the claim URL travels through your MCP channels, A2A messages, queues, webhooks, or task systems. This is not just a nice-to-have — it is a fundamentally cleaner pattern for handling sensitive or large payloads in agent architectures.
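To make that lifecycle concrete, here is a minimal in-memory sketch of the dead-drop pattern. It is not Kubbi's SDK or server: the `DeadDrop` class, its method names, and the `https://kubbi.example/c/` URL prefix are hypothetical, and encryption is left out entirely. It only models create, claim, TTL expiry, and a retrieval limit, with the workflow message carrying nothing but the claim URL.

```python
from __future__ import annotations

import secrets
import time
from dataclasses import dataclass


@dataclass
class _Drop:
    payload: bytes
    expires_at: float  # monotonic-clock deadline
    reads_left: int


class DeadDrop:
    """Hypothetical in-memory claim-link store; no encryption, not Kubbi's API."""

    def __init__(self, base_url: str = "https://kubbi.example/c/") -> None:
        self._base_url = base_url
        self._drops: dict[str, _Drop] = {}

    def create(self, payload: bytes, ttl_seconds: float, max_reads: int = 1) -> str:
        """Store the payload and return the claim URL, the only thing that travels."""
        token = secrets.token_urlsafe(24)  # unguessable identifier
        self._drops[token] = _Drop(payload, time.monotonic() + ttl_seconds, max_reads)
        return self._base_url + token

    def claim(self, claim_url: str) -> bytes | None:
        """Return the payload, or None if the drop is unknown, expired, or burned."""
        token = claim_url.removeprefix(self._base_url)
        drop = self._drops.get(token)
        if drop is None:
            return None
        if time.monotonic() >= drop.expires_at:
            del self._drops[token]  # TTL elapsed: destroy without returning data
            return None
        drop.reads_left -= 1
        if drop.reads_left <= 0:
            del self._drops[token]  # retrieval limit reached: burn after this read
        return drop.payload


# Producer side: the workflow message carries only the claim URL.
store = DeadDrop()
message = {"task": "deploy", "claim_url": store.create(b"API_KEY=abc123", ttl_seconds=7200)}

# Consumer side: claim once; a second claim finds the drop burned.
print(store.claim(message["claim_url"]))  # b'API_KEY=abc123'
print(store.claim(message["claim_url"]))  # None
```

A real service would also encrypt the stored payload and persist drops outside process memory; the sketch keeps only the control flow.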

Kubbi ships with first-class SDKs for Python and TypeScript, native MCP tool support, and a clean REST API. You can get a handoff working in three lines of Python.

Key Features

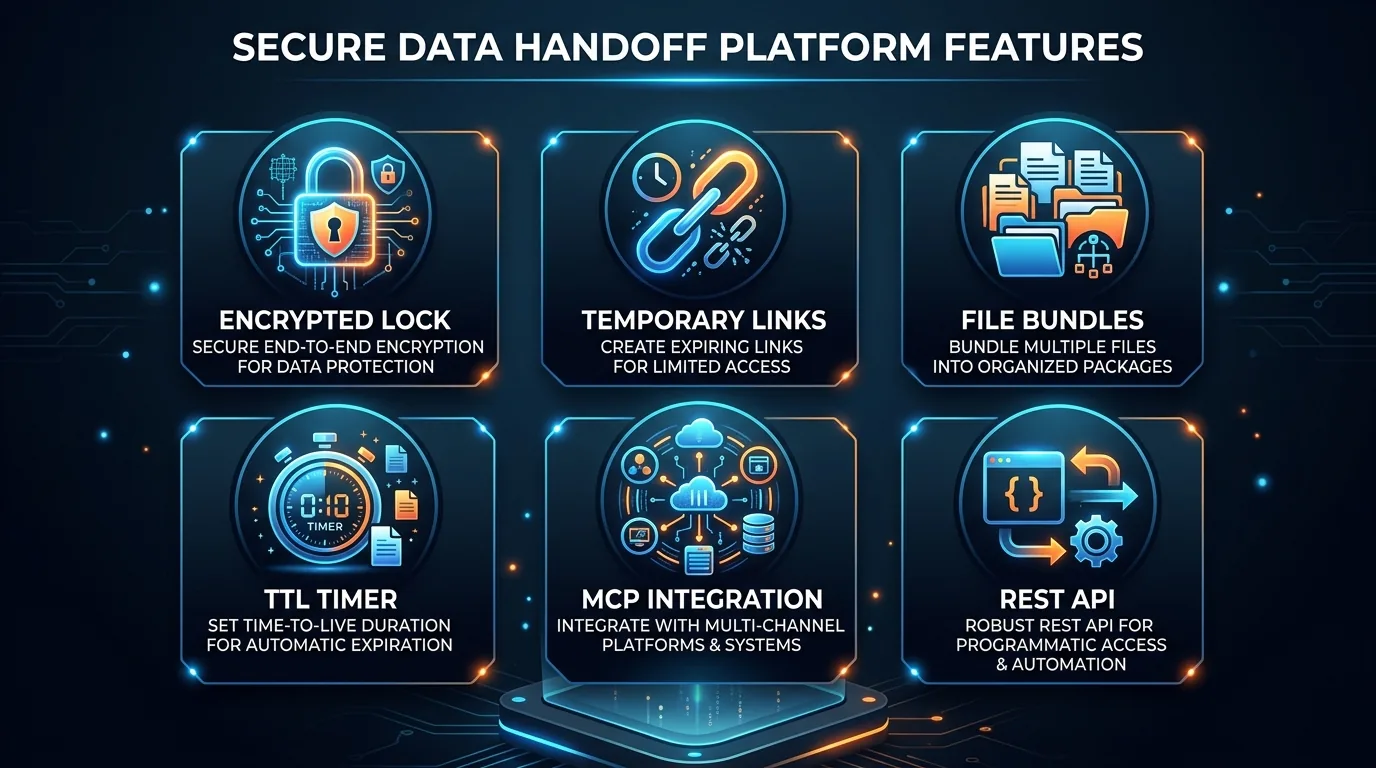

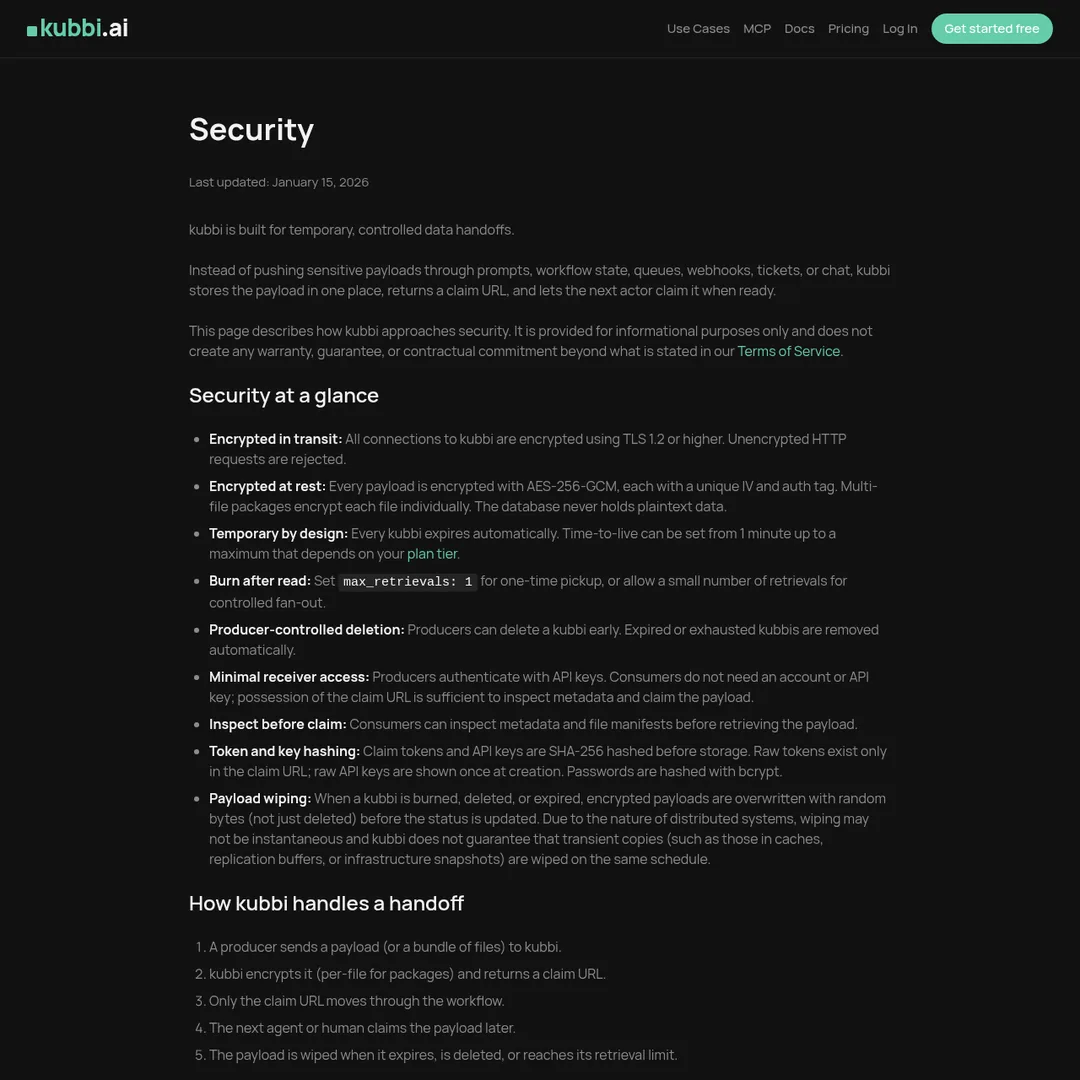

1. Temporary Encrypted Claim Links

Every kubbi generates a unique claim URL with configurable TTL (up to 2 hours free, 2 days on Pro). The payload is encrypted at rest, and the link burns after the retrieval limit is reached. This is the core primitive that makes Kubbi work — you never pass the actual data through your workflow channels.

2. Multi-File Bundles

Bundle up to 5 files in a single kubbi (3 on free). Config, data, instructions, and attachments travel together as one claim link. This eliminates the clunky pattern of sending multiple separate payloads and hoping the consumer collects them all.
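A sketch of how a producer might pack several files into one handoff payload before creating a kubbi. The 3-file (free) and 5-file (Pro) caps come from this review; the JSON-plus-base64 bundle format and the `bundle_files` / `unbundle_files` helpers are assumptions for illustration, not Kubbi's actual wire format.

```python
import base64
import json

# File-count caps per plan, as reported in this review.
MAX_FILES = {"free": 3, "pro": 5}


def bundle_files(files: dict[str, bytes], plan: str = "free") -> bytes:
    """Pack named files into a single JSON payload (hypothetical format)."""
    limit = MAX_FILES[plan]
    if len(files) > limit:
        raise ValueError(f"{plan} plan allows at most {limit} files, got {len(files)}")
    manifest = {
        name: base64.b64encode(data).decode("ascii")
        for name, data in sorted(files.items())
    }
    return json.dumps({"files": manifest}).encode("utf-8")


def unbundle_files(payload: bytes) -> dict[str, bytes]:
    """Inverse of bundle_files: recover the original file contents by name."""
    manifest = json.loads(payload)["files"]
    return {name: base64.b64decode(b64) for name, b64 in manifest.items()}


payload = bundle_files({"config.json": b'{"retries": 3}', "instructions.md": b"# Step 2"})
print(sorted(unbundle_files(payload)))  # ['config.json', 'instructions.md']
```

Base64 inflates binary data by roughly a third, which matters when the whole bundle has to fit under a small payload cap.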

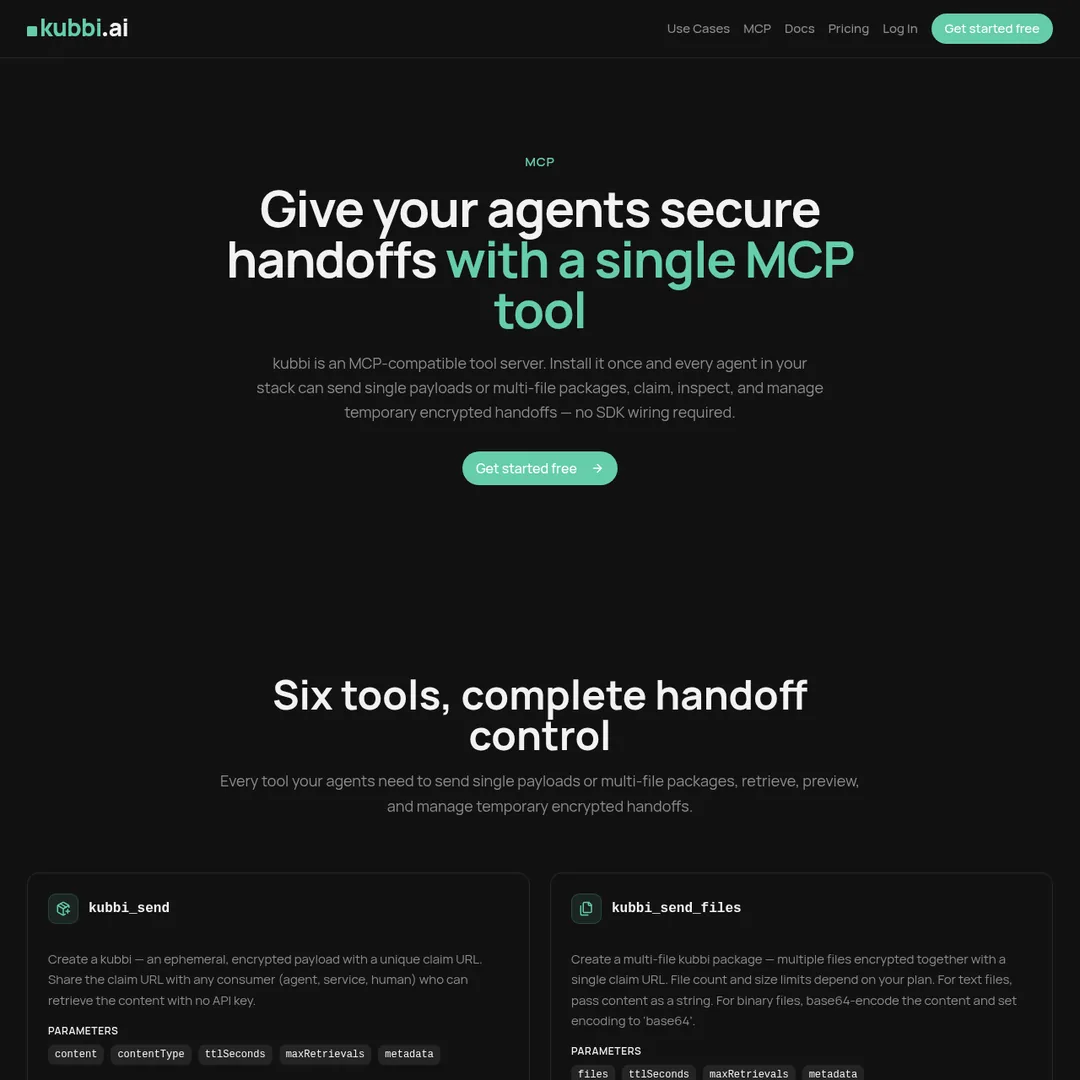

3. Native MCP Integration

Expose Kubbi as a tool inside any MCP-compatible agent. Agents can create, claim, and send multi-file packages natively through the Model Context Protocol. This is huge for anyone building Claude, GPT, or Gemini agent pipelines.
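In MCP, a server advertises each tool with a `name`, a `description`, and a JSON Schema `inputSchema`. The sketch below shows what a create-handoff tool definition could look like. The tool name `kubbi_create` and its parameters (`payload`, `ttl_seconds`, `max_reads`) are hypothetical and not taken from Kubbi's own MCP server.

```python
import json

# Hypothetical MCP tool definition. The name/description/inputSchema keys follow
# the MCP tool listing format; the tool and its parameters are illustrative only.
KUBBI_CREATE_TOOL = {
    "name": "kubbi_create",
    "description": (
        "Store a payload in a temporary encrypted handoff and return a claim URL. "
        "Pass only the URL to the next agent."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "payload": {"type": "string", "description": "Text or JSON to hand off"},
            "ttl_seconds": {"type": "integer", "minimum": 1, "description": "Time to live"},
            "max_reads": {
                "type": "integer",
                "minimum": 1,
                "default": 1,
                "description": "Retrieval limit before the handoff burns",
            },
        },
        "required": ["payload", "ttl_seconds"],
    },
}

print(json.dumps(KUBBI_CREATE_TOOL, indent=2))
```

The agent sees only this schema; the tool's return value would be the claim URL, so the payload itself never lands in the model's context on the consumer side until it is explicitly claimed.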

4. Python & TypeScript SDKs

Python: pip install kubbi, async-ready with full type hints. TypeScript: npm install @kubbi.ai/sdk, tree-shakable with zero dependencies, and it runs in Node, Deno, and edge runtimes. Both SDKs let you create a handoff in three lines of code.

5. Configurable Burn Policies

Set expiry time, read limits, and burn behavior at the kubbi level. A kubbi can self-destruct after one read, after five reads, or after the TTL expires — whichever comes first. This gives you fine-grained control over data exposure in your workflows.
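The "whichever comes first" rule reduces to a simple predicate. This is a sketch of the logic only; `BurnPolicy` and `is_burned` are hypothetical names, not part of Kubbi's SDKs.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class BurnPolicy:
    """Hypothetical model of a kubbi's expiry rules."""

    ttl_seconds: float
    max_reads: int | None = None  # None: only the TTL applies


def is_burned(policy: BurnPolicy, age_seconds: float, reads_so_far: int) -> bool:
    """True once the TTL or the read limit is hit, whichever comes first."""
    if age_seconds >= policy.ttl_seconds:
        return True
    return policy.max_reads is not None and reads_so_far >= policy.max_reads


burn_after_read = BurnPolicy(ttl_seconds=7200, max_reads=1)
five_reads = BurnPolicy(ttl_seconds=7200, max_reads=5)

print(is_burned(burn_after_read, age_seconds=60, reads_so_far=1))  # True: read limit first
print(is_burned(five_reads, age_seconds=60, reads_so_far=4))       # False: both limits remain
print(is_burned(five_reads, age_seconds=7200, reads_so_far=2))     # True: TTL first
```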

6. Clean REST API

A single POST to create, a POST to claim. Standard HTTP — no SDKs required. Works from any language or platform. If you are working in Go, Rust, or anything else the SDKs do not cover, the REST API has you covered.
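Since the API is plain HTTP, any standard client works. The sketch below uses Python's standard-library `urllib.request` to build the two POST requests without sending them. The base URL, the `/kubbis` path, the JSON field names, and the Bearer-token header are all placeholders; check Kubbi's API reference for the real endpoints and authentication scheme.

```python
import json
import urllib.request

# Placeholder base URL and path: Kubbi's real endpoints are not documented here.
API_BASE = "https://api.kubbi.example/v1"


def build_create_request(
    api_key: str, payload: str, ttl_seconds: int, max_reads: int = 1
) -> urllib.request.Request:
    """Build (but do not send) a POST that would create a handoff."""
    body = json.dumps(
        {"payload": payload, "ttl_seconds": ttl_seconds, "max_reads": max_reads}
    ).encode("utf-8")
    return urllib.request.Request(
        f"{API_BASE}/kubbis",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


def build_claim_request(claim_url: str) -> urllib.request.Request:
    """Build (but do not send) a POST that would claim a handoff by its URL."""
    return urllib.request.Request(claim_url, data=b"", method="POST")


# To actually send a request, pass it to urllib.request.urlopen(req).
req = build_create_request("sk_test_123", '{"db_password": "..."}', ttl_seconds=3600)
print(req.get_method(), req.full_url)  # POST https://api.kubbi.example/v1/kubbis
```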

How to Use Kubbi

1. Sign up free: head to kubbi.ai/sign-up. No credit card required, and you get 20 kubbis per day immediately.

2. Install the SDK: run pip install kubbi for Python or npm install @kubbi.ai/sdk for TypeScript. Both are production-ready with full type hints.

3. Create a kubbi: pass your payload (text, JSON, or a file bundle) and set a TTL and retrieval limit. You get back a claim URL.

4. Pass the claim URL: send only the URL through your workflow channel (MCP tool result, A2A message, queue, webhook, or task ticket).

5. Consumer claims it: the receiving agent or human hits the claim URL to retrieve the payload. The kubbi then expires or burns according to your policy.
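Step 3 is where most handoffs fail on a free account, so a client-side pre-check can save a round trip. The limits below are the ones reported in this review (16KB and a 2-hour TTL on free, 64KB and 2 days on Pro); treating KB as 1024 bytes is an assumption, and `check_handoff` is a hypothetical helper. The server remains the authority on what it accepts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanLimits:
    max_payload_bytes: int
    max_ttl_seconds: int


# Limits as reported in this review; KB is assumed to mean 1024 bytes.
PLANS = {
    "free": PlanLimits(max_payload_bytes=16 * 1024, max_ttl_seconds=2 * 3600),
    "pro": PlanLimits(max_payload_bytes=64 * 1024, max_ttl_seconds=2 * 24 * 3600),
}


def check_handoff(payload: bytes, ttl_seconds: int, max_reads: int, plan: str = "free") -> None:
    """Raise ValueError if a handoff would exceed the plan's reported limits."""
    limits = PLANS[plan]
    if len(payload) > limits.max_payload_bytes:
        raise ValueError(
            f"payload is {len(payload)} bytes; {plan} allows {limits.max_payload_bytes}"
        )
    if not 0 < ttl_seconds <= limits.max_ttl_seconds:
        raise ValueError(f"TTL must be 1..{limits.max_ttl_seconds} seconds on {plan}")
    if max_reads < 1:
        raise ValueError("retrieval limit must be at least 1")


check_handoff(b'{"api_key": "..."}', ttl_seconds=3600, max_reads=1)  # passes silently
```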

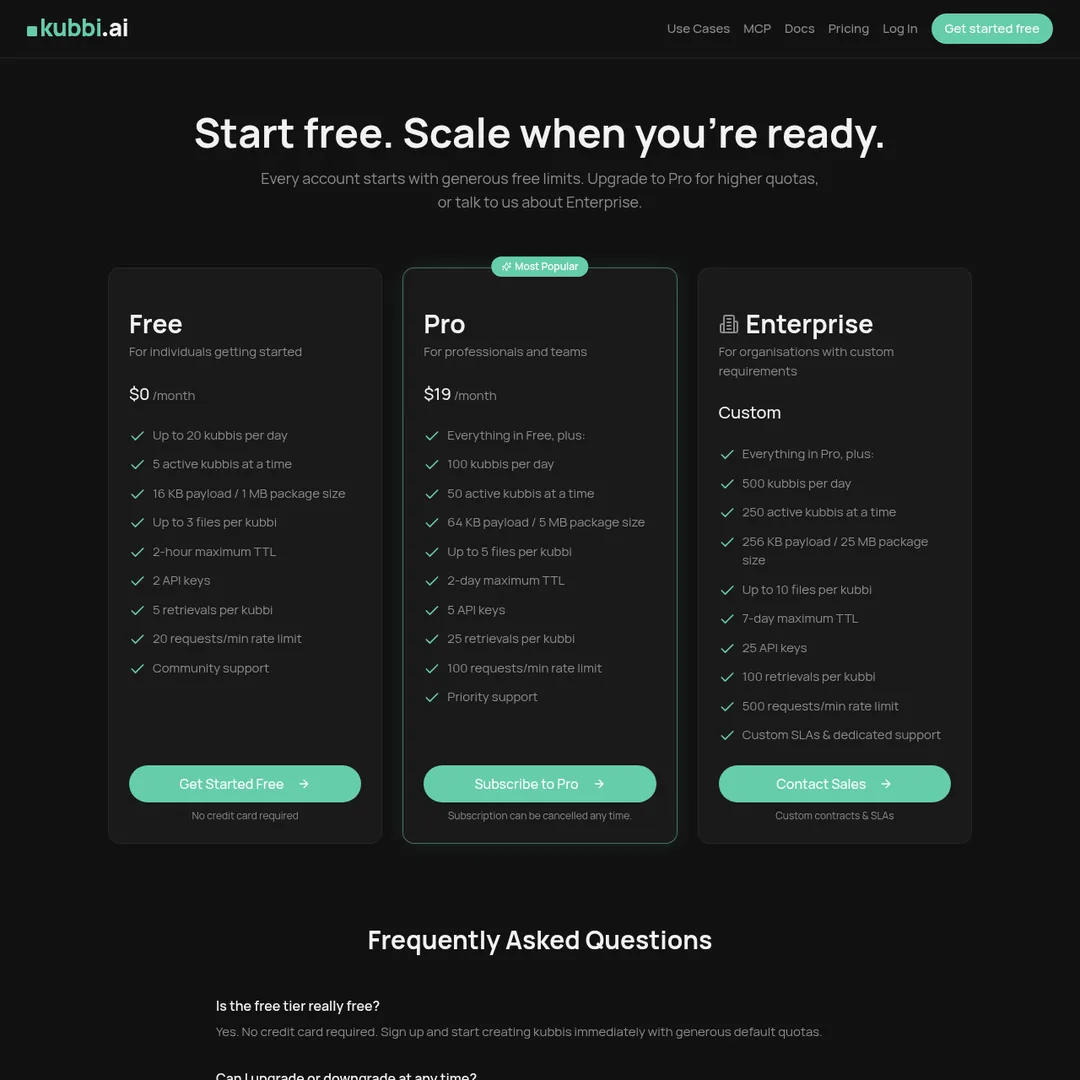

Pricing Plans

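The free tier is genuinely useful for prototyping and small projects: 20 kubbis per day, 5 active at a time, 16KB payloads, a 2-hour TTL, and up to 3 files per bundle. Pro costs $19/month, about what a weekly coffee habit runs, and raises the ceilings to 64KB payloads, a 2-day TTL, and 5-file bundles, which makes it viable for production agent systems.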

Pros and Cons

Pros

- ✓ Solves a real, specific problem in agent architectures

- ✓ Native MCP support is a genuine differentiator

- ✓ Python and TypeScript SDKs are well-built with full type hints

- ✓ Free tier is generous enough for real prototyping

- ✓ Clean REST API works from any language

- ✓ Burn policies and TTL give fine-grained security control

Cons

- ✗ Payload size limits are small (16KB free, 64KB Pro)

- ✗ 2-hour TTL on free tier may not suit async workflows

- ✗ No end-to-end encryption (payload encrypted at rest only)

- ✗ Relatively new — limited community and ecosystem

- ✗ No self-hosted option for air-gapped environments

Final Verdict

Kubbi is one of those tools that makes you wonder why it did not exist sooner. If you are building multi-agent workflows — especially with MCP — the pattern of passing only a claim URL instead of the actual payload is cleaner, more secure, and easier to debug than any alternative I have tried.

The payload size limits are the main constraint. If you need to pass large files (videos, datasets), you will still need S3 presigned URLs or similar. But for configs, secrets, API keys, small datasets, and instruction bundles, which in my experience cover the large majority of agent handoff use cases, Kubbi is the right tool.

At $19/month for Pro and a genuinely useful free tier, the pricing is a non-issue. I would rate Kubbi 4.3/5 — docking points for the size limits and the early-stage ecosystem, but giving full marks for the concept, execution, and developer experience.

Frequently Asked Questions

What is Kubbi?

Kubbi is a secure handoff layer for AI agents. It creates temporary, encrypted claim links that let agents pass files, secrets, and state between workflow steps without exposing payloads inline. You create a kubbi with a payload, set a TTL and burn policy, and share only the claim URL.

How does Kubbi work?

A producer creates a kubbi containing a payload or file bundle with a TTL and optional retrieval limit. The workflow carries only the claim URL through MCP, A2A, queues, or task systems. The consumer claims the payload on their own schedule. After claiming, the kubbi expires or burns according to its policy.

Is Kubbi free to use?

Yes. Kubbi has a generous free tier with up to 20 kubbis per day, 5 active at a time, 16KB payload size, 2-hour TTL, and community support. No credit card required. The Pro plan at $19/month unlocks higher quotas.

What languages does Kubbi support?

Kubbi has first-class SDKs for Python (pip install kubbi) and TypeScript (npm install @kubbi.ai/sdk). It also has a clean REST API that works from any language or platform with standard HTTP.

Does Kubbi support MCP?

Yes. Kubbi has native MCP tool support, allowing agents to create, claim, and send multi-file packages within any MCP-compatible agent environment. This is one of its key differentiators for AI agent workflows.

How secure is Kubbi?

Kubbi is designed with security as a core principle. Payloads are encrypted at rest (not end-to-end), links are temporary with configurable TTL and burn-after-read policies, and retrieval limits control how many times a kubbi can be claimed. The payload never travels through the workflow; only the claim URL does.

Can Kubbi handle multiple files?

Yes. A single kubbi can bundle up to 3 files on the free tier and up to 5 files on Pro. This lets you package config, data, instructions, and attachments into a single claim link.

What are alternatives to Kubbi?

Alternatives include using inline payloads in agent messages (insecure for sensitive data), cloud storage presigned URLs (more setup), HashiCorp Vault (complex, enterprise-focused), and custom webhook solutions. Kubbi is purpose-built for the agent handoff use case, making it simpler than any of these.
