AI Tools Are Getting More Expensive — Here's What's Really Happening

AI Infrastructure Lead

Key Takeaways

- GitHub Copilot paused all new Pro, Pro+, and Student signups on April 20, 2026 — agentic coding workflows were costing more than subscriptions brought in

- Anthropic briefly removed Claude Code from the $20/month Pro plan, then reversed after backlash — but tighter limits remain

- Both companies are shifting toward token-based billing, with Copilot moving to "AI Credits" on June 1

- Anthropic reportedly spends $8-$13.50 for every $1 it earns in revenue, a sign the VC subsidy era is ending

- This follows the exact Netflix/Uber/DoorDash playbook: subsidize growth, build dependency, raise prices

What's Actually Happening Right Now

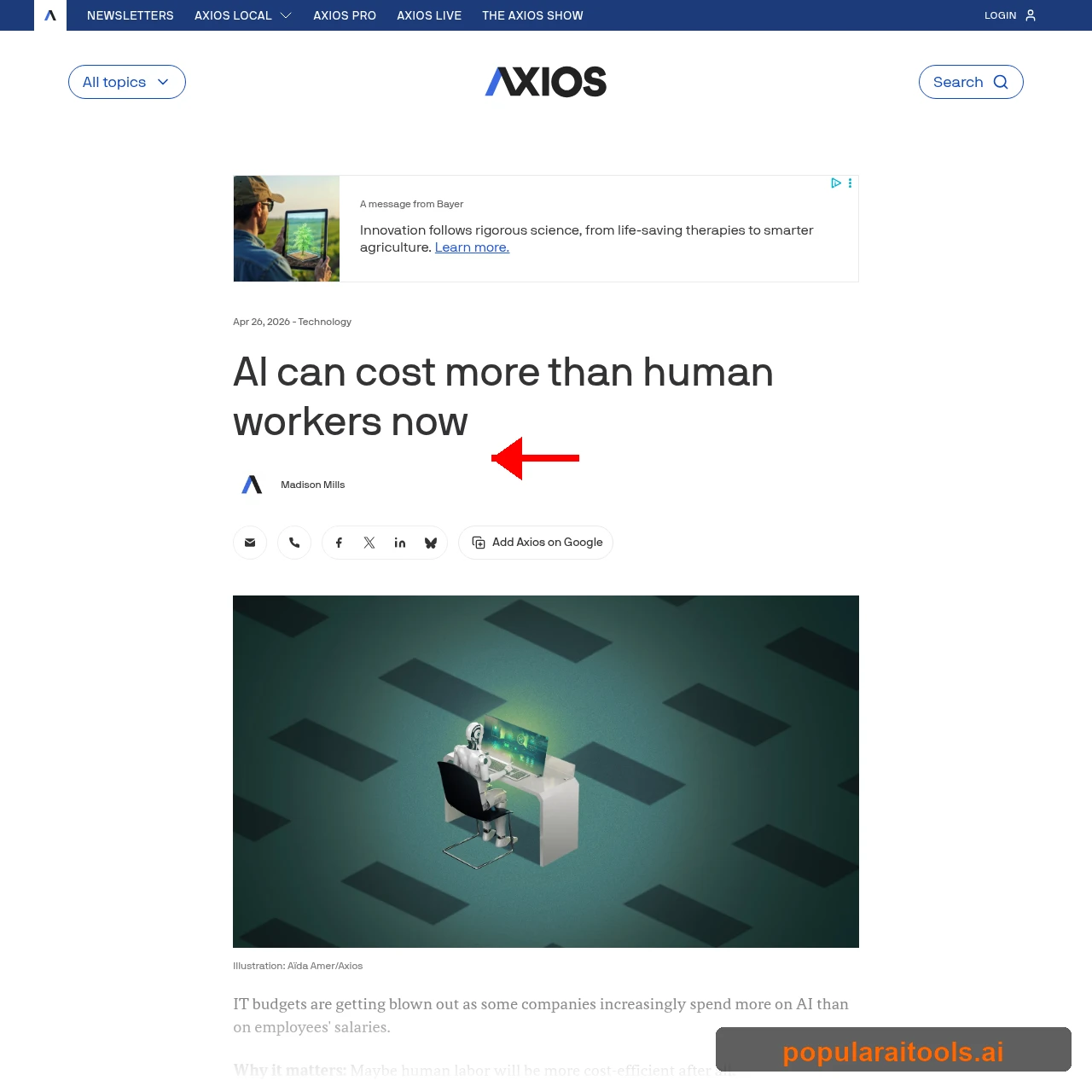

Something broke in AI pricing last week, and it happened fast. Within 48 hours of each other, GitHub froze new Copilot signups and Anthropic tried removing Claude Code from their cheapest plan. These aren't isolated incidents — they're the first visible cracks in a pricing model that was never meant to last.

We've been watching AI tool pricing closely since 2024, and what's happening now follows a pattern we've seen before in tech. The cheap era is ending. Not because the technology is getting worse — it's getting better, which is actually the problem. More powerful models cost more to run, and the companies subsidizing your usage are running out of patience (and VC cash).

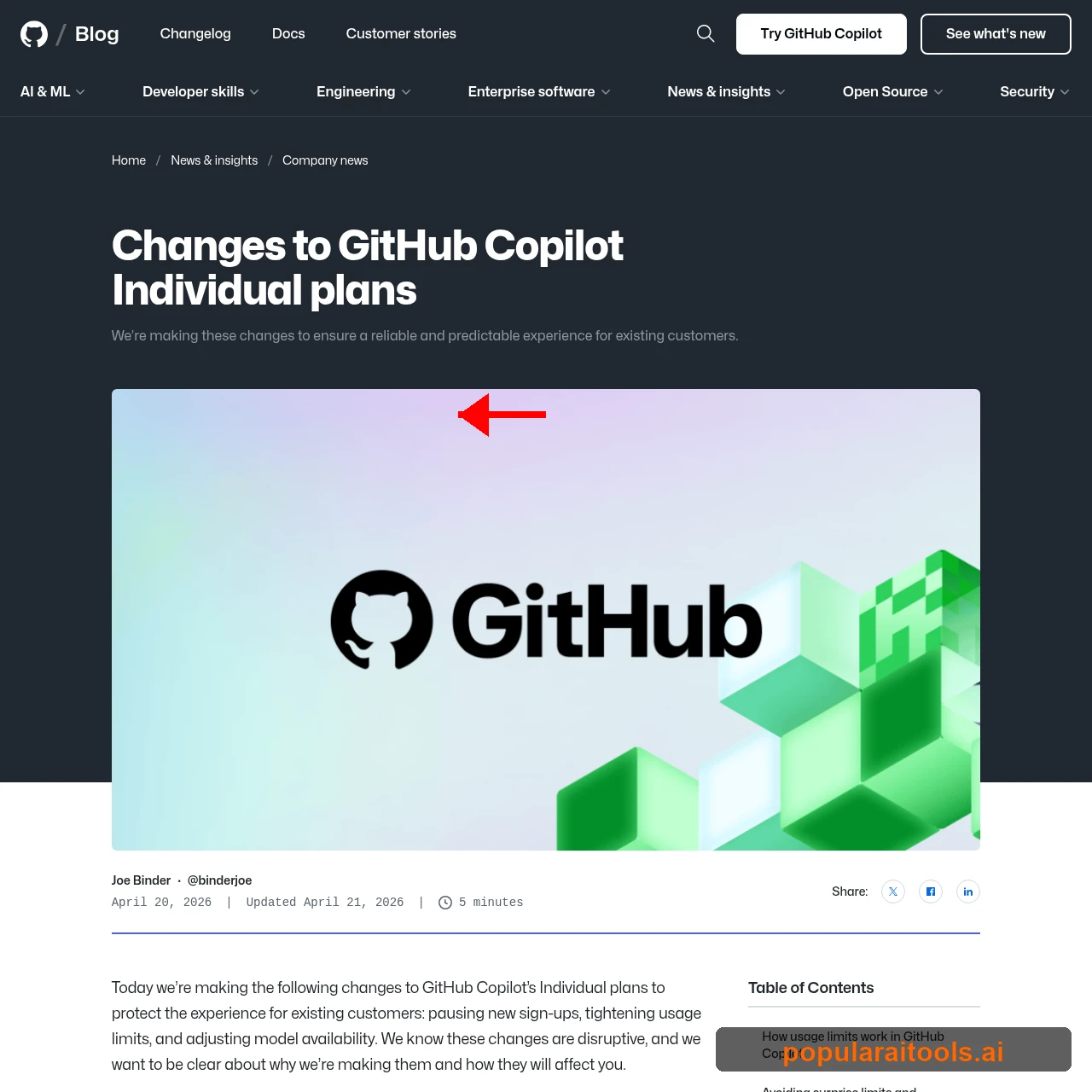

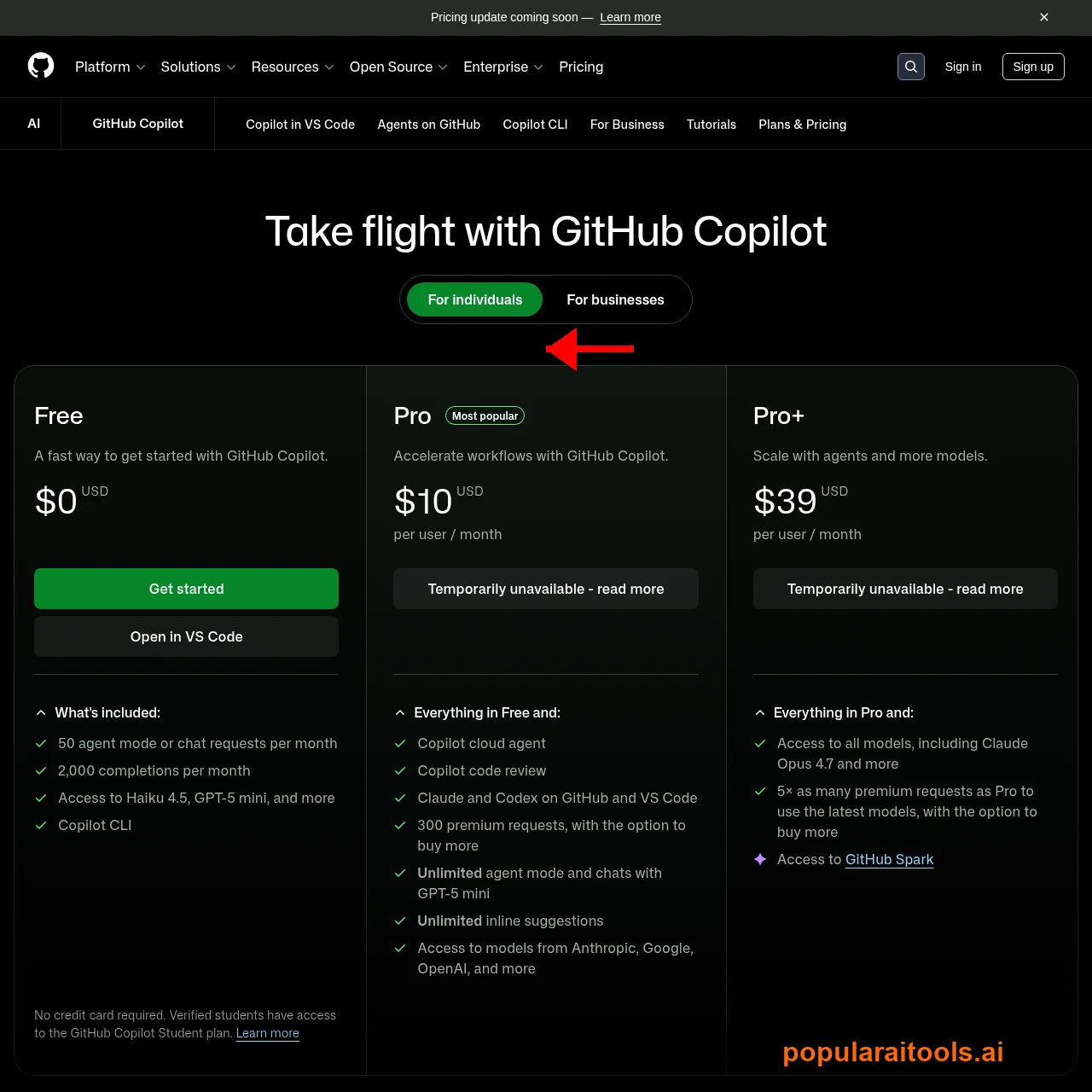

GitHub Copilot: Signups Frozen, Prices Rising

On April 20, 2026, GitHub VP of Product Joe Binder announced that new signups for Copilot Pro ($10/month), Pro+ ($39/month), and Student plans were being "paused." The free tier remains open, but if you want the real power — agentic coding, premium models, unlimited completions — the door is closed for now.

The reason? Agentic AI workflows — long-running, parallelized coding sessions — have "fundamentally changed" compute demands. In GitHub's own words: "It's now common for a handful of requests to incur costs that exceed the plan price." Read that again. A few coding sessions can cost GitHub more than your entire monthly subscription.
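Here's how a few requests can outrun a $10/month plan. The sketch below estimates the cost of one agentic session in which every turn re-sends the growing conversation as input. The prices ($3 per million input tokens, $15 per million output tokens) and the per-turn token counts are hypothetical assumptions. GitHub hasn't published its actual costs.

```python
# Back-of-the-envelope cost of one agentic coding session.
# ASSUMPTIONS (hypothetical, not GitHub's or any vendor's published figures):
#   - every turn re-sends the whole conversation so far as input
#   - the context grows by a fixed number of tokens per turn
#   - prices are dollars per million tokens

INPUT_PRICE_PER_M = 3.00    # assumed $ per 1M input tokens
OUTPUT_PRICE_PER_M = 15.00  # assumed $ per 1M output tokens (5x input)


def session_cost(turns: int, base_context: int, growth_per_turn: int,
                 output_per_turn: int) -> float:
    """Return the dollar cost of a session whose context grows every turn."""
    input_tokens = 0
    for turn in range(turns):
        # Each turn re-reads the base context plus everything added so far.
        input_tokens += base_context + turn * growth_per_turn
    output_tokens = turns * output_per_turn
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


if __name__ == "__main__":
    # Hypothetical session: 50 tool-calling turns, a 20k-token starting context
    # (repo files, instructions), 4k tokens added per turn, 1k tokens out per turn.
    cost = session_cost(turns=50, base_context=20_000,
                        growth_per_turn=4_000, output_per_turn=1_000)
    print(f"One session: ${cost:.2f}")
```

Under these assumptions, a single session costs $18.45, nearly double the Pro price. The re-read context grows with the sum of all earlier turns, so the growth term rises roughly quadratically as sessions get longer.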

The changes go beyond frozen signups:

- Opus models removed from Pro — Opus 4.7 now requires Pro+ ($39/month). Opus 4.5 and 4.6 are being removed from Pro+ entirely.

- Session and weekly usage limits — New caps on tokens consumed per session and per 7-day period, now displayed in VS Code.

- Token-based billing coming June 1 — Copilot will shift to "GitHub AI Credits" tied to actual token consumption, replacing Premium Request Units.

- Refund window until May 20 — Users unhappy with the limits can cancel and get a prorated refund before the deadline.

If you're already a Copilot Pro subscriber, you're safe for now. But the writing is on the wall: flat-rate AI subscriptions are going the way of unlimited data plans.

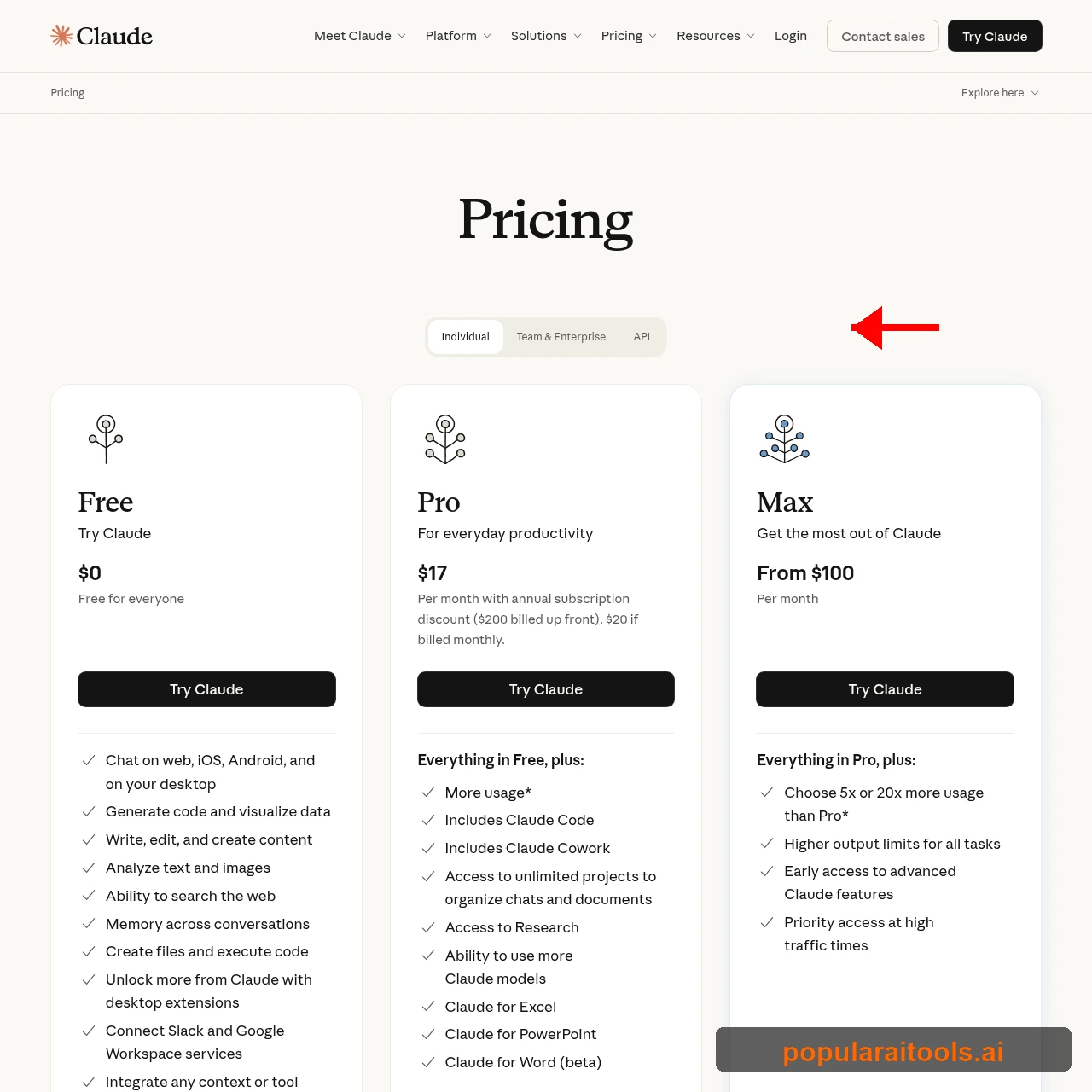

Claude Code: The $20 Plan That Costs Anthropic $260

The same week GitHub froze signups, Anthropic quietly updated their pricing page to show Claude Code as exclusive to the $100+ Max plan — effectively removing it from the $20/month Pro subscription. The backlash was immediate and fierce.

Within hours, Reddit and X erupted. Anthropic's Head of Growth Amol Avasare scrambled to clarify, calling it a "small test on approximately 2% of new prosumer signups." The pricing page was reverted, and Claude Code came back to Pro. But the damage was done — users saw exactly where this is heading.

The real story is in the numbers. According to reporting from Where's Your Ed At, Anthropic is spending $8 to $13.50 for every $1 in revenue. Their much-touted "$14-20 billion ARR" figure is marketing spin: actual cumulative revenue through March 2026 was just over $5 billion, while training and inference costs exceeded $10 billion.

For Pro users, the experience has already degraded significantly. Here's what actual users are reporting:

- Hourly limits: 30-60 minutes of Claude Code use, then a 3-4 hour cooldown

- Weekly caps: Users getting locked out by day 4, unable to use Claude Code for the remaining 3 days

- Model downgrades: Opus "burns tokens like crazy," forcing users onto Sonnet, which produces lower-quality code

- Peak throttling: Since March 26, session limits deplete faster during weekday peak hours (5am-11am PT)

- Third-party tools cut off: As of April 4, Cline, Cursor, and Windsurf can no longer use subscription auth — forced onto metered API billing

One user summed it up perfectly: "They've effectively removed Code from the Pro plan even if they haven't officially done so." And some users are finding out the hard way — Belo, an Argentine finance app, had their entire 60-person team locked out by an automated "abuse detection" system that turned out to be a false positive.

Why This Is Happening: The Math Doesn't Work

The fundamental problem is straightforward: AI inference is expensive, and subscriptions don't cover the cost. Let's look at the actual economics.

Nvidia Blackwell GPU rental prices jumped 48% in just two months, hitting $4.08/hour by early April 2026. CoreWeave extended minimum contract terms from 1 year to 3 years. OpenAI's CFO admitted they're "making some very tough trades" because they don't have enough compute.

The token economics tell the rest of the story. Anthropic charges output tokens at 5x the rate of input tokens, and a Concordia University study found that input tokens account for 53.9% of total consumption in multi-agent coding. In other words, slightly more than half of your tokens go to re-reading context rather than generating code.
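Those two numbers pull in opposite directions. Input tokens dominate the volume, but output tokens are priced higher. A quick calculation using the 53.9% input share and the 5x output multiplier quoted above shows which one dominates the bill. The absolute base price cancels out of the shares. This sketch ignores prompt caching, which would make input cheaper still.

```python
def cost_shares(input_fraction: float, output_multiplier: float) -> tuple[float, float]:
    """Return (input_share, output_share) of total spend.

    input_fraction: share of all tokens that are input tokens (0..1)
    output_multiplier: price of one output token relative to one input token
    """
    input_cost = input_fraction * 1.0  # input priced at 1 unit per token
    output_cost = (1 - input_fraction) * output_multiplier
    total = input_cost + output_cost
    return input_cost / total, output_cost / total


if __name__ == "__main__":
    # 53.9% input tokens (Concordia figure) and 5x output pricing
    inp, out = cost_shares(0.539, 5.0)
    print(f"input: {inp:.1%} of spend, output: {out:.1%} of spend")
```

With these inputs, re-read context makes up most of the tokens but only about 19% of the spend. Generated output accounts for roughly 81%.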

Here's what real-world usage looks like: developer Jenny Ouyang received a $1,600 API bill in just two months from Claude Code usage. A 51-page PDF costs roughly 119,000 tokens to process. Each standard image adds about 1,550 tokens. It adds up fast.
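You can estimate figures like these before sending anything. The sketch below uses the rule of thumb from Anthropic's vision documentation: image tokens ≈ width × height / 750. It also derives a per-page PDF average from the 51-page, 119,000-token example above. Treat both as approximations. Real counts depend on the model, on image resizing (which this sketch doesn't model), and on page content.

```python
import math

# Rule of thumb from Anthropic's vision docs: tokens ≈ (width * height) / 750.
# Approximation only; oversized images are downscaled by the API first,
# which this sketch does not model.
PIXELS_PER_TOKEN = 750

# Per-page PDF average derived from the article's example (an assumption):
# 51 pages ≈ 119,000 tokens, i.e. roughly 2,333 tokens per page.
PDF_TOKENS_PER_PAGE = 119_000 / 51


def image_tokens(width: int, height: int) -> int:
    """Approximate input tokens for one image."""
    return math.ceil(width * height / PIXELS_PER_TOKEN)


def pdf_tokens(pages: int) -> int:
    """Approximate input tokens for a PDF, using the per-page average above."""
    return round(pages * PDF_TOKENS_PER_PAGE)


def request_tokens(prompt_tokens: int, images: list[tuple[int, int]],
                   pdf_pages: int = 0) -> int:
    """Total approximate input tokens for one request."""
    return (prompt_tokens
            + sum(image_tokens(w, h) for w, h in images)
            + pdf_tokens(pdf_pages))


if __name__ == "__main__":
    print(image_tokens(1092, 1092))  # one roughly square screenshot
    print(pdf_tokens(51))            # the 51-page example
    print(request_tokens(500, [(1092, 1092)] * 3, pdf_pages=10))
```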

Enterprise customers aren't immune either. Anthropic has started charging Claude Enterprise customers per token on top of their monthly fee. Their justification? Workloads are shifting "from seat-bound productivity into agentic use." Translation: you're using too much, and we can't afford it anymore.

The VC Subsidy Playbook: Netflix, Uber, and Now AI

If this feels familiar, it should. We've watched the same playbook unfold across every major tech disruption of the past decade.

Netflix started at $8/month with access to virtually every movie and show. Now it's $23/month with lower quality content and constant upselling. Uber was dramatically cheaper than taxis during its growth phase; now rides cost the same or more. DoorDash used to offer food delivery at prices comparable to pickup; now you're paying double the food cost in fees and markups.

The formula is always the same: raise billions in VC money, offer unsustainably low prices, build user dependency, then gradually (or suddenly) raise prices once customers are locked in. AI is running this playbook at warp speed.

The AI version is arguably more dangerous. When Netflix raises prices, you can watch something else. When Uber gets expensive, you can take a taxi. But if your entire development workflow depends on AI coding assistance and you never learned the underlying fundamentals? You can't just stop. The switching cost is your ability to do your job.

As VC Tomasz Tunguz put it: "The age of abundant AI is over, and it will remain so for years." The hallmarks of AI scarcity he identified include relationship-based selling (the best models go to the highest bidders), inflationary pricing, available-but-slow access, and forced diversification toward smaller or self-hosted models.

The combined AI market cap sits at $23 trillion, but actual industry profits are only $420 billion. That's a 55:1 ratio between what the market thinks AI is worth and what it actually earns. That gap has to close eventually, and it will close from the revenue side — meaning prices go up.

How to Protect Yourself

We're not saying AI tools aren't worth paying for — they clearly are. But the current pricing is artificially low, and building your entire workflow around subsidized rates is asking for trouble. Here's what we recommend:

Learn the Fundamentals

If AI gets 5x more expensive tomorrow, can you still do your job? Understanding the code AI writes for you is the best insurance against price hikes. Use AI to accelerate, not to replace understanding.

Diversify Your Tools

Don't go all-in on one provider. Claude and Copilot both still offer free tiers, and open-source models are improving fast. Vendor lock-in is exactly what these companies want.

Consider Pay-As-You-Go

API access lets you pay only for what you use. Yes, it requires more setup, but it avoids the subscription trap where companies can change the terms at any time.
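Whether pay-as-you-go actually beats a flat plan is simple arithmetic. Multiply your monthly token volume by the per-token price and compare the result with the plan fee. This is a minimal sketch with hypothetical prices ($3 per million input tokens, $15 per million output tokens) and a $20/month plan. Substitute your provider's current rates and your own usage.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    input_tokens_per_month: int
    output_tokens_per_month: int


# ASSUMED prices for illustration; check your provider's current price sheet.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00
SUBSCRIPTION_PER_MONTH = 20.00


def api_cost(usage: Usage) -> float:
    """Monthly pay-as-you-go cost in dollars."""
    return (usage.input_tokens_per_month * INPUT_PRICE_PER_M
            + usage.output_tokens_per_month * OUTPUT_PRICE_PER_M) / 1_000_000


def cheaper_option(usage: Usage) -> str:
    """Return which billing model costs less at this usage level."""
    return "api" if api_cost(usage) < SUBSCRIPTION_PER_MONTH else "subscription"


if __name__ == "__main__":
    light = Usage(input_tokens_per_month=2_000_000, output_tokens_per_month=300_000)
    heavy = Usage(input_tokens_per_month=40_000_000, output_tokens_per_month=4_000_000)
    for name, u in [("light", light), ("heavy", heavy)]:
        print(f"{name}: API ${api_cost(u):.2f} vs plan ${SUBSCRIPTION_PER_MONTH:.2f}"
              f" -> {cheaper_option(u)}")
```

There's a catch for heavy users. A flat plan only stays cheaper if its session and weekly caps actually let you consume that volume.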

Watch the Token Economics

Understand how tokens work. Output costs 5x input. Cache reads cost about 10% of the normal input rate, a 90% saving. Structure your prompts efficiently. A 51-page PDF runs roughly 119,000 tokens, so re-sending one with every prompt burns well over 100,000 input tokens each time.
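To see what caching buys when you query the same large document repeatedly, compare input costs with and without a cache. The multipliers below are assumptions based on Anthropic's published prompt-caching pricing for the 5-minute cache: writes cost 1.25x the base input price and reads cost 0.1x. The $3 base price is also assumed. Verify all three against current documentation.

```python
# ASSUMED multipliers, modeled on Anthropic's prompt-caching pricing
# (5-minute cache): writing to the cache costs 1.25x the base input price,
# reading from it costs 0.1x. Verify against current docs before relying on them.
CACHE_WRITE_MULT = 1.25
CACHE_READ_MULT = 0.10
INPUT_PRICE_PER_M = 3.00  # assumed base input price, $ per 1M tokens


def cost_without_cache(shared: int, per_call: int, calls: int) -> float:
    """Every call pays full price for the shared prefix plus its own tokens."""
    return calls * (shared + per_call) * INPUT_PRICE_PER_M / 1_000_000


def cost_with_cache(shared: int, per_call: int, calls: int) -> float:
    """The first call writes the shared prefix to the cache; later calls read it."""
    if calls == 0:
        return 0.0
    prefix_units = shared * CACHE_WRITE_MULT + shared * CACHE_READ_MULT * (calls - 1)
    return (prefix_units + calls * per_call) * INPUT_PRICE_PER_M / 1_000_000


if __name__ == "__main__":
    # A 119,000-token PDF (the 51-page example) queried 20 times with
    # 500-token questions, all within the cache lifetime.
    plain = cost_without_cache(119_000, 500, 20)
    cached = cost_with_cache(119_000, 500, 20)
    print(f"no cache: ${plain:.2f}  cached: ${cached:.2f}  saved: {1 - cached / plain:.0%}")
```

Under these assumptions, input cost falls from $7.17 to about $1.15. Caching cuts the price of the tokens, not their number. The cheapest option is still not re-sending the PDF when a question doesn't need it.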

Explore Local Models

Models like Hermes 4 and Gemma 4 run locally with no per-token fees; you pay for hardware and electricity instead. They won't match Opus quality, but for routine coding tasks, they're more than adequate.

Monitor Your Usage

Know what you're spending. Track tokens, not just dollars. Set budget alerts on API accounts. One developer's $1,600 surprise bill in two months could have been caught early with basic monitoring.
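If your provider's console doesn't alert you early enough, even a small client-side tracker helps. This sketch records tokens per request, converts them to dollars, and reports each alert threshold the first time it's crossed. The class, its prices, and its thresholds are hypothetical illustrations. Provider-side spend limits, where available, should be your first line of defense.

```python
from dataclasses import dataclass, field


@dataclass
class BudgetTracker:
    """Accumulates per-request spend and flags alert thresholds (hypothetical helper)."""
    monthly_budget: float
    input_price_per_m: float = 3.00    # assumed $ per 1M input tokens
    output_price_per_m: float = 15.00  # assumed $ per 1M output tokens
    thresholds: tuple[float, ...] = (0.5, 0.8, 1.0)  # fractions of the budget
    spent: float = 0.0
    input_tokens: int = 0
    output_tokens: int = 0
    _fired: set[float] = field(default_factory=set, init=False, repr=False)

    def record(self, input_tokens: int, output_tokens: int) -> list[float]:
        """Log one request; return any thresholds crossed for the first time."""
        self.input_tokens += input_tokens
        self.output_tokens += output_tokens
        self.spent += (input_tokens * self.input_price_per_m
                       + output_tokens * self.output_price_per_m) / 1_000_000
        crossed = [t for t in self.thresholds
                   if t not in self._fired and self.spent >= t * self.monthly_budget]
        self._fired.update(crossed)
        return crossed


if __name__ == "__main__":
    tracker = BudgetTracker(monthly_budget=100.0)
    # Simulate 30 heavy agentic requests (hypothetical token counts).
    for _ in range(30):
        for t in tracker.record(input_tokens=1_000_000, output_tokens=50_000):
            print(f"ALERT: crossed {t:.0%} of budget (${tracker.spent:.2f} spent)")
```

Tracking token counts alongside dollars shows whether a spike comes from longer contexts or from more requests.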

What Comes Next

The short-term outlook is clear: prices go up, features get gated, and flat-rate subscriptions become less generous. But the long-term picture is more nuanced.

The average cost per token is predicted to drop 90% by 2030 thanks to hardware efficiencies, better model architectures, and competition. Inference costs have already fallen dramatically from where they were in 2024. The problem isn't that AI will always be this expensive — it's that the transition from subsidized to market-rate pricing is happening right now, and it's painful.
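How steep is "90% by 2030"? The prediction's baseline year isn't stated. If we assume it's measured from 2026, four years of compounding implies the following annual decline.

```python
def implied_annual_decline(total_drop: float, years: int) -> float:
    """Annual fractional price decline that compounds to `total_drop` over `years`."""
    remaining = 1 - total_drop  # a 90% drop leaves 10% of the original price
    return 1 - remaining ** (1 / years)


if __name__ == "__main__":
    rate = implied_annual_decline(0.90, years=4)  # 2026 -> 2030, assumed baseline
    print(f"{rate:.1%} cheaper per year")
```

That works out to roughly 44% per year. It's fast enough that today's painful transition could look very different within a few years.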

Expect to see:

- More tiering. The "one plan fits all" era is over. Expect 4-5 tiers from every provider, with the best models locked behind the highest tiers.

- Usage-based pricing everywhere. GitHub's June 1 switch to AI Credits is the canary. Others will follow.

- Open-source competition. Every price hike from Anthropic or OpenAI is a marketing campaign for open-source models. Meta, Google, and the open-source community will keep pushing capable local alternatives.

- Enterprise revolt. Companies with 10,000 AI-using employees can't absorb 5x price increases. They'll invest in self-hosted solutions, driving the open-source ecosystem forward.

The developers who will thrive through this transition are the ones who treat AI as a tool — not a crutch. If you understand the code, you can switch between providers, optimize your token usage, or fall back to manual coding when the math doesn't make sense. If you can't code without AI, you're at the mercy of whatever Anthropic or GitHub decides to charge next month.
