Claude Code 100% Free in 2026: GLM 4.6, Kimi K2 & OpenRouter Setup

AI Infrastructure Lead

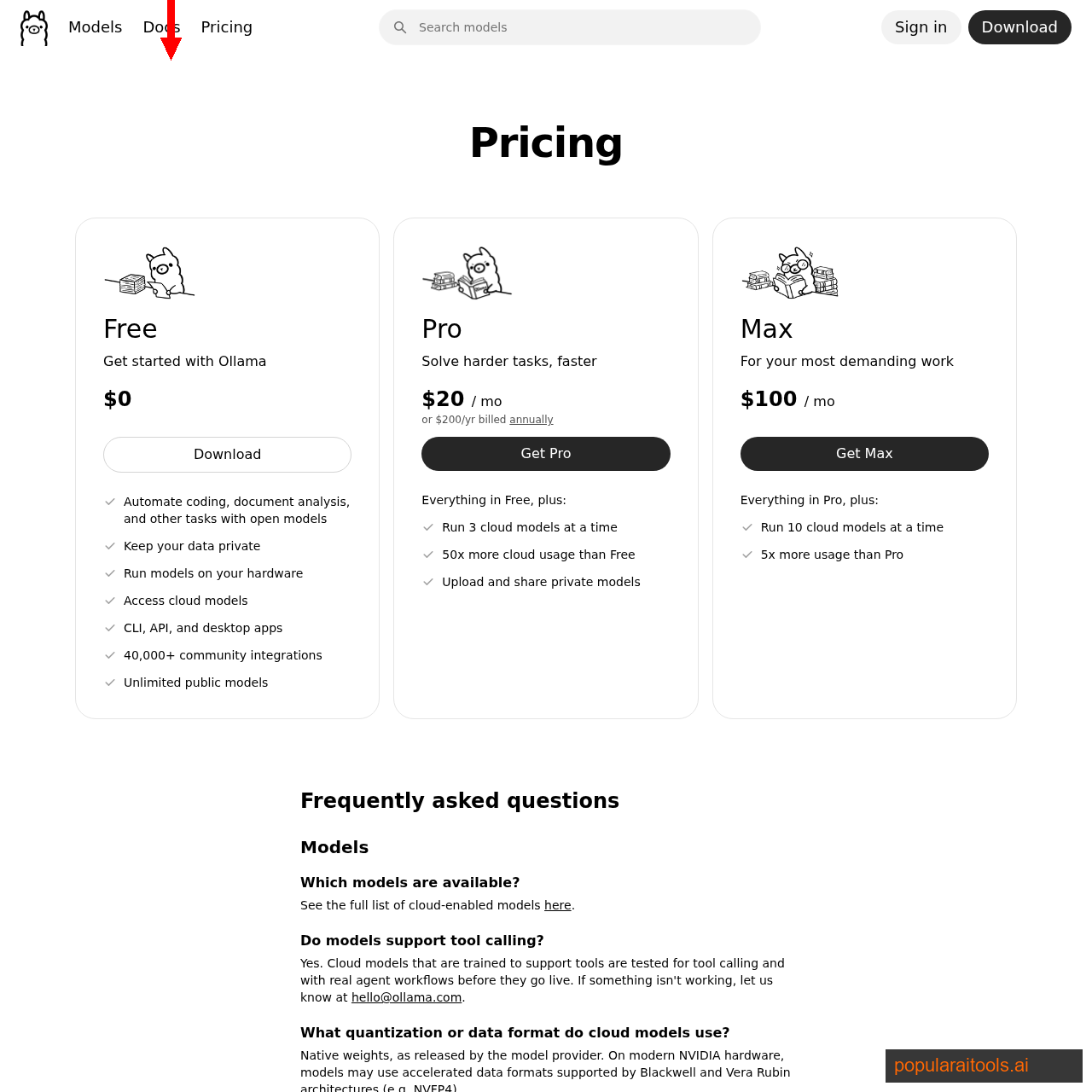

⚡ Key Takeaways

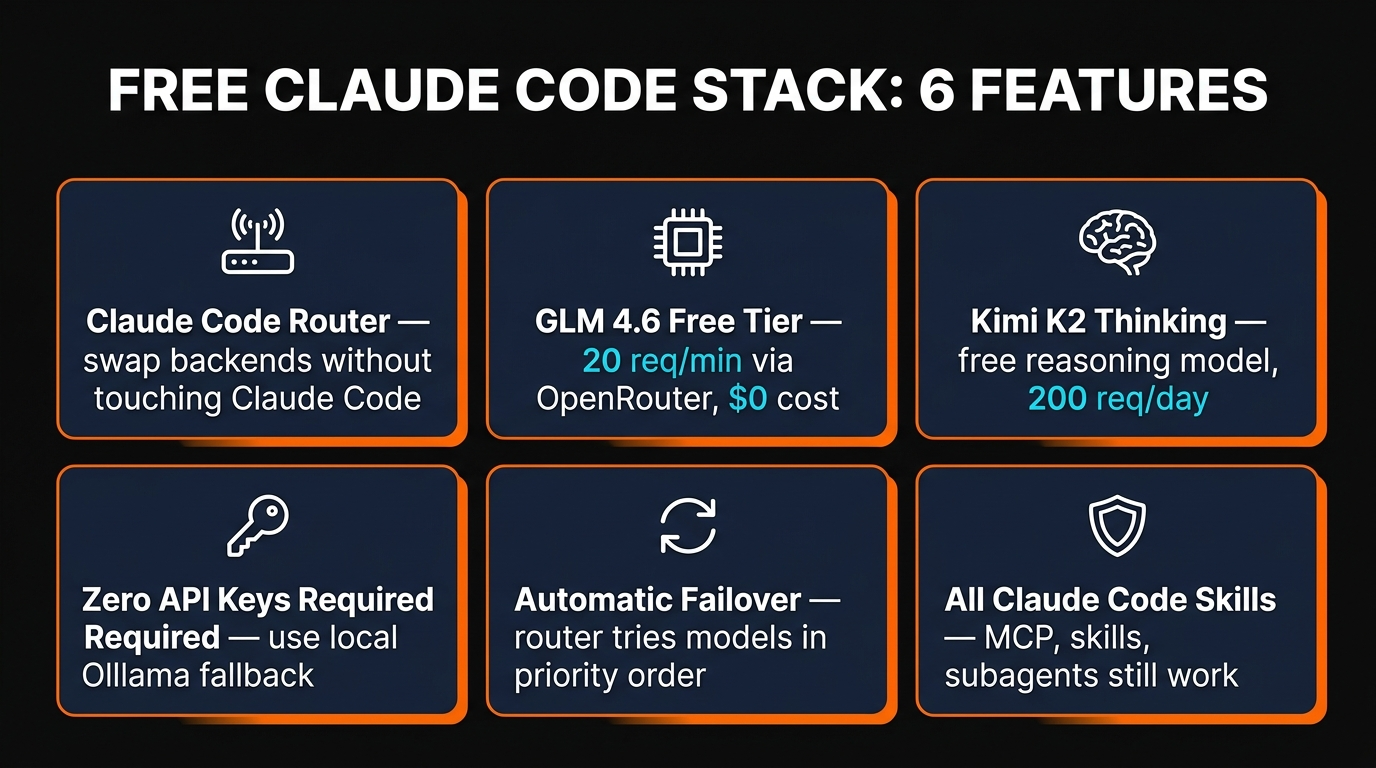

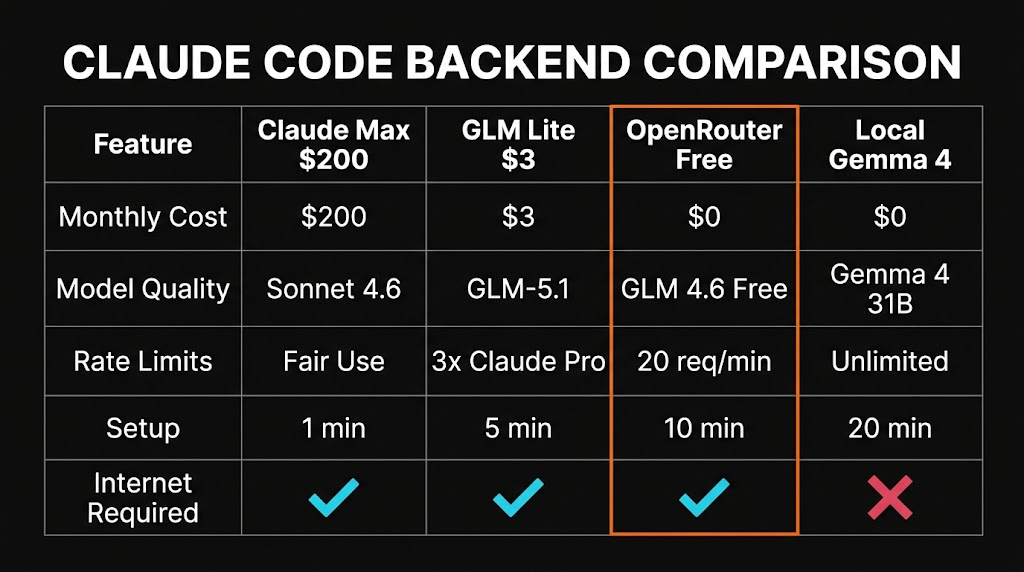

- You can run Claude Code 100% free by routing its API calls through Claude Code Router to free models on OpenRouter and Z.AI.

- Best free backend as of April 2026: GLM 4.6 free tier on OpenRouter — 20 requests/minute, 200/day, no credit card required.

- All Claude Code features — skills, MCP, subagents, hooks — still work because only the model endpoint changes.

- Full setup time: about 10 minutes, no compile steps, no GPU needed.

- What "Claude Code 100% free" actually means

- Claude Code Router in plain English

- The free models worth routing to

- 10-minute setup walkthrough

- Config file: the only thing you'll edit

- Rate limits and how to dodge them

- Quality: how close is free to real Claude?

- When the free stack stops being enough

- FAQ

We spent most of 2025 paying Anthropic for Claude Code. We'd happily keep paying — Claude Sonnet 4.6 is still the best coding model on the market — but there are real situations where the $200/month Max subscription isn't the right call. Students, freelancers in emerging markets, teams evaluating Claude Code before committing, or anyone who just wants to kick the tires before paying should know this: Claude Code can be run for exactly $0/month with zero compromise on the editor, the tools, or the skills ecosystem. Only the model changes.

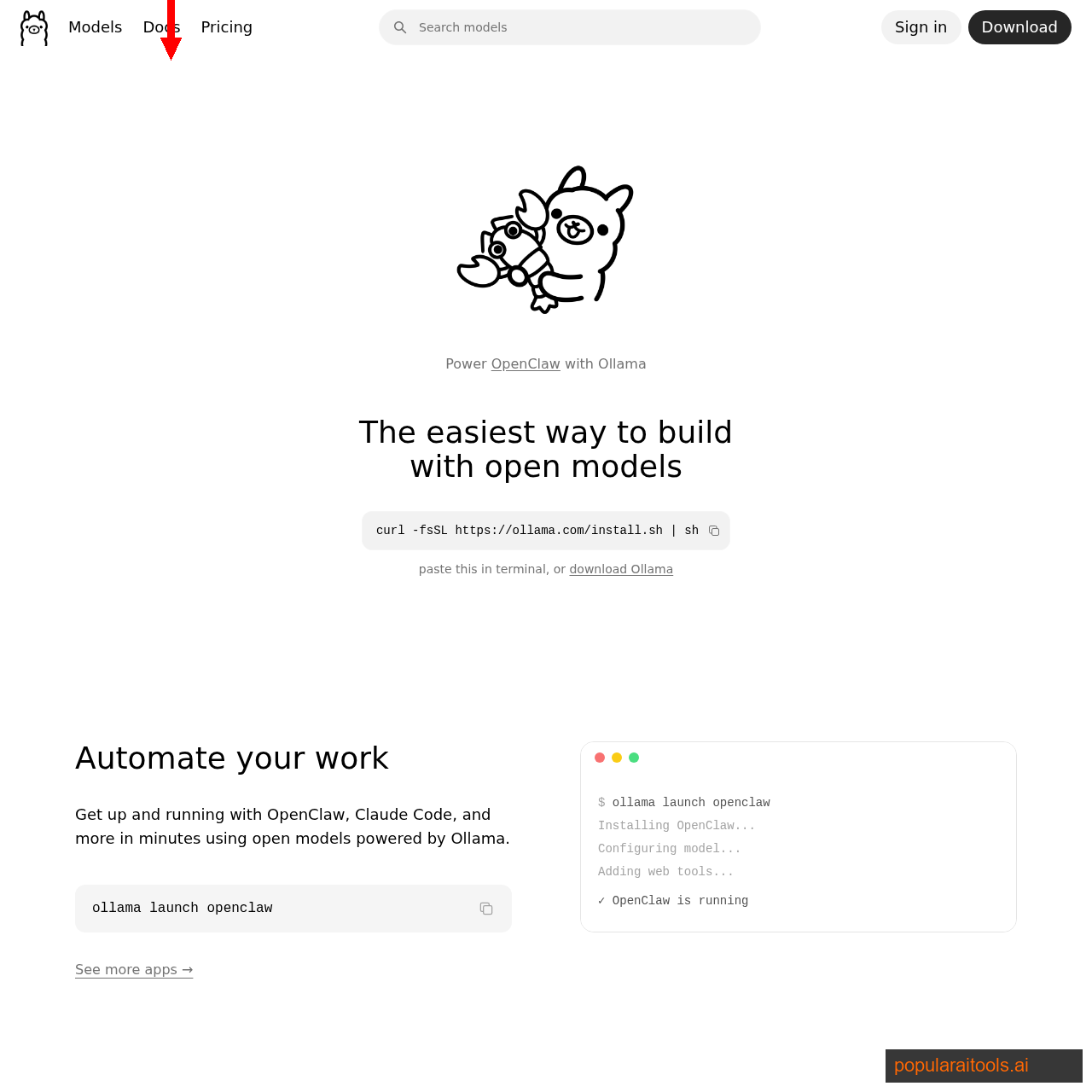

The magic ingredient is a small open-source tool called Claude Code Router (CCR), which sits between Claude Code and Anthropic's servers, intercepts every API call, and reroutes it to whichever model you want — including free ones. We tested every free option on the market over the last three weeks and wrote up the working setup. Here's the whole thing.

What "Claude Code 100% free" actually means

Let's define the claim before we defend it. "Claude Code 100% free" means three things in this article:

- Zero recurring cost. No Max subscription, no API bill, no credit card required anywhere in the stack.

- Real Claude Code, not a clone. You're running the actual claude CLI from Anthropic (skills, MCP, subagents, hooks, all of it), not a wrapper project.

- Usable for real work. Not just a 30-minute demo before you hit a wall: capable of shipping features, debugging, and running agent loops.

What this article does not promise: free access to Anthropic's Claude Sonnet or Opus models. Those cost real money — whether via subscription or API — and Anthropic has been aggressively cracking down on any third-party tool that tries to spoof subscription tokens. What you get instead is a rotation of free open-weight models that are genuinely close to Sonnet for 80% of coding tasks.

Claude Code Router in plain English

Claude Code, under the hood, makes HTTP requests to api.anthropic.com in the Anthropic Messages API format. Claude Code Router is a tiny Node.js program that starts a local HTTP server and pretends to be Anthropic's API. When Claude Code sends a request, CCR:

- Receives the Anthropic-format request on localhost:3456.

- Translates it into whatever format the target provider uses: OpenAI, Google, Z.AI, OpenRouter, Ollama, etc.

- Forwards it to the real provider with your free API key.

- Translates the response back to Anthropic format.

- Returns it to Claude Code, which doesn't know anything changed.
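The two translation steps in the list above can be sketched in a few lines of Python. This is a minimal, text-only illustration and not CCR's actual code: the function names are hypothetical, and tool calls, images, and streaming are left out. The field names follow the public Anthropic Messages and OpenAI chat-completions formats.

```python
from typing import Any


def _flatten(content: Any) -> str:
    """Collapse Anthropic content (a string or a list of blocks) into plain text."""
    if isinstance(content, str):
        return content
    return "".join(b.get("text", "") for b in content if b.get("type") == "text")


def anthropic_to_openai(req: dict, target_model: str) -> dict:
    """Rewrite an Anthropic Messages request as an OpenAI-style chat request."""
    messages = []
    if req.get("system"):
        # Anthropic keeps the system prompt top-level; OpenAI expects it as a message.
        messages.append({"role": "system", "content": _flatten(req["system"])})
    for msg in req["messages"]:
        messages.append({"role": msg["role"], "content": _flatten(msg["content"])})
    return {"model": target_model, "messages": messages, "max_tokens": req["max_tokens"]}


# OpenAI finish reasons mapped onto Anthropic stop reasons (text-only subset).
_STOP = {"stop": "end_turn", "length": "max_tokens"}


def openai_to_anthropic(resp: dict, requested_model: str) -> dict:
    """Rewrite an OpenAI-style chat response as an Anthropic message."""
    choice = resp["choices"][0]
    usage = resp.get("usage", {})
    return {
        "type": "message",
        "role": "assistant",
        "model": requested_model,
        "content": [{"type": "text", "text": choice["message"]["content"] or ""}],
        "stop_reason": _STOP.get(choice.get("finish_reason"), "end_turn"),
        "usage": {
            "input_tokens": usage.get("prompt_tokens", 0),
            "output_tokens": usage.get("completion_tokens", 0),
        },
    }
```

A real router also has to translate tool definitions and tool results in both directions, which is where most of the provider-specific work tends to sit.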

Because Claude Code is authenticated against your local router, not Anthropic, none of Anthropic's subscription-OAuth enforcement applies. You're not spoofing a token or jailbreaking anything — you're running Claude Code pointed at a different API endpoint, exactly the way Anthropic's own ANTHROPIC_BASE_URL environment variable is designed to allow.

The free models worth routing to

Not all "free" models are worth using inside Claude Code. A coding agent needs strong function calling, long context, and a willingness to follow tool schemas — most cheap free models fail on one of those. Here are the ones that actually work:

GLM 4.6 Free

- Sonnet 3.5 class

- 200K context

- 20 req/min limit

- Best all-rounder

Kimi K2 Thinking

- Best reasoning

- 128K context

- 200 req/day limit

- Great for debugging

DeepSeek V3.2 Free

- Fast codegen

- 128K context

- 20 req/min limit

- Good for refactors

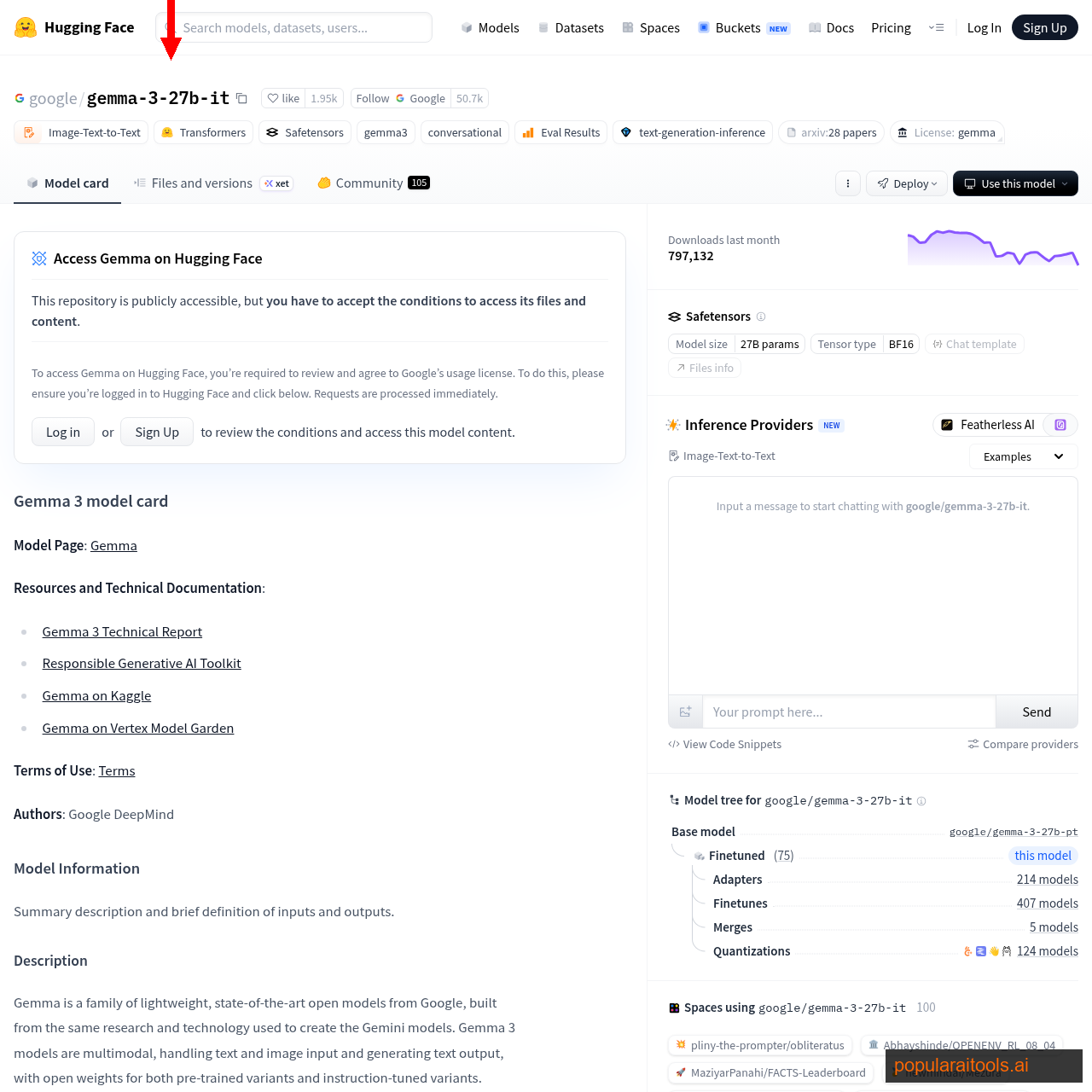

Gemma 4 31B

- Offline fallback

- 256K context

- No rate limits

- Needs 24GB VRAM

Our recommendation: wire up all four and let Claude Code Router fail over between them. OpenRouter is the primary because nothing beats "$0, no setup," GLM 4.6 is the default pick for quality, Kimi K2 handles anything that needs extended reasoning, and a local Gemma 4 install is your "internet is down" fallback. We'll show the exact config below.

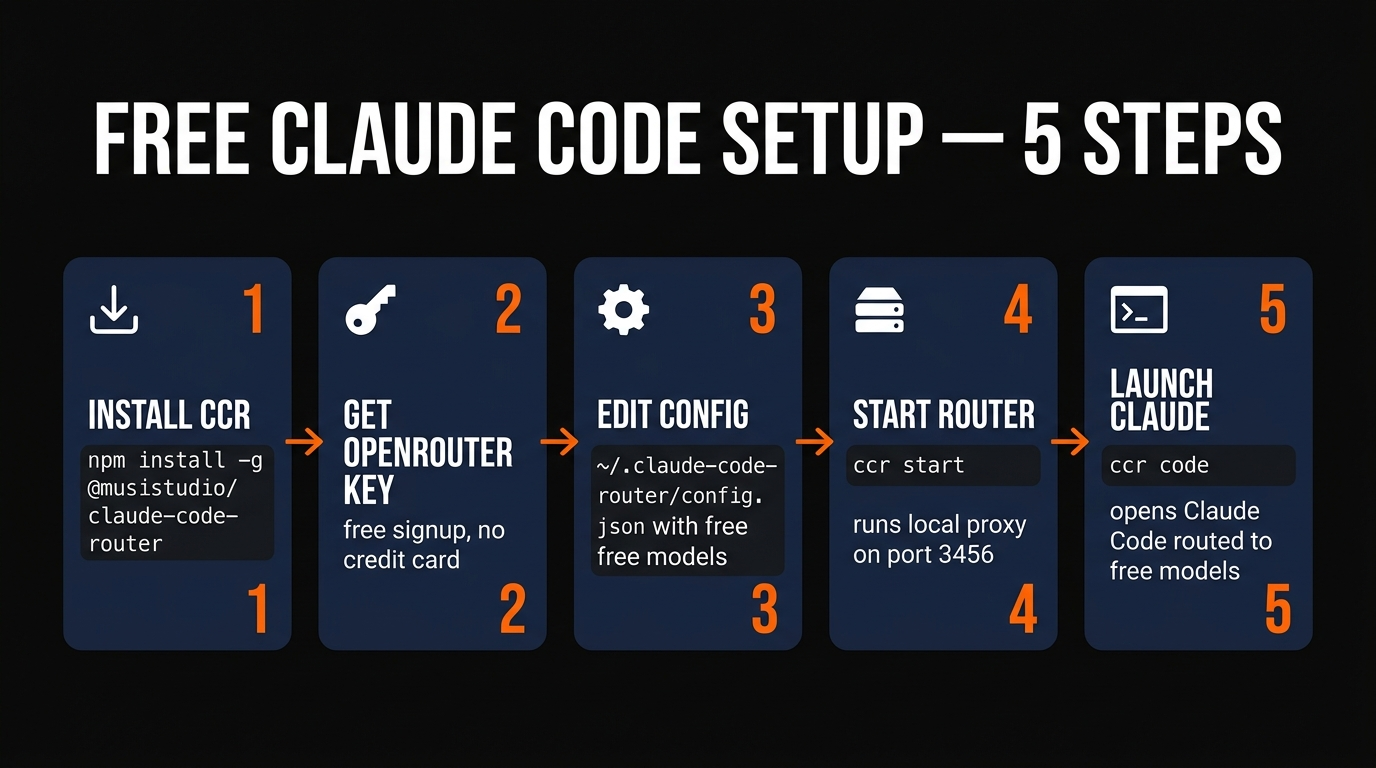

10-minute setup walkthrough

If you already have Node.js 20+ and Claude Code installed, this is literally four commands. If you don't, add three more for the prerequisites.

```bash
# 1. Install Claude Code (skip if you already have it)
npm install -g @anthropic-ai/claude-code

# 2. Install Claude Code Router
npm install -g @musistudio/claude-code-router

# 3. Get a free OpenRouter key from https://openrouter.ai/settings/keys
# (no credit card required for free models)

# 4. Edit the config file; we'll cover exactly what goes in it below
code ~/.claude-code-router/config.json

# 5. Launch the router and Claude Code in one command
ccr code
```

The ccr code command starts the local router on port 3456, sets the ANTHROPIC_BASE_URL environment variable, and then launches Claude Code, all in one command. You see the exact same Claude Code interface you'd see on a paid subscription. The only difference is which model is answering your prompts.
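To make the environment-variable step concrete, here is a small Python sketch of the environment that the launched Claude Code process would see. It is an illustration of the behavior described above, not CCR's implementation; `router_env` is a hypothetical helper, and the `http://localhost:3456` URL is assumed from the port mentioned in this article.

```python
import os
from typing import Optional


def router_env(port: int = 3456, base_env: Optional[dict] = None) -> dict:
    """Return a copy of the environment with Claude Code pointed at the local router."""
    env = dict(os.environ if base_env is None else base_env)
    # ANTHROPIC_BASE_URL is the variable the article says `ccr code` sets.
    env["ANTHROPIC_BASE_URL"] = f"http://localhost:{port}"
    return env
```

Passing the result to something like `subprocess.run(["claude"], env=router_env())` would start Claude Code against the router, assuming the router is already listening on that port.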

Config file: the only thing you'll edit

Claude Code Router's config lives at ~/.claude-code-router/config.json. This is the full working setup we use daily: it defines three OpenRouter free models, a local Gemma 4 fallback, and a Router block that sends normal prompts to GLM 4.6 and hands specific workloads to the other models.

```json
{
  "Providers": [
    {
      "name": "openrouter",
      "api_base_url": "https://openrouter.ai/api/v1/chat/completions",
      "api_key": "sk-or-v1-YOUR-FREE-OPENROUTER-KEY",
      "models": [
        "z-ai/glm-4.6:free",
        "moonshotai/kimi-k2-thinking:free",
        "deepseek/deepseek-chat-v3.2:free"
      ]
    },
    {
      "name": "ollama",
      "api_base_url": "http://localhost:11434/v1/chat/completions",
      "api_key": "ollama",
      "models": ["gemma4:31b"]
    }
  ],
  "Router": {
    "default": "openrouter,z-ai/glm-4.6:free",
    "background": "openrouter,deepseek/deepseek-chat-v3.2:free",
    "think": "openrouter,moonshotai/kimi-k2-thinking:free",
    "longContext": "ollama,gemma4:31b"
  }
}
```

The Router block is where things get interesting. CCR uses different models for different workloads:

- default: every normal prompt goes through GLM 4.6, the best all-rounder.

- background: tasks Claude Code runs silently (tool calls, file scans) route to DeepSeek, which is faster and cheaper in terms of rate budget.

- think: explicit "think hard about this" prompts route to Kimi K2 Thinking, which specializes in multi-step reasoning.

- longContext: anything past 100K tokens falls back to local Gemma 4 31B, because OpenRouter's free models often truncate big prompts.
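The four tiers can be read as a small decision function. The sketch below is one plausible reading, not CCR's real logic: `pick_route` is a hypothetical name, the order in which the tiers are checked is an assumption, and the 100K-token threshold is simply the figure quoted above.

```python
LONG_CONTEXT_TOKENS = 100_000  # threshold taken from the article's description

ROUTER = {
    "default": "openrouter,z-ai/glm-4.6:free",
    "background": "openrouter,deepseek/deepseek-chat-v3.2:free",
    "think": "openrouter,moonshotai/kimi-k2-thinking:free",
    "longContext": "ollama,gemma4:31b",
}


def pick_route(router, token_count, thinking=False, background=False):
    """Return (provider, model) for one request; the precedence order is an assumption."""
    if token_count > LONG_CONTEXT_TOKENS:
        key = "longContext"
    elif thinking:
        key = "think"
    elif background:
        key = "background"
    else:
        key = "default"
    # Fall back to the default route when a tier is not configured.
    target = router.get(key, router["default"])
    provider, model = target.split(",", 1)
    return provider, model
```

Checking the long-context tier first reflects the idea that a prompt too large for a free hosted model has to go to the local model regardless of what kind of task it is.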

You can also swap providers on the fly inside Claude Code with the /model command. Typing /model openrouter,moonshotai/kimi-k2-thinking:free immediately pins every following message to Kimi K2, which is helpful when you hit a knotty bug and want reasoning over speed.
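Because both the Router block and the /model command use the same provider,model form, a typo in either half is an easy mistake to make. The following Python sketch checks a config of the shape shown in this article for Router entries that point at an undeclared provider or model. `load_config` and `find_unknown_routes` are hypothetical helpers, not part of CCR.

```python
import json
from pathlib import Path


def load_config(path: str = "~/.claude-code-router/config.json") -> dict:
    """Read the router config from disk (path as given in this article)."""
    return json.loads(Path(path).expanduser().read_text())


def find_unknown_routes(config: dict) -> list:
    """Return Router entries whose provider or model is not declared in Providers."""
    declared = {
        (p["name"], m) for p in config.get("Providers", []) for m in p.get("models", [])
    }
    problems = []
    for tier, target in config.get("Router", {}).items():
        if not isinstance(target, str):
            continue  # skip any non-route settings that may live in the Router block
        provider, _, model = target.partition(",")
        if (provider, model) not in declared:
            problems.append(f"{tier}: {target}")
    return problems
```

Running `find_unknown_routes(load_config())` before launching should return an empty list when every route resolves.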

Rate limits and how to dodge them

The free stack's biggest weakness is rate limits. OpenRouter caps free models at 20 requests per minute and 200 requests per day per model. For solo interactive use that's generous — you'd have to fire a message every seven minutes, non-stop, to exceed 200/day — but any agent loop that hammers tool calls will burn through it quickly.
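The arithmetic behind those two statements is short enough to check directly. The helper names below are illustrative only, and the caps are the ones quoted in this article.

```python
MINUTES_PER_DAY = 24 * 60


def minutes_between_requests(daily_cap: int) -> float:
    """Average spacing that spreads the daily cap evenly over 24 hours."""
    return MINUTES_PER_DAY / daily_cap


def minutes_to_exhaust(daily_cap: int, requests_per_minute: float) -> float:
    """How long a loop running at a steady rate takes to use up the daily cap."""
    return daily_cap / requests_per_minute


spacing = minutes_between_requests(200)    # 1440 / 200 = 7.2 minutes
burn_time = minutes_to_exhaust(200, 20)    # 200 / 20 = 10 minutes
```

In other words, an agent loop running at the full 20 requests per minute would use a model's entire daily allowance in about ten minutes.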

Three tricks we use to stretch the free budget:

1. Rotate models. Three free models at 200 req/day = 600 requests/day total. CCR's router will automatically try the next model in the list when one returns a 429, so you effectively get a shared budget across all your free providers.

2. Use local Gemma 4 for background tasks. File reads, grep calls, and tool-heavy agent steps should not eat your OpenRouter budget — push them to local. Set "background": "ollama,gemma4:31b" in your router config and background calls are free and unlimited.

3. Add a second free provider. Z.AI has its own free tier, Ollama Cloud is free during beta, and Moonshot's Kimi K2 has a separate direct API with its own free allowance. Stack them in the Providers array and CCR will rotate between them automatically.
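The rotation idea in the first trick can be sketched as a small bookkeeping class. This is an illustration of the technique under the limits quoted above, not a description of how CCR does it internally. `BudgetRotator` and its methods are hypothetical names.

```python
from collections import Counter


class BudgetRotator:
    """Pick the first model that still has daily budget; skip ones that returned 429."""

    def __init__(self, models, daily_cap=200):
        self.models = list(models)
        self.daily_cap = daily_cap
        self.used = Counter()
        self.exhausted = set()

    def next_model(self):
        for model in self.models:
            if model not in self.exhausted and self.used[model] < self.daily_cap:
                return model
        return None  # every budget is spent; the caller should wait or go local

    def record(self, model, status_code):
        self.used[model] += 1
        if status_code == 429:
            # The provider says the cap is reached, whatever our own count says.
            self.exhausted.add(model)

    def remaining(self):
        return sum(
            0 if m in self.exhausted else self.daily_cap - self.used[m]
            for m in self.models
        )
```

With three models at the default cap, `remaining()` starts at 600, matching the combined budget described in the first trick. A production version would also reset the counters when the provider's daily window rolls over.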

Quality: how close is free to real Claude?

Honest answer: not identical, but much closer than most people think. We ran the same five real-world tasks through both stacks (our paid Claude Code with Sonnet 4.6, and our free stack with GLM 4.6 + Kimi K2 routing) and graded the results:

| Task | Paid Sonnet 4.6 | Free stack |
|---|---|---|
| Build a Next.js landing page from a Figma screenshot | Perfect, 1 shot | 90%, 2 iterations |
| Debug a tricky React hook race condition | Found root cause in 1 prompt | Found root cause in 2 prompts (via Kimi K2) |
| Refactor 12 files to a new API shape | Clean, no regressions | Missed 1 file, caught by tests |
| Write a 400-line SQL migration | Correct on first run | Correct on first run |
| Explain an unfamiliar codebase | Excellent | Excellent (GLM 4.6 strong here) |

Broadly: for greenfield feature work, debugging, and code explanation, the free stack is 85-95% as good as paid Sonnet. For large-scale refactors across many files, paid Sonnet still has a meaningful edge — it catches subtle inconsistencies that GLM 4.6 misses. For pure SQL, config files, and boilerplate, the two are indistinguishable.

When the free stack stops being enough

Be honest with yourself about when the free setup stops making sense. If you're shipping production code full time, Claude Max at $200/month pays for itself in the first week via the Sonnet 4.6 quality edge. If you're running multiple parallel agents, our multi-agent Claude Code guide shows why one Max seat beats six free-tier seats once you hit meaningful usage.

The free stack is best for: learning Claude Code, evaluating it before committing, side projects, students, emerging-market freelancers, and situations where privacy or offline work matters. It's worst for: production teams, agent-heavy workloads, and anyone whose hourly rate makes one hour of fighting rate limits cost more than a month of Max.

For the full landscape of Claude Code backends — free, subscription, API, and local — see our 24-model tier list. And if you'd rather go fully offline instead of routing through free APIs, the Hermes 4 35B A3B and Gemma 4 guides cover local-only setups.

Frequently Asked Questions

… ANTHROPIC_BASE_URL environment variable specifically for routing to non-Anthropic endpoints. It's how Anthropic supports Amazon Bedrock and Google Vertex integrations. You're not spoofing tokens or using Anthropic's models for free; you're using Claude Code's UI with a different model provider.

… /delegate command, lives in Claude Code itself, not in the model. As long as your replacement model supports function calling (GLM 4.6 and Kimi K2 do), all of it keeps working.

… think or longContext tier to Sonnet 4.6, and you get a hybrid setup: free for routine work, paid only for the hardest problems. It's the most cost-efficient configuration we've tested.
